---
license: apache-2.0
datasets:
- HuggingFaceTB/smollm-corpus
language:
- en
library_name: transformers
pipeline_tag: text2text-generation
tags:
- fineweb
- t5
- 1024 ctx
- SiLU activations
---

Trained on the fineweb-edu-dedup split of HuggingFaceTB/smollm-corpus.

details

Model:

- Dropout rate: 0.0

- Activations: silu, gated-silu

- torch compile: true
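The activation entry pairs SiLU with a gated feed-forward layer. Assuming "gated-silu" follows the T5 v1.1 gated design (two input projections, one passed through the activation and multiplied elementwise with the other, then an output projection), a minimal pure-Python sketch of one feed-forward block looks like this. The weight names `wi_0`, `wi_1` and `wo` mirror the T5 naming convention; the toy sizes and values are hypothetical. In `transformers`, this layer type corresponds to `T5Config(feed_forward_proj="gated-silu")`.

```python
import math

def silu(x):
    """SiLU (swish): x * sigmoid(x), written to avoid overflow for large |x|."""
    if x >= 0:
        return x / (1.0 + math.exp(-x))
    z = math.exp(x)
    return x * z / (1.0 + z)

def matvec(weight, x):
    """Multiply a row-major weight matrix (list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]

def gated_silu_ffn(x, wi_0, wi_1, wo):
    """Gated feed-forward block: wo @ (silu(wi_0 @ x) * (wi_1 @ x)).

    Dropout is omitted, matching the card's dropout rate of 0.0.
    """
    gate = [silu(h) for h in matvec(wi_0, x)]   # activated branch
    linear = matvec(wi_1, x)                    # linear (ungated) branch
    hidden = [g * lin for g, lin in zip(gate, linear)]
    return matvec(wo, hidden)

# Toy example: d_model = 2, d_ff = 3 (hypothetical sizes, not the model's)
x = [1.0, -0.5]
wi_0 = [[0.2, 0.1], [-0.3, 0.4], [0.5, 0.0]]
wi_1 = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
wo = [[0.1, 0.2, 0.3], [0.0, -0.1, 0.2]]
print(gated_silu_ffn(x, wi_0, wi_1, wo))
```

The gate lets the network scale each hidden unit by a learned, input-dependent factor, which is the usual motivation for gated variants over a plain `silu(wi @ x)` layer.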

Data processing:

- Input length: 1024

- MLM probability: 0.15
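With an MLM probability of 0.15, about 15% of each 1024-token input is corrupted. Assuming T5-style span corruption with a mean noise-span length of 3 (the T5 default; the card does not state this value), the sketch below shows how the corrupted positions could be chosen. The function name echoes the `random_spans_noise_mask` helper from the original T5 preprocessing code, but this is an independent, stdlib-only reimplementation for illustration.

```python
import random

def random_spans_noise_mask(length, noise_density=0.15, mean_noise_span_length=3.0, seed=0):
    """Return a list of booleans; True marks a token that will be masked (noise)."""
    rng = random.Random(seed)
    # Number of corrupted tokens, clamped so at least one token is clean and one is noise.
    num_noise_tokens = int(round(length * noise_density))
    num_noise_tokens = min(max(num_noise_tokens, 1), length - 1)
    # Number of contiguous noise spans (ASSUMED mean span length of 3).
    num_noise_spans = max(int(round(num_noise_tokens / mean_noise_span_length)), 1)
    num_nonnoise_tokens = length - num_noise_tokens

    def random_segmentation(num_items, num_segments):
        # Split num_items into num_segments positive lengths via distinct cut points.
        cuts = sorted(rng.sample(range(1, num_items), num_segments - 1))
        bounds = [0] + cuts + [num_items]
        return [end - start for start, end in zip(bounds, bounds[1:])]

    noise_lengths = random_segmentation(num_noise_tokens, num_noise_spans)
    nonnoise_lengths = random_segmentation(num_nonnoise_tokens, num_noise_spans)

    # Interleave clean and noise spans: clean, noise, clean, noise, ...
    mask = []
    for clean_len, noise_len in zip(nonnoise_lengths, noise_lengths):
        mask.extend([False] * clean_len)
        mask.extend([True] * noise_len)
    return mask

mask = random_spans_noise_mask(1024)
print(len(mask), sum(mask))  # 1024 154
```

For a 1024-token sequence this marks 154 noise tokens split into 51 spans; in T5-style pretraining each span is then replaced by a single sentinel token in the encoder input, and the decoder learns to reconstruct the dropped spans.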

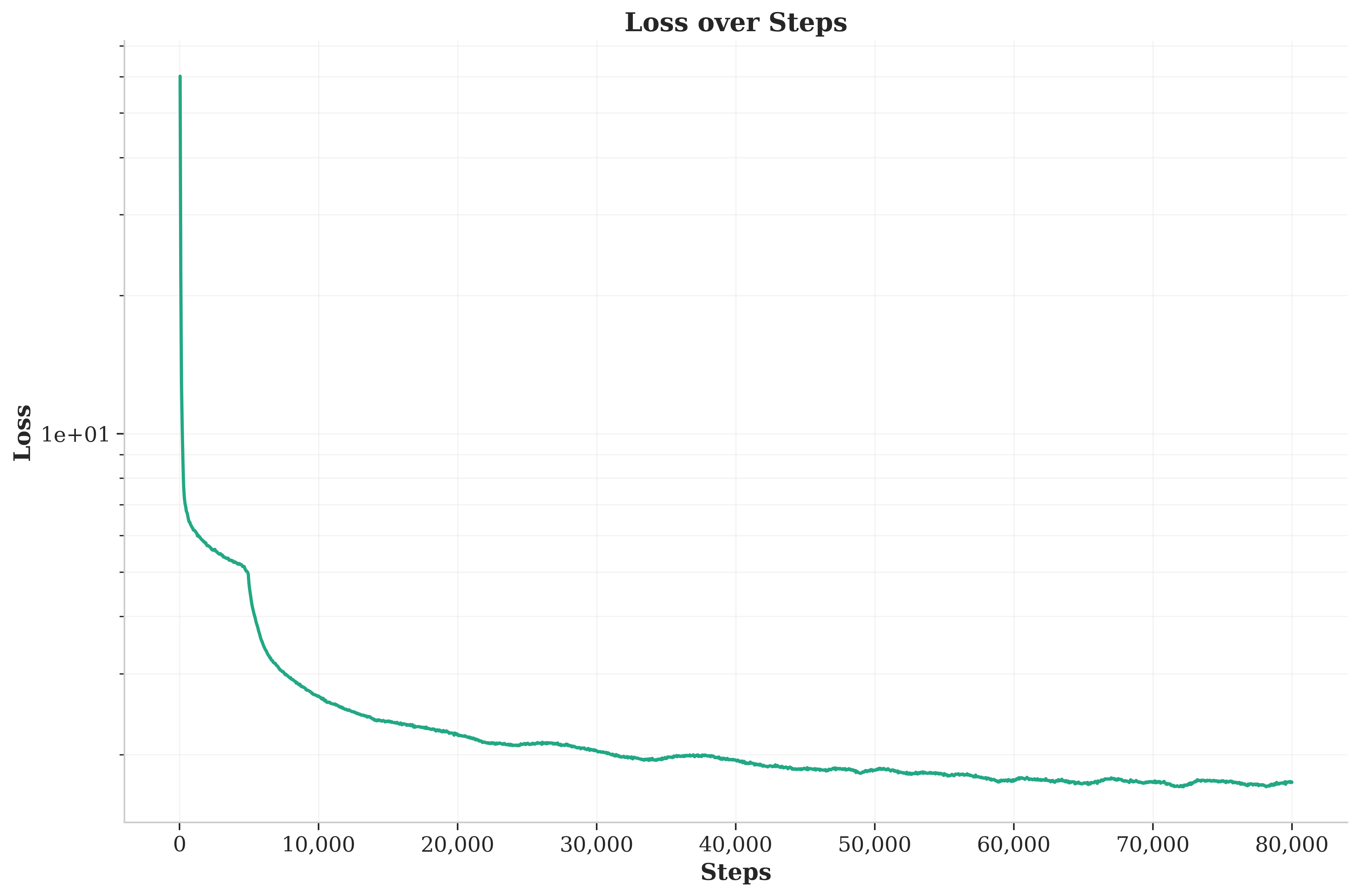

Optimization:

- Optimizer: AdamW with scaling

- Base learning rate: 0.008

- Batch size: 120

- Total training steps: 80,000

- Warmup steps: 10,000

- Learning rate scheduler: Cosine

- Weight decay: 0.0001

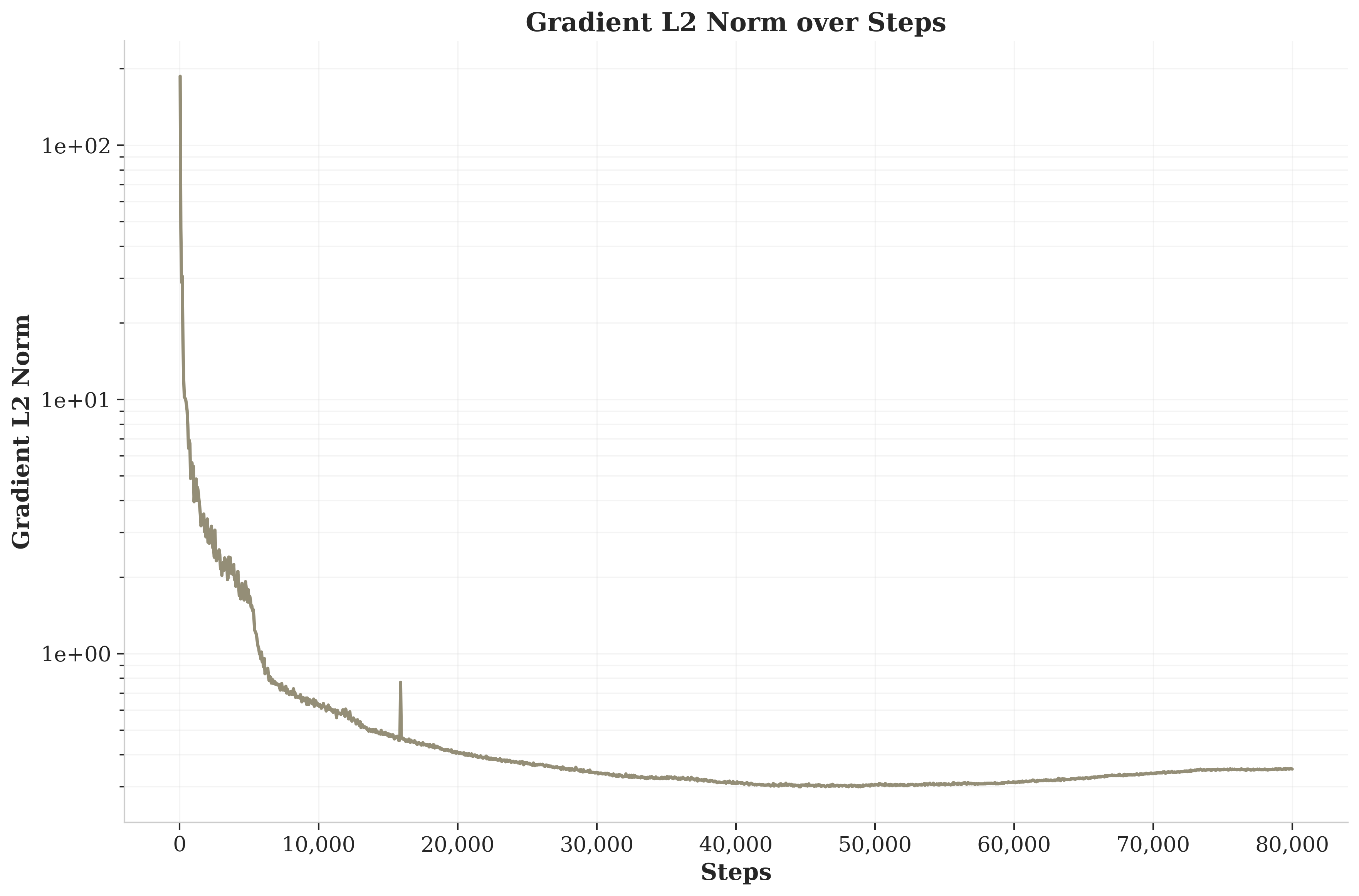

- Gradient clipping: 1.0

- Gradient accumulation steps: 24

- Final cosine learning rate: 1e-5
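The card does not define "AdamW with scaling". One plausible reading, assumed here, is an AdamW variant whose step size is multiplied by the root-mean-square of the parameter tensor (floored at 1e-3), borrowing Adafactor's relative step size; this is similar to the `AdamWScale` optimizer in the nanoT5 training code. The sketch applies one such update to a single flat tensor in pure Python, using the card's base learning rate (0.008) and weight decay (0.0001); the betas and epsilon are assumed defaults, not values from the card.

```python
import math

def rms(values):
    """Root-mean-square of a flat list of floats."""
    return math.sqrt(sum(v * v for v in values) / len(values))

def adamw_scale_step(params, grads, state, lr=0.008, betas=(0.9, 0.999),
                     eps=1e-8, weight_decay=1e-4):
    """One AdamW update with the step size scaled by RMS(params).

    ASSUMPTION: interprets "AdamW with scaling" as Adafactor-style relative
    step scaling; betas and eps are assumed defaults.
    """
    beta1, beta2 = betas
    state["step"] = state.get("step", 0) + 1
    t = state["step"]
    exp_avg = state.setdefault("exp_avg", [0.0] * len(params))
    exp_avg_sq = state.setdefault("exp_avg_sq", [0.0] * len(params))

    # Bias correction folded into the step size, as in the classic AdamW formulation.
    step_size = lr * math.sqrt(1.0 - beta2 ** t) / (1.0 - beta1 ** t)
    # Relative scaling: tensors with larger weights take proportionally larger steps.
    step_size *= max(1e-3, rms(params))

    updated = []
    for i, (p, g) in enumerate(zip(params, grads)):
        exp_avg[i] = beta1 * exp_avg[i] + (1.0 - beta1) * g
        exp_avg_sq[i] = beta2 * exp_avg_sq[i] + (1.0 - beta2) * g * g
        p = p - step_size * exp_avg[i] / (math.sqrt(exp_avg_sq[i]) + eps)
        # Decoupled weight decay, applied with the unscaled learning rate.
        p = p - lr * weight_decay * p
        updated.append(p)
    return updated

state = {}
print(adamw_scale_step([2.0] * 4, [1.0] * 4, state))
```

On the first step each weight moves by roughly `lr × RMS(params)`, so a tensor with RMS 2.0 moves by about 0.016 rather than 0.008. The floor of 1e-3 keeps tensors initialized near zero from getting a vanishing step.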
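The schedule entries combine into a learning-rate curve over the 80,000 steps. The sketch below assumes linear warmup from 0 to the base rate over the first 10,000 steps, followed by cosine decay from 0.008 down to the final 1e-5 at step 80,000; the card does not state the warmup shape or its starting value, so treat those as assumptions.

```python
import math

BASE_LR = 0.008
FINAL_LR = 1e-5
WARMUP_STEPS = 10_000
TOTAL_STEPS = 80_000

def lr_at(step):
    """Learning rate at a given optimizer step (assumed linear warmup + cosine decay)."""
    if step < WARMUP_STEPS:
        # ASSUMPTION: warmup starts at 0 and rises linearly.
        return BASE_LR * step / WARMUP_STEPS
    # Fraction of the decay phase completed, clamped to [0, 1].
    progress = min((step - WARMUP_STEPS) / (TOTAL_STEPS - WARMUP_STEPS), 1.0)
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))
    return FINAL_LR + (BASE_LR - FINAL_LR) * cosine

for s in (0, 5_000, 10_000, 45_000, 80_000):
    print(s, lr_at(s))
```

Under these assumptions the rate peaks at 0.008 at step 10,000, is about 0.004 at the midpoint of the decay (step 45,000), and ends at 1e-5. In PyTorch, a similar shape can be built by chaining `LinearLR` and `CosineAnnealingLR` (with `eta_min`) through `SequentialLR` from `torch.optim.lr_scheduler`.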
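The batch figures can be turned into a rough token budget. This sketch assumes that the batch size of 120 is the effective batch per optimizer step, split across the 24 accumulation steps into micro-batches of 5 sequences; if 120 were instead the per-micro-step batch, every total below would grow by a factor of 24. It counts encoder input positions only (1024 per sequence) and ignores padding and decoder targets.

```python
BATCH_SIZE = 120        # sequences per optimizer step (ASSUMED effective batch)
GRAD_ACC_STEPS = 24     # micro-steps accumulated before each optimizer update
INPUT_LENGTH = 1024     # encoder tokens per sequence
TOTAL_STEPS = 80_000
WARMUP_STEPS = 10_000

def training_budget(batch_size, grad_acc_steps, input_length, total_steps):
    """Return micro-batch size, input tokens per step, and total input tokens."""
    if batch_size % grad_acc_steps:
        raise ValueError("batch size must divide evenly across accumulation steps")
    micro_batch = batch_size // grad_acc_steps
    tokens_per_step = batch_size * input_length
    return micro_batch, tokens_per_step, tokens_per_step * total_steps

micro, per_step, total = training_budget(BATCH_SIZE, GRAD_ACC_STEPS, INPUT_LENGTH, TOTAL_STEPS)
print(f"micro-batch: {micro} sequences")                       # 5
print(f"tokens per step: {per_step:,}")                         # 122,880
print(f"total input tokens: {total:,}")                         # 9,830,400,000
print(f"warmup fraction: {WARMUP_STEPS / TOTAL_STEPS:.1%}")     # 12.5%
```

Under this reading the run covers roughly 9.8 billion encoder input positions, and warmup takes one eighth of training.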

Hardware utilization:

- Device: GPU

- Precision: bfloat16, tf32

plots

training loss

grad norm

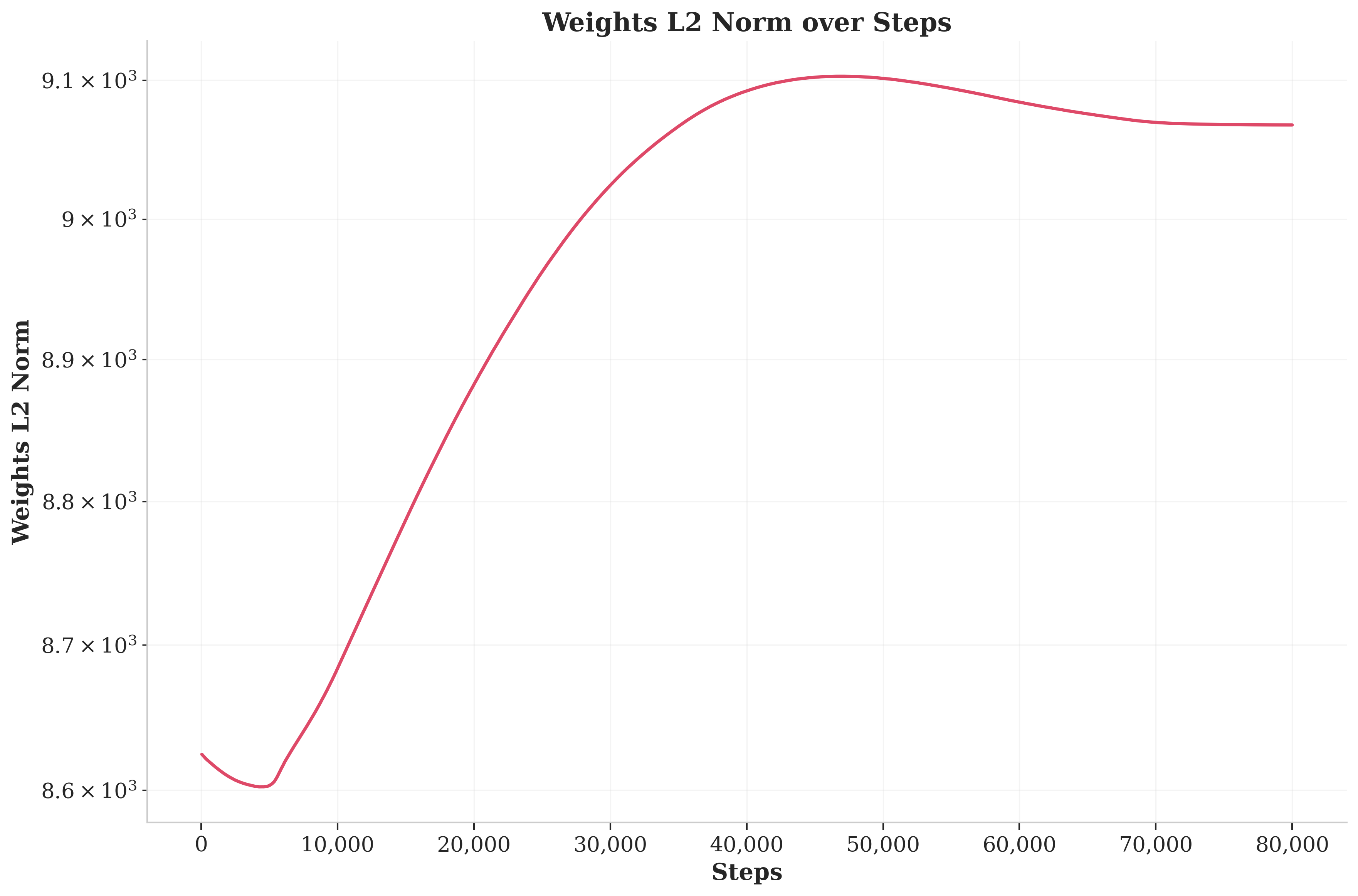

weights norm
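The grad-norm plot is easiest to read against the clipping threshold of 1.0. Assuming "gradient clipping: 1.0" means clipping by the global L2 norm across all parameters (the default behaviour of PyTorch's `torch.nn.utils.clip_grad_norm_`), the sketch below computes that norm and rescales the gradients when it exceeds the threshold. The same `global_l2_norm` applied to parameters instead of gradients gives the kind of quantity a weights-norm plot typically tracks. Tensors are represented as flat lists of floats for illustration.

```python
import math

def global_l2_norm(tensors):
    """L2 norm over all values in a list of flat tensors (lists of floats)."""
    return math.sqrt(sum(x * x for t in tensors for x in t))

def clip_by_global_norm(grads, max_norm=1.0, eps=1e-6):
    """Scale all gradients together so their global L2 norm is at most max_norm.

    Returns the clipped gradients and the pre-clipping norm (the value a
    grad-norm plot would typically log).
    """
    total_norm = global_l2_norm(grads)
    clip_coef = max_norm / (total_norm + eps)
    if clip_coef < 1.0:
        grads = [[x * clip_coef for x in t] for t in grads]
    return grads, total_norm

grads = [[3.0], [4.0]]           # two tiny "parameter" gradients, global norm 5.0
clipped, norm = clip_by_global_norm(grads)
print(norm, clipped)
```

Because every gradient is scaled by the same factor, clipping shortens the update without changing its direction; logged norms that sit well above 1.0 indicate that clipping was active for those steps.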