SALMONN: Speech Audio Language Music Open Neural Network

🚀🚀 Welcome to the repo of SALMONN!

SALMONN is a large language model (LLM) enabling speech, audio events, and music inputs, which is developed by the Department of Electronic Engineering at Tsinghua University and ByteDance. Instead of speech-only input or audio-event-only input, SALMONN can perceive and understand all kinds of audio inputs and therefore obtain emerging capabilities such as multilingual speech recognition & translation and audio-speech co-reasoning. This can be regarded as giving the LLM "ears" and cognitive hearing abilities, which makes SALMONN a step towards hearing-enabled artificial general intelligence.

News

- [10-08] ✨ We have released the model checkpoint and the inference code for SALMONN-13B!

- [11-13] 🎁 We have released a 7B version of SALMONN at tsinghua-ee/SALMONN-7B and built the 7B demo here!

Structure

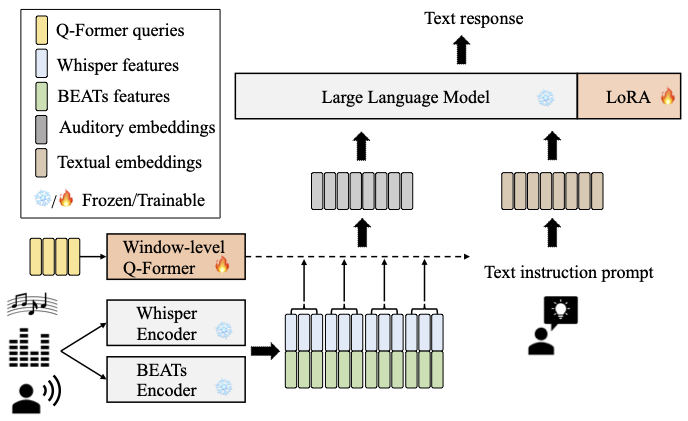

The model architecture of SALMONN is shown below. A window-level Q-Former is used as the connection module to fuse the outputs from a Whisper speech encoder and a BEATs audio encoder as augmented audio tokens, which are aligned with the LLM input space. The LoRA adaptor aligns the augmented LLM input space with its output space. The text prompt is used to instruct SALMONN to answer open-ended questions about the general audio inputs and the answers are in the LLM text responses.

Demos

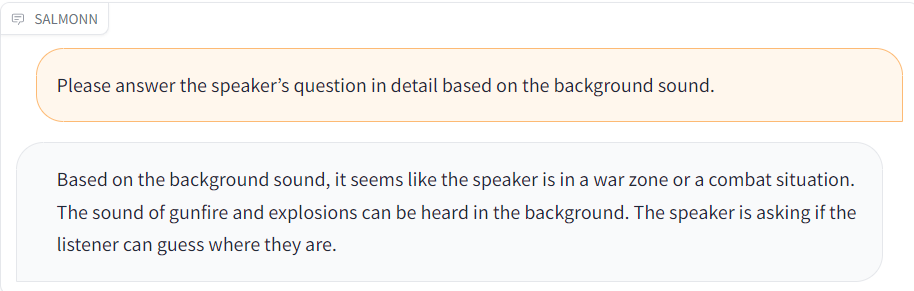

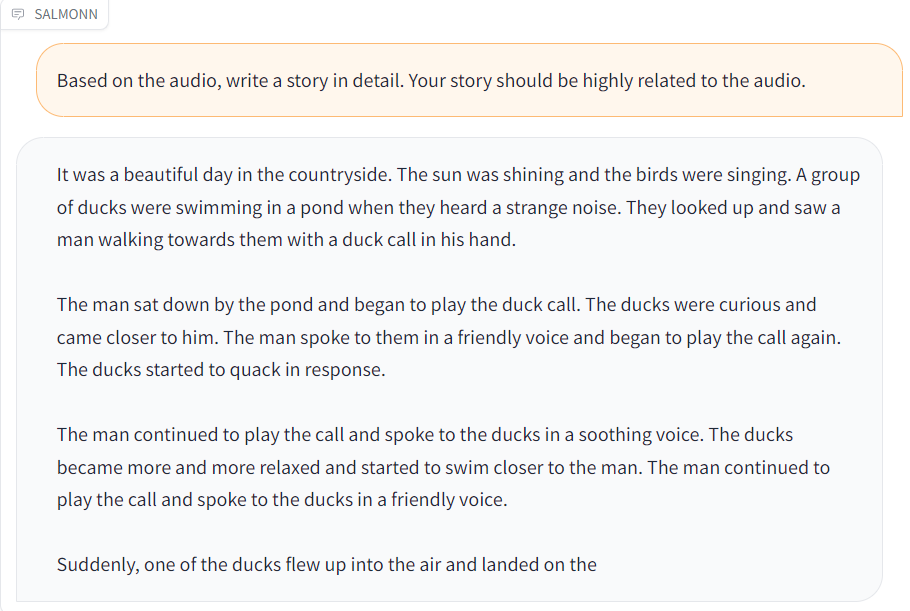

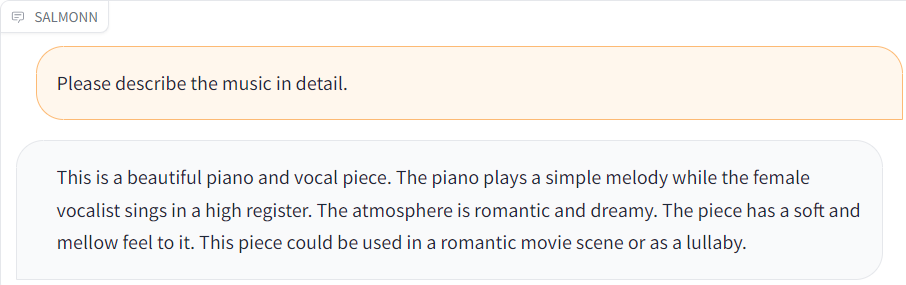

Compared with traditional speech and audio processing tasks such as speech recognition and audio caption, SALMONN leverages the general knowledge and cognitive abilities of the LLM to achieve a cognitively oriented audio perception, which dramatically improves the versatility of the model and the richness of the task. In addition, SALMONN is able to follow textual commands, and even spoken commands, with a relatively high degree of accuracy. Since SALMONN only uses training data based on textual commands, listening to spoken commands is also a cross-modal emergent ability.

Here are some examples of SALMONN.

| Audio | Response |

|---|---|

| gunshots.wav |  |

| duck.wav |  |

| music.wav |  |

How to inference in CLI

For SALMONN-7B v0, you need to use the following dependencies:

- Our environment: The python version is 3.9.17, and other required packages can be installed with the following command:

pip install -r requirements.txt. - Download whisper large v2 to

whisper_path. - Download Fine-tuned BEATs_iter3+ (AS2M) (cpt2) to

beats_path. - Download vicuna 7B v1.5 to

vicuna_path. - Download salmonn-7b v0 to

ckpt_path. - Running with

python3 cli_inference.py --ckpt_path xxx --whisper_path xxx --beats_path xxx --vicuna_path xxxto start cli inference. Please make sure your GPU has more than 40G of memory. If your GPU does not have enough memory (e.g. only 24G), you can quantize the model using the--low_resourceparameter to reduce the memory usage, and can reduce the LoRA scaling factor to maintain the model's emergent abilities, e.g.--lora_alpha=28.

How to launch a web demo

- Same as How to inference in CLI: 1-5.

- Running with

python3 web_demo.py --ckpt_path xxx --whisper_path xxx --beats_path xxx --vicuna_path xxxin A100-SXM-80GB. You can add--low_resourceparameter if the GPU memory is not enough, and reduce the LoRA scaling factor to maintain the model's emergent abilities.

Team

Team Tsinghua: Wenyi Yu, Changli Tang, Guangzhi Sun, Chao Zhang

Team ByteDance: Xianzhao Chen, Wei Li, Tian Tan, Lu Lu, Zejun Ma

Citation

If you find SALMONN great and useful, please cite our paper:

@article{tang2023salmonn,

title={{SALMONN}: Towards Generic Hearing Abilities for Large Language Models},

author={Changli, Tang and Wenyi, Yu and Guangzhi, Sun and Xianzhao, Chen and Tian, Tan and Wei, Li and Lu, Lu and Zejun, Ma and Chao, Zhang},

journal={arXiv:2310.13289},

year={2023}

}