---
library_name: transformers
license: other
license_name: nvidia-open-model-license
license_link: >-
  https://www.nvidia.com/en-us/agreements/enterprise-software/nvidia-open-model-license/
pipeline_tag: text-generation
language:
- en
tags:
- nvidia
- llama-3
- pytorch
base_model:
- nvidia/Llama-3.1-Minitron-4B-Width-Base
datasets:
- nvidia/Llama-Nemotron-Post-Training-Dataset
---

Llama-3.1-Nemotron-Nano-4B-v1.1

Model Overview

Llama-3.1-Nemotron-Nano-4B-v1.1 is a large language model (LLM) derived from nvidia/Llama-3.1-Minitron-4B-Width-Base, which was created from Llama 3.1 8B using our LLM compression technique and offers improvements in model accuracy and efficiency. It is a reasoning model that is post-trained for reasoning, human chat preferences, and tasks such as RAG and tool calling.

Llama-3.1-Nemotron-Nano-4B-v1.1 offers a strong tradeoff between model accuracy and efficiency. The model fits on a single RTX GPU and can be used locally. It supports a context length of 128K tokens.
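As a rough illustration of why a model of this size fits on a single RTX GPU, the sketch below estimates the memory needed for the weights alone in BF16 (2 bytes per parameter). The parameter count is the nominal 4B from the model name, which is an assumption, and the helper name `weight_memory_gb` is hypothetical. The estimate deliberately ignores the KV cache, activations, and framework overhead.

```python
def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Approximate memory for model weights alone, in gigabytes (1 GB = 1e9 bytes).

    Defaults to BF16 storage (2 bytes per parameter). KV cache, activations,
    and framework overhead are NOT included.
    """
    return num_params * bytes_per_param / 1e9


# Nominal 4B parameters (assumption taken from the model name).
bf16_gb = weight_memory_gb(4e9)
print(f"BF16 weights: ~{bf16_gb:.1f} GB")  # prints "BF16 weights: ~8.0 GB"
```

The KV cache grows with context length, so serving long contexts (up to 128K tokens) needs noticeably more memory than this weights-only figure.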

This model underwent a multi-phase post-training process to enhance both its reasoning and non-reasoning capabilities. This includes a supervised fine-tuning (SFT) stage for Math, Code, Reasoning, and Tool Calling, as well as multiple reinforcement learning (RL) stages using Reward-aware Preference Optimization (RPO) algorithms for both chat and instruction-following. The final model checkpoint was obtained by merging the final SFT and RPO checkpoints.

This model is part of the Llama Nemotron Collection. You can find the other model(s) in this family here:

This model is ready for commercial use.

License/Terms of Use

GOVERNING TERMS: Your use of this model is governed by the NVIDIA Open Model License. Additional Information: Llama 3.1 Community License Agreement. Built with Llama.

Model Developer: NVIDIA

Model Dates: Trained between August 2024 and May 2025

Data Freshness: The pretraining data has a cutoff of June 2023.

Use Case:

Developers designing AI agent systems, chatbots, RAG systems, and other AI-powered applications. Also suitable for typical instruction-following tasks. The model balances accuracy and compute efficiency (it fits on a single RTX GPU and can be used locally).

Release Date:

5/20/2025

References

- [2408.11796] LLM Pruning and Distillation in Practice: The Minitron Approach

- [2502.00203] Reward-aware Preference Optimization: A Unified Mathematical Framework for Model Alignment

- [2505.00949] Llama-Nemotron: Efficient Reasoning Models

Model Architecture

Architecture Type: Dense decoder-only Transformer model

Network Architecture: Llama 3.1 Minitron Width 4B Base

Intended use

Llama-3.1-Nemotron-Nano-4B-v1.1 is a general purpose reasoning and chat model intended to be used in English and coding languages. Other non-English languages (German, French, Italian, Portuguese, Hindi, Spanish, and Thai) are also supported.

Input:

- Input Type: Text

- Input Format: String

- Input Parameters: One-Dimensional (1D)

- Other Properties Related to Input: Context length up to 131,072 tokens

Output:

- Output Type: Text

- Output Format: String

- Output Parameters: One-Dimensional (1D)

- Other Properties Related to Output: Context length up to 131,072 tokens

Model Version:

1.1 (5/20/2025)

Software Integration

- Runtime Engine: NeMo 24.12

- Recommended Hardware Microarchitecture Compatibility:
  - NVIDIA Hopper
  - NVIDIA Ampere

Quick Start and Usage Recommendations:

- Reasoning mode (ON/OFF) is controlled via the system prompt, which must be set as shown in the examples below. All instructions should be contained within the user prompt.
- For Reasoning ON mode, we recommend setting temperature to `0.6` and Top P to `0.95`.
- For Reasoning OFF mode, we recommend greedy decoding.
- We have provided a list of prompts to use for evaluation for each benchmark where a specific template is required.
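The recommendations above can be captured in a small helper. This is a hypothetical sketch: the name `build_request` and the returned dict layout are illustrative, while `do_sample`, `temperature`, and `top_p` are standard Transformers generation arguments. The system prompt carries only the reasoning toggle, and the task goes in the user turn.

```python
from typing import Any


def build_request(user_prompt: str, reasoning: bool) -> dict[str, Any]:
    """Assemble chat messages and sampling settings for one reasoning mode."""
    mode = "on" if reasoning else "off"
    messages = [
        # The system prompt only toggles reasoning.
        {"role": "system", "content": f"detailed thinking {mode}"},
        # All task instructions belong in the user turn.
        {"role": "user", "content": user_prompt},
    ]
    if reasoning:
        # Reasoning ON: sample with temperature 0.6 and top-p 0.95.
        gen_kwargs = {"do_sample": True, "temperature": 0.6, "top_p": 0.95}
    else:
        # Reasoning OFF: greedy decoding.
        gen_kwargs = {"do_sample": False}
    return {"messages": messages, "generation_kwargs": gen_kwargs}


req = build_request("Solve x*(sin(x)+2)=0", reasoning=True)
print(req["messages"][0]["content"])  # prints "detailed thinking on"
```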

See the snippets below for usage with the Hugging Face Transformers library. Reasoning mode (ON/OFF) is controlled via the system prompt.

The code requires `transformers` version 4.44.2 or higher.
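To check the installed version against this requirement, you could compare release numbers as in the stdlib-only sketch below. The helper name `meets_min_version` is hypothetical; in practice, `packaging.version.Version` provides full PEP 440 comparisons, and this simplified version ignores pre-release suffixes.

```python
import re


def meets_min_version(installed: str, required: str = "4.44.2") -> bool:
    """Return True if `installed` is at least `required`.

    Compares only the leading numeric release components (e.g. "4.51.3"),
    so suffixes such as ".dev0" are ignored. Not a full PEP 440 implementation.
    """

    def release(v: str) -> tuple[int, ...]:
        match = re.match(r"\d+(?:\.\d+)*", v)
        if match is None:
            raise ValueError(f"unrecognized version string: {v!r}")
        return tuple(int(part) for part in match.group(0).split("."))

    have, need = release(installed), release(required)
    # Pad with zeros so "4.45" compares like "4.45.0".
    width = max(len(have), len(need))
    have += (0,) * (width - len(have))
    need += (0,) * (width - len(need))
    return have >= need


# Typical use: meets_min_version(transformers.__version__)
print(meets_min_version("4.44.2"))  # prints True
print(meets_min_version("4.43.4"))  # prints False
```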

Example of “Reasoning On”:

```python
import torch
import transformers

model_id = "nvidia/Llama-3.1-Nemotron-Nano-4B-v1.1"
model_kwargs = {"torch_dtype": torch.bfloat16, "device_map": "auto"}
tokenizer = transformers.AutoTokenizer.from_pretrained(model_id)
tokenizer.pad_token_id = tokenizer.eos_token_id

pipeline = transformers.pipeline(
    "text-generation",
    model=model_id,
    tokenizer=tokenizer,
    max_new_tokens=32768,
    do_sample=True,  # sampling must be enabled for temperature/top_p to take effect
    temperature=0.6,
    top_p=0.95,
    **model_kwargs
)

# Thinking can be "on" or "off"
thinking = "on"

print(pipeline([{"role": "system", "content": f"detailed thinking {thinking}"}, {"role": "user", "content": "Solve x*(sin(x)+2)=0"}]))
```

Example of “Reasoning Off”:

```python
import torch
import transformers

model_id = "nvidia/Llama-3.1-Nemotron-Nano-4B-v1.1"
model_kwargs = {"torch_dtype": torch.bfloat16, "device_map": "auto"}
tokenizer = transformers.AutoTokenizer.from_pretrained(model_id)
tokenizer.pad_token_id = tokenizer.eos_token_id

pipeline = transformers.pipeline(
    "text-generation",
    model=model_id,
    tokenizer=tokenizer,
    max_new_tokens=32768,
    do_sample=False,  # greedy decoding for Reasoning OFF mode
    **model_kwargs
)

# Thinking can be "on" or "off"
thinking = "off"

print(pipeline([{"role": "system", "content": f"detailed thinking {thinking}"}, {"role": "user", "content": "Solve x*(sin(x)+2)=0"}]))
```

For some prompts, the model may still choose to think before responding even when thinking is disabled. If desired, you can prevent this by pre-filling the assistant response with an empty thinking block:

```python
import torch
import transformers

model_id = "nvidia/Llama-3.1-Nemotron-Nano-4B-v1.1"
model_kwargs = {"torch_dtype": torch.bfloat16, "device_map": "auto"}
tokenizer = transformers.AutoTokenizer.from_pretrained(model_id)
tokenizer.pad_token_id = tokenizer.eos_token_id

# Thinking can be "on" or "off"
thinking = "off"

pipeline = transformers.pipeline(
    "text-generation",
    model=model_id,
    tokenizer=tokenizer,
    max_new_tokens=32768,
    do_sample=False,
    **model_kwargs
)

# The final assistant message pre-fills an empty <think> block
print(pipeline([{"role": "system", "content": f"detailed thinking {thinking}"}, {"role": "user", "content": "Solve x*(sin(x)+2)=0"}, {"role": "assistant", "content": "<think>\n</think>"}]))
```
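Generated text may contain a `<think>...</think>` block, empty or not, ahead of the final answer. The sketch below separates the two. It assumes the chat-style pipeline output shape `[{"generated_text": [..., {"role": "assistant", "content": ...}]}]`; verify this against your Transformers version. The helper names are hypothetical, and `fake_output` is illustrative data, not real model output.

```python
import re

THINK_RE = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def last_assistant_text(pipeline_output: list[dict]) -> str:
    """Return the content of the final assistant message (assumed output shape)."""
    messages = pipeline_output[0]["generated_text"]
    for message in reversed(messages):
        if message["role"] == "assistant":
            return message["content"]
    raise ValueError("no assistant message found")


def split_reasoning(text: str) -> tuple[str, str]:
    """Split generated text into (reasoning, answer).

    Reasoning is the body of the first <think>...</think> block (empty if the
    block is missing or empty); the answer is everything after that block.
    """
    match = THINK_RE.search(text)
    if match is None:
        return "", text.strip()
    return match.group(1).strip(), text[match.end():].strip()


# Illustrative data only, shaped like a chat-style pipeline result.
fake_output = [{"generated_text": [
    {"role": "system", "content": "detailed thinking off"},
    {"role": "user", "content": "Solve x*(sin(x)+2)=0"},
    {"role": "assistant", "content": "<think>\n</think>\n\nThe only real solution is x = 0."},
]}]
reasoning, answer = split_reasoning(last_assistant_text(fake_output))
print(repr(reasoning), answer)  # prints '' The only real solution is x = 0.
```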

Running a vLLM Server with Tool-call Support

Llama-3.1-Nemotron-Nano-4B-v1.1 supports tool calling. This HF repo hosts a tool-calling parser as well as a Jinja chat template, which can be used to launch a vLLM server.

Here is an example shell script that launches a vLLM server with tool-call support. The `vllm/vllm-openai:v0.6.6` image or newer should support the model.

```bash
#!/bin/bash

CWD=$(pwd)
PORT=5000

git clone https://huggingface.co/nvidia/Llama-3.1-Nemotron-Nano-4B-v1.1

docker run -it --rm \
    --runtime=nvidia \
    --gpus all \
    --shm-size=16GB \
    -p ${PORT}:${PORT} \
    -v ${CWD}:${CWD} \
    vllm/vllm-openai:v0.6.6 \
    --model $CWD/Llama-3.1-Nemotron-Nano-4B-v1.1 \
    --trust-remote-code \
    --seed 1 \
    --host "0.0.0.0" \
    --port $PORT \
    --served-model-name "Llama-Nemotron-Nano-4B-v1.1" \
    --tensor-parallel-size 1 \
    --max-model-len 131072 \
    --gpu-memory-utilization 0.95 \
    --enforce-eager \
    --enable-auto-tool-choice \
    --tool-parser-plugin "${CWD}/Llama-3.1-Nemotron-Nano-4B-v1.1/llama_nemotron_nano_toolcall_parser.py" \
    --tool-call-parser "llama_nemotron_json" \
    --chat-template "${CWD}/Llama-3.1-Nemotron-Nano-4B-v1.1/llama_nemotron_nano_generic_tool_calling.jinja"
```

Alternatively, you can launch a vLLM server from a Conda environment:

```bash
$ git clone https://huggingface.co/nvidia/Llama-3.1-Nemotron-Nano-4B-v1.1
$ conda create -n vllm python=3.12 -y
$ conda activate vllm
$ pip install "vllm>=0.6.6"
$ python -m vllm.entrypoints.openai.api_server \
    --model Llama-3.1-Nemotron-Nano-4B-v1.1 \
    --trust-remote-code \
    --seed 1 \
    --host "0.0.0.0" \
    --port 5000 \
    --served-model-name "Llama-Nemotron-Nano-4B-v1.1" \
    --tensor-parallel-size 1 \
    --max-model-len 131072 \
    --gpu-memory-utilization 0.95 \
    --enforce-eager \
    --enable-auto-tool-choice \
    --tool-parser-plugin "Llama-3.1-Nemotron-Nano-4B-v1.1/llama_nemotron_nano_toolcall_parser.py" \
    --tool-call-parser "llama_nemotron_json" \
    --chat-template "Llama-3.1-Nemotron-Nano-4B-v1.1/llama_nemotron_nano_generic_tool_calling.jinja"
```

Once the vLLM server is running, you can call it with tool-call support from Python, for example:

```python
>>> from openai import OpenAI
>>> client = OpenAI(
...     base_url="http://0.0.0.0:5000/v1",
...     api_key="dummy",
... )
>>> completion = client.chat.completions.create(
...     model="Llama-Nemotron-Nano-4B-v1.1",
...     messages=[
...         {"role": "system", "content": "detailed thinking on"},
...         {"role": "user", "content": "My bill is $100. What will be the amount for 18% tip?"},
...     ],
...     tools=[
...         {"type": "function", "function": {"name": "calculate_tip", "parameters": {"type": "object", "properties": {"bill_total": {"type": "integer", "description": "The total amount of the bill"}, "tip_percentage": {"type": "integer", "description": "The percentage of tip to be applied"}}, "required": ["bill_total", "tip_percentage"]}}},
...         {"type": "function", "function": {"name": "convert_currency", "parameters": {"type": "object", "properties": {"amount": {"type": "integer", "description": "The amount to be converted"}, "from_currency": {"type": "string", "description": "The currency code to convert from"}, "to_currency": {"type": "string", "description": "The currency code to convert to"}}, "required": ["from_currency", "amount", "to_currency"]}}},
...     ],
... )
>>> completion.choices[0].message.content
'<think>\nOkay, let\'s see. The user has a bill of $100 and wants to know the amount of a 18% tip. So, I need to calculate the tip amount. The available tools include calculate_tip, which requires bill_total and tip_percentage. The parameters are both integers. The bill_total is 100, and the tip percentage is 18. So, the function should multiply 100 by 18% and return 18.0. But wait, maybe the user wants the total including the tip? The question says "the amount for 18% tip," which could be interpreted as the tip amount itself. Since the function is called calculate_tip, it\'s likely that it\'s designed to compute the tip, not the total. So, using calculate_tip with bill_total=100 and tip_percentage=18 should give the correct result. The other function, convert_currency, isn\'t relevant here. So, I should call calculate_tip with those values.\n</think>\n\n'
>>> completion.choices[0].message.tool_calls
[ChatCompletionMessageToolCall(id='chatcmpl-tool-2972d86817344edc9c1e0f9cd398e999', function=Function(arguments='{"bill_total": 100, "tip_percentage": 18}', name='calculate_tip'), type='function')]
```
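The server returns the tool name and a JSON string of arguments; executing the call is up to the client. The sketch below dispatches such a call to a local Python function. Both `calculate_tip` (the local implementation) and `run_tool_call` are hypothetical; only the name and argument values are copied from the `tool_calls` output above.

```python
import json
from typing import Any, Callable


def calculate_tip(bill_total: int, tip_percentage: int) -> float:
    """Hypothetical local implementation of the `calculate_tip` tool."""
    return bill_total * tip_percentage / 100


# Registry mapping tool names (as declared to the server) to local functions.
TOOLS: dict[str, Callable[..., Any]] = {"calculate_tip": calculate_tip}


def run_tool_call(name: str, arguments: str) -> Any:
    """Look up a tool by name and call it with its JSON-encoded keyword arguments."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    kwargs = json.loads(arguments)
    return TOOLS[name](**kwargs)


# Name and arguments copied from the tool_calls output above.
result = run_tool_call("calculate_tip", '{"bill_total": 100, "tip_percentage": 18}')
print(result)  # prints 18.0
```

With the OpenAI client, the same values are available as `tool_call.function.name` and `tool_call.function.arguments` for each entry in `completion.choices[0].message.tool_calls`. In the OpenAI chat format, a result is usually sent back as a message with `"role": "tool"` and the matching `tool_call_id`; whether this model's chat template consumes tool results exactly that way is an assumption to verify.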

Inference:

- Engine: Transformers
- Test Hardware:
  - BF16:
    - 1x RTX 50 Series GPUs
    - 1x RTX 40 Series GPUs
    - 1x RTX 30 Series GPUs
    - 1x H100-80GB GPU
    - 1x A100-80GB GPU

[Preferred/Supported] Operating System(s): Linux

Training Datasets

A large variety of training data was used for the post-training pipeline, including manually annotated data and synthetic data.

The data for the multi-stage post-training phases is a compilation of SFT and RL data that improves the math, code, general reasoning, and instruction-following capabilities of the original Llama instruct model.

Prompts were either sourced from public, open corpora or synthetically generated. Responses were synthetically generated by a variety of models, with some prompts containing responses for both Reasoning On and Off modes to train the model to distinguish between the two modes.

Data Collection for Training Datasets:

- Hybrid: Automated, Human, Synthetic

Data Labeling for Training Datasets:

- N/A

Evaluation Datasets

We used the datasets listed below to evaluate Llama-3.1-Nemotron-Nano-4B-v1.1.

Data Collection for Evaluation Datasets: Hybrid: Human/Synthetic

Data Labeling for Evaluation Datasets: Hybrid: Human/Synthetic/Automatic

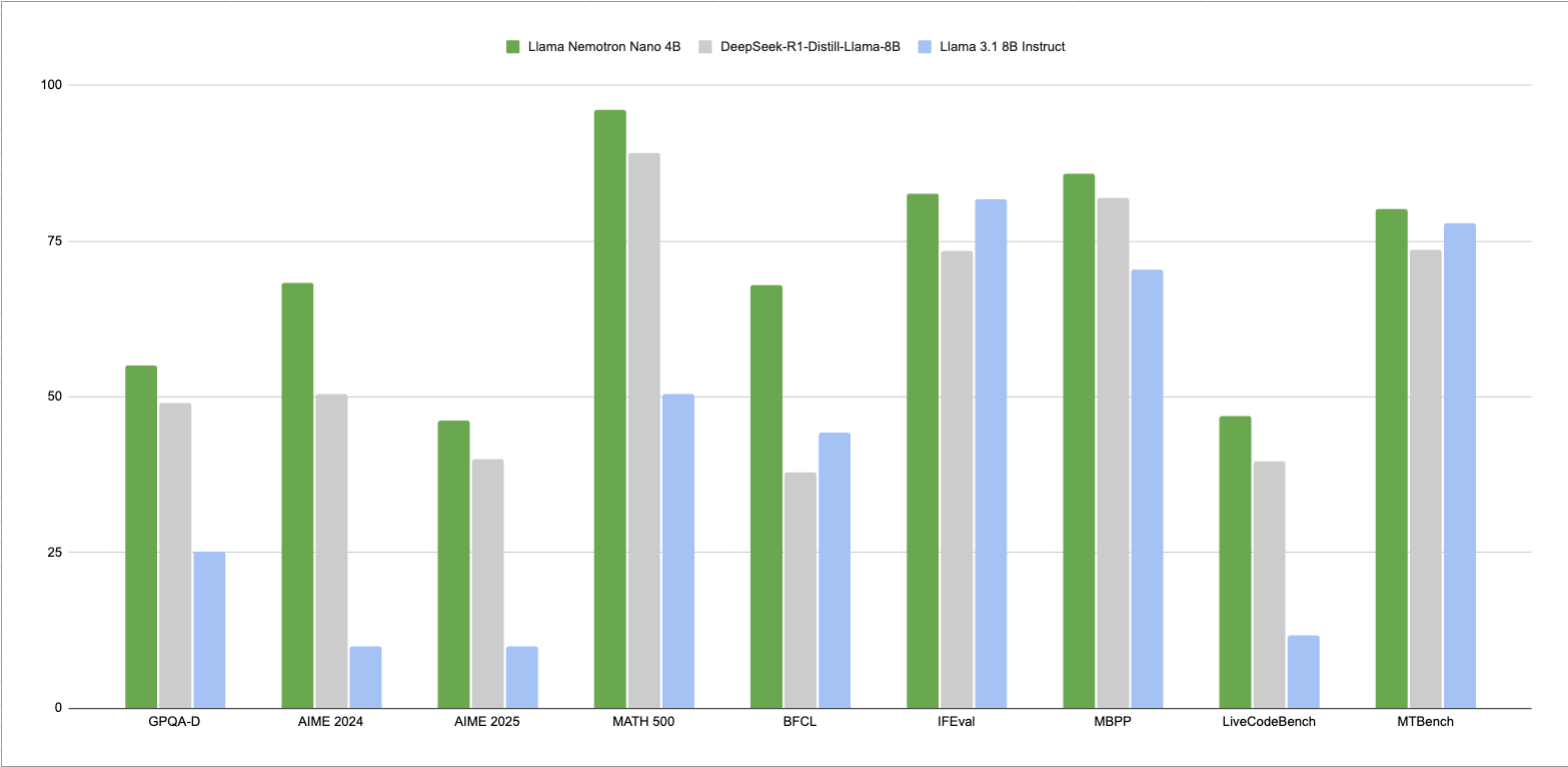

Evaluation Results

These results cover both “Reasoning On” and “Reasoning Off” modes. We recommend using temperature=0.6 and top_p=0.95 for “Reasoning On” mode, and greedy decoding for “Reasoning Off” mode. All evaluations are done with a 32k sequence length. We run each benchmark up to 16 times and average the scores to reduce run-to-run variance.
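The card does not specify exactly how scores are aggregated across runs. A minimal sketch, assuming pass@1 is computed separately for each run and the per-run scores are then averaged, could look like this. The function name and the run data are hypothetical and illustrative only.

```python
from statistics import mean


def averaged_pass_at_1(runs: list[list[bool]]) -> float:
    """Average pass@1 over repeated runs of one benchmark.

    runs[r][i] is True if problem i was solved in run r. Each run's accuracy
    is computed first, then the per-run accuracies are averaged.
    """
    if not runs:
        raise ValueError("need at least one run")
    per_run = [sum(results) / len(results) for results in runs]
    return mean(per_run)


# Three hypothetical runs over four problems (illustrative data only).
runs = [
    [True, True, False, True],
    [True, False, False, True],
    [True, True, True, True],
]
print(f"{averaged_pass_at_1(runs):.2%}")  # prints 75.00%
```

When every run covers the same problems, this equals the fraction of solved (run, problem) pairs; averaging over more runs mainly reduces sampling noise in Reasoning On mode.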

NOTE: Where applicable, a prompt template is provided. When running the benchmarks, make sure you parse the output in the format requested by the provided prompt in order to reproduce the results below.

MT-Bench

| Reasoning Mode | Score |
|---|---|
| Reasoning Off | 7.4 |
| Reasoning On | 8.0 |

MATH500

| Reasoning Mode | pass@1 |
|---|---|
| Reasoning Off | 71.8% |
| Reasoning On | 96.2% |

User Prompt Template:

"Below is a math question. I want you to reason through the steps and then give a final answer. Your final answer should be in \boxed{}.\nQuestion: {question}"

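Following the parsing note above, here is one way to fill this template and pull the final answer out of `\boxed{}`. It is a sketch with hypothetical helper names; the same template is used for AIME25. It takes the last `\boxed{...}` and handles nested braces such as `\frac{1}{2}`. Answer normalization and equivalence checking are out of scope here.

```python
from typing import Optional

MATH_TEMPLATE = (
    "Below is a math question. I want you to reason through the steps and then "
    "give a final answer. Your final answer should be in \\boxed{}.\n"
    "Question: {question}"
)


def fill_math_prompt(question: str) -> str:
    # str.format() would treat the literal "\boxed{}" as a positional field and
    # fail, so substitute the placeholder with str.replace() instead.
    return MATH_TEMPLATE.replace("{question}", question)


def extract_boxed(text: str) -> Optional[str]:
    """Return the contents of the last \\boxed{...} in text, handling nested braces."""
    start = text.rfind("\\boxed{")
    if start == -1:
        return None
    content_start = start + len("\\boxed{")
    depth = 1
    for i in range(content_start, len(text)):
        if text[i] == "{":
            depth += 1
        elif text[i] == "}":
            depth -= 1
            if depth == 0:
                return text[content_start:i]
    return None  # unbalanced braces: no complete answer


print(extract_boxed(r"so x = \boxed{\frac{1}{2}}"))  # prints \frac{1}{2}
```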
AIME25

| Reasoning Mode | pass@1 |
|---|---|
| Reasoning Off | 13.3% |
| Reasoning On | 46.3% |

User Prompt Template:

"Below is a math question. I want you to reason through the steps and then give a final answer. Your final answer should be in \boxed{}.\nQuestion: {question}"

GPQA-D

| Reasoning Mode | pass@1 |
|---|---|
| Reasoning Off | 33.8% |
| Reasoning On | 55.1% |

User Prompt Template:

"What is the correct answer to this question: {question}\nChoices:\nA. {option_A}\nB. {option_B}\nC. {option_C}\nD. {option_D}\nLet's think step by step, and put the final answer (should be a single letter A, B, C, or D) into a \boxed{}"

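The GPQA-D template mixes named placeholders with a literal `\boxed{}`, so a single-pass regex substitution is a convenient way to fill it. The sketch below also extracts the final letter. The helper names are hypothetical. The extraction regex only accepts a bare letter such as `\boxed{C}`; other answer styles (for example `\boxed{\text{C}}`) would need extra handling, which is an assumption left open here.

```python
import re
from typing import Optional

GPQA_TEMPLATE = (
    "What is the correct answer to this question: {question}\n"
    "Choices:\nA. {option_A}\nB. {option_B}\nC. {option_C}\nD. {option_D}\n"
    "Let's think step by step, and put the final answer (should be a single "
    "letter A, B, C, or D) into a \\boxed{}"
)
# Matches only the named placeholders, so the literal "\boxed{}" is left intact.
PLACEHOLDER_RE = re.compile(r"\{(question|option_[ABCD])\}")
BOXED_LETTER_RE = re.compile(r"\\boxed\{\s*([A-D])\s*\}")


def fill_gpqa_prompt(question: str, options: list[str]) -> str:
    """Fill the template in one pass so substituted text is never re-scanned."""
    if len(options) != 4:
        raise ValueError("the template expects exactly four options")
    values = {"question": question}
    values.update({f"option_{letter}": opt for letter, opt in zip("ABCD", options)})
    return PLACEHOLDER_RE.sub(lambda m: values[m.group(1)], GPQA_TEMPLATE)


def extract_choice(text: str) -> Optional[str]:
    """Return the letter in the last \\boxed{A-D}, or None if there is none."""
    matches = BOXED_LETTER_RE.findall(text)
    return matches[-1] if matches else None


print(extract_choice(r"... so the answer is \boxed{C}"))  # prints C
```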
IFEval

| Reasoning Mode | Strict:Prompt | Strict:Instruction |
|---|---|---|
| Reasoning Off | 70.1% | 78.5% |
| Reasoning On | 75.5% | 82.6% |

BFCL v2 Live

| Reasoning Mode | Score |
|---|---|
| Reasoning Off | 63.6% |
| Reasoning On | 67.9% |

User Prompt Template:

<AVAILABLE_TOOLS>{functions}</AVAILABLE_TOOLS>

{user_prompt}
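The card does not say how `{functions}` is serialized for BFCL. The sketch below assumes a JSON array of function definitions, and a blank line between the tool block and the user prompt as the template appears above. Both are assumptions, and `fill_bfcl_prompt` is a hypothetical name.

```python
import json
from typing import Any

# Assumption: a blank line separates the tool block from the user prompt.
BFCL_TEMPLATE = "<AVAILABLE_TOOLS>{functions}</AVAILABLE_TOOLS>\n\n{user_prompt}"


def fill_bfcl_prompt(functions: list[dict[str, Any]], user_prompt: str) -> str:
    # Assumption: the tool list is serialized as a JSON array. str.format() is
    # safe here because the template has no other braces, and braces inside the
    # substituted JSON are not re-interpreted.
    return BFCL_TEMPLATE.format(functions=json.dumps(functions), user_prompt=user_prompt)


tools = [{
    "name": "calculate_tip",
    "parameters": {
        "type": "object",
        "properties": {
            "bill_total": {"type": "integer"},
            "tip_percentage": {"type": "integer"},
        },
        "required": ["bill_total", "tip_percentage"],
    },
}]
print(fill_bfcl_prompt(tools, "My bill is $100. What will be the amount for 18% tip?"))
```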

MBPP 0-shot

| Reasoning Mode | pass@1 |
|---|---|
| Reasoning Off | 61.9% |
| Reasoning On | 85.8% |

User Prompt Template:

You are an exceptionally intelligent coding assistant that consistently delivers accurate and reliable responses to user instructions.

@@ Instruction

Here is the given problem and test examples:

{prompt}

Please use the python programming language to solve this problem.

Please make sure that your code includes the functions from the test samples and that the input and output formats of these functions match the test samples.

Please return all completed codes in one code block.

This code block should be in the following format:

```python

# Your codes here

```
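Because this template asks for the solution in a single fenced Python block, scoring starts by extracting that block from the response. The sketch below takes the last such block. The helper name is hypothetical, and running the extracted code against the benchmark's tests is not shown.

```python
import re
from typing import Optional

# Three backticks, built programmatically so the snippet renders inside a
# Markdown code fence.
FENCE = "`" * 3
CODE_BLOCK_RE = re.compile(FENCE + r"python[ \t]*\n(.*?)" + FENCE, re.DOTALL)


def extract_python_block(response: str) -> Optional[str]:
    """Return the body of the last fenced python block in a model response."""
    blocks = CODE_BLOCK_RE.findall(response)
    return blocks[-1] if blocks else None


response = "Here is my solution:\n" + FENCE + "python\ndef add(a, b):\n    return a + b\n" + FENCE
print(extract_python_block(response))
```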

Ethical Considerations:

NVIDIA believes Trustworthy AI is a shared responsibility and we have established policies and practices to enable development for a wide array of AI applications. When downloaded or used in accordance with our terms of service, developers should work with their internal model team to ensure this model meets requirements for the relevant industry and use case and addresses unforeseen product misuse.

For more detailed information on ethical considerations for this model, please see the Model Card++ Explainability, Bias, Safety & Security, and Privacy Subcards.

Please report security vulnerabilities or NVIDIA AI Concerns here.