X-CLIP (base-sized model)

X-CLIP model (base-sized, patch resolution of 16) trained in a few-shot fashion (K=2, i.e. two labeled videos per class) on UCF101. It was introduced in the paper Expanding Language-Image Pretrained Models for General Video Recognition by Ni et al. and first released in this repository.

This model was trained using 32 frames per video, at a resolution of 224x224.

Disclaimer: The team releasing X-CLIP did not write a model card for this model, so this model card has been written by the Hugging Face team.

Model description

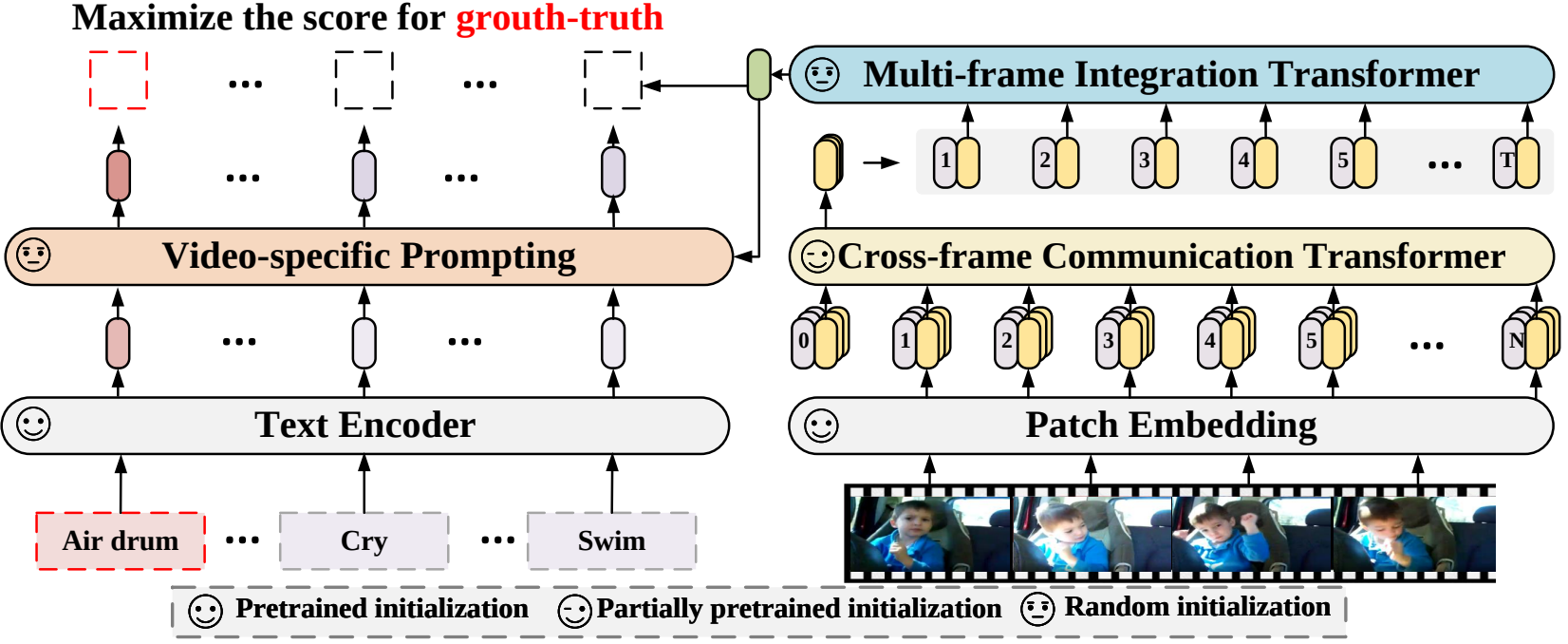

X-CLIP is a minimal extension of CLIP for general video-language understanding. The model is trained in a contrastive way on (video, text) pairs.

This allows the model to be used for tasks like zero-shot, few-shot or fully supervised video classification and video-text retrieval.
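As a rough illustration of contrastive training on (video, text) pairs, the sketch below computes a CLIP-style symmetric contrastive (InfoNCE) loss in NumPy. It assumes the video and text embeddings have already been produced by the two encoders. The function names and the temperature of 0.07 are illustrative assumptions, and this is not necessarily the exact objective used to train X-CLIP.

```python
import numpy as np

def l2_normalize(x, axis=-1):
    # Project each embedding onto the unit sphere so dot products are cosine similarities.
    return x / np.linalg.norm(x, axis=axis, keepdims=True)

def log_softmax(logits, axis):
    # Numerically stable log-softmax along the given axis.
    shifted = logits - logits.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def contrastive_loss(video_emb, text_emb, temperature=0.07):
    """Symmetric InfoNCE loss for a batch where video i is paired with text i.

    video_emb, text_emb: arrays of shape (batch, dim). The temperature is an assumed value.
    """
    v = l2_normalize(video_emb)
    t = l2_normalize(text_emb)
    logits = v @ t.T / temperature            # (batch, batch) scaled cosine similarities
    idx = np.arange(logits.shape[0])          # matching pairs sit on the diagonal
    loss_video_to_text = -log_softmax(logits, axis=1)[idx, idx].mean()  # each video picks its text
    loss_text_to_video = -log_softmax(logits, axis=0)[idx, idx].mean()  # each text picks its video
    return (loss_video_to_text + loss_text_to_video) / 2

# Usage with random stand-in embeddings (no real encoder involved).
rng = np.random.default_rng(0)
videos = rng.normal(size=(4, 512))
matched = contrastive_loss(videos, videos + 0.01 * rng.normal(size=(4, 512)))
unmatched = contrastive_loss(videos, rng.normal(size=(4, 512)))
print(matched, unmatched)  # the matched batch should have the much lower loss
```

Pushing matching pairs together and mismatched pairs apart in both directions (video to text and text to video) is what lets a single similarity score later serve classification and retrieval alike.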

Intended uses & limitations

You can use the raw model to score how well a piece of text matches a given video. See the model hub for fine-tuned versions on a task that interests you.
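A minimal sketch of how such a score can be turned into a prediction, assuming you already have one video embedding and one embedding per candidate text: cosine similarities are scaled and passed through a softmax over the texts. The helper name `text_video_probs` and the scale of 100 are hypothetical choices for illustration, not values read from this checkpoint.

```python
import numpy as np

def text_video_probs(video_emb, text_embs, logit_scale=100.0):
    """Softmax over candidate texts of the scaled cosine similarity to one video.

    video_emb: shape (dim,); text_embs: shape (num_texts, dim). logit_scale is an assumed value.
    """
    v = video_emb / np.linalg.norm(video_emb)
    t = text_embs / np.linalg.norm(text_embs, axis=1, keepdims=True)
    logits = logit_scale * (t @ v)     # one score per candidate text
    logits = logits - logits.max()     # subtract the max for numerical stability
    probs = np.exp(logits)
    return probs / probs.sum()

# Usage with stand-in embeddings: the video embedding is built close to text 1.
rng = np.random.default_rng(0)
texts = rng.normal(size=(3, 512))
video = texts[1] + 0.05 * rng.normal(size=512)
probs = text_video_probs(video, texts)
print(probs.argmax())  # index of the best-matching text
```

For zero-shot classification, each candidate text would typically be a class name or a prompt built from it.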

How to use

For code examples, we refer to the documentation.
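A sketch using the X-CLIP classes in the transformers library. The hub id `microsoft/xclip-base-patch16-ucf-2-shot` is assumed to correspond to this checkpoint, the label prompts are arbitrary, and random frames stand in for a real decoded video. In practice you would sample 32 frames from your clip, matching the frame count this model was trained with.

```python
import numpy as np
import torch
from transformers import XCLIPModel, XCLIPProcessor

# Assumed hub id for this checkpoint; substitute the one you actually use.
model_name = "microsoft/xclip-base-patch16-ucf-2-shot"
processor = XCLIPProcessor.from_pretrained(model_name)
model = XCLIPModel.from_pretrained(model_name)

# Stand-in video: 32 random RGB frames of shape (height, width, channels).
video = list(np.random.randint(0, 256, size=(32, 224, 224, 3), dtype=np.uint8))
candidate_labels = ["playing guitar", "juggling balls", "riding a horse"]

inputs = processor(text=candidate_labels, videos=video, return_tensors="pt", padding=True)
with torch.no_grad():
    outputs = model(**inputs)

# logits_per_video has shape (num_videos, num_texts); softmax over the texts.
probs = outputs.logits_per_video.softmax(dim=1)
print(dict(zip(candidate_labels, probs[0].tolist())))
```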

Training data

This model was trained on UCF101.

Preprocessing

The exact details of preprocessing during training can be found here.

The exact details of preprocessing during validation can be found here.

During validation, the shorter edge of each frame is resized, after which a center crop of fixed size (e.g. 224x224) is taken. The frames are then normalized per RGB channel using the ImageNet mean and standard deviation.
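The validation pipeline described above can be sketched with NumPy and Pillow. The shorter-edge target of 224 pixels and bilinear interpolation are assumptions made for illustration; the official preprocessing code remains the authoritative reference. The ImageNet statistics are the standard per-channel mean (0.485, 0.456, 0.406) and standard deviation (0.229, 0.224, 0.225).

```python
import numpy as np
from PIL import Image

IMAGENET_MEAN = np.array([0.485, 0.456, 0.406], dtype=np.float32)
IMAGENET_STD = np.array([0.229, 0.224, 0.225], dtype=np.float32)

def preprocess_frame(frame, size=224):
    """frame: uint8 array (H, W, 3). Returns a float32 array (3, size, size)."""
    img = Image.fromarray(frame)
    w, h = img.size
    # Resize so the shorter edge equals `size`, keeping the aspect ratio (assumed target).
    scale = size / min(w, h)
    new_w, new_h = max(size, round(w * scale)), max(size, round(h * scale))
    img = img.resize((new_w, new_h), Image.BILINEAR)
    # Center crop a size x size square.
    left = (new_w - size) // 2
    top = (new_h - size) // 2
    img = img.crop((left, top, left + size, top + size))
    # Scale to [0, 1], then normalize each RGB channel with the ImageNet statistics.
    x = np.asarray(img, dtype=np.float32) / 255.0
    x = (x - IMAGENET_MEAN) / IMAGENET_STD
    return x.transpose(2, 0, 1)  # HWC -> CHW

def preprocess_video(frames, size=224):
    # Apply the same per-frame transform to every frame and stack along a time axis.
    return np.stack([preprocess_frame(f, size) for f in frames])

frames = np.random.randint(0, 256, size=(32, 240, 320, 3), dtype=np.uint8)
clip = preprocess_video(list(frames))
print(clip.shape)  # (32, 3, 224, 224)
```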

Evaluation results

This model achieves a top-1 accuracy of 76.4%.
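Top-1 accuracy counts a video as correct when the class with the highest score matches its label. A minimal sketch of the metric, using a made-up batch of scores rather than any real model output:

```python
import numpy as np

def top1_accuracy(logits, labels):
    """logits: (num_videos, num_classes) scores; labels: (num_videos,) integer class ids."""
    predictions = np.argmax(logits, axis=1)   # highest-scoring class per video
    return float((predictions == labels).mean())

logits = np.array([[0.1, 0.9, 0.0],
                   [0.8, 0.1, 0.1],
                   [0.2, 0.3, 0.5],
                   [0.6, 0.3, 0.1]])
labels = np.array([1, 0, 1, 0])
print(top1_accuracy(logits, labels))  # 0.75
```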
