To trigger image generation of the trained concept (or concepts), replace each concept identifier in your prompt with the newly inserted tokens:

to trigger concept `TOK` → use `<s0><s1>` in your prompt
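The substitution described above can be sketched as a small helper. This is only an illustration: `apply_trigger_tokens` and `TOKEN_MAP` are hypothetical names that are not part of diffusers, and the mapping simply restates the `TOK` to `<s0><s1>` rule from this card.

```python
import re

# Mapping from this card: concept identifier TOK -> inserted tokens <s0><s1>.
# TOKEN_MAP is a hypothetical name used only for this sketch.
TOKEN_MAP = {"TOK": ["<s0>", "<s1>"]}


def apply_trigger_tokens(prompt: str, concept_to_tokens: dict) -> str:
    """Replace each whole-word concept identifier with its inserted tokens."""
    for concept, tokens in concept_to_tokens.items():
        replacement = "".join(tokens)
        # \b keeps identifiers embedded in longer words (e.g. "TOKEN") untouched.
        pattern = r"\b" + re.escape(concept) + r"\b"
        prompt = re.sub(pattern, lambda _match: replacement, prompt)
    return prompt


prompt = apply_trigger_tokens("TOK webpage about the movie Mean Girls", TOKEN_MAP)
print(prompt)
```

The resulting string can be passed to the pipeline as the prompt.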

from diffusers import AutoPipelineForText2Image

import torch

from huggingface_hub import hf_hub_download

from safetensors.torch import load_file

pipeline = AutoPipelineForText2Image.from_pretrained('stabilityai/stable-diffusion-xl-base-1.0', torch_dtype=torch.float16).to('cuda')

pipeline.load_lora_weights('LinoyTsaban/web_y2k', weight_name='pytorch_lora_weights.safetensors')

embedding_path = hf_hub_download(repo_id='LinoyTsaban/web_y2k', filename="embeddings.safetensors", repo_type="model")

state_dict = load_file(embedding_path)

pipeline.load_textual_inversion(state_dict["clip_l"], token=["<s0>", "<s1>"], text_encoder=pipe.text_encoder, tokenizer=pipe.tokenizer)

pipeline.load_textual_inversion(state_dict["clip_g"], token=["<s0>", "<s1>"], text_encoder=pipe.text_encoder_2, tokenizer=pipe.tokenizer_2)

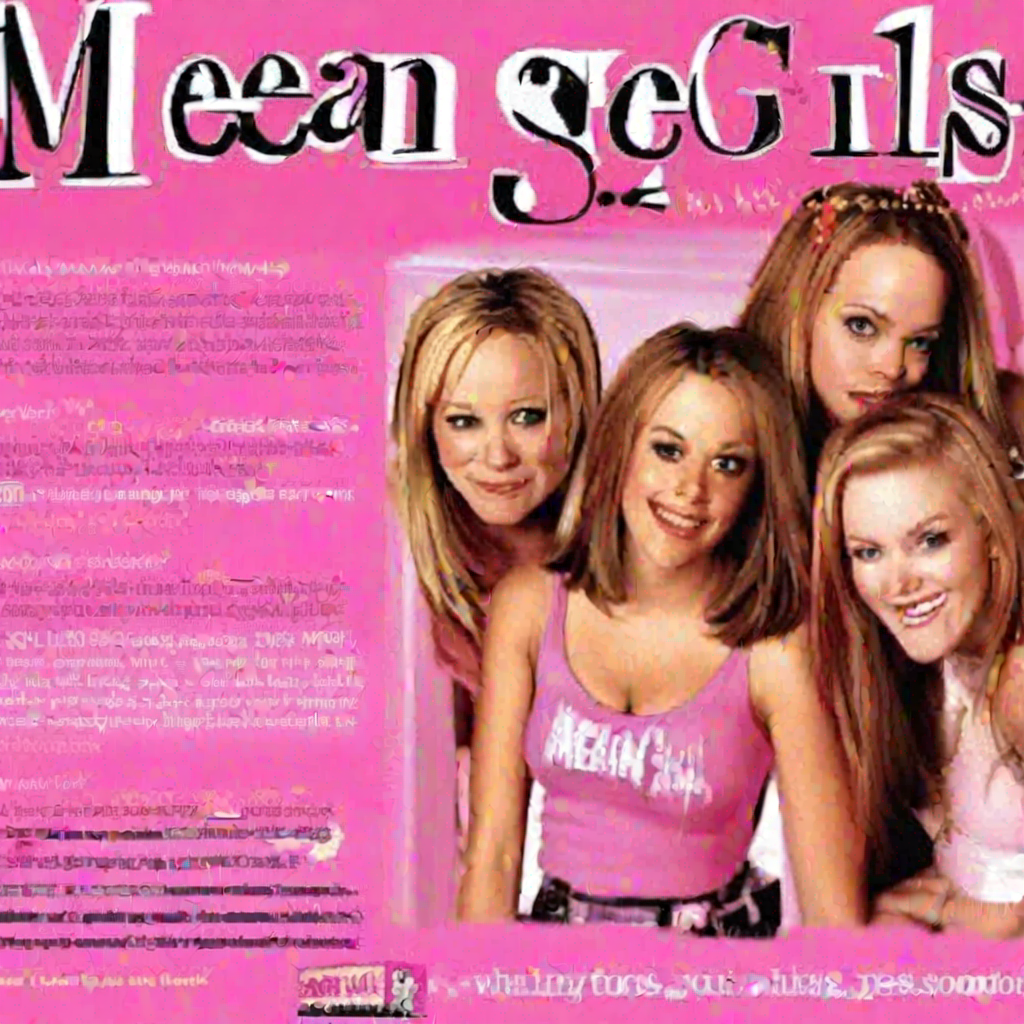

image = pipeline('<s0><s1> webpage about the movie Mean Girls').images[0]

For more details, including weighting, merging and fusing LoRAs, check the documentation on loading LoRAs in diffusers.
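As a rough sketch of the weighting and fusing mentioned above, the helpers below wrap two calls that diffusers pipelines expose: passing `cross_attention_kwargs={"scale": ...}` at generation time, and `fuse_lora(lora_scale=...)`. The helper names and the default scale of 0.8 are assumptions made for illustration, not values taken from this card.

```python
def generate_with_lora_scale(pipeline, prompt, lora_scale=0.8):
    """Generate one image with the LoRA contribution scaled at call time.

    `pipeline` is assumed to be a diffusers pipeline with LoRA weights
    already loaded; 0.8 is an arbitrary illustrative default.
    """
    result = pipeline(prompt, cross_attention_kwargs={"scale": lora_scale})
    return result.images[0]


def fuse_lora_into_base(pipeline, lora_scale=0.8):
    """Merge the loaded LoRA weights into the base model weights.

    After fusing, the scale is baked in; diffusers also provides
    `unfuse_lora()` to undo the merge.
    """
    pipeline.fuse_lora(lora_scale=lora_scale)
    return pipeline
```

With the pipeline from the snippet above, a call such as `generate_with_lora_scale(pipeline, '<s0><s1> webpage about the movie Mean Girls', 0.6)` would reduce the LoRA's influence relative to the default.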

All Files & versions.

The weights were trained using 🧨 diffusers Advanced Dreambooth Training Script.

LoRA for the text encoder was enabled: False.

Pivotal tuning was enabled: True.

Special VAE used for training: madebyollin/sdxl-vae-fp16-fix.
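The card only states that this VAE was used for training. If one also wanted to use it at inference time (an assumption, not something the card prescribes), a minimal sketch could look like the following. `load_pipeline_with_fp16_vae` is a hypothetical helper name; `AutoencoderKL.from_pretrained` and the `vae=` argument are standard diffusers usage.

```python
def load_pipeline_with_fp16_vae(
    base_model="stabilityai/stable-diffusion-xl-base-1.0",
    vae_model="madebyollin/sdxl-vae-fp16-fix",
    device="cuda",
):
    """Build an SDXL pipeline whose VAE is swapped for the fp16-fix VAE."""
    # Imported lazily so that defining this helper does not require torch or diffusers.
    import torch
    from diffusers import AutoencoderKL, AutoPipelineForText2Image

    # Load the replacement VAE in half precision
    vae = AutoencoderKL.from_pretrained(vae_model, torch_dtype=torch.float16)

    # Pass the VAE explicitly so it overrides the one bundled with the base model
    pipeline = AutoPipelineForText2Image.from_pretrained(
        base_model, vae=vae, torch_dtype=torch.float16
    )
    return pipeline.to(device)
```

The LoRA weights and embeddings would then be loaded onto the returned pipeline in the same way as in the usage snippet.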

Base model: stabilityai/stable-diffusion-xl-base-1.0