license: apache-2.0

LimaRP-Mistral-7B-v0.1 (Alpaca, 8-bit LoRA adapter)

This is an experimental version of LimaRP for Mistral-7B-v0.1, trained on about 1800 samples of up to 4k tokens in length. Contrary to the previously released "v3" version for Llama-2, this one does not include a preliminary finetuning pass on several thousand short stories. Initial testing has shown Mistral to be capable of generating on its own the kind of stories that were included there; its training data appears to be quite diverse and does not seem to have been filtered for content type.

Due to software limitations, finetuning did not yet take advantage of Sliding Window Attention (SWA), which would have allowed longer conversations to be used in the training data. Thus, this version of LimaRP should be considered an initial attempt and will be updated in the future.

For more details about LimaRP, see the model page for the previously released v2 version for Llama-2. Most details written there apply to this version as well. Generally speaking, LimaRP is a longform-oriented, novel-style roleplaying chat model intended to replicate the experience of 1-on-1 roleplay on Internet forums. Short-form, IRC/Discord-style RP (aka "Markdown format") is not supported yet. The model does not include instruction tuning, only manually picked and slightly edited RP conversations with persona and scenario data.

Important notes on generation settings

It's recommended not to go overboard with low tail-free-sampling (TFS) values. In previous testing with Llama-2, decreasing TFS too much appeared to easily yield rather repetitive responses. Extensive testing with Mistral has not been performed yet, but the suggested starting generation settings are:

- TFS = 0.92~0.95

- Temperature = 0.70~0.85

- Repetition penalty = 1.05~1.10

- top-k = 0 (disabled)

- top-p = 1 (disabled)
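As a rough illustration, the suggested ranges above can be kept in a small Python structure and used to sanity-check a preset. This is only a sketch: the key names (`tfs`, `temperature`, and so on) are hypothetical and not tied to any particular backend, and taking the midpoint of each range is an arbitrary choice of starting point, not a recommendation from the testing described above.

```python
# Suggested starting ranges from the list above, as (low, high) pairs.
# Key names are illustrative only; map them to your backend's own names.
SUGGESTED_RANGES = {
    "tfs": (0.92, 0.95),
    "temperature": (0.70, 0.85),
    "repetition_penalty": (1.05, 1.10),
}

# Samplers that the list above says to leave disabled.
DISABLED = {"top_k": 0, "top_p": 1.0}


def starting_settings() -> dict:
    """Return the midpoint of each suggested range plus the disabled samplers."""
    settings = {
        name: round((low + high) / 2, 3)
        for name, (low, high) in SUGGESTED_RANGES.items()
    }
    settings.update(DISABLED)
    return settings


def out_of_range(settings: dict) -> list:
    """List the names of settings that fall outside the suggested ranges."""
    return [
        name
        for name, (low, high) in SUGGESTED_RANGES.items()
        if name in settings and not low <= settings[name] <= high
    ]


if __name__ == "__main__":
    print(starting_settings())
    print(out_of_range({"tfs": 0.5, "temperature": 0.8}))
```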

Prompt format

Same as before. It uses the extended Alpaca format, with ### Input: immediately preceding user inputs and ### Response: immediately preceding model outputs. While Alpaca wasn't originally intended for multi-turn responses, in practice this is not a problem; the format follows a pattern already used by other models.

### Instruction:

Character's Persona: {bot character description}

User's Persona: {user character description}

Scenario: {what happens in the story}

Play the role of Character. You must engage in a roleplaying chat with User below this line. Do not write dialogues and narration for User.

### Input:

User: {utterance}

### Response:

Character: {utterance}

### Input:

User: {utterance}

### Response:

Character: {utterance}

(etc.)

You should:

- Replace all text in curly braces (curly braces included) with your own text.

- Replace User and Character with appropriate names.
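The template above can be assembled programmatically. The following is a minimal sketch, not an official helper: the function name `build_prompt` and its parameters are hypothetical, the exact placement of blank lines is an assumption (the template here does not make it unambiguous), and ending the prompt with an open `### Response:` header followed by the character's name is one common way to cue the next reply rather than something this page prescribes.

```python
def build_prompt(char_name, user_name, char_persona, user_persona, scenario, turns):
    """Assemble an extended-Alpaca prompt following the template above.

    `turns` is a list of (speaker, utterance) pairs in chronological order,
    where speaker is either `user_name` or `char_name`.
    """
    parts = [
        "### Instruction:",
        f"{char_name}'s Persona: {char_persona}",
        "",
        f"{user_name}'s Persona: {user_persona}",
        "",
        f"Scenario: {scenario}",
        "",
        f"Play the role of {char_name}. You must engage in a roleplaying chat "
        f"with {user_name} below this line. Do not write dialogues and "
        f"narration for {user_name}.",
        "",
    ]
    for speaker, utterance in turns:
        # User turns go under "### Input:", character turns under "### Response:".
        header = "### Input:" if speaker == user_name else "### Response:"
        parts += [header, f"{speaker}: {utterance}", ""]
    # Assumption: leave an open response header so the model writes as the character.
    parts += ["### Response:", f"{char_name}:"]
    return "\n".join(parts)


if __name__ == "__main__":
    print(build_prompt(
        "Bob", "Alice", "a weary knight", "a travelling mage",
        "They meet at a roadside inn.",
        [("Alice", "Is this seat taken?")],
    ))
```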

Message length control

Inspired by the previously named "Roleplay" preset in SillyTavern, with this version of LimaRP it is possible to append a length modifier to the response instruction sequence, like this:

### Input:

User: {utterance}

### Response: (length = medium)

Character: {utterance}

This has an immediately noticeable effect on bot responses. The available lengths are: tiny, short, medium, long, huge, humongous, extreme, unlimited. The recommended starting length is medium. Keep in mind that the AI may ramble or impersonate the user with very long messages.

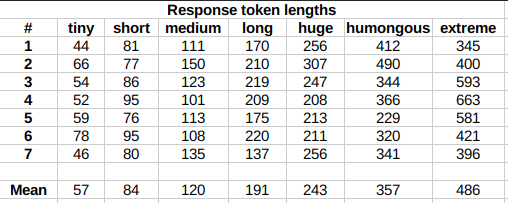

The length control effect is reproducible, but the messages will not necessarily follow lengths very precisely, rather follow certain ranges on average, as seen in this table with data from tests made with one reply at the beginning of the conversation:

Response length control appears to work well also deep into the conversation.
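For frontends that build the prompt in code, the length modifier can be generated and validated with a small helper. This is a sketch under assumptions: the function name `response_header` is hypothetical, and only the length names and the `(length = ...)` syntax come from the description above.

```python
# Length names listed above, from shortest to longest.
LENGTHS = (
    "tiny", "short", "medium", "long",
    "huge", "humongous", "extreme", "unlimited",
)


def response_header(length: str = "medium") -> str:
    """Return the response instruction sequence with a length modifier."""
    if length not in LENGTHS:
        raise ValueError(f"unknown length {length!r}; expected one of {LENGTHS}")
    return f"### Response: (length = {length})"


if __name__ == "__main__":
    print(response_header())        # uses the recommended starting length
    print(response_header("long"))
```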

Suggested settings

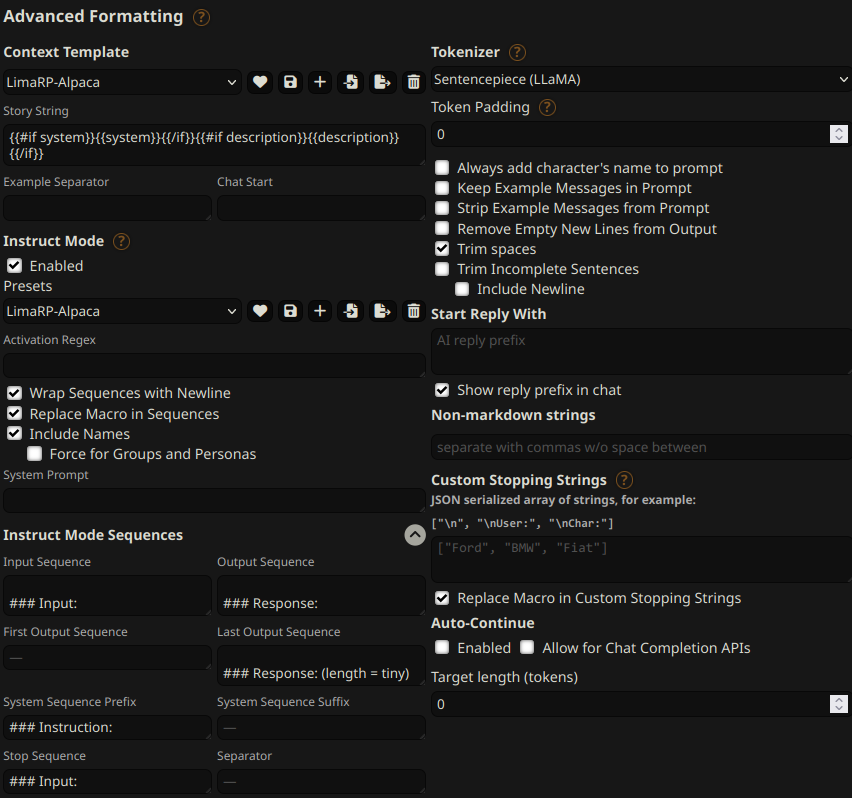

You can follow these instruction format settings in SillyTavern. Replace tiny with

your desired response length:

Training procedure

Axolotl was used for training on a 2x NVIDIA A40 GPU cluster.

The A40 GPU cluster has been graciously provided by Arc Compute.

The model has been trained as an 8-bit LoRA adapter, and it's so large because a LoRA rank of 256 was used. The reasoning was that this might help the model internalize any newly acquired information, making the training process closer to a full finetune. It's suggested to merge the adapter into the base Mistral-7B-v0.1 model.
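One possible way to perform the merge is with the `peft` and `transformers` libraries, sketched below. The function name `merge_adapter`, the `adapter_path` and `output_dir` arguments, and the choice of bfloat16 are assumptions made for illustration; `mistralai/Mistral-7B-v0.1` is assumed to be the Hugging Face ID of the base model. Loading the base model in full precision (not 8-bit) before merging is also an assumption of this sketch, chosen to keep the merged weights simple to save.

```python
def merge_adapter(adapter_path: str, output_dir: str,
                  base_model: str = "mistralai/Mistral-7B-v0.1") -> None:
    """Merge a LoRA adapter into its base model and save the result.

    `adapter_path` is a local directory (or hub ID) containing the adapter;
    `output_dir` is where the merged model and tokenizer are written.
    """
    # Imported lazily because these are heavy, optional dependencies.
    import torch
    from peft import PeftModel
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Load the unquantized base weights (assumption: bf16 is acceptable).
    base = AutoModelForCausalLM.from_pretrained(base_model, torch_dtype=torch.bfloat16)

    # Attach the adapter, then fold its weights into the base layers.
    model = PeftModel.from_pretrained(base, adapter_path)
    merged = model.merge_and_unload()

    # Save the merged model together with the base tokenizer.
    merged.save_pretrained(output_dir)
    AutoTokenizer.from_pretrained(base_model).save_pretrained(output_dir)


if __name__ == "__main__":
    # Hypothetical paths; replace with your own.
    merge_adapter("./limarp-adapter", "./limarp-merged")
```

Note that this needs enough memory to hold the full base model, and the merged output is a regular standalone checkpoint that no longer requires `peft` to load.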

Training hyperparameters

- learning_rate: 0.001

- lr_scheduler_type: cosine

- num_epochs: 2

- lora_r: 256

- lora_alpha: 16

- lora_dropout: 0.05

- lora_target_linear: True

- bf16: True

- tf32: True

- load_in_8bit: True

- adapter: lora

- micro_batch_size: 1

- gradient_accumulation_steps: 16

- optimizer: adamw_torch

Using 2 GPUs, the effective global batch size would have been 32.
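The batch size figure follows directly from the hyperparameters listed above, as the short calculation below shows. The LoRA scaling factor is included as a side note and assumes the standard LoRA formulation, where the adapter update is scaled by `lora_alpha / lora_r`; whether any variant scaling was used in training is not stated here.

```python
# Values taken from the hyperparameter list above.
micro_batch_size = 1
gradient_accumulation_steps = 16
num_gpus = 2

# Each GPU accumulates gradients over 16 micro-batches of 1 sample,
# and the two GPUs process different samples in parallel.
effective_batch_size = micro_batch_size * gradient_accumulation_steps * num_gpus
print(effective_batch_size)  # 32

# Assumption: standard LoRA scaling (alpha / r).
lora_r = 256
lora_alpha = 16
lora_scale = lora_alpha / lora_r
print(lora_scale)  # 0.0625
```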