|

--- |

|

license: apache-2.0 |

|

--- |

|

|

|

# LimaRP-Mistral-7B-v0.1 (Alpaca, 8-bit LoRA adapter) |

|

|

|

This is a version of LimaRP for [Mistral-7B-v0.1](https://huggingface.co/mistralai/Mistral-7B-v0.1) with |

|

about 1800 training samples _up to_ 4k tokens length. A 2-pass training procedure has been employed. The first pass includes |

|

finetuning on about 6800 stories within 4k tokens length and the second pass is LimaRP with changes introducing more effective |

|

control on response length. |

|

|

|

**Due to software limitations, finetuning didn't take advantage yet of the Sliding Window Attention (SWA) which would have allowed |

|

to use longer conversations in the training data. Thus, this version of LimaRP should be considered an _initial finetuning attempt_ and |

|

will be updated in the future.** |

|

|

|

For more details about LimaRP, see the model page for the [previously released v2 version for Llama-2](https://huggingface.co/lemonilia/limarp-llama2-v2). |

|

Most details written there apply for this version as well. Generally speaking, LimaRP is a longform-oriented, novel-style |

|

roleplaying chat model intended to replicate the experience of 1-on-1 roleplay on Internet forums. Short-form, |

|

IRC/Discord-style RP (aka "Markdown format") is not supported yet. The model does not include instruction tuning, |

|

only manually picked and slightly edited RP conversations with persona and scenario data. |

|

|

|

## Prompt format |

|

Same as before. It uses the [extended Alpaca format](https://github.com/tatsu-lab/stanford_alpaca), |

|

with `### Input:` immediately preceding user inputs and `### Response:` immediately preceding |

|

model outputs. While Alpaca wasn't originally intended for multi-turn responses, in practice this |

|

is not a problem; the format follows a pattern already used by other models. |

|

|

|

``` |

|

### Instruction: |

|

Character's Persona: {bot character description} |

|

|

|

User's Persona: {user character description} |

|

|

|

Scenario: {what happens in the story} |

|

|

|

Play the role of Character. You must engage in a roleplaying chat with User below this line. Do not write dialogues and narration for User. |

|

|

|

### Input: |

|

User: {utterance} |

|

|

|

### Response: |

|

Character: {utterance} |

|

|

|

### Input |

|

User: {utterance} |

|

|

|

### Response: |

|

Character: {utterance} |

|

|

|

(etc.) |

|

``` |

|

|

|

You should: |

|

- Replace all text in curly braces (curly braces included) with your own text. |

|

- Replace `User` and `Character` with appropriate names. |

|

|

|

|

|

### Message length control |

|

Inspired by the previously named "Roleplay" preset in SillyTavern, with this |

|

version of LimaRP it is possible to append a length modifier to the response instruction |

|

sequence, like this: |

|

|

|

``` |

|

### Input |

|

User: {utterance} |

|

|

|

### Response: (length = medium) |

|

Character: {utterance} |

|

``` |

|

|

|

This has an immediately noticeable effect on bot responses. The available lengths are: |

|

`tiny`, `short`, `medium`, `long`, `huge`, `humongous`, `extreme`, `unlimited`. **The |

|

recommended starting length is `medium`**. Keep in mind that the AI may ramble |

|

or impersonate the user with very long messages. |

|

|

|

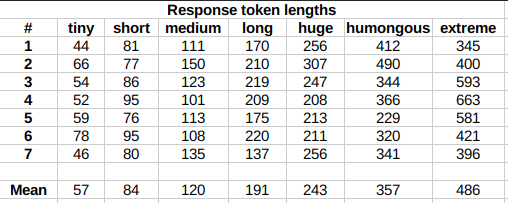

The length control effect is reproducible, but the messages will not necessarily follow |

|

lengths very precisely, rather follow certain ranges on average, as seen in this table |

|

with data from tests made with one reply at the beginning of the conversation: |

|

|

|

|

|

|

|

Response length control appears to work well also deep into the conversation. |

|

|

|

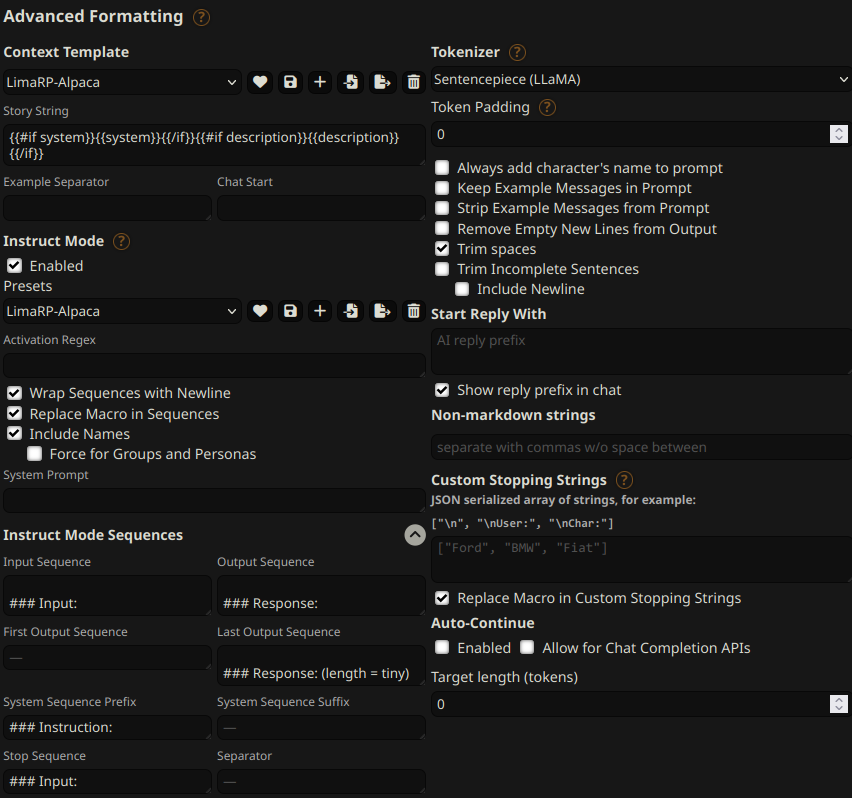

## Suggested settings |

|

You can follow these instruction format settings in SillyTavern. Replace `tiny` with |

|

your desired response length: |

|

|

|

|

|

|

|

## Text generation settings |

|

Extensive testing with Mistral has not been performed yet, but suggested starting text |

|

generation settings may be: |

|

|

|

- TFS = 0.90~0.95 |

|

- Temperature = 0.70~0.85 |

|

- Repetition penalty = 1.08~1.10 |

|

- top-k = 0 (disabled) |

|

- top-p = 1 (disabled) |

|

|

|

## Training procedure |

|

[Axolotl](https://github.com/OpenAccess-AI-Collective/axolotl) was used for training |

|

on a 4x NVidia A40 GPU cluster. |

|

|

|

The A40 GPU cluster has been graciously provided by [Arc Compute](https://www.arccompute.io/). |

|

|

|

The model has been trained as an 8-bit LoRA adapter, and |

|

it's so large because a LoRA rank of 256 was also used. The reasoning was that this |

|

might have helped the model internalize any newly acquired information, making the |

|

training process closer to a full finetune. It's suggested to merge the adapter to |

|

the base Mistral-7B-v0.1 model. |

|

|

|

### Training hyperparameters |

|

- learning_rate: 0.0001 |

|

- lr_scheduler_type: cosine |

|

- num_epochs: 2 (1 for the first pass) |

|

- sequence_len: 4096 |

|

- lora_r: 256 |

|

- lora_alpha: 16 |

|

- lora_dropout: 0.05 |

|

- lora_target_linear: True |

|

- bf16: True |

|

- fp16: false |

|

- tf32: True |

|

- load_in_8bit: True |

|

- adapter: lora |

|

- micro_batch_size: 2 |

|

- gradient_accumulation_steps: 1 |

|

- warmup_steps: 40 |

|

- optimizer: adamw_torch |

|

|

|

For the second pass, the `lora_model_dir` option was used to continue finetuning on the LoRA |

|

adapter obtained from the first pass. |

|

|

|

Using 2 GPUs, the effective global batch size would have been 8. |