MultiMed: Multilingual Medical Speech Recognition via Attention Encoder Decoder

Please refer to newer version which integrates ASR + MT models: https://huggingface.co/leduckhai/MultiMed-ST

ACL 2025

Khai Le-Duc, Phuc Phan, Tan-Hanh Pham, Bach Phan Tat,

Minh-Huong Ngo, Chris Ngo, Thanh Nguyen-Tang, Truong-Son Hy

Please press ⭐ button and/or cite papers if you feel helpful.

- Abstract:

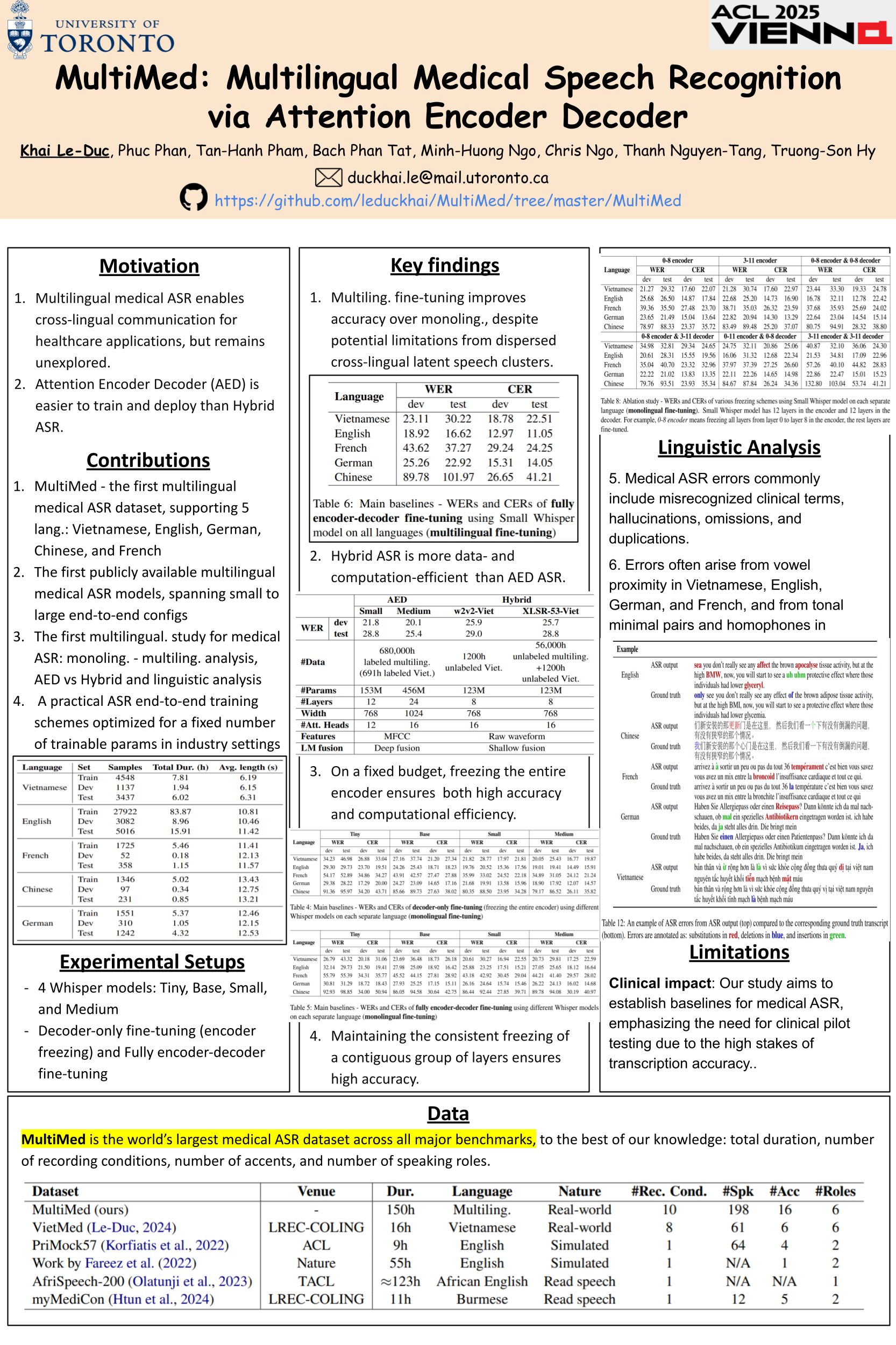

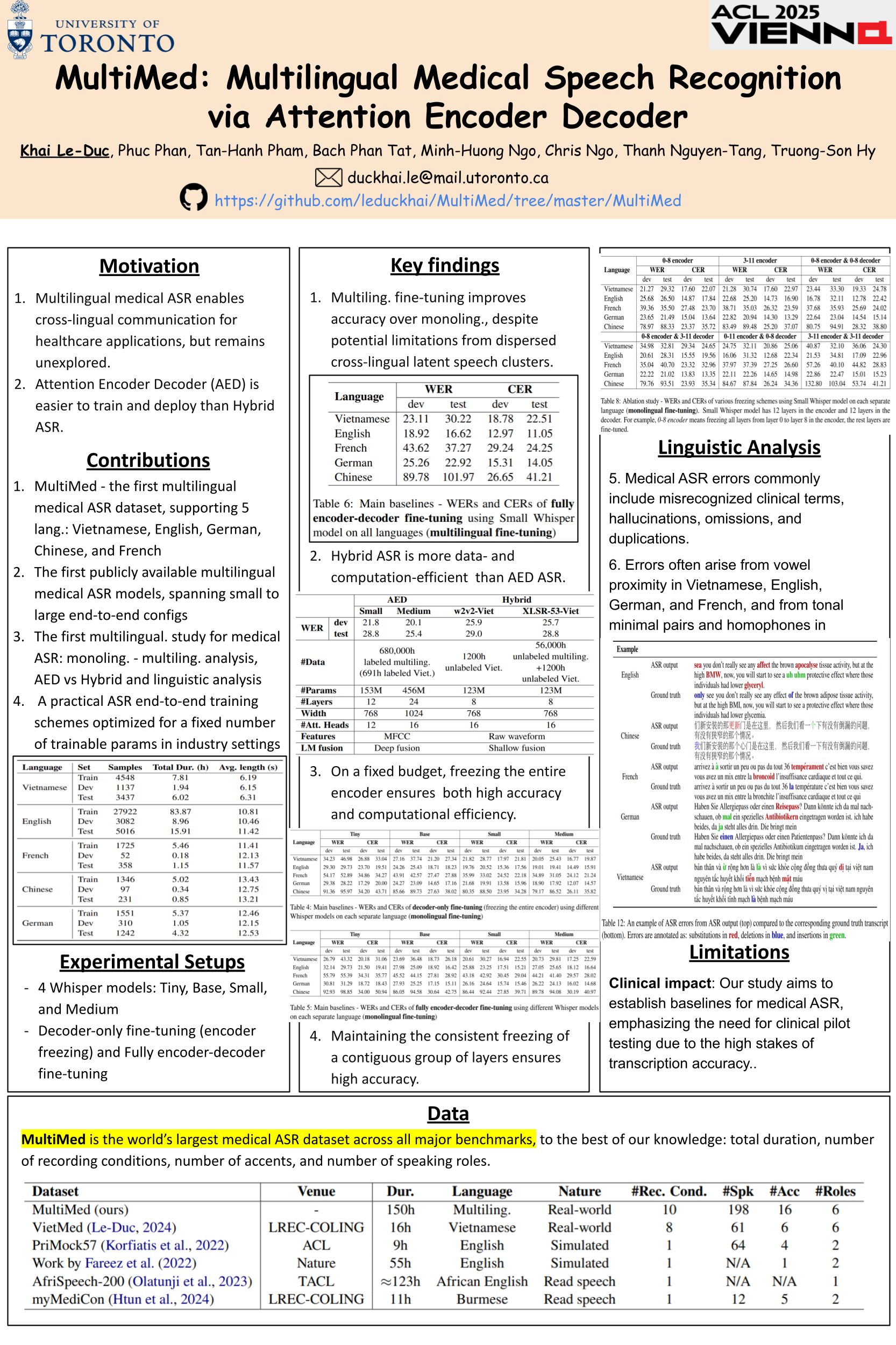

Multilingual automatic speech recognition (ASR) in the medical domain serves as a foundational task for various downstream applications such as speech translation, spoken language understanding, and voice-activated assistants. This technology improves patient care by enabling efficient communication across language barriers, alleviating specialized workforce shortages, and facilitating improved diagnosis and treatment, particularly during pandemics. In this work, we introduce MultiMed, the first multilingual medical ASR dataset, along with the first collection of small-to-large end-to-end medical ASR models, spanning five languages: Vietnamese, English, German, French, and Mandarin Chinese. To our best knowledge, MultiMed stands as the world’s largest medical ASR dataset across all major benchmarks: total duration, number of recording conditions, number of accents, and number of speaking roles. Furthermore, we present the first multilinguality study for medical ASR, which includes reproducible empirical baselines, a monolinguality-multilinguality analysis, Attention Encoder Decoder (AED) vs Hybrid comparative study and a linguistic analysis. We present practical ASR end-to-end training schemes optimized for a fixed number of trainable parameters that are common in industry settings. All code, data, and models are available online: https://github.com/leduckhai/MultiMed/tree/master/MultiMed.

- Citation:

Please cite this paper: https://arxiv.org/abs/2409.14074

@article{le2024multimed,

title={MultiMed: Multilingual Medical Speech Recognition via Attention Encoder Decoder},

author={Le-Duc, Khai and Phan, Phuc and Pham, Tan-Hanh and Tat, Bach Phan and Ngo, Minh-Huong and Ngo, Chris and Nguyen-Tang, Thanh and Hy, Truong-Son},

journal={arXiv preprint arXiv:2409.14074},

year={2024}

}

Dataset and Pre-trained Models:

Dataset: 🤗 HuggingFace dataset, Paperswithcodes dataset

Pre-trained models: 🤗 HuggingFace models

| Model Name |

Description |

Link |

Whisper-Small-Chinese |

Small model fine-tuned on medical Chinese set |

Hugging Face models |

Whisper-Small-English |

Small model fine-tuned on medical English set |

Hugging Face models |

Whisper-Small-French |

Small model fine-tuned on medical French set |

Hugging Face models |

Whisper-Small-German |

Small model fine-tuned on medical German set |

Hugging Face models |

Whisper-Small-Vietnamese |

Small model fine-tuned on medical Vietnamese set |

Hugging Face models |

Whisper-Small-Multilingual |

Small model fine-tuned on medical Multilingual set (5 languages) |

Hugging Face models |

Contact:

If any links are broken, please contact me for fixing!

Le Duc Khai

University of Toronto, Canada

Email: duckhai.le@mail.utoronto.ca

GitHub: https://github.com/leduckhai