Note: This page is currently under construction. See our official project page here: https://jmaasch.github.io/carc/

Overview

Reasoning requires adaptation to novel problem settings under limited data and distribution shift. This work introduces CausalARC: an experimental testbed for AI reasoning in low-data and out-of-distribution regimes, modeled after the Abstraction and Reasoning Corpus (ARC). Each CausalARC reasoning task is sampled from a fully specified causal world model, formally expressed as a structural causal model (SCM). Principled data augmentations provide observational, interventional, and counterfactual feedback about the world model in the form of few-shot, in-context learning demonstrations. As a proof of concept, we illustrate the use of CausalARC in four language model evaluation settings: (1) abstract reasoning with test-time training, (2) counterfactual reasoning with in-context learning, (3) program synthesis, and (4) causal discovery with logical reasoning.

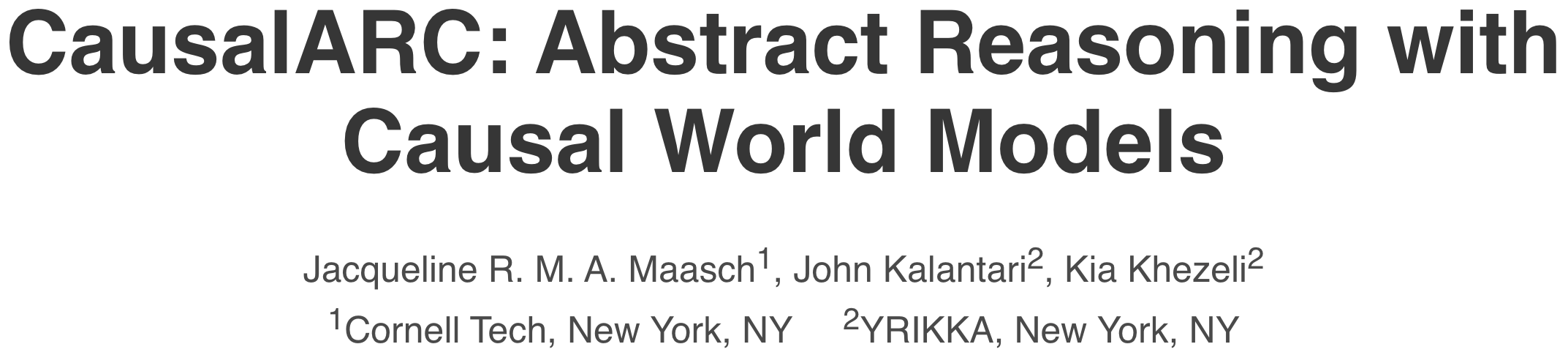

Pearl Causal Hierarchy: observing factual realities (L1), exerting actions to induce interventional realities (L2), and imagining alternate counterfactual realities (L3) [1]. Lower levels generally underdetermine higher levels.

This work extends and reconceptualizes the ARC setup to support causal reasoning evaluation under limited data and distribution shift. Given a fully specified SCM, all three levels of the Pearl Causal Hierarchy (PCH) are well-defined: any observational (L1), interventional (L2), or counterfactual (L3) query can be answered about the environment under study [2]. This formulation makes CausalARC an open-ended playground for testing reasoning hypotheses at all three levels of the PCH, with an emphasis on abstract, logical, and counterfactual reasoning.
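To make the three levels concrete, here is a minimal sketch of a toy SCM with two Boolean variables. It is not taken from the CausalARC codebase: the variable names, the XOR mechanism, and the helper functions `sample_exogenous` and `evaluate` are all assumptions chosen for illustration. The point is only that once the exogenous context and the mechanisms are fixed, observational, interventional, and counterfactual queries can each be answered by evaluation.

```python
import random


def sample_exogenous(rng):
    """Draw the exogenous context U (hypothetical noise terms)."""
    return {"u_x": rng.random() < 0.5, "u_y": rng.random() < 0.2}


def evaluate(u, do=None):
    """Evaluate the toy SCM under context u.

    `do` maps a variable name to a forced value, replacing that
    variable's mechanism (an intervention).
    """
    do = do or {}
    x = do.get("x", u["u_x"])          # x := u_x
    y = do.get("y", x ^ u["u_y"])      # y := x XOR u_y
    return {"x": x, "y": y}


rng = random.Random(0)

# L1 (observational): sample a context and evaluate the unmodified model.
u = sample_exogenous(rng)
factual = evaluate(u)

# L2 (interventional): fresh context, with x forced to True.
interventional = evaluate(sample_exogenous(rng), do={"x": True})

# L3 (counterfactual): same context u as the factual sample, x flipped.
counterfactual = evaluate(u, do={"x": not factual["x"]})

print(factual, interventional, counterfactual)
```

In this toy model, flipping `x` while holding `u` fixed always flips `y`, which is a statement about an individual unit that observational samples alone would not establish.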

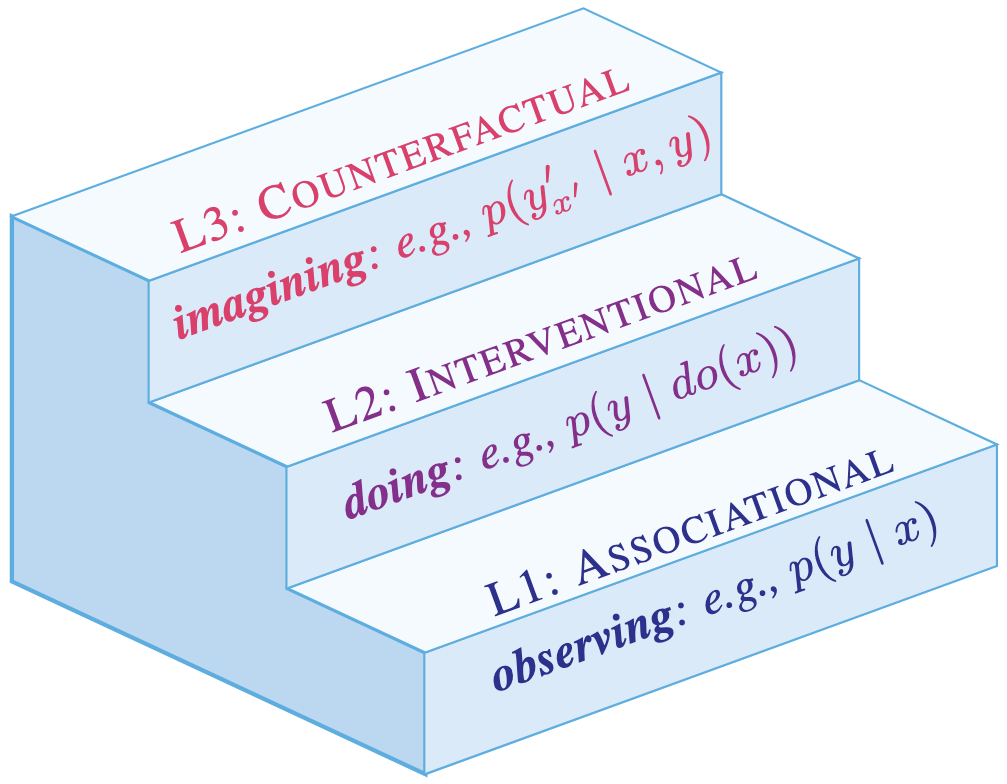

The CausalARC testbed. (A) First, SCM M is manually transcribed in Python code. (B) Input-output pairs are randomly sampled, providing observational (L1) learning signals about the world model. (C) Sampling from interventional submodels M' of M yields interventional (L2) samples (x', y'). Given pair (x, y), performing multiple interventions while holding the exogenous context constant yields a set of counterfactual (L3) pairs. (D) Using L1 and L3 pairs as in-context demonstrations, we can automatically generate natural language prompts for diverse reasoning tasks.

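The pipeline in the figure can be sketched end to end on a small grid. This is an illustrative assumption, not the project's actual code: the 3x3 grid size, the "propagate right" rule, the row intervention, and the names `sample_context`, `scm`, and `build_prompt` are all hypothetical stand-ins for steps (A) through (D).

```python
import random

SIZE = 3  # hypothetical grid size for this sketch


def sample_context(rng):
    """Exogenous context: one random bit per grid cell."""
    return [[rng.randint(0, 1) for _ in range(SIZE)] for _ in range(SIZE)]


def scm(u, do_row=None):
    """(A) A toy SCM written as code. Returns an (input, output) grid pair.

    The input grid copies the context unless `do_row` forces that row to
    all ones. Each output cell is the OR of the input cell and its left
    neighbor.
    """
    x = [row[:] for row in u]
    if do_row is not None:
        x[do_row] = [1] * SIZE
    y = [[x[i][j] | (x[i][j - 1] if j > 0 else 0) for j in range(SIZE)]
         for i in range(SIZE)]
    return x, y


def grid_to_text(grid):
    return "\n".join(" ".join(str(c) for c in row) for row in grid)


def build_prompt(demos, test_input):
    """(D) Format demonstration pairs and a test input as a text prompt."""
    parts = [
        f"Example {k}\nInput:\n{grid_to_text(x)}\nOutput:\n{grid_to_text(y)}"
        for k, (x, y) in enumerate(demos, 1)
    ]
    parts.append(f"Test\nInput:\n{grid_to_text(test_input)}\nOutput:")
    return "\n\n".join(parts)


rng = random.Random(0)
u = sample_context(rng)

l1_pair = scm(u)                                 # (B) observational pair
l3_pairs = [scm(u, do_row=r) for r in range(2)]  # (C) same context, two interventions
test_x, test_y = scm(sample_context(rng))        # held-out test pair

prompt = build_prompt([l1_pair] + l3_pairs, test_x)
print(prompt)
```

Because the counterfactual pairs share the context `u` with the observational pair, they differ from it only in the intervened row, which is what lets a solver attribute changes in the output to the intervention.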
