Dataset Description

- Homepage: https://ali-vilab.github.io/IDEA-Bench-Page

- Repository: https://github.com/ali-vilab/IDEA-Bench

- Paper: https://arxiv.org/abs/2412.11767

- Arena: https://huggingface.co/spaces/ali-vilab/IDEA-Bench-Arena

Dataset Overview

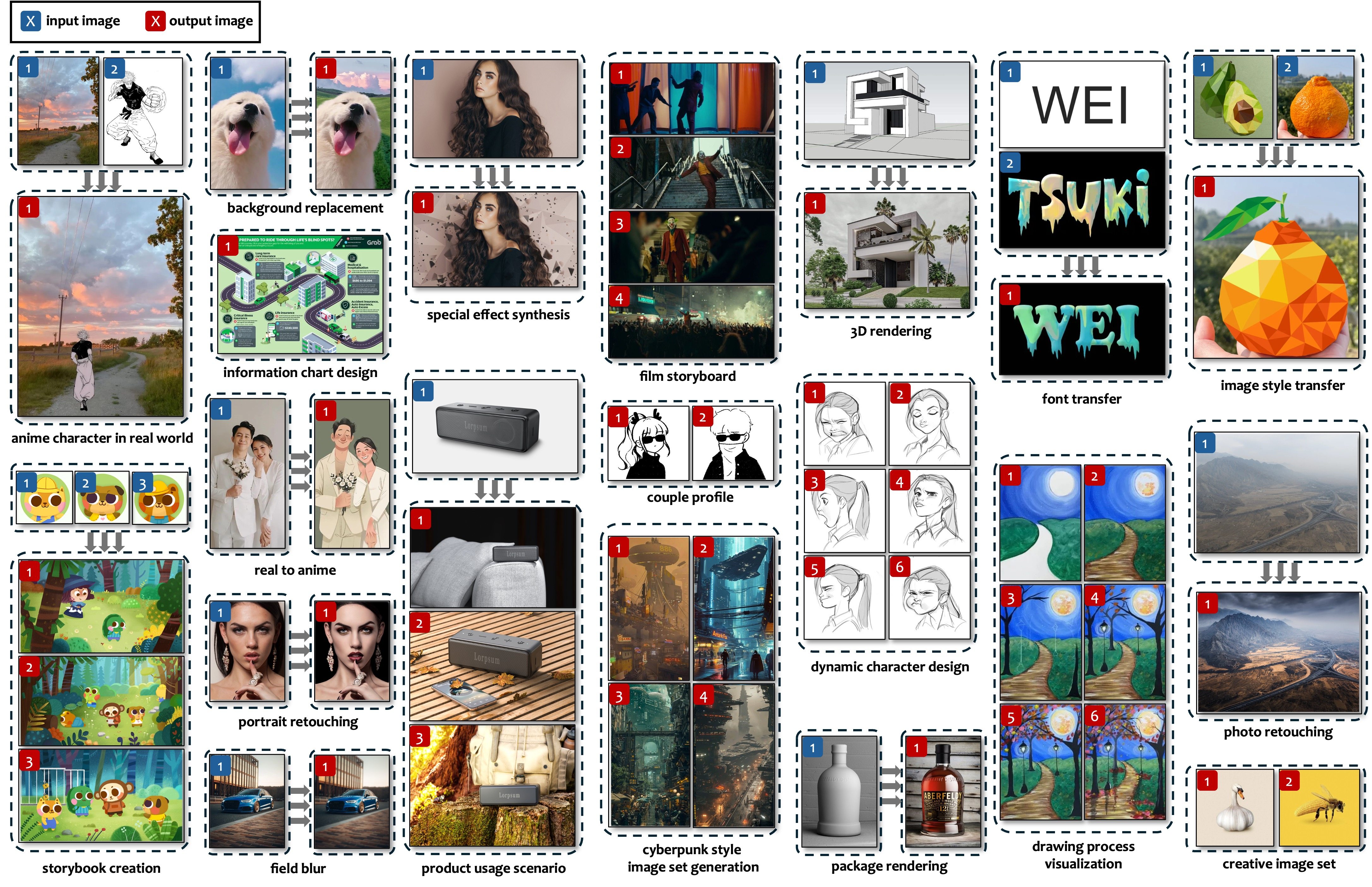

IDEA-Bench is a comprehensive benchmark designed to evaluate generative models' performance in professional design tasks. It includes 100 carefully selected tasks across five categories: text-to-image, image-to-image, images-to-image, text-to-images, and image(s)-to-images. These tasks encompass a wide range of applications, including storyboarding, visual effects, photo retouching, and more.

IDEA-Bench provides a robust framework for assessing models' capabilities through 275 test cases and 1,650 detailed evaluation criteria, aiming to bridge the gap between current generative model capabilities and professional-grade requirements.

Supported Tasks

The dataset supports the following tasks:

- Text-to-Image generation

- Image-to-Image transformation

- Images-to-Image synthesis

- Text-to-Images generation

- Image(s)-to-Images generation

Use Cases

IDEA-Bench is designed for evaluating generative models in professional-grade image design, testing capabilities such as consistency, contextual relevance, and multimodal integration. It is suitable for benchmarking advancements in text-to-image models, image editing tools, and general-purpose generative systems.

Dataset Format and Structure

Data Organization

The dataset is structured into 275 subdirectories, with each subdirectory representing a unique evaluation case. Each subdirectory contains the following components:

instruction.txt

A plain text file containing the prompt used for generating images in the evaluation case.meta.json

A JSON file providing metadata about the specific evaluation case. The structure ofmeta.jsonis as follows:{ "task_name": "special effect adding", "num_of_cases": 3, "image_reference": true, "multi_image_reference": true, "multi_image_output": false, "uid": "0085", "output_image_count": 1, "case_id": "0001" }- task_name: Name of the task.

- num_of_cases: The number of individual cases in the task.

- image_reference: Indicates if the task involves input reference images (true or false).

- multi_image_reference: Specifies if the task involves multiple input images (true or false).

- multi_image_output: Specifies if the task generates multiple output images (true or false).

- uid: Unique identifier for the task.

- output_image_count: Number of images expected as output.

- case_id: Identifier for this case.

Image FilesOptional .jpg files named in sequence (e.g., 0001.jpg, 0002.jpg) representing the input images for the case. Some cases may not include image files.eval.jsonA JSON file containing six evaluation questions, along with detailed scoring criteria. Example format:{ "questions": [ { "question": "Does the output image contain circular background elements similar to the second input image?", "0_point_standard": "The output image does not have circular background elements, or the background shape significantly deviates from the circular structure in the second input image.", "1_point_standard": "The output image contains a circular background element located behind the main subject's head, similar to the visual structure of the second input image. This circular element complements the subject's position, enhancing the composition effect." }, { "question": "Is the visual style of the output image consistent with the stylized effect in the second input image?", "0_point_standard": "The output image lacks the stylized graphic effects of the second input image, retaining too much photographic detail or having inconsistent visual effects.", "1_point_standard": "The output image adopts a graphic, simplified color style similar to the second input image, featuring bold, flat color areas with minimal shadow effects." }, ... ] }Each question includes:

- question: The evaluation query.

- 0_point_standard: Criteria for assigning a score of 0.

- 1_point_standard: Criteria for assigning a score of 1.

auto_eval.jsonlSome subdirectories contain anauto_eval.jsonlfile. This file is part of a subset specifically designed for automated evaluation using multimodal large language models (MLLMs). Each prompt in the file has been meticulously refined by annotators to ensure high quality and detail, enabling precise and reliable automated assessments.

Example case structure

For a task “special effect adding” with UID 0085, the folder structure may look like this:

special_effect_adding_0001/

├── 0001.jpg

├── 0002.jpg

├── 0003.jpg

├── instruction.txt

├── meta.json

├── eval.json

├── auto_eval.jsonl

Evaluation

Human Evaluation

The evaluation process for IDEA-Bench includes a rigorous human scoring system. Each case is assessed based on the corresponding eval.json file in its subdirectory. The file contains six binary evaluation questions, each with clearly defined 0-point and 1-point standards. The scoring process follows a hierarchical structure:

Hierarchical Scoring:

- If either Question 1 or Question 2 receives a score of 0, the remaining four questions (Questions 3–6) are automatically scored as 0.

- Similarly, if either Question 3 or Question 4 receives a score of 0, the last two questions (Questions 5 and 6) are scored as 0.

Task-Level Scores:

- Scores for cases sharing the same

uidare averaged to calculate the task score.

- Scores for cases sharing the same

Category and Final Scores:

- Certain tasks are grouped under professional-level categories, and their scores are consolidated as described in

task_split.json. - Final scores for the five major categories are obtained by averaging the task scores within each category.

- The overall model score is computed as the average of the five major category scores.

- Certain tasks are grouped under professional-level categories, and their scores are consolidated as described in

Scripts for score computation will be provided soon to streamline this process.

MLLM Evaluation

The automated evaluation leverages multimodal large language models (MLLMs) to assess a subset of cases equipped with finely tuned prompts in the auto_eval.jsonl files. These prompts have been meticulously refined by annotators to ensure detailed and accurate assessments. MLLMs evaluate the model outputs by interpreting the detailed questions and criteria provided in these prompts.

Further details about the MLLM evaluation process can be found in the IDEA-Bench GitHub repository. The repository includes additional resources and instructions for implementing automated evaluations.

These two complementary evaluation methods ensure that IDEA-Bench provides a comprehensive framework for assessing both human-aligned quality and automated model performance in professional-grade image generation tasks.

- Downloads last month

- 1,076