---
task_categories:
- question-answering
language:
- ms
tags:
- knowledge
pretty_name: MalayMMLU
size_categories:
- 10K<n<100K
configs:
- config_name: default
  data_files:
  - split: eval
    path:
    - MalayMMLU_0shot.json
    - MalayMMLU_1shot.json
    - MalayMMLU_2shot.json
    - MalayMMLU_3shot.json
---

MalayMMLU

Released on September 27, 2024

Introduction

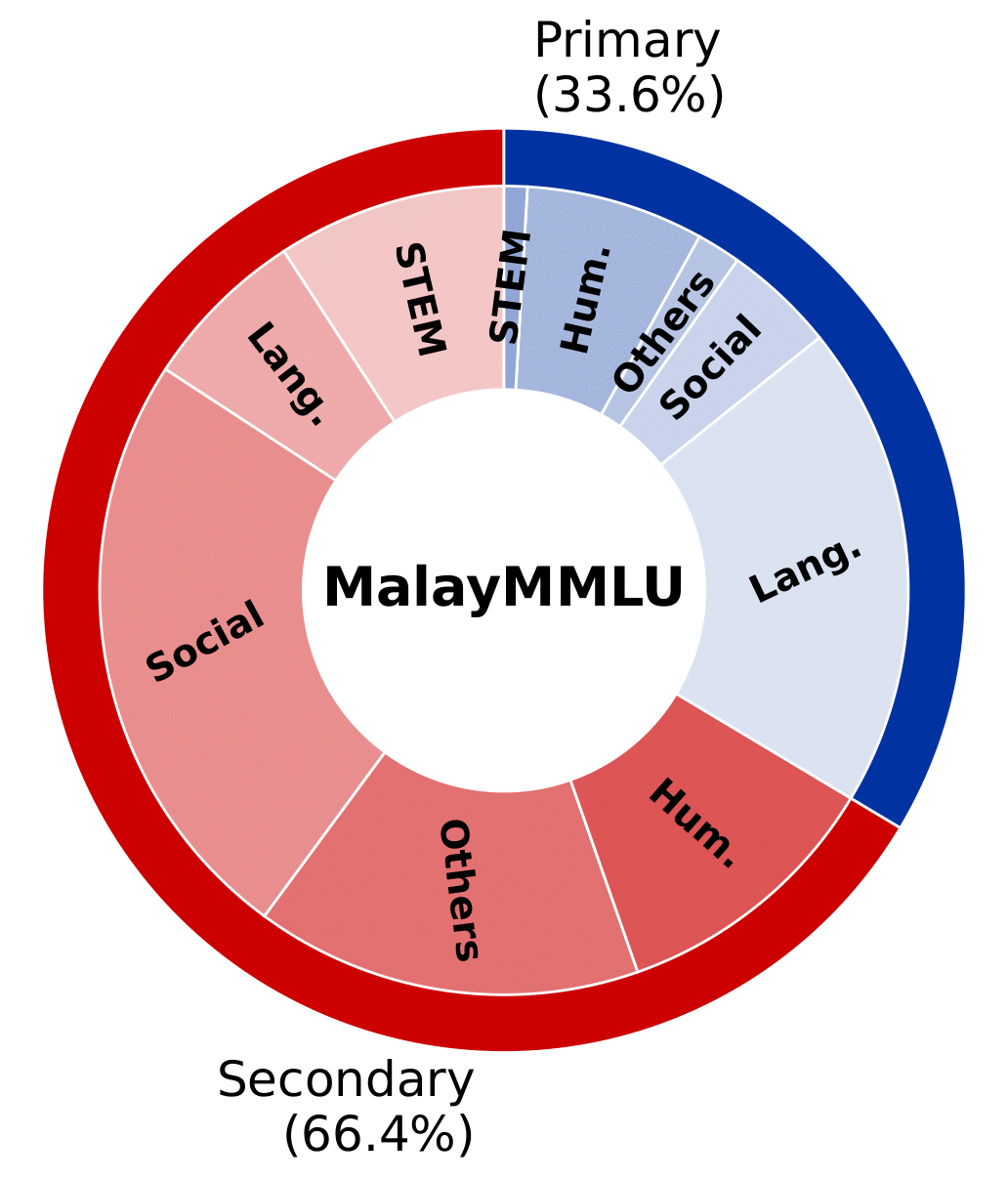

MalayMMLU is the first multitask language understanding (MLU) benchmark for the Malay language. It comprises 24,213 questions spanning primary (Year 1-6) and secondary (Form 1-5) education levels in Malaysia, organized into 5 broad categories that divide further into 22 subjects.

| Category | Subjects |
|---|---|
| STEM | Computer Science (Secondary), Biology (Secondary), Chemistry (Secondary), Computer Literacy (Secondary), Mathematics (Primary, Secondary), Additional Mathematics (Secondary), Design and Technology (Primary, Secondary), Core Science (Primary, Secondary), Information and Communication Technology (Primary), Automotive Technology (Secondary) |
| Language | Malay Language (Primary, Secondary) |
| Social Science | Geography (Secondary), Local Studies (Primary), History (Primary, Secondary) |
| Others | Life Skills (Primary, Secondary), Principles of Accounting (Secondary), Economics (Secondary), Business (Secondary), Agriculture (Secondary) |
| Humanities | Quran and Sunnah (Secondary), Islam (Primary, Secondary), Sports Science Knowledge (Secondary) |
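The metadata at the top of this card lists four data files, one per shot setting (`MalayMMLU_0shot.json` through `MalayMMLU_3shot.json`). The following is a minimal sketch of how one of them might be inspected with the Python standard library. The record layout is not documented in this card, so the helper names `load_records` and `summarize` are hypothetical, and the code only assumes that each file is a JSON array (or a JSON object whose values are the records).

```python
import json
from pathlib import Path


def load_records(path):
    """Read one MalayMMLU JSON file and return its records as a list.

    Assumption: the file holds either a JSON array of records or a
    JSON object whose values are the records. The real layout is not
    documented in this card.
    """
    with Path(path).open(encoding="utf-8") as handle:
        data = json.load(handle)
    if isinstance(data, dict):
        return list(data.values())
    return list(data)


def summarize(records):
    """Return the record count and the sorted field names of the first record."""
    first = records[0] if records else {}
    fields = sorted(first) if isinstance(first, dict) else []
    return len(records), fields


if __name__ == "__main__":
    # File name taken from the card metadata; the local path is an assumption.
    path = Path("MalayMMLU_0shot.json")
    if path.exists():
        count, fields = summarize(load_records(path))
        print(f"{count} records; fields: {fields}")
    else:
        print(f"{path} not found; download it from the dataset repository first")
```

Printing the field names first is a cheap way to learn the actual schema before writing any evaluation code against it.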

Results

Zero-shot results of LLMs on MalayMMLU (first-token accuracy, %)

| Organization | Model | Vision | Language | Humanities | STEM | Social Science | Others | Average |
|---|---|---|---|---|---|---|---|---|
| | Random | | 38.01 | 42.09 | 36.31 | 36.01 | 38.07 | 38.02 |
| YTL | Ilmu 0.1 | | 87.77 | 89.26 | 86.66 | 85.27 | 86.40 | 86.98 |
| OpenAI | GPT-4o | ✓ | 87.12 | 88.12 | 83.83 | 82.58 | 83.09 | 84.98 |
| OpenAI | GPT-4 | ✓ | 82.90 | 83.91 | 78.80 | 77.29 | 77.33 | 80.11 |
| OpenAI | GPT-4o mini | ✓ | 82.03 | 81.50 | 78.51 | 75.67 | 76.30 | 78.78 |
| OpenAI | GPT-3.5 | | 69.62 | 71.01 | 67.17 | 66.70 | 63.73 | 67.78 |
| Meta | LLaMA-3.1 (70B) | | 78.75 | 82.59 | 78.96 | 77.20 | 75.32 | 78.44 |
| Meta | LLaMA-3.3 (70B) | | 78.82 | 80.46 | 78.71 | 75.79 | 73.85 | 77.38 |
| Meta | LLaMA-3.1 (8B) | | 65.47 | 67.17 | 64.10 | 62.59 | 62.13 | 64.24 |
| Meta | LLaMA-3 (8B) | | 63.93 | 66.21 | 62.26 | 62.97 | 61.38 | 63.46 |
| Meta | LLaMA-2 (13B) | | 45.58 | 50.72 | 44.13 | 44.55 | 40.87 | 45.26 |
| Meta | LLaMA-2 (7B) | | 47.47 | 52.74 | 48.71 | 50.72 | 48.19 | 49.61 |
| Meta | LLaMA-3.2 (3B) | | 58.52 | 60.66 | 56.65 | 54.06 | 52.75 | 56.45 |
| Meta | LLaMA-3.2 (1B) | | 38.88 | 43.30 | 40.65 | 40.56 | 39.55 | 40.46 |
| Qwen (Alibaba) | Qwen-2.5 (72B) | | 79.09 | 79.95 | 80.88 | 75.80 | 75.05 | 77.79 |
| Qwen (Alibaba) | Qwen-2.5 (32B) | | 76.96 | 76.70 | 79.74 | 72.35 | 70.88 | 74.83 |
| Qwen (Alibaba) | Qwen-2-VL (7B) | ✓ | 68.16 | 63.62 | 67.58 | 60.38 | 59.08 | 63.49 |
| Qwen (Alibaba) | Qwen-2-VL (2B) | ✓ | 58.22 | 55.56 | 57.51 | 53.67 | 55.10 | 55.83 |
| Qwen (Alibaba) | Qwen-1.5 (14B) | | 64.47 | 60.64 | 61.97 | 57.66 | 58.05 | 60.47 |
| Qwen (Alibaba) | Qwen-1.5 (7B) | | 60.13 | 59.14 | 58.62 | 54.26 | 54.67 | 57.18 |
| Qwen (Alibaba) | Qwen-1.5 (4B) | | 48.39 | 52.01 | 51.37 | 50.00 | 49.10 | 49.93 |
| Qwen (Alibaba) | Qwen-1.5 (1.8B) | | 42.70 | 43.37 | 43.68 | 43.12 | 44.42 | 43.34 |
| Zhipu | GLM-4-Plus | | 78.04 | 75.63 | 77.49 | 74.07 | 72.66 | 75.48 |
| Zhipu | GLM-4-Air | | 67.88 | 69.56 | 70.20 | 66.06 | 66.18 | 67.60 |
| Zhipu | GLM-4-Flash | | 63.52 | 65.69 | 66.31 | 63.21 | 63.59 | 64.12 |
| Zhipu | GLM-4 | | 63.39 | 56.72 | 54.40 | 57.24 | 55.00 | 58.07 |
| Zhipu | GLM-4†† (9B) | | 58.51 | 60.48 | 56.32 | 55.04 | 53.97 | 56.87 |
| Google | Gemma-2 (9B) | | 75.83 | 72.83 | 75.07 | 69.72 | 70.33 | 72.51 |
| Google | Gemma (7B) | | 45.53 | 50.92 | 46.13 | 47.33 | 46.27 | 47.21 |
| Google | Gemma (2B) | | 46.50 | 51.15 | 49.20 | 48.06 | 48.79 | 48.46 |
| SAIL (Sea) | Sailor† (14B) | | 78.40 | 72.88 | 69.63 | 69.47 | 68.67 | 72.29 |
| SAIL (Sea) | Sailor† (7B) | | 74.54 | 68.62 | 62.79 | 64.69 | 63.61 | 67.58 |
| Mesolitica | MaLLaM-v2.5 Small‡ | | 73.00 | 71.00 | 70.00 | 72.00 | 70.00 | 71.53 |
| Mesolitica | MaLLaM-v2.5 Tiny‡ | | 67.00 | 66.00 | 68.00 | 69.00 | 66.00 | 67.32 |
| Mesolitica | MaLLaM-v2† (5B) | | 42.57 | 46.44 | 42.24 | 40.82 | 38.74 | 42.08 |
| Cohere for AI | Command R (32B) | | 71.68 | 71.49 | 66.68 | 67.19 | 63.64 | 68.47 |
| OpenGVLab | InternVL2 (40B) | ✓ | 70.36 | 68.49 | 64.88 | 65.93 | 60.54 | 66.51 |
| Damo (Alibaba) | SeaLLM-v2.5† (7B) | | 69.75 | 67.94 | 65.29 | 62.66 | 63.61 | 65.89 |
| Mistral | Pixtral (12B) | ✓ | 64.81 | 62.68 | 64.72 | 63.93 | 59.49 | 63.25 |
| Mistral | Mistral Small (22B) | | 65.19 | 65.03 | 63.36 | 61.58 | 59.99 | 63.05 |
| Mistral | Mistral-v0.3 (7B) | | 56.97 | 59.29 | 57.14 | 58.28 | 56.56 | 57.71 |
| Mistral | Mistral-v0.2 (7B) | | 56.23 | 59.86 | 57.10 | 56.65 | 55.22 | 56.92 |
| Microsoft | Phi-3 (14B) | | 60.07 | 58.89 | 60.91 | 58.73 | 55.24 | 58.72 |
| Microsoft | Phi-3 (3.8B) | | 52.24 | 55.52 | 54.81 | 53.70 | 51.74 | 53.43 |
| 01.AI | Yi-1.5 (9B) | | 56.20 | 53.36 | 57.47 | 50.53 | 49.75 | 53.08 |
| Stability AI | StableLM 2 (12B) | | 53.40 | 54.84 | 51.45 | 51.79 | 50.16 | 52.45 |
| Stability AI | StableLM 2 (1.6B) | | 43.92 | 51.10 | 45.27 | 46.14 | 46.75 | 46.48 |
| Baichuan | Baichuan-2 (7B) | | 40.41 | 47.35 | 44.37 | 46.33 | 43.54 | 44.30 |
| Yellow.ai | Komodo† (7B) | | 43.62 | 45.53 | 39.34 | 39.75 | 39.48 | 41.72 |
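The scores above are reported as first-token accuracy. This card does not spell out the exact procedure, so the following is only a sketch of one plausible reading: the first non-whitespace character of a model's output is treated as its chosen option and compared with the answer key. The function names, the set of option letters, and the sample outputs are all assumptions made for illustration, not the authors' evaluation code.

```python
def first_token_choice(output, choices=("A", "B", "C", "D", "E")):
    """Return the option letter at the start of a model output, or None.

    Assumption: the "first token" is approximated by the first
    non-whitespace character of the generated text, and the option
    letters are hypothetical.
    """
    stripped = output.strip()
    if not stripped:
        return None
    letter = stripped[0].upper()
    return letter if letter in choices else None


def first_token_accuracy(outputs, answers):
    """Percentage of outputs whose first-token choice equals the gold answer."""
    if len(outputs) != len(answers):
        raise ValueError("outputs and answers must have the same length")
    if not answers:
        return 0.0
    correct = sum(
        first_token_choice(out) == gold.strip().upper()
        for out, gold in zip(outputs, answers)
    )
    return 100.0 * correct / len(answers)


if __name__ == "__main__":
    # Made-up outputs and answer keys, for illustration only.
    outputs = ["A. Kuala Lumpur", " b", "Jawapan: C", "D"]
    answers = ["A", "B", "C", "A"]
    print(f"{first_token_accuracy(outputs, answers):.2f}")  # prints 50.00
```

Under this reading, an output that opens with anything other than an option letter (such as "Jawapan: C") counts as wrong even if the correct letter appears later, which is why this metric tends to penalize models that do not answer in the expected format.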

Citation

@InProceedings{MalayMMLU2024,
    author    = {Poh, Soon Chang and Yang, Sze Jue and Tan, Jeraelyn Ming Li and Chieng, Lawrence Leroy Tze Yao and Tan, Jia Xuan and Yu, Zhenyu and Foong, Chee Mun and Chan, Chee Seng},
    title     = {MalayMMLU: A Multitask Benchmark for the Low-Resource Malay Language},
    booktitle = {Findings of the Association for Computational Linguistics: EMNLP 2024},
    month     = {November},
    year      = {2024},
}

Feedback

Suggestions and opinions, both positive and negative, are very welcome. Please contact the author by email at cs.chan at um.edu.my.