Dataset Card for Speech Wikimedia

Dataset Description

- Point of Contact: [email protected]

Dataset Summary

The Speech Wikimedia Dataset is a compilation of audio files with transcriptions extracted from Wikimedia Commons, licensed for academic and commercial use under Creative Commons and Public Domain licenses. It includes more than 2,000 hours of transcribed speech in different languages from a diverse set of speakers. Each audio file has one or more transcriptions, which may be in different languages.

Transcription languages

- English

- German

- Dutch

- Arabic

- Hindi

- Portuguese

- Spanish

- Polish

- French

- Russian

- Esperanto

- Swedish

- Korean

- Bengali

- Hungarian

- Oriya

- Thai

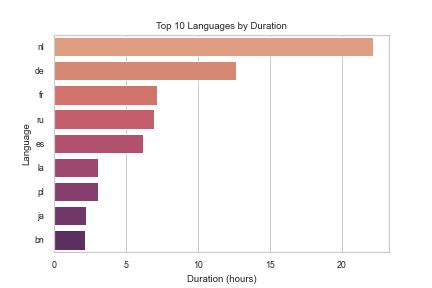

Hours of Audio for each language

We determine the amount of data available for ASR by extracting the total duration of audio for which we also have a transcription in the same language. We present the duration for the top 10 languages other than English (for English, we have a total of 1,488 hours of audio):

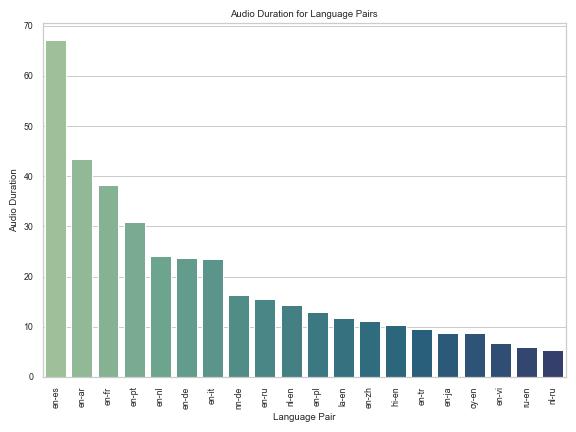
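The per-language totals can be illustrated with a short tally. This is a minimal sketch, not the script behind the numbers above: it assumes a hypothetical `AudioRecord` holding each file's duration in seconds, the language spoken in it, and the languages of its transcripts. The card does not say how these languages are determined, so filling in these fields is left open.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class AudioRecord:
    # Hypothetical per-file record; these fields are not stored in the dataset as-is.
    duration_s: float
    spoken_lang: str
    transcript_langs: list[str] = field(default_factory=list)


def asr_hours_by_language(records: list[AudioRecord]) -> dict[str, float]:
    """Sum hours per language, counting a file only if one of its
    transcripts is in the language actually spoken (i.e. usable for ASR)."""
    seconds: dict[str, float] = defaultdict(float)
    for rec in records:
        if rec.spoken_lang in rec.transcript_langs:
            seconds[rec.spoken_lang] += rec.duration_s
    return {lang: total / 3600 for lang, total in seconds.items()}


records = [
    AudioRecord(3600, "en", ["en"]),
    AudioRecord(1800, "en", ["en", "de"]),
    AudioRecord(7200, "de", ["en"]),  # no German transcript, so not counted for German ASR
]
print(asr_hours_by_language(records))  # {'en': 1.5}
```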

Hours of language pairs for speech translation

Some audio files in our dataset have more than one transcription, each in a different language. In total, we have 628 hours of audio with transcripts in more than one language. We present the hours of audio for the 20 most common language pairs.
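A similar tally gives hours per language pair. The sketch below assumes a hypothetical mapping from each audio file name to its duration in seconds and the languages of its transcripts; pairs are treated as unordered, so a file with English and German transcripts counts once toward ("de", "en").

```python
from collections import Counter
from itertools import combinations


def pair_hours(files: dict[str, tuple[float, list[str]]]) -> Counter:
    """Hours of audio per unordered pair of transcript languages."""
    seconds: Counter = Counter()
    for duration_s, langs in files.values():
        # sorted(set(...)) removes duplicates and makes ("de", "en") and
        # ("en", "de") the same key
        for pair in combinations(sorted(set(langs)), 2):
            seconds[pair] += duration_s
    return Counter({pair: total / 3600 for pair, total in seconds.items()})


# Hypothetical input: file name -> (duration in seconds, transcript languages)
files = {
    "a.flac": (3600.0, ["en", "de"]),
    "b.flac": (1800.0, ["de", "en", "fr"]),
    "c.flac": (900.0, ["en"]),  # a single transcript yields no pair
}
for pair, hours in pair_hours(files).most_common(20):
    print(pair, hours)
```

Because a file with transcripts in three languages contributes to three pairs, the pair totals can add up to more than the overall amount of multi-transcript audio.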

Dataset Structure

audios

Folder containing the audio files in FLAC format, with a sample rate of 16,000 Hz.
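Since the audio is stored as FLAC, the sample rate and duration of a file can be checked without decoding it, by reading the STREAMINFO metadata block that the FLAC format places first in every stream. The following is a minimal, stdlib-only sketch; it assumes each file begins directly with the `fLaC` marker (as files written by ffmpeg normally do), and a real pipeline would more likely use an audio library. `parse_streaminfo` and `read_flac_info` are hypothetical helper names.

```python
def parse_streaminfo(data: bytes) -> dict:
    """Read audio properties from the first 42 bytes of a FLAC stream:
    the 4-byte "fLaC" marker, a 4-byte metadata block header, and the
    34-byte STREAMINFO body."""
    if data[:4] != b"fLaC":
        raise ValueError("not a FLAC stream (no fLaC marker)")
    if (data[4] & 0x7F) != 0:  # low 7 bits of the header byte = block type
        raise ValueError("first metadata block is not STREAMINFO")
    info = data[8:42]
    if len(info) < 34:
        raise ValueError("truncated STREAMINFO block")
    # Bytes 10..17 of STREAMINFO pack, big-endian: sample rate (20 bits),
    # channels - 1 (3 bits), bits per sample - 1 (5 bits), total samples (36 bits).
    packed = int.from_bytes(info[10:18], "big")
    sample_rate = packed >> 44
    total_samples = packed & ((1 << 36) - 1)
    return {
        "sample_rate": sample_rate,
        "channels": ((packed >> 41) & 0x7) + 1,
        "bits_per_sample": ((packed >> 36) & 0x1F) + 1,
        # A total of 0 means "unknown"; the duration is then reported as 0.0.
        "duration_s": total_samples / sample_rate if sample_rate else 0.0,
    }


def read_flac_info(path: str) -> dict:
    with open(path, "rb") as f:
        return parse_streaminfo(f.read(42))
```

For files in this dataset, `sample_rate` should come out as 16000, and summing `duration_s` over files gives total audio duration.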

transcription and transcription2

Folders containing the transcriptions in SRT format.

The transcriptions are split into two directories because Hugging Face does not support more than 10,000 files in a single directory.
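The transcripts use the SubRip (SRT) layout: numbered cues separated by blank lines, each with a `start --> end` timestamp line followed by the text. A minimal parser sketch follows; `Cue`, `parse_srt`, and `load_srt` are hypothetical names, and the UTF-8 encoding in `load_srt` is an assumption, since the card does not state the encoding.

```python
import re
from dataclasses import dataclass
from pathlib import Path

# "HH:MM:SS,mmm --> HH:MM:SS,mmm"; a dot is also accepted as the decimal separator.
TIMESTAMP = re.compile(
    r"(\d+):(\d{2}):(\d{2})[,.](\d{3})\s*-->\s*(\d+):(\d{2}):(\d{2})[,.](\d{3})"
)


@dataclass
class Cue:
    start_s: float
    end_s: float
    text: str


def _to_seconds(h: str, m: str, s: str, ms: str) -> float:
    return int(h) * 3600 + int(m) * 60 + int(s) + int(ms) / 1000


def parse_srt(content: str) -> list[Cue]:
    """Split SRT text into cues; blocks without a timestamp line are skipped."""
    cues = []
    content = content.lstrip("\ufeff").replace("\r\n", "\n").strip()
    for block in re.split(r"\n\s*\n", content):
        lines = block.split("\n")
        for i, line in enumerate(lines):
            match = TIMESTAMP.search(line)
            if match:
                g = match.groups()
                # Join multi-line cue text into one line for transcript use.
                text = " ".join(t.strip() for t in lines[i + 1:] if t.strip())
                cues.append(Cue(_to_seconds(*g[:4]), _to_seconds(*g[4:]), text))
                break
    return cues


def load_srt(path: str) -> list[Cue]:
    # Encoding is an assumption; "utf-8-sig" also tolerates a leading BOM.
    return parse_srt(Path(path).read_text(encoding="utf-8-sig"))


sample = "1\n00:00:01,000 --> 00:00:04,500\nHello and welcome\n\n2\n00:00:05,000 --> 00:00:07,250\nto the lecture.\n"
print(" ".join(cue.text for cue in parse_srt(sample)))  # Hello and welcome to the lecture.
```

Since no forced alignment or segmentation was applied to this dataset, the cue timestamps are presumably those supplied with the original transcripts.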

real_correspondence.json

A single large JSON dictionary describing the relationship between audio files and transcriptions.

Each key is the name of an audio file in the "reformat" directory, and each value is the list of corresponding transcript files, each located in either the transcription or the transcription2 directory.

license.json

File with license information. The key is the name of the original audio file on Wikimedia Commons.
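To summarize license.json, for example to count source files per license or to build attribution strings, a sketch like the following could be used. It assumes the file is valid JSON keyed by Commons file name, with the `author` and `license` fields seen in the excerpt under Data License; that excerpt is printed in Python-literal style, so the exact on-disk format is an assumption. The function names are hypothetical.

```python
import json
from collections import Counter


def load_licenses(path: str) -> dict:
    with open(path, encoding="utf-8") as f:
        return json.load(f)


def license_counts(licenses: dict) -> Counter:
    """Number of source files per value of the 'license' field."""
    return Counter(entry.get("license", "unknown") for entry in licenses.values())


def attribution(file_name: str, entry: dict) -> str:
    """One-line credit for a source file. Field values in the excerpt
    start with a newline, so surrounding whitespace is stripped."""
    author = entry.get("author", "").strip() or "unknown author"
    return f"{file_name}, by {author}, {entry.get('license', 'unknown license')}"
```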

Data License

Here is an excerpt from license.json:

'"Berlin Wall" Speech - President Reagan's Address at the Brandenburg Gate - 6-12-87.webm': {'author': '\nReaganFoundation', 'source': '\n<a href="/wiki/Commons:YouTube_files" [...], 'html_license': '['<table class="layouttemplate mw-content-ltr" lang="en" style="width:100%; [...], 'license': 'Public Domain'},

Dataset Creation

Source Data

Initial Data Collection and Normalization

Data was downloaded from https://commons.wikimedia.org/.

Preprocessing

Because most of the original files were videos, we converted them to FLAC format with a sample rate of 16,000 Hz using ffmpeg.
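The card does not give the exact ffmpeg invocation. A plausible sketch using standard ffmpeg options (`-vn` to drop video, `-ar` to set the audio sample rate, `-c:a flac` to select the FLAC encoder) is shown below. `ffmpeg_command` and `convert` are hypothetical helpers, and the channel count is left untouched because the card does not say whether audio was downmixed to mono.

```python
import subprocess
from pathlib import Path

TARGET_RATE = 16_000  # sample rate stated in this card


def ffmpeg_command(src: Path, dst: Path) -> list[str]:
    """Build an ffmpeg call that ignores video and writes FLAC audio at 16 kHz."""
    return [
        "ffmpeg",
        "-y",                     # overwrite dst if it already exists
        "-i", str(src),
        "-vn",                    # drop any video stream
        "-ar", str(TARGET_RATE),  # resample the audio
        "-c:a", "flac",           # encode the audio as FLAC
        str(dst),
    ]


def convert(src: Path, dst: Path) -> None:
    # Raises CalledProcessError if ffmpeg exits with a non-zero status.
    subprocess.run(ffmpeg_command(src, dst), check=True, capture_output=True)
```

Building the argument list separately from running it keeps the command easy to inspect or log before converting thousands of files.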

Annotations

Annotation process

No manual annotation was done. We downloaded only source audio that already had transcripts.

In particular, no forced alignment or segmentation was performed on this dataset.

Personal and Sensitive Information

Several of our sources are legal and government proceedings, spoken stories, speeches, and similar material. Since these were intended as public documents and licensed as such, it is reasonable to assume that the individuals involved are aware of this.

Considerations for Using the Data

Discussion of Biases

Our data was downloaded from commons.wikimedia.org. As such, it is biased toward whatever content users decide to upload there.

The data is also mostly English. However, because several audio files have more than one transcription, it has potential for multitask learning.

Additional Information

Licensing Information

The source data is available under Public Domain and Creative Commons licenses.

We license this dataset under CC BY-SA 4.0 (https://creativecommons.org/licenses/by-sa/4.0/).

The appropriate attributions are in license.json.