---
license: other
---

This is an attempt to build a Yi-based Mixture-of-Experts (MoE) chat model with a 200K-token context length.

[GGUF 4-bit is here](https://huggingface.co/cloudyu/Yi-34Bx2-MOE-200K-gguf)

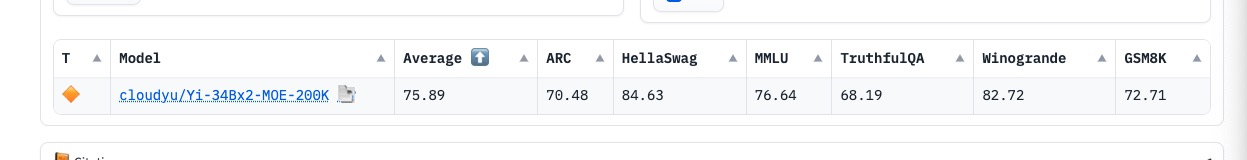

Metrics:

Code example:

```python
import torch
from transformers import AutoTokenizer, AutoModelForCausalLM

model_path = "cloudyu/Yi-34Bx2-MOE-200K"

tokenizer = AutoTokenizer.from_pretrained(model_path, use_default_system_prompt=False)

# Load the weights quantized to 4 bits (requires the bitsandbytes package);
# device_map="auto" places the layers on the available devices.
model = AutoModelForCausalLM.from_pretrained(
    model_path,
    torch_dtype=torch.float32,
    device_map="auto",
    local_files_only=False,
    load_in_4bit=True,
)
print(model)

# Simple interactive loop: entering an empty prompt exits.
prompt = input("please input prompt:")
while len(prompt) > 0:
    input_ids = tokenizer(prompt, return_tensors="pt").input_ids.to("cuda")
    generation_output = model.generate(
        input_ids=input_ids, max_new_tokens=500, repetition_penalty=1.2
    )
    # The decoded text includes the original prompt followed by the completion.
    print(tokenizer.decode(generation_output[0]))
    prompt = input("please input prompt:")
```
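The example loads the model with `load_in_4bit=True`. As a rough illustration of why quantization matters at this scale, the sketch below estimates the memory needed for the weights alone at different precisions. The parameter count is a hypothetical round figure of about 60 billion, not a number stated in this card, and the estimate ignores quantization overhead, activations, and the KV cache (which grows with context length).

```python
def weight_memory_gib(num_params: float, bits_per_param: float) -> float:
    """Approximate memory for the weights alone, in GiB."""
    return num_params * bits_per_param / 8 / 1024**3


# Hypothetical parameter count (~60 billion); an assumption, not taken from this card.
ASSUMED_PARAMS = 60e9

for label, bits in [("float32", 32), ("float16/bfloat16", 16), ("4-bit", 4)]:
    print(f"{label:>17}: ~{weight_memory_gib(ASSUMED_PARAMS, bits):.0f} GiB")
```

Under that assumption the weights need on the order of 28 GiB at 4 bits versus about 224 GiB at float32, so the 4-bit setting is what makes loading on a small number of GPUs plausible.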