gguf quantized version of HiDream-i1-Full (incl. full + dev + fast + e1 + e1-1)

- full set gguf works right away (all gguf: model + encoder + vae)

- upgrade your node (pypi | repo | pack) for model support

setup

- drag hidream to > ./ComfyUI/models/diffusion_models

- drag encoder: g, l, t5xxl and llama to > ./ComfyUI/models/text_encoders

- drag pig to > ./ComfyUI/models/vae
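
If you prefer a script over dragging files by hand, the placement above could be sketched roughly as follows. This is only an illustration: the filename keywords in `RULES` and the helper names `target_subdir` and `place_files` are assumptions made for this example and are not part of the repo or of ComfyUI, so adjust them to the file names you actually downloaded.

```python
from pathlib import Path
import shutil

# Assumed filename keywords (hypothetical); real file names may differ.
RULES = [
    ("hidream", "diffusion_models"),  # model
    ("pig", "vae"),                   # vae
    ("clip_g", "text_encoders"),      # encoder: g
    ("clip_l", "text_encoders"),      # encoder: l
    ("t5xxl", "text_encoders"),       # encoder: t5xxl
    ("llama", "text_encoders"),       # encoder: llama
]


def target_subdir(filename):
    """Return the ComfyUI models subfolder for a file, or None if unknown."""
    name = filename.lower()
    for keyword, subdir in RULES:
        if keyword in name:
            return subdir
    return None


def place_files(download_dir, comfy_root="./ComfyUI"):
    """Move recognised .gguf/.safetensors files into <comfy_root>/models/<subdir>."""
    moved = []
    for path in sorted(Path(download_dir).iterdir()):
        if path.suffix not in {".gguf", ".safetensors"}:
            continue
        subdir = target_subdir(path.name)
        if subdir is None:
            continue  # leave unrecognised files where they are
        dest = Path(comfy_root) / "models" / subdir
        dest.mkdir(parents=True, exist_ok=True)
        shutil.move(str(path), str(dest / path.name))
        moved.append((path.name, subdir))
    return moved
```

Calling `place_files("./downloads")` would move any matching files and return a list of `(filename, subfolder)` pairs, which makes it easy to check where each file ended up before starting ComfyUI.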

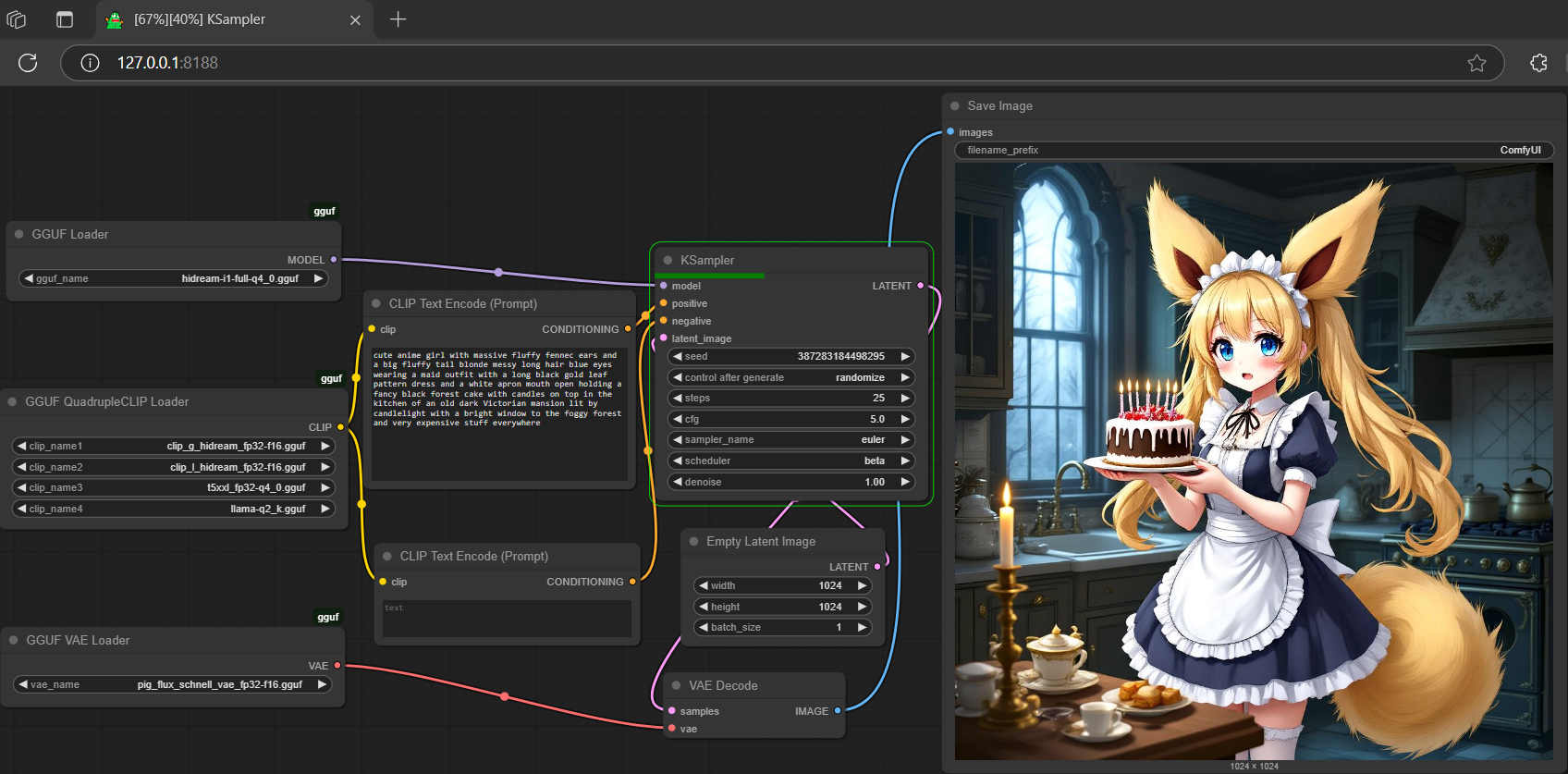

workflow

- drag the json file or demo picture below for example workflow

- prompt used for the demo pictures: cute anime girl with massive fluffy fennec ears and a big fluffy tail blonde messy long hair blue eyes wearing a maid outfit with a long black gold leaf pattern dress and a white apron mouth open holding a fancy black forest cake with candles on top in the kitchen of an old dark Victorian mansion lit by candlelight with a bright window to the foggy forest and very expensive stuff everywhere

- full (label is info only, don't copy this)

- fast (same prompt as full)

- dev (same prompt as full)

- 1-clip (llama only)

- 2-clip (llama + t5xxl)

reference

- base model from hidream-ai

- get safetensors from comfy-org

- get more t5xxl-encoder gguf here

- get more llama3.1-8b-encoder gguf here

- base model of calcuis/hidream-gguf: HiDream-ai/HiDream-I1-Full