[VLDB' 25] ChatTS-14B-0801 Model

[VLDB' 25] ChatTS: Aligning Time Series with LLMs via Synthetic Data for Enhanced Understanding and Reasoning

ChatTS focuses on understanding and reasoning about time series, much as vision, video, and audio MLLMs do for their own modalities.

This repo provides the code, datasets, and model for ChatTS: Aligning Time Series with LLMs via Synthetic Data for Enhanced Understanding and Reasoning.

Web Demo

The Web Demo of ChatTS-14B-0801 is available at HuggingFace Spaces:

Key Features

ChatTS is a Multimodal LLM built natively for time series as a core modality:

- ✅ Native support for multivariate time series

- ✅ Flexible input: supports multivariate time series with different lengths and flexible dimensionality

- ✅ Conversational understanding + reasoning: enables interactive dialogue over time series to explore insights

- ✅ Preserves raw numerical values: can answer statistical questions, such as "How large is the spike at timestamp t?"

- ✅ Easy integration with existing LLM pipelines, including support for vLLM
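Several series of different lengths can go into a single prompt. The sketch below is an assumption extrapolated from the usage example: it supposes that each `<ts><ts/>` placeholder is matched, in order, to one entry of the `timeseries` list passed to the processor. `build_prompt` is a hypothetical helper, not part of ChatTS.

```python
import numpy as np

TS_PLACEHOLDER = "<ts><ts/>"  # time series placeholder, as used in the usage example


def build_prompt(question: str, series: list) -> str:
    """Hypothetical helper (not a ChatTS API): one placeholder per series, in order."""
    descriptions = [
        f"Series {i + 1} has length {len(ts)}: {TS_PLACEHOLDER}."
        for i, ts in enumerate(series)
    ]
    user_text = " ".join(descriptions) + " " + question
    # Same hand-written ChatML layout as the usage example
    return (
        "<|im_start|>system\nYou are a helpful assistant.<|im_end|>"
        f"<|im_start|>user\n{user_text}<|im_end|><|im_start|>assistant\n"
    )


# Two series with different lengths (deterministic toy data)
cpu = np.sin(np.arange(128) / 8)
latency = np.linspace(0.0, 1.0, 300)
prompt = build_prompt("Do the two series change at the same time?", [cpu, latency])
print(prompt)
```

Under that assumption, the matching processor call would pass `timeseries=[cpu, latency]`, keeping the list in the same order as the placeholders in the prompt.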

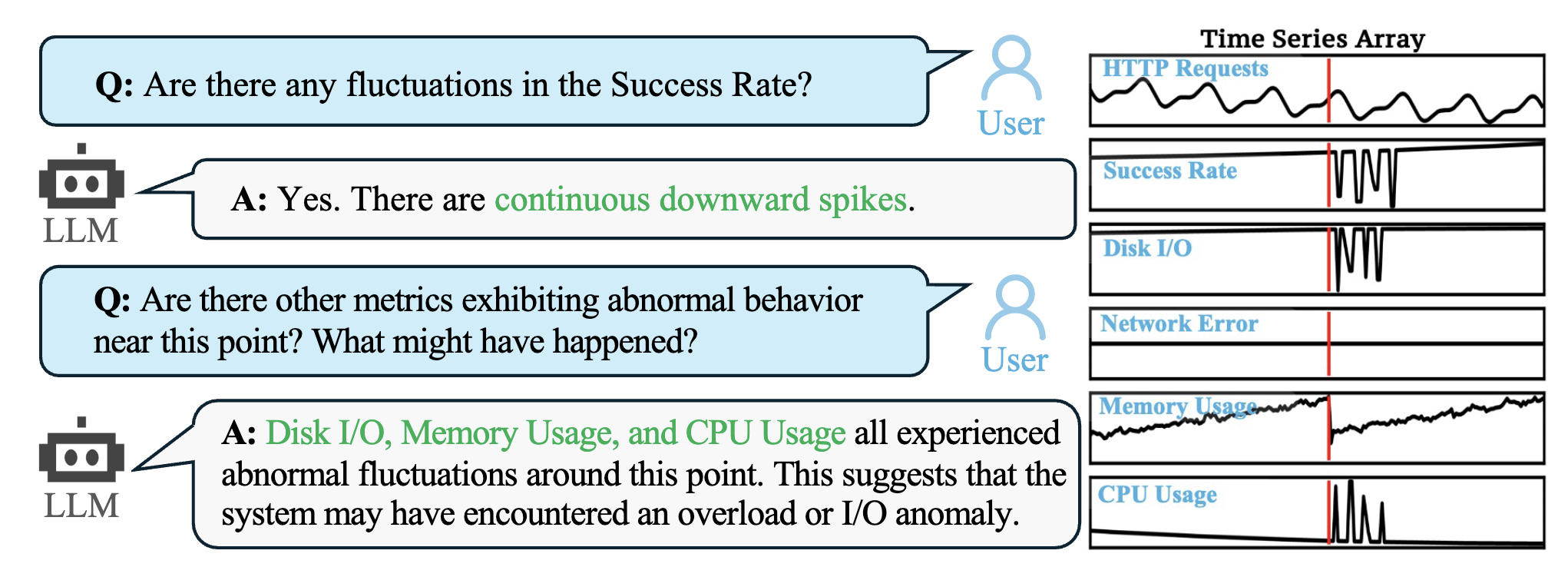

Example Application

Here is an example of a ChatTS application, which allows users to interact with an LLM to understand and reason about time series data:

Usage

- This model is fine-tuned from the QWen2.5-14B-Instruct (https://huggingface.co/Qwen/Qwen2.5-14B-Instruct) model. For more usage details, please refer to the README.md in the ChatTS repository.

- An example usage of ChatTS (with HuggingFace transformers):

from transformers import AutoModelForCausalLM, AutoTokenizer, AutoProcessor
import torch
import numpy as np

hf_model = "bytedance-research/ChatTS-14B"

# Load the model, tokenizer and processor
# For pre-Ampere GPUs (like V100) use `_attn_implementation='eager'`
model = AutoModelForCausalLM.from_pretrained(hf_model, trust_remote_code=True, device_map="auto", torch_dtype=torch.float16)
tokenizer = AutoTokenizer.from_pretrained(hf_model, trust_remote_code=True)
processor = AutoProcessor.from_pretrained(hf_model, trust_remote_code=True, tokenizer=tokenizer)

# Create a synthetic series (sine wave of amplitude 5 with a level drop of 10 from index 100)
# and a prompt; `<ts><ts/>` marks where the time series is placed in the prompt
timeseries = np.sin(np.arange(256) / 10) * 5.0
timeseries[100:] -= 10.0
prompt = f"I have a time series length of 256: <ts><ts/>. Please analyze the local changes in this time series."

# Apply the chat template (Qwen-style ChatML)
prompt = f"<|im_start|>system\nYou are a helpful assistant.<|im_end|><|im_start|>user\n{prompt}<|im_end|><|im_start|>assistant\n"

# Convert to tensors and move them to the model's device
inputs = processor(text=[prompt], timeseries=[timeseries], padding=True, return_tensors="pt")
inputs = {k: v.to(model.device) for k, v in inputs.items()}

# Generate, then decode only the newly generated tokens
outputs = model.generate(**inputs, max_new_tokens=300)
print(tokenizer.decode(outputs[0][len(inputs['input_ids'][0]):], skip_special_tokens=True))
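Because the example series is synthetic, its ground truth is known: a sine wave of amplitude 5 with a downward level shift of 10 starting at index 100. The NumPy sketch below is an illustrative check, not part of ChatTS; it locates the largest one-step change, which is what a correct answer to the prompt should highlight.

```python
import numpy as np

# Rebuild the same synthetic series as in the usage example
timeseries = np.sin(np.arange(256) / 10) * 5.0
timeseries[100:] -= 10.0

# One-step differences: diffs[i] = timeseries[i + 1] - timeseries[i]
diffs = np.diff(timeseries)
change_idx = int(np.argmax(np.abs(diffs))) + 1  # first index after the jump
jump = float(diffs[change_idx - 1])

print(f"Largest change at index {change_idx}: {jump:.2f}")
# → Largest change at index 100: -10.43
```

The jump is slightly larger than 10 in magnitude because the sine component also decreases between indices 99 and 100; every other one-step change is at most 0.5 in magnitude. A numerically faithful answer should therefore report a drop of roughly 10 around index 100.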

Reproduction of Paper Results

Please download the legacy ChatTS-14B model to reproduce the results in the paper.

Reference

- QWen2.5-14B-Instruct (https://huggingface.co/Qwen/Qwen2.5-14B-Instruct)

- transformers (https://github.com/huggingface/transformers.git)

- ChatTS Paper

License

This model is licensed under the Apache License 2.0.

Cite

@article{xie2024chatts,
  title={ChatTS: Aligning Time Series with LLMs via Synthetic Data for Enhanced Understanding and Reasoning},
  author={Xie, Zhe and Li, Zeyan and He, Xiao and Xu, Longlong and Wen, Xidao and Zhang, Tieying and Chen, Jianjun and Shi, Rui and Pei, Dan},
  journal={arXiv preprint arXiv:2412.03104},
  year={2024}
}
