Gemma-2-2b-it Urgency Detection Model Card

This model classifies whether a user message is an urgent maternal health-related message.

Model Details

Given a list of urgency rules related to maternal health and a user message, this model detects whether the user message qualifies as an urgent health-related message according to the rules. The model is trained to produce the following JSON output as its response:

{

"best_matching_rule": "The rule that best matches with the user message.",

"probability": "A probability between 0 and 1 in increments of 0.05 that ANY part of the user message matches one of the urgency rules.",

"reason": "The reason for selecting the best matching rule and probability."

}
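The output format above can be checked programmatically before the result is used downstream. The following is a minimal sketch, not part of the released code: the function name `parse_urgency_response` and the tolerance used for the 0.05 grid check are assumptions made for illustration, and the probability is accepted either as a number or as a numeric string.

```python
import json

# Keys listed in the JSON output format described above.
REQUIRED_KEYS = ("best_matching_rule", "probability", "reason")


def parse_urgency_response(raw_json: str) -> dict:
    """Parse one JSON response and validate its fields (hypothetical helper)."""
    data = json.loads(raw_json)

    missing = [key for key in REQUIRED_KEYS if key not in data]
    if missing:
        raise ValueError(f"missing keys: {missing}")

    # The probability may arrive as a number or as a numeric string.
    prob = float(data["probability"])
    if not 0.0 <= prob <= 1.0:
        raise ValueError(f"probability out of range: {prob}")

    # The card says probabilities come in increments of 0.05.
    steps = prob / 0.05
    if abs(steps - round(steps)) > 1e-6:
        raise ValueError(f"probability is not a multiple of 0.05: {prob}")

    data["probability"] = prob
    return data


example = (
    '{"best_matching_rule": "Trouble breathing", '
    '"probability": 0.85, '
    '"reason": "The message describes breathing difficulty."}'
)
parsed = parse_urgency_response(example)
print(parsed["best_matching_rule"], parsed["probability"])
```

Rejecting off-grid probabilities is a design choice; a more lenient caller could round to the nearest 0.05 instead.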

Model Description

- Developed by: IDinsight

- Funded by: Google.org

- Model type: Instruction-tuned

- Language(s) (NLP): English

- License: MIT

- Finetuned from model: gemma-2-2b-it

Direct Use

Below are code snippets to help you get started quickly with running the model. First, install the Transformers library:

pip install -U transformers

Then, copy the snippet from the section that is relevant to your use case.

Running the model on a single / multi GPU

# pip install accelerate

import torch
from transformers import AutoModelForCausalLM, AutoTokenizer

# gemma2_inference_hf.py ships with the model files. This import assumes the
# file has been downloaded into the working directory (or is on the Python path).
from gemma2_inference_hf import get_completions

DTYPE = torch.bfloat16
MODEL_ID = "idinsight/gemma-2-2b-it-ud"

# Load the tokenizer and pad on the right with the EOS token.
tokenizer = AutoTokenizer.from_pretrained(MODEL_ID, add_eos_token=False)
tokenizer.pad_token = tokenizer.eos_token
tokenizer.padding_side = "right"

# device_map="auto" lets accelerate place the model on the available GPU(s).
model = AutoModelForCausalLM.from_pretrained(
    MODEL_ID, device_map="auto", return_dict=True, torch_dtype=DTYPE
)

# Sampling is enabled, but the near-zero temperature makes decoding
# effectively greedy.
text_generation_params = {
    "do_sample": True,
    "eos_token_id": tokenizer.eos_token_id,
    "max_new_tokens": 1024,
    "num_return_sequences": 1,
    "repetition_penalty": 1.1,
    "temperature": 1e-6,
    "top_p": 0.9,
}

# Classify one user message against the list of urgency rules.
response = get_completions(
    model=model,
    rules_list=[
        "NOT URGENT",
        "Bleeding from the vagina",
        "Bad tummy pain",
        "Bad headache that won’t go away",
        "Changes to vision",
        "Trouble breathing",
        "Hot or very cold, and very weak",
        "Fits or uncontrolled shaking",
        "Baby moves less",
        "Fluid from the vagina",
        "Feeding problems",
        "Fits or uncontrolled shaking",
        "Fast, slow or difficult breathing",
        "Too hot or cold",
        "Baby’s colour changes",
        "Vomiting and watery poo",
        "Infected belly button",
        "Swollen or infected eyes",
        "Bulging or sunken soft spot",
    ],
    skip_special_tokens_during_decode=False,
    text_generation_params=text_generation_params,
    tokenizer=tokenizer,
    user_message="If my newborn can't able to breathe what can i do",
)

print(f"{response = }")

The gemma2_inference_hf.py module is provided for download with the model files.
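Because the call above passes `skip_special_tokens_during_decode=False`, the decoded text may carry special tokens around the JSON object. The sketch below shows one way to pull the JSON out of such text and turn the probability into a yes/no decision. It assumes the response is available as a plain string, which this card does not document. The helper names `extract_json_object` and `is_urgent`, the 0.5 threshold, and the sample string are all illustrative and not taken from the released module.

```python
import json


def extract_json_object(text: str) -> dict:
    """Return the JSON object between the first '{' and the last '}' in text."""
    start = text.find("{")
    end = text.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model output")
    return json.loads(text[start : end + 1])


def is_urgent(response_text: str, threshold: float = 0.5) -> bool:
    """Flag a message as urgent when its probability meets the threshold.

    The threshold is an assumption for illustration; pick one that matches
    the cost of missed urgent messages in your application.
    """
    result = extract_json_object(response_text)
    return float(result["probability"]) >= threshold


# Illustrative string only; not actual model output.
raw = (
    '{"best_matching_rule": "Fast, slow or difficult breathing", '
    '"probability": 0.95, '
    '"reason": "The newborn is reported to be unable to breathe."}<end_of_turn>'
)
print(is_urgent(raw))
```

For a health triage setting, a lower threshold trades more false alarms for fewer missed urgent messages.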

Evaluation

The model was evaluated on a private test dataset containing 3k samples. Metrics and results are reported below.

Benchmark Results

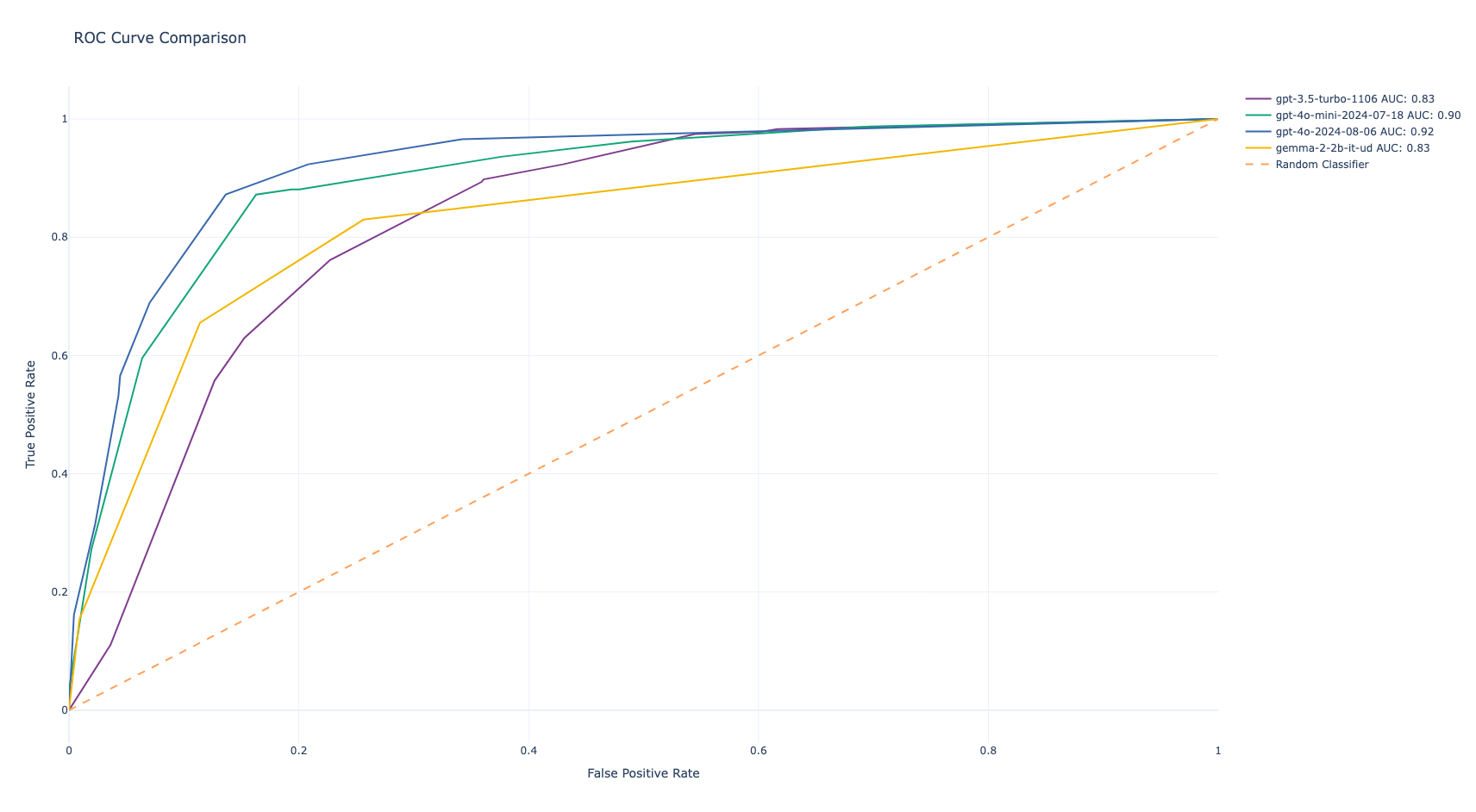

The finetuned Gemma-2 model was evaluated against GPT-3.5-Turbo, GPT-4o-mini, and GPT-4o:

| Metric | gemma-2-2b-it-ud | GPT-3.5-Turbo-1106 | GPT-4o-mini-2024-07-18 | GPT-4o-2024-08-06 |

|---|---|---|---|---|

| Accuracy | 0.87 | 0.83 | 0.90 | 0.92 |

| AUC | 0.84 | 0.83 | 0.91 | 0.92 |
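For readers who want to reproduce these two metrics on their own labelled data, the sketch below computes accuracy and ROC AUC in plain Python. It is written under assumptions: the card does not state the decision threshold used for accuracy, so 0.5 is a placeholder, and the labels and scores are toy values, not drawn from the private test set.

```python
def accuracy(labels, scores, threshold=0.5):
    """Fraction of examples where the thresholded score matches the label."""
    preds = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


def roc_auc(labels, scores):
    """ROC AUC as the probability that a random urgent example outscores
    a random non-urgent one, counting ties as half a win."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative label")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Toy data: 1 = urgent, 0 = not urgent; scores are predicted probabilities.
toy_labels = [1, 1, 0, 0, 1, 0]
toy_scores = [0.95, 0.60, 0.40, 0.05, 0.35, 0.50]

print(f"accuracy = {accuracy(toy_labels, toy_scores):.2f}")
print(f"auc      = {roc_auc(toy_labels, toy_scores):.2f}")
```

The pairwise loop is quadratic in the number of examples, which is acceptable for a few thousand samples; a rank-based implementation such as the one in scikit-learn is preferable for larger sets.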

AUC-ROC Comparison

Usage and Limitations

This model has certain limitations that users should be aware of.

Intended Usage

This model has been finetuned specifically for the task of determining whether a user message is an urgent or non-urgent maternal health-related message. As a result, no guarantees are made with respect to model performance for other use cases. Users may use the model to detect urgent messages with new rule sets and user messages, and for research and education purposes.

Limitations

- Training Data

- The quality and diversity of the training data significantly influence the model's capabilities. Biases or gaps in the training data can lead to limitations in the model's responses.

- The scope of the training dataset determines the subject areas the model can handle effectively.

- Context and Task Complexity

- LLMs are better at tasks that can be framed with clear prompts and instructions. Open-ended or highly complex tasks might be challenging.

- A model's performance can be influenced by the amount of context provided (longer context generally leads to better outputs, up to a certain point).

- Language Ambiguity and Nuance

- Natural language is inherently complex. LLMs might struggle to grasp subtle nuances, sarcasm, or figurative language.

- Factual Accuracy

- LLMs generate responses based on information they learned from their training datasets, but they are not knowledge bases. They may generate incorrect or outdated factual statements.

- Common Sense

- LLMs rely on statistical patterns in language. They might lack the ability to apply common sense reasoning in certain situations.

Ethical Considerations and Risks

The development of large language models (LLMs) raises several ethical concerns. In creating an open model, we have carefully considered the following:

Bias and Fairness

- LLMs trained on large-scale, real-world text data can reflect socio-cultural biases embedded in the training material. These models underwent careful scrutiny, with input data pre-processing described and posterior evaluations reported in this card.

Misinformation and Misuse

- LLMs can be misused to generate text that is false, misleading, or harmful.

- Guidelines for responsible use of the model are provided; see the Responsible Generative AI Toolkit.

Transparency and Accountability

- This model card summarizes details on the models' architecture, capabilities, limitations, and evaluation processes.

- A responsibly developed open model offers the opportunity to share innovation by making LLM technology accessible to developers and researchers across the AI ecosystem.

Risks identified and mitigations:

Perpetuation of biases: It's encouraged to perform continuous monitoring (using evaluation metrics, human review) and the exploration of de-biasing techniques during model training, fine-tuning, and other use cases.

Generation of harmful content: Mechanisms and guidelines for content safety are essential. Developers are encouraged to exercise caution and implement appropriate content safety safeguards based on their specific product policies and application use cases.

Misuse for malicious purposes: Technical limitations and developer and end-user education can help mitigate against malicious applications of LLMs. Educational resources and reporting mechanisms for users to flag misuse are provided. Prohibited uses of Gemma models are outlined in the Gemma Prohibited Use Policy.

Privacy violations: Models were trained on data filtered for removal of PII (Personally Identifiable Information). Developers are encouraged to adhere to privacy regulations with privacy-preserving techniques.

Hardware

Inference: NVIDIA L4 GPU

Training: NVIDIA A100 80 GB

Citation

TBD

More Information

For more information regarding this model, its training dataset, and use cases, please contact Tony Zhao.

Framework versions

- PEFT 0.12.0
