Upload folder using huggingface_hub

- .ipynb_checkpoints/Granite3.2-2B-BF16-lora-FP16-Evaluation_Results-checkpoint.json +34 -0

- Granite3.2-2B-BF16-lora-FP16-Evaluation_Results.json +34 -0

- Granite3.2-2B-BF16-lora-FP16-Inference_Curve.png +0 -0

- Granite3.2-2B-BF16-lora-FP16-Latency_Histogram.png +0 -0

- Granite3.2-2B-BF16-lora-FP16-Memory_Histogram.png +0 -0

- Granite3.2-2B-BF16-lora-FP16-Memory_Usage_Curve.png +0 -0
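The commit title is the default `commit_message` used by `HfApi.upload_folder` in `huggingface_hub`, which suggests the folder was pushed with that call. A minimal sketch of such an upload follows. The repo id and local folder are hypothetical placeholders (neither appears on this page). It also passes `ignore_patterns` so that Jupyter autosave copies under `.ipynb_checkpoints/`, like the first file listed above, are not uploaded. This is an illustration, not the author's script.

```python
from pathlib import Path

# Hypothetical values: the real repo id and local folder are not shown on this page.
REPO_ID = "your-username/granite3.2-2b-lora-eval"
LOCAL_DIR = Path("./eval_outputs")


def build_upload_kwargs(folder: Path, repo_id: str) -> dict:
    """Keyword arguments for HfApi.upload_folder (a sketch, not the author's settings)."""
    return {
        "folder_path": str(folder),
        "repo_id": repo_id,
        "repo_type": "model",  # assumption: the results live in a model repo
        # Skip Jupyter autosave copies such as .ipynb_checkpoints/*-checkpoint.json
        "ignore_patterns": [".ipynb_checkpoints/*", "*/.ipynb_checkpoints/*"],
        "commit_message": "Upload folder using huggingface_hub",
    }


def upload_results(folder: Path = LOCAL_DIR, repo_id: str = REPO_ID) -> None:
    # Imported lazily: needs `pip install huggingface_hub` and a logged-in token.
    from huggingface_hub import HfApi

    HfApi().upload_folder(**build_upload_kwargs(folder, repo_id))
```

Calling `upload_results()` performs the push. Keeping the argument construction in its own function makes the patterns easy to inspect without touching the network.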

.ipynb_checkpoints/Granite3.2-2B-BF16-lora-FP16-Evaluation_Results-checkpoint.json

ADDED

|

@@ -0,0 +1,34 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"eval_loss:": 0.6290175174139142,

|

| 3 |

+

"perplexity:": 1.8757667654725518,

|

| 4 |

+

"performance_metrics:": {

|

| 5 |

+

"accuracy:": 0.9993324432576769,

|

| 6 |

+

"precision:": 1.0,

|

| 7 |

+

"recall:": 1.0,

|

| 8 |

+

"f1:": 1.0,

|

| 9 |

+

"bleu:": 0.9583276083314752,

|

| 10 |

+

"rouge:": {

|

| 11 |

+

"rouge1": 0.9772097831739393,

|

| 12 |

+

"rouge2": 0.9770857665194296,

|

| 13 |

+

"rougeL": 0.9772097831739393

|

| 14 |

+

},

|

| 15 |

+

"semantic_similarity_avg:": 0.99727463722229

|

| 16 |

+

},

|

| 17 |

+

"mauve:": 0.8845393474939447,

|

| 18 |

+

"inference_performance:": {

|

| 19 |

+

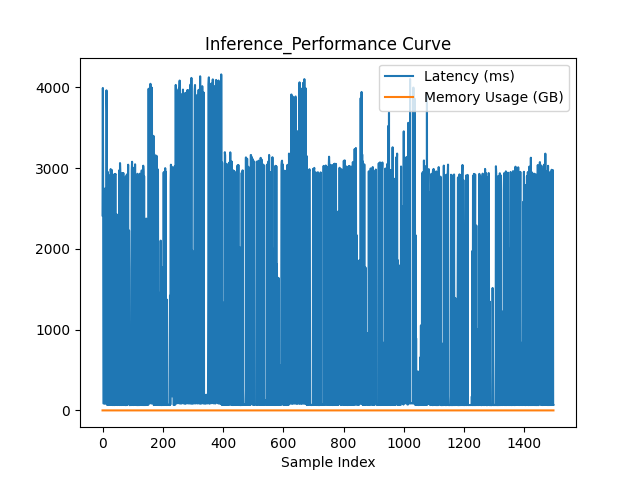

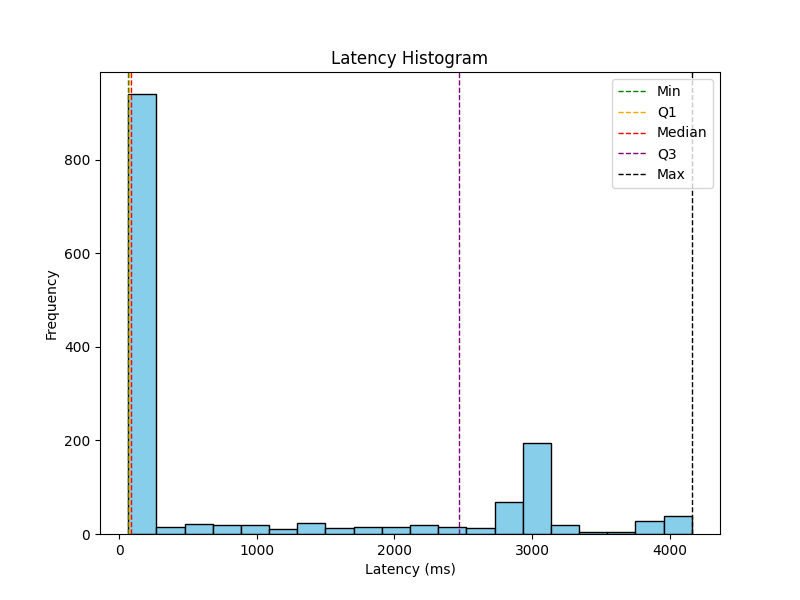

"min_latency_ms": 65.03963470458984,

|

| 20 |

+

"max_latency_ms": 4162.008047103882,

|

| 21 |

+

"lower_quartile_ms": 67.63327121734619,

|

| 22 |

+

"median_latency_ms": 85.66915988922119,

|

| 23 |

+

"upper_quartile_ms": 2466.5658473968506,

|

| 24 |

+

"avg_latency_ms": 1007.1729932512555,

|

| 25 |

+

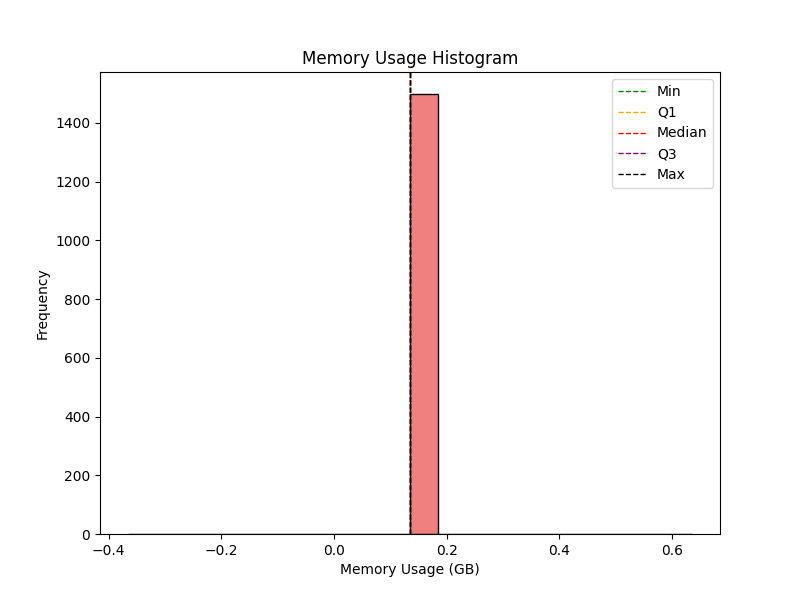

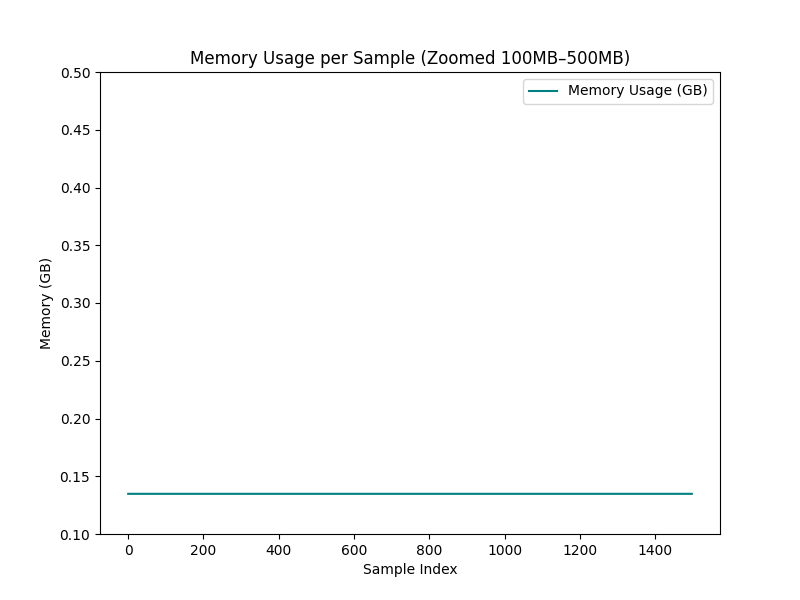

"min_memory_gb": 0.13478469848632812,

|

| 26 |

+

"max_memory_gb": 0.13478469848632812,

|

| 27 |

+

"lower_quartile_gb": 0.13478469848632812,

|

| 28 |

+

"median_memory_gb": 0.13478469848632812,

|

| 29 |

+

"upper_quartile_gb": 0.13478469848632812,

|

| 30 |

+

"avg_memory_gb": 0.13478469848632812,

|

| 31 |

+

"model_load_memory_gb": 7.857240676879883,

|

| 32 |

+

"avg_inference_memory_gb": 0.13478469848632812

|

| 33 |

+

}

|

| 34 |

+

}

|
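Most keys in this JSON carry a trailing colon inside the quotes (for example `"eval_loss:"` and `"rouge:"`), while the individual ROUGE scores and the fields inside `inference_performance:` do not. A small loader that strips that colon makes lookups uniform. The sketch below assumes you want plain names like `eval_loss`; the function names and the dotted-path flattening are illustrative choices, not part of the uploaded files.

```python
import json
from typing import Any


def normalize_keys(obj: Any) -> Any:
    """Recursively strip a trailing ':' from dict keys, e.g. "eval_loss:" -> "eval_loss"."""
    if isinstance(obj, dict):
        return {key.rstrip(":"): normalize_keys(value) for key, value in obj.items()}
    if isinstance(obj, list):
        return [normalize_keys(value) for value in obj]
    return obj


def flatten(obj: dict, prefix: str = "") -> dict:
    """Flatten nested dicts into dotted paths such as 'performance_metrics.rouge.rougeL'."""
    flat = {}
    for key, value in obj.items():
        path = f"{prefix}.{key}" if prefix else key
        if isinstance(value, dict):
            flat.update(flatten(value, path))
        else:
            flat[path] = value
    return flat


def load_results(path: str) -> dict:
    """Read an evaluation-results file and return it with normalized keys."""
    with open(path, encoding="utf-8") as f:
        return normalize_keys(json.load(f))
```

For example, `flatten(load_results("Granite3.2-2B-BF16-lora-FP16-Evaluation_Results.json"))["performance_metrics.rouge.rougeL"]` would return the ROUGE-L score.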

Granite3.2-2B-BF16-lora-FP16-Evaluation_Results.json

ADDED

@@ -0,0 +1,34 @@

Same 34 lines as the `.ipynb_checkpoints/Granite3.2-2B-BF16-lora-FP16-Evaluation_Results-checkpoint.json` copy above.
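Two of the reported numbers can be cross-checked. Perplexity is conventionally the exponential of the mean cross-entropy loss, and exp(0.6290175174139142) ≈ 1.87577, which matches `perplexity:`. F1 is the harmonic mean of precision and recall, so precision = recall = 1.0 gives F1 = 1.0, as reported. The sketch assumes `eval_loss:` is a natural-log cross-entropy; the file itself does not say so.

```python
import math

# Values copied from the evaluation JSON in this commit.
EVAL_LOSS = 0.6290175174139142
REPORTED_PERPLEXITY = 1.8757667654725518
PRECISION, RECALL, REPORTED_F1 = 1.0, 1.0, 1.0


def perplexity_from_loss(mean_ce_loss: float) -> float:
    """Perplexity = exp(mean cross-entropy), assuming the loss uses the natural log."""
    return math.exp(mean_ce_loss)


def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall; returns 0.0 when both are 0."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


ppl = perplexity_from_loss(EVAL_LOSS)
print(f"exp(eval_loss) = {ppl:.6f}  (reported {REPORTED_PERPLEXITY:.6f})")
print(f"F1 from P and R = {f1_score(PRECISION, RECALL):.3f}  (reported {REPORTED_F1:.3f})")
```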

Granite3.2-2B-BF16-lora-FP16-Inference_Curve.png

ADDED


Granite3.2-2B-BF16-lora-FP16-Latency_Histogram.png

ADDED

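The latency fields in `inference_performance:` are a five-number summary (min, lower quartile, median, upper quartile, max) plus the mean. The median is about 85.7 ms, but the upper quartile is about 2466.6 ms and the mean about 1007.2 ms. The distribution is therefore strongly right-skewed, and the mean alone would misrepresent typical latency. The sketch below shows one way to produce the same fields from per-request timings. `generate` is a hypothetical stand-in for the model call. The quartile method (`statistics.quantiles` with `method="inclusive"`, i.e. linear interpolation) is an assumption, since the evaluation script is not part of this commit.

```python
import statistics
import time
from typing import Callable, Sequence


def time_requests(generate: Callable[[str], object], prompts: Sequence[str]) -> list[float]:
    """Wall-clock latency of each call in milliseconds; `generate` is a hypothetical model call."""
    latencies = []
    for prompt in prompts:
        start = time.perf_counter()
        generate(prompt)
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def summarize_latency(latencies_ms: Sequence[float]) -> dict:
    """Summary with field names mirroring the JSON; needs at least two samples."""
    q1, median, q3 = statistics.quantiles(latencies_ms, n=4, method="inclusive")
    return {
        "min_latency_ms": min(latencies_ms),
        "max_latency_ms": max(latencies_ms),
        "lower_quartile_ms": q1,
        "median_latency_ms": median,
        "upper_quartile_ms": q3,
        "avg_latency_ms": statistics.fmean(latencies_ms),
    }
```

Reporting quartiles alongside the mean, as the JSON does, keeps a long tail of slow requests visible instead of hiding it in one average.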

Granite3.2-2B-BF16-lora-FP16-Memory_Histogram.png

ADDED

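The `_gb` values convert back to whole byte counts when multiplied by 2^30. For example, 0.13478469848632812 × 2^30 = 144,723,968 bytes and 7.857240676879883 × 2^30 = 8,436,647,936 bytes. This suggests the fields are byte counts divided by 1024³ (GiB). The identical min, max and quartile values also suggest that per-inference memory did not vary across requests. The sketch below shows one way to measure such a figure with PyTorch's CUDA allocator counters. `run_inference` is a hypothetical callable, and treating "inference memory" as peak minus pre-call allocation is an assumption about what `avg_inference_memory_gb` records.

```python
GIB = 1024 ** 3  # bytes per GiB


def bytes_to_gib(n_bytes: int) -> float:
    """Convert a byte count to GiB (1024**3 bytes)."""
    return n_bytes / GIB


def peak_inference_memory_gib(run_inference) -> float:
    """Peak CUDA memory (GiB) allocated during one call, above what was already allocated.

    `run_inference` is a hypothetical zero-argument callable wrapping the model call.
    Requires PyTorch with a CUDA device.
    """
    import torch  # imported lazily so bytes_to_gib works without PyTorch installed

    torch.cuda.synchronize()
    baseline = torch.cuda.memory_allocated()
    torch.cuda.reset_peak_memory_stats()
    run_inference()
    torch.cuda.synchronize()
    return bytes_to_gib(torch.cuda.max_memory_allocated() - baseline)
```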

Granite3.2-2B-BF16-lora-FP16-Memory_Usage_Curve.png

ADDED
