Upload folder using huggingface_hub

- .ipynb_checkpoints/Granite3.2-2B-FP4-lora-FP16-Evaluation_Results-checkpoint.json +34 -0

- Granite3.2-2B-FP4-lora-FP16-Evaluation_Results.json +34 -0

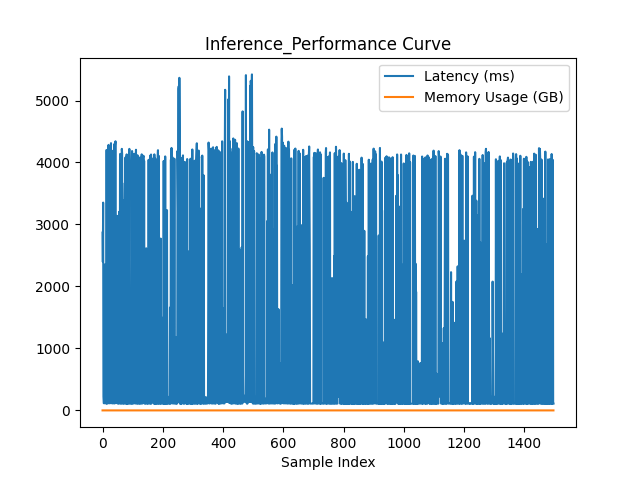

- Granite3.2-2B-FP4-lora-FP16-Inference_Curve.png +0 -0

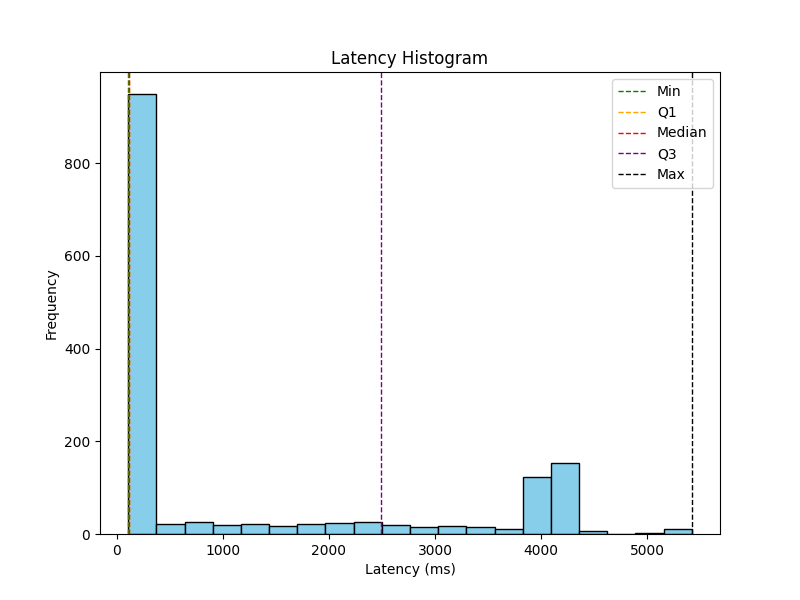

- Granite3.2-2B-FP4-lora-FP16-Latency_Histogram.png +0 -0

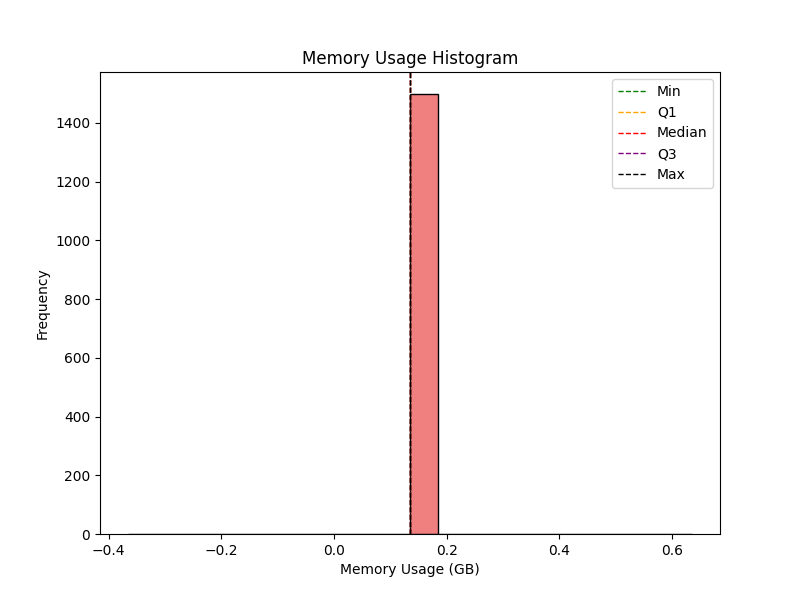

- Granite3.2-2B-FP4-lora-FP16-Memory_Histogram.png +0 -0

- Granite3.2-2B-FP4-lora-FP16-Memory_Usage_Curve.png +0 -0

.ipynb_checkpoints/Granite3.2-2B-FP4-lora-FP16-Evaluation_Results-checkpoint.json

ADDED

@@ -0,0 +1,34 @@

```json
{
    "eval_loss:": 0.7104760890469721,
    "perplexity:": 2.0349598501562385,
    "performance_metrics:": {
        "accuracy:": 0.9993324432576769,
        "precision:": 1.0,
        "recall:": 1.0,
        "f1:": 1.0,
        "bleu:": 0.9608071662029927,
        "rouge:": {
            "rouge1": 0.9785375186875944,
            "rouge2": 0.9784191926971961,
            "rougeL": 0.9785375186875944
        },
        "semantic_similarity_avg:": 0.9974932670593262
    },
    "mauve:": 0.8804768654231001,
    "inference_performance:": {
        "min_latency_ms": 106.68039321899414,
        "max_latency_ms": 5421.270132064819,
        "lower_quartile_ms": 109.932541847229,
        "median_latency_ms": 114.32981491088867,
        "upper_quartile_ms": 2489.4919991493225,
        "avg_latency_ms": 1233.0216747100585,
        "min_memory_gb": 0.13478469848632812,
        "max_memory_gb": 0.13478469848632812,
        "lower_quartile_gb": 0.13478469848632812,
        "median_memory_gb": 0.13478469848632812,
        "upper_quartile_gb": 0.13478469848632812,
        "avg_memory_gb": 0.13478469848632812,
        "model_load_memory_gb": 4.49504280090332,
        "avg_inference_memory_gb": 0.13478469848632812
    }
}
```
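The evaluation results report both `eval_loss:` and `perplexity:`, and the two values are consistent with the usual relation perplexity = exp(eval_loss): exp(0.7104760890469721) ≈ 2.034960. The sketch below reads the values and checks that relation. It embeds an excerpt of the committed numbers instead of reading the file from disk, and both `strip_key_colons` and `check_perplexity` are hypothetical helpers, not part of anything in this commit. Most keys in the file end with a colon inside the string (for example `"eval_loss:"`), so the sketch normalizes them before lookup.

```python
import json
import math

# Excerpt of the committed Evaluation_Results JSON (values copied verbatim).
# Note the trailing colon *inside* most key strings, e.g. "eval_loss:".
EXCERPT = """
{
    "eval_loss:": 0.7104760890469721,
    "perplexity:": 2.0349598501562385
}
"""


def strip_key_colons(obj):
    """Recursively drop a trailing ':' from dict keys (hypothetical cleanup)."""
    if isinstance(obj, dict):
        return {k.rstrip(":"): strip_key_colons(v) for k, v in obj.items()}
    return obj


def check_perplexity(results: dict, rel_tol: float = 1e-9) -> bool:
    """Return True if results["perplexity"] equals exp(results["eval_loss"]).

    Assumes eval_loss is a mean per-token cross-entropy in nats, the usual
    convention when perplexity is reported as exp(loss).
    """
    return math.isclose(
        math.exp(results["eval_loss"]), results["perplexity"], rel_tol=rel_tol
    )


clean = strip_key_colons(json.loads(EXCERPT))
print(check_perplexity(clean))
```

Normalizing the keys once keeps downstream code from having to repeat the colon-suffixed names; if the keys are left as committed, lookups must use `"eval_loss:"` and `"perplexity:"` verbatim.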

Granite3.2-2B-FP4-lora-FP16-Evaluation_Results.json

ADDED

@@ -0,0 +1,34 @@

```json
{
    "eval_loss:": 0.7104760890469721,
    "perplexity:": 2.0349598501562385,
    "performance_metrics:": {
        "accuracy:": 0.9993324432576769,
        "precision:": 1.0,
        "recall:": 1.0,
        "f1:": 1.0,
        "bleu:": 0.9608071662029927,
        "rouge:": {
            "rouge1": 0.9785375186875944,
            "rouge2": 0.9784191926971961,
            "rougeL": 0.9785375186875944
        },
        "semantic_similarity_avg:": 0.9974932670593262
    },
    "mauve:": 0.8804768654231001,
    "inference_performance:": {
        "min_latency_ms": 106.68039321899414,
        "max_latency_ms": 5421.270132064819,
        "lower_quartile_ms": 109.932541847229,
        "median_latency_ms": 114.32981491088867,
        "upper_quartile_ms": 2489.4919991493225,
        "avg_latency_ms": 1233.0216747100585,
        "min_memory_gb": 0.13478469848632812,
        "max_memory_gb": 0.13478469848632812,
        "lower_quartile_gb": 0.13478469848632812,
        "median_memory_gb": 0.13478469848632812,
        "upper_quartile_gb": 0.13478469848632812,
        "avg_memory_gb": 0.13478469848632812,
        "model_load_memory_gb": 4.49504280090332,
        "avg_inference_memory_gb": 0.13478469848632812
    }
}
```
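The `inference_performance:` block describes a strongly right-skewed latency distribution: the lower quartile is about 110 ms and the median about 114 ms, but the upper quartile is about 2489 ms and the mean (about 1233 ms) is roughly 10.8 times the median, so the average alone says little about a typical request. The file does not say why; a mix of short and long generations would be one consistent explanation. The sketch below is hypothetical: it shows one way fields with these names could be computed from raw per-request latencies using Python's `statistics` module. The commit contains neither the raw samples nor the script that produced the file, and the quartile method (`"inclusive"`, which matches NumPy's default linear interpolation) is an assumption.

```python
import statistics
from typing import Sequence


def summarize_latencies(samples_ms: Sequence[float]) -> dict:
    """Summarize per-request latencies into inference_performance-style fields.

    Hypothetical helper: field names mirror the committed JSON, but the
    quartile method is an assumption ("inclusive" = linear interpolation,
    like NumPy's default percentile). Intended for at least two samples.
    """
    q1, median, q3 = statistics.quantiles(samples_ms, n=4, method="inclusive")
    return {
        "min_latency_ms": min(samples_ms),
        "max_latency_ms": max(samples_ms),
        "lower_quartile_ms": q1,
        "median_latency_ms": median,
        "upper_quartile_ms": q3,
        "avg_latency_ms": statistics.fmean(samples_ms),
    }


# Skew check using the values reported in the committed file.
reported = {
    "median_latency_ms": 114.32981491088867,
    "avg_latency_ms": 1233.0216747100585,
}
skew_ratio = reported["avg_latency_ms"] / reported["median_latency_ms"]
print(f"mean/median latency ratio: {skew_ratio:.1f}")

# Usage on made-up samples (NOT from this evaluation): a single slow
# request pulls the mean far above the median.
example = summarize_latencies([100.0, 110.0, 120.0, 130.0, 5000.0])
print(example)
```

Reporting quartiles alongside the mean, as the committed file does, is what exposes this skew; the synthetic example shows the same effect with one outlier among five requests.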

Granite3.2-2B-FP4-lora-FP16-Inference_Curve.png

ADDED


Granite3.2-2B-FP4-lora-FP16-Latency_Histogram.png

ADDED


Granite3.2-2B-FP4-lora-FP16-Memory_Histogram.png

ADDED


Granite3.2-2B-FP4-lora-FP16-Memory_Usage_Curve.png

ADDED
