base_model:

- Qwen/Qwen2.5-VL-7B-Instruct

datasets:

- Code2Logic/GameQA-140K

- Code2Logic/GameQA-5K

license: apache-2.0

pipeline_tag: image-text-to-text

library_name: transformers

This model (GameQA-Qwen2.5-VL-7B) results from training Qwen2.5-VL-7B with GRPO solely on our GameQA-5K (sampled from the full GameQA-140K dataset).

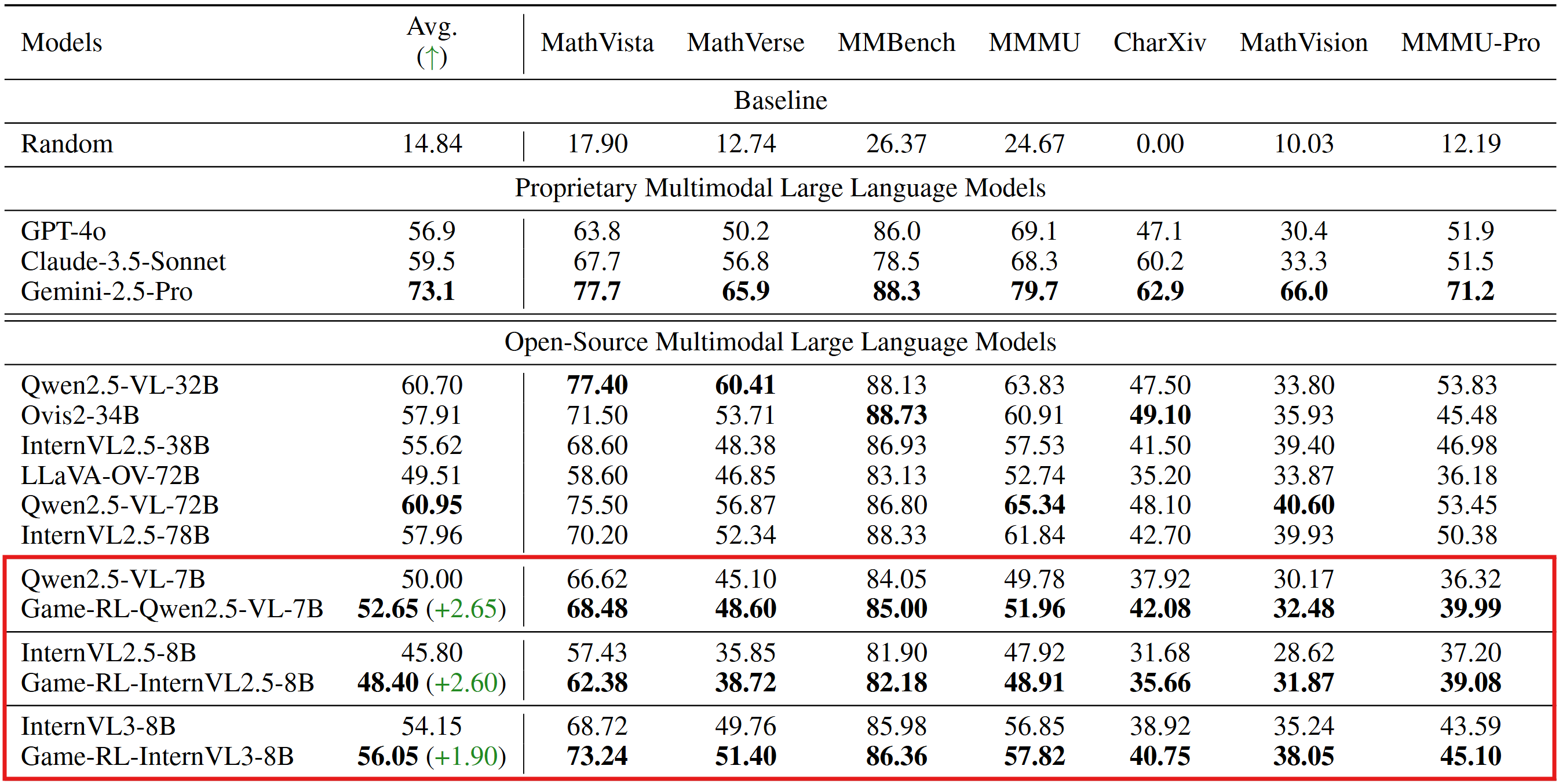

Evaluation Results on General Vision BenchMarks

(The inference and evaluation configurations were unified across both the original open-source models and our trained models.)

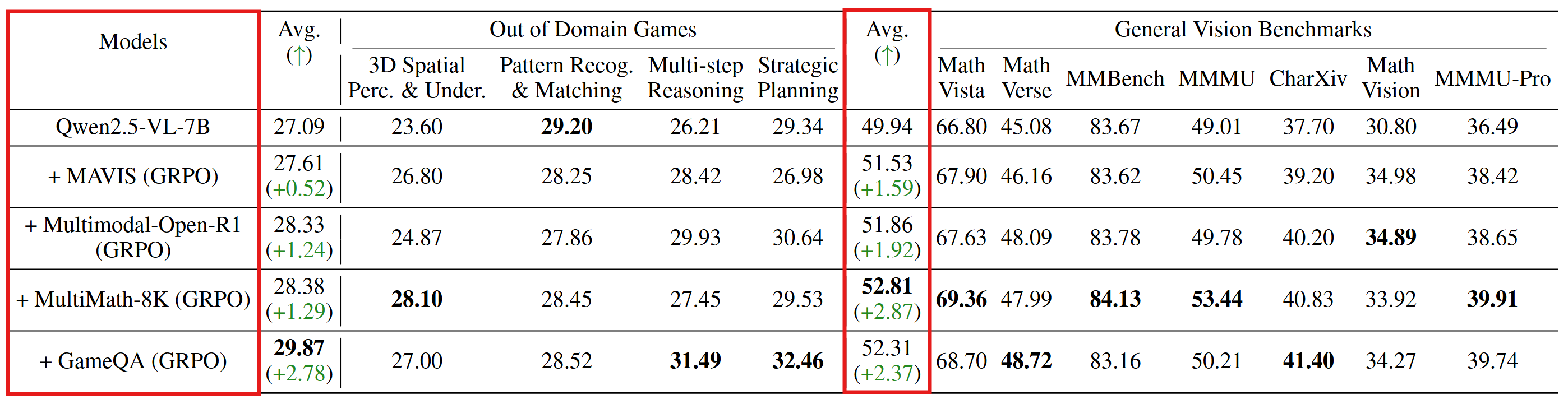

It's also found that getting trained on 5k samples from our GameQA dataset can lead to better results than on multimodal-open-r1-8k-verified.

Code2Logic: Game-Code-Driven Data Synthesis for Enhancing VLMs General Reasoning

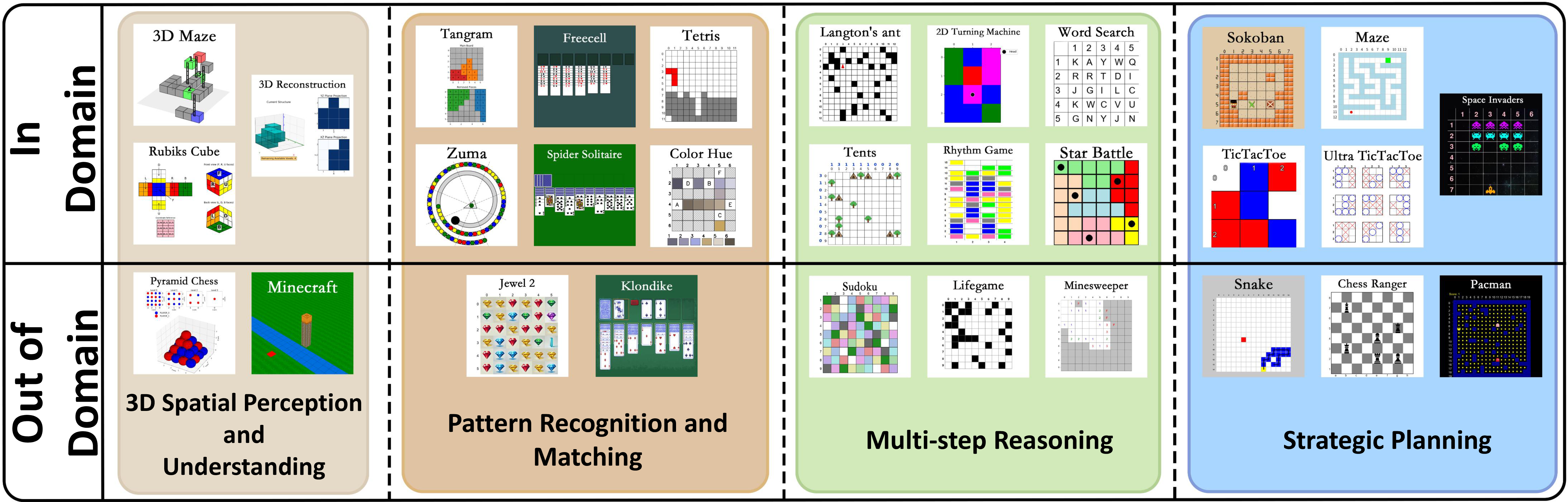

This is the first work, to the best of our knowledge, that leverages game code to synthesize multimodal reasoning data for training VLMs. Furthermore, when trained with a GRPO strategy solely on GameQA (synthesized via our proposed Code2Logic approach), multiple cutting-edge open-source models exhibit significantly enhanced out-of-domain generalization.

[📖 Paper] [🤗 GameQA-140K Dataset] [🤗 GameQA-5K Dataset] [🤗 GameQA-InternVL3-8B ] [🤗 GameQA-Qwen2.5-VL-7B] [🤗 GameQA-LLaVA-OV-7B ]

Code: https://github.com/tongjingqi/Code2Logic

News

- We've open-sourced the three models trained with GRPO on GameQA on Huggingface.