Upload folder using huggingface_hub

- .gitattributes +3 -0

- README.md +190 -0

- added_tokens.json +11 -0

- assets/CoMemo_framework.png +3 -0

- assets/RoPE_DHR.png +3 -0

- assets/image1.jpg +0 -0

- assets/image2.jpg +3 -0

- config.json +212 -0

- configuration_comemo_chat.py +102 -0

- configuration_intern_vit.py +119 -0

- configuration_internlm2.py +150 -0

- configuration_mixin.py +43 -0

- conversation.py +422 -0

- flash_attention.py +76 -0

- generation_config.json +4 -0

- helpers.py +244 -0

- mixin_lm.py +202 -0

- model-00001-of-00002.safetensors +3 -0

- model-00002-of-00002.safetensors +3 -0

- model.safetensors.index.json +584 -0

- modeling_comemo_chat.py +502 -0

- modeling_intern_vit.py +362 -0

- modeling_internlm2.py +1431 -0

- preprocessor_config.json +19 -0

- special_tokens_map.json +47 -0

- tokenization_internlm2.py +236 -0

- tokenization_internlm2_fast.py +211 -0

- tokenizer.model +3 -0

- tokenizer_config.json +179 -0

- utils.py +69 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,6 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

assets/CoMemo_framework.png filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

assets/RoPE_DHR.png filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

assets/image2.jpg filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,190 @@

|

| 1 |

+

---

|

| 2 |

+

license: mit

|

| 3 |

+

pipeline_tag: image-text-to-text

|

| 4 |

+

library_name: transformers

|

| 5 |

+

base_model:

|

| 6 |

+

- OpenGVLab/InternViT-300M-448px

|

| 7 |

+

- internlm/internlm2-chat-1_8b

|

| 8 |

+

base_model_relation: merge

|

| 9 |

+

language:

|

| 10 |

+

- multilingual

|

| 11 |

+

tags:

|

| 12 |

+

- internvl

|

| 13 |

+

- custom_code

|

| 14 |

+

---

|

| 15 |

+

|

| 16 |

+

# CoMemo-2B

|

| 17 |

+

|

| 18 |

+

[\[📂 GitHub\]](https://github.com/LALBJ/CoMemo) [\[📜 Paper\]](https://arxiv.org/pdf/2506.06279) [\[🚀 Quick Start\]](#quick-start)

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

## Introduction

|

| 22 |

+

|

| 23 |

+

LVLMs inherit the architectural designs of LLMs, which introduces suboptimal characteristics for multimodal processing. First, LVLMs exhibit a bimodal distribution in attention allocation, leading to the progressive neglect of central visual content as the context expands. Second, conventional positional encoding schemes fail to preserve vital 2D structural relationships when processing dynamic high-resolution images.

|

| 24 |

+

|

| 25 |

+

To address these issues, we propose CoMemo, a novel model architecture. CoMemo employs a dual-path approach for visual processing: one path maps image tokens to the text token representation space for causal self-attention, while the other introduces cross-attention, enabling context-agnostic computation between the input sequence and image information. Additionally, we developed RoPE-DHR, a new positional encoding method tailored for LVLMs with dynamic high-resolution inputs. RoPE-DHR mitigates the remote decay problem caused by dynamic high-resolution inputs while preserving the 2D structural information of images.

|

| 26 |

+

|

| 27 |

+

Evaluated on seven diverse tasks, including long-context understanding, multi-image reasoning, and visual question answering, CoMemo achieves relative improvements of 17.2%, 7.0%, and 5.6% on Caption, Long-Generation, and Long-Context tasks, respectively, with consistent performance gains across various benchmarks. For more details, please refer to our [paper](https://arxiv.org/pdf/2506.06279) and [GitHub](https://github.com/LALBJ/CoMemo).

|

| 28 |

+

|

| 29 |

+

| Model Name | Vision Part | Language Part | HF Link |

|

| 30 |

+

| :------------------: | :---------------------------------------------------------------------------------: | :------------------------------------------------------------------------------------------: | :--------------------------------------------------------------: |

|

| 31 |

+

| CoMemo-2B | [InternViT-300M-448px](https://huggingface.co/OpenGVLab/InternViT-300M-448px) | [internlm2-chat-1_8b](https://huggingface.co/internlm/internlm2-chat-1_8b) | [🤗 link](https://huggingface.co/CLLBJ16/CoMemo-2B) |

|

| 32 |

+

| CoMemo-9B | [InternViT-300M-448px](https://huggingface.co/OpenGVLab/InternViT-300M-448px) | [internlm2-chat-7b](https://huggingface.co/internlm/internlm2-chat-7b) | [🤗 link](https://huggingface.co/CLLBJ16/CoMemo-9B) |

|

| 33 |

+

|

| 34 |

+

## Method Overview

|

| 35 |

+

<div class="image-row" style="display: flex; justify-content: center; gap: 10px; margin: 20px 0;">

|

| 36 |

+

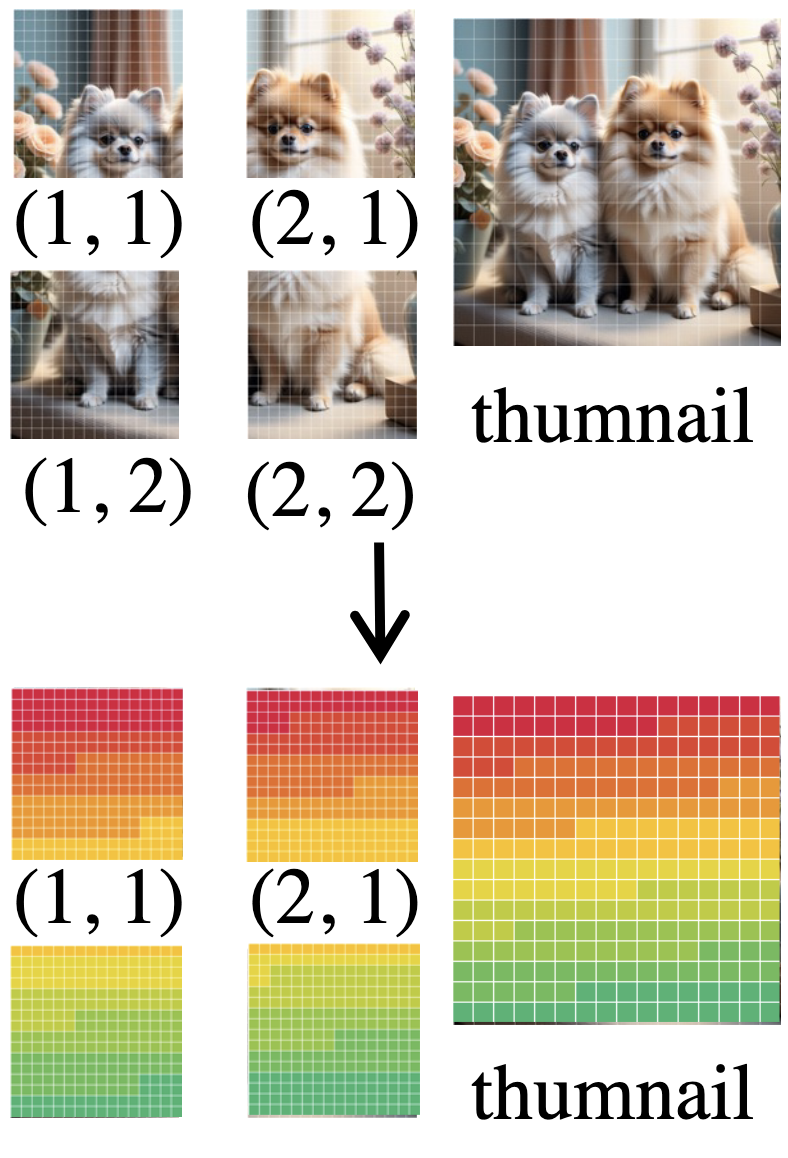

<img src="assets/RoPE_DHR.png" alt="teaser" style="max-width: 30%; height: auto;" />

|

| 37 |

+

<img src="assets/CoMemo_framework.png" alt="teaser" style="max-width: 53%; height: auto;" />

|

| 38 |

+

</div>

|

| 39 |

+

|

| 40 |

+

**Left:** The computation process of RoPE-DHR. The colors are assigned based on a mapping of position IDs in RoPE.

|

| 41 |

+

**Right:** Framework of CoMemo. Both paths share the same encoder and projector.

|

| 42 |

+

|

| 43 |

+

## Quick Start

|

| 44 |

+

|

| 45 |

+

We provide example code to run `CoMemo-2B` using `transformers`.

|

| 46 |

+

|

| 47 |

+

> Please use `transformers>=4.37.2` to ensure the model works correctly.

|

| 48 |

+

|

| 49 |

+

### Inference with Transformers

|

| 50 |

+

|

| 51 |

+

> Note: RoPE-DHR is used only when the `target_aspect_ratio` parameter is passed to `generate`; when the parameter is omitted, the original RoPE is used.

|

| 52 |

+

> For OCR-related tasks requiring fine-grained image information, we recommend using the original RoPE. For long-context tasks, we recommend using RoPE-DHR.

|
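As a minimal sketch of the note above, the helper below assembles the optional keyword arguments for a `model.chat(...)` call and includes `target_aspect_ratio` only when RoPE-DHR is wanted. `build_chat_kwargs` and its `use_rope_dhr` flag are hypothetical names introduced here for illustration; they are not part of the CoMemo code.

```python
from typing import Any, Dict, List, Optional, Tuple


def build_chat_kwargs(
    target_aspect_ratio: List[Tuple[int, int]],
    num_patches_list: Optional[List[int]] = None,
    use_rope_dhr: bool = True,
) -> Dict[str, Any]:
    """Assemble optional keyword arguments for a `model.chat(...)` call.

    Hypothetical helper: per the note above, passing `target_aspect_ratio`
    selects RoPE-DHR, and leaving it out selects the original RoPE.
    """
    kwargs: Dict[str, Any] = {}
    if num_patches_list is not None:
        # Needed only for multi-image prompts (one entry per image).
        kwargs["num_patches_list"] = num_patches_list
    if use_rope_dhr:
        kwargs["target_aspect_ratio"] = target_aspect_ratio
    return kwargs
```

With such a helper, an OCR-style call could pass `use_rope_dhr=False` and a long-context call could keep the default, following the recommendation in the note.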

| 53 |

+

|

| 54 |

+

```python

import torch
from PIL import Image
import torchvision.transforms as T
from torchvision.transforms.functional import InterpolationMode
from transformers import AutoModel, AutoTokenizer

path = "CLLBJ16/CoMemo-2B"
model = AutoModel.from_pretrained(
    path,
    torch_dtype=torch.bfloat16,
    trust_remote_code=True,
    low_cpu_mem_usage=True).eval().cuda()
tokenizer = AutoTokenizer.from_pretrained(path, trust_remote_code=True, use_fast=False)

IMAGENET_MEAN = (0.485, 0.456, 0.406)
IMAGENET_STD = (0.229, 0.224, 0.225)

def build_transform(input_size):
    MEAN, STD = IMAGENET_MEAN, IMAGENET_STD
    transform = T.Compose([
        T.Lambda(lambda img: img.convert('RGB') if img.mode != 'RGB' else img),
        T.Resize((input_size, input_size), interpolation=InterpolationMode.BICUBIC),
        T.ToTensor(),
        T.Normalize(mean=MEAN, std=STD)
    ])
    return transform

def find_closest_aspect_ratio(aspect_ratio, target_ratios, width, height, image_size):
    best_ratio_diff = float('inf')
    best_ratio = (1, 1)
    area = width * height
    for ratio in target_ratios:
        target_aspect_ratio = ratio[0] / ratio[1]
        ratio_diff = abs(aspect_ratio - target_aspect_ratio)
        if ratio_diff < best_ratio_diff:
            best_ratio_diff = ratio_diff
            best_ratio = ratio
        elif ratio_diff == best_ratio_diff:
            if area > 0.5 * image_size * image_size * ratio[0] * ratio[1]:
                best_ratio = ratio
    return best_ratio

def dynamic_preprocess(image, min_num=1, max_num=12, image_size=448, use_thumbnail=False):
    orig_width, orig_height = image.size
    aspect_ratio = orig_width / orig_height

    # enumerate candidate (columns, rows) grids with min_num..max_num tiles
    target_ratios = set(
        (i, j) for n in range(min_num, max_num + 1) for i in range(1, n + 1) for j in range(1, n + 1) if
        i * j <= max_num and i * j >= min_num)
    target_ratios = sorted(target_ratios, key=lambda x: x[0] * x[1])

    # find the candidate grid whose aspect ratio is closest to the image's
    target_aspect_ratio = find_closest_aspect_ratio(
        aspect_ratio, target_ratios, orig_width, orig_height, image_size)

    # calculate the target width and height
    target_width = image_size * target_aspect_ratio[0]
    target_height = image_size * target_aspect_ratio[1]
    blocks = target_aspect_ratio[0] * target_aspect_ratio[1]

    # resize the image
    resized_img = image.resize((target_width, target_height))
    processed_images = []
    for i in range(blocks):
        box = (
            (i % (target_width // image_size)) * image_size,
            (i // (target_width // image_size)) * image_size,
            ((i % (target_width // image_size)) + 1) * image_size,
            ((i // (target_width // image_size)) + 1) * image_size
        )
        # split the image
        split_img = resized_img.crop(box)
        processed_images.append(split_img)
    assert len(processed_images) == blocks
    if use_thumbnail and len(processed_images) != 1:
        thumbnail_img = image.resize((image_size, image_size))
        processed_images.append(thumbnail_img)
    return processed_images, target_aspect_ratio

def load_image(image_file, input_size=448, max_num=12):
    image = Image.open(image_file).convert('RGB')
    transform = build_transform(input_size=input_size)
    images, target_aspect_ratio = dynamic_preprocess(image, image_size=input_size, use_thumbnail=True, max_num=max_num)
    pixel_values = [transform(image) for image in images]
    pixel_values = torch.stack(pixel_values)
    return pixel_values, target_aspect_ratio

pixel_values, target_aspect_ratio = load_image('./assets/image1.jpg', max_num=12)
pixel_values = pixel_values.to(torch.bfloat16).cuda()
generation_config = dict(max_new_tokens=1024, do_sample=True)

# single-image single-round conversation
question = '<image>\nPlease describe the image shortly.'
target_aspect_ratio = [target_aspect_ratio]
# Use RoPE-DHR
response = model.chat(tokenizer, pixel_values, question, generation_config, target_aspect_ratio=target_aspect_ratio)
# # Use original RoPE (omit target_aspect_ratio)
# response = model.chat(tokenizer, pixel_values, question, generation_config)
print(f'User: {question}\nAssistant: {response}')

# multi-image single-round conversation, separate images
pixel_values1, target_aspect_ratio1 = load_image('./assets/image1.jpg', max_num=12)
pixel_values1 = pixel_values1.to(torch.bfloat16).cuda()
pixel_values2, target_aspect_ratio2 = load_image('./assets/image2.jpg', max_num=12)
pixel_values2 = pixel_values2.to(torch.bfloat16).cuda()
pixel_values = torch.cat((pixel_values1, pixel_values2), dim=0)
target_aspect_ratio = [target_aspect_ratio1, target_aspect_ratio2]
num_patches_list = [pixel_values1.size(0), pixel_values2.size(0)]

question = 'Image-1: <image>\nImage-2: <image>\nWhat are the similarities and differences between these two images?'
# Use RoPE-DHR
response = model.chat(tokenizer, pixel_values, question, generation_config,
                      num_patches_list=num_patches_list, target_aspect_ratio=target_aspect_ratio)
# # Use original RoPE (omit target_aspect_ratio)
# response = model.chat(tokenizer, pixel_values, question, generation_config,
#                       num_patches_list=num_patches_list)
print(f'User: {question}\nAssistant: {response}')

```
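The tiling step in `dynamic_preprocess` can be explored without loading any image. The sketch below mirrors the grid-selection logic of the example above in pure Python; `candidate_grids`, `choose_grid`, and `count_patches` are hypothetical names, and the extra tie-break in the sort key is an assumption added only to make the candidate order deterministic.

```python
from typing import List, Tuple


def candidate_grids(min_num: int = 1, max_num: int = 12) -> List[Tuple[int, int]]:
    """All (columns, rows) grids whose tile count lies in [min_num, max_num]."""
    grids = {
        (cols, rows)
        for cols in range(1, max_num + 1)
        for rows in range(1, max_num + 1)
        if min_num <= cols * rows <= max_num
    }
    # Sort by tile count; break ties by (cols, rows) for a deterministic order.
    return sorted(grids, key=lambda g: (g[0] * g[1], g))


def choose_grid(width: int, height: int, min_num: int = 1,
                max_num: int = 12, image_size: int = 448) -> Tuple[int, int]:
    """Pick the grid whose aspect ratio is closest to width / height."""
    aspect_ratio = width / height
    area = width * height
    best_grid, best_diff = (1, 1), float("inf")
    for cols, rows in candidate_grids(min_num, max_num):
        diff = abs(aspect_ratio - cols / rows)
        if diff < best_diff:
            best_diff, best_grid = diff, (cols, rows)
        elif diff == best_diff and area > 0.5 * image_size * image_size * cols * rows:
            # On equal ratio error, prefer the larger grid for large images.
            best_grid = (cols, rows)
    return best_grid


def count_patches(width: int, height: int, use_thumbnail: bool = True) -> int:
    """Number of 448x448 inputs produced: tiles, plus a thumbnail if tiled."""
    cols, rows = choose_grid(width, height)
    tiles = cols * rows
    return tiles + 1 if use_thumbnail and tiles != 1 else tiles
```

For instance, a 1344x896 image has aspect ratio 1.5, so the 3x2 grid matches exactly and the image yields six tiles plus one thumbnail. This count is what `pixel_values.size(0)` reports per image in the multi-image example.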

|

| 174 |

+

|

| 175 |

+

## License

|

| 176 |

+

|

| 177 |

+

This project is released under the MIT License. This project uses the pre-trained internlm2-chat-1_8b as a component, which is licensed under the Apache License 2.0.

|

| 178 |

+

|

| 179 |

+

## Citation

|

| 180 |

+

|

| 181 |

+

If you find this project useful in your research, please consider citing:

|

| 182 |

+

|

| 183 |

+

```BibTeX

|

| 184 |

+

@article{liu2025comemo,

|

| 185 |

+

title={CoMemo: LVLMs Need Image Context with Image Memory},

|

| 186 |

+

author={Liu, Shi and Su, Weijie and Zhu, Xizhou and Wang, Wenhai and Dai, Jifeng},

|

| 187 |

+

journal={arXiv preprint arXiv:2506.06279},

|

| 188 |

+

year={2025}

|

| 189 |

+

}

|

| 190 |

+

```

|

added_tokens.json

ADDED

|

@@ -0,0 +1,11 @@

|

| 1 |

+

{

|

| 2 |

+

"</box>": 92552,

|

| 3 |

+

"</img>": 92545,

|

| 4 |

+

"</quad>": 92548,

|

| 5 |

+

"</ref>": 92550,

|

| 6 |

+

"<IMG_CONTEXT>": 92546,

|

| 7 |

+

"<box>": 92551,

|

| 8 |

+

"<img>": 92544,

|

| 9 |

+

"<quad>": 92547,

|

| 10 |

+

"<ref>": 92549

|

| 11 |

+

}

|
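A quick consistency check on the token IDs above, as a sketch: the nine added tokens should form one contiguous block at the end of the vocabulary, which would agree with `"vocab_size": 92553` in `config.json`. The function name `check_added_tokens` is hypothetical.

```python
from typing import Dict

ADDED_TOKENS: Dict[str, int] = {
    "</box>": 92552,
    "</img>": 92545,
    "</quad>": 92548,
    "</ref>": 92550,
    "<IMG_CONTEXT>": 92546,
    "<box>": 92551,
    "<img>": 92544,
    "<quad>": 92547,
    "<ref>": 92549,
}
VOCAB_SIZE = 92553  # llm_config.vocab_size from config.json


def check_added_tokens(added: Dict[str, int], vocab_size: int) -> bool:
    """True if the added IDs are contiguous and occupy the vocabulary tail."""
    ids = sorted(added.values())
    contiguous = ids == list(range(ids[0], ids[0] + len(ids)))
    fills_tail = ids[-1] == vocab_size - 1
    return contiguous and fills_tail
```

Here the IDs run from 92544 to 92552, so the check passes and the first added ID equals the size of the vocabulary before the additions.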

assets/CoMemo_framework.png

ADDED

|

Git LFS Details

|

assets/RoPE_DHR.png

ADDED

|

Git LFS Details

|

assets/image1.jpg

ADDED

|

assets/image2.jpg

ADDED

|

Git LFS Details

|

config.json

ADDED

|

@@ -0,0 +1,212 @@

|

| 1 |

+

{

|

| 2 |

+

"_commit_hash": null,

|

| 3 |

+

"architectures": [

|

| 4 |

+

"CoMemoChatModel"

|

| 5 |

+

],

|

| 6 |

+

"auto_map": {

|

| 7 |

+

"AutoConfig": "configuration_comemo_chat.CoMemoChatConfig",

|

| 8 |

+

"AutoModel": "modeling_comemo_chat.CoMemoChatModel",

|

| 9 |

+

"AutoModelForCausalLM": "modeling_comemo_chat.CoMemoChatModel"

|

| 10 |

+

},

|

| 11 |

+

"downsample_ratio": 0.5,

|

| 12 |

+

"dynamic_image_size": true,

|

| 13 |

+

"force_image_size": 448,

|

| 14 |

+

"llm_config": {

|

| 15 |

+

"_name_or_path": "internlm/internlm2-chat-1_8b",

|

| 16 |

+

"add_cross_attention": false,

|

| 17 |

+

"architectures": [

|

| 18 |

+

"InternLM2ForCausalLM"

|

| 19 |

+

],

|

| 20 |

+

"attn_implementation": "flash_attention_2",

|

| 21 |

+

"auto_map": {

|

| 22 |

+

"AutoConfig": "configuration_internlm2.InternLM2Config",

|

| 23 |

+

"AutoModel": "modeling_internlm2.InternLM2ForCausalLM",

|

| 24 |

+

"AutoModelForCausalLM": "modeling_internlm2.InternLM2ForCausalLM"

|

| 25 |

+

},

|

| 26 |

+

"bad_words_ids": null,

|

| 27 |

+

"begin_suppress_tokens": null,

|

| 28 |

+

"bias": false,

|

| 29 |

+

"bos_token_id": 1,

|

| 30 |

+

"chunk_size_feed_forward": 0,

|

| 31 |

+

"cross_attention_hidden_size": null,

|

| 32 |

+

"decoder_start_token_id": null,

|

| 33 |

+

"diversity_penalty": 0.0,

|

| 34 |

+

"do_sample": false,

|

| 35 |

+

"early_stopping": false,

|

| 36 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 37 |

+

"eos_token_id": 2,

|

| 38 |

+

"exponential_decay_length_penalty": null,

|

| 39 |

+

"finetuning_task": null,

|

| 40 |

+

"forced_bos_token_id": null,

|

| 41 |

+

"forced_eos_token_id": null,

|

| 42 |

+

"hidden_act": "silu",

|

| 43 |

+

"hidden_size": 2048,

|

| 44 |

+

"id2label": {

|

| 45 |

+

"0": "LABEL_0",

|

| 46 |

+

"1": "LABEL_1"

|

| 47 |

+

},

|

| 48 |

+

"initializer_range": 0.02,

|

| 49 |

+

"intermediate_size": 8192,

|

| 50 |

+

"is_decoder": false,

|

| 51 |

+

"is_encoder_decoder": false,

|

| 52 |

+

"label2id": {

|

| 53 |

+

"LABEL_0": 0,

|

| 54 |

+

"LABEL_1": 1

|

| 55 |

+

},

|

| 56 |

+

"length_penalty": 1.0,

|

| 57 |

+

"max_length": 20,

|

| 58 |

+

"max_position_embeddings": 32768,

|

| 59 |

+

"min_length": 0,

|

| 60 |

+

"model_type": "internlm2",

|

| 61 |

+

"no_repeat_ngram_size": 0,

|

| 62 |

+

"num_attention_heads": 16,

|

| 63 |

+

"num_beam_groups": 1,

|

| 64 |

+

"num_beams": 1,

|

| 65 |

+

"num_hidden_layers": 24,

|

| 66 |

+

"num_key_value_heads": 8,

|

| 67 |

+

"num_return_sequences": 1,

|

| 68 |

+

"output_attentions": false,

|

| 69 |

+

"output_hidden_states": false,

|

| 70 |

+

"output_scores": false,

|

| 71 |

+

"pad_token_id": 2,

|

| 72 |

+

"prefix": null,

|

| 73 |

+

"problem_type": null,

|

| 74 |

+

"pruned_heads": {},

|

| 75 |

+

"remove_invalid_values": false,

|

| 76 |

+

"repetition_penalty": 1.0,

|

| 77 |

+

"return_dict": true,

|

| 78 |

+

"return_dict_in_generate": false,

|

| 79 |

+

"rms_norm_eps": 1e-05,

|

| 80 |

+

"rope_scaling": null,

|

| 81 |

+

"rope_theta": 1000000,

|

| 82 |

+

"sep_token_id": null,

|

| 83 |

+

"suppress_tokens": null,

|

| 84 |

+

"task_specific_params": null,

|

| 85 |

+

"temperature": 1.0,

|

| 86 |

+

"tf_legacy_loss": false,

|

| 87 |

+

"tie_encoder_decoder": false,

|

| 88 |

+

"tie_word_embeddings": false,

|

| 89 |

+

"tokenizer_class": null,

|

| 90 |

+

"top_k": 50,

|

| 91 |

+

"top_p": 1.0,

|

| 92 |

+

"torch_dtype": "bfloat16",

|

| 93 |

+

"torchscript": false,

|

| 94 |

+

"transformers_version": "4.37.2",

|

| 95 |

+

"typical_p": 1.0,

|

| 96 |

+

"use_bfloat16": false,

|

| 97 |

+

"use_cache": false,

|

| 98 |

+

"vocab_size": 92553

|

| 99 |

+

},

|

| 100 |

+

"max_dynamic_patch": 12,

|

| 101 |

+

"min_dynamic_patch": 1,

|

| 102 |

+

"model_type": "comemo_chat",

|

| 103 |

+

"no_perceiver": false,

|

| 104 |

+

"pad2square": false,

|

| 105 |

+

"ps_version": "v2",

|

| 106 |

+

"select_layer": -1,

|

| 107 |

+

"template": "internlm2-chat",

|

| 108 |

+

"torch_dtype": "bfloat16",

|

| 109 |

+

"transformers_version": null,

|

| 110 |

+

"use_alibi": false,

|

| 111 |

+

"use_backbone_lora": 0,

|

| 112 |

+

"use_llm_lora": 0,

|

| 113 |

+

"use_mask": false,

|

| 114 |

+

"use_temporal": false,

|

| 115 |

+

"use_thumbnail": true,

|

| 116 |

+

"vision_config": {

|

| 117 |

+

"_name_or_path": "",

|

| 118 |

+

"add_cross_attention": false,

|

| 119 |

+

"architectures": [

|

| 120 |

+

"InternVisionModel"

|

| 121 |

+

],

|

| 122 |

+

"attention_dropout": 0.0,

|

| 123 |

+

"auto_map": {

|

| 124 |

+

"AutoConfig": "configuration_intern_vit.InternVisionConfig",

|

| 125 |

+

"AutoModel": "modeling_intern_vit.InternVisionModel"

|

| 126 |

+

},

|

| 127 |

+

"bad_words_ids": null,

|

| 128 |

+

"begin_suppress_tokens": null,

|

| 129 |

+

"bos_token_id": null,

|

| 130 |

+

"chunk_size_feed_forward": 0,

|

| 131 |

+

"cross_attention_hidden_size": null,

|

| 132 |

+

"decoder_start_token_id": null,

|

| 133 |

+

"diversity_penalty": 0.0,

|

| 134 |

+

"do_sample": false,

|

| 135 |

+

"drop_path_rate": 0.1,

|

| 136 |

+

"dropout": 0.0,

|

| 137 |

+

"early_stopping": false,

|

| 138 |

+

"encoder_no_repeat_ngram_size": 0,

|

| 139 |

+

"eos_token_id": null,

|

| 140 |

+

"exponential_decay_length_penalty": null,

|

| 141 |

+

"finetuning_task": null,

|

| 142 |

+

"forced_bos_token_id": null,

|

| 143 |

+

"forced_eos_token_id": null,

|

| 144 |

+

"hidden_act": "gelu",

|

| 145 |

+

"hidden_size": 1024,

|

| 146 |

+

"id2label": {

|

| 147 |

+

"0": "LABEL_0",

|

| 148 |

+

"1": "LABEL_1"

|

| 149 |

+

},

|

| 150 |

+

"image_size": 448,

|

| 151 |

+

"initializer_factor": 1.0,

|

| 152 |

+

"initializer_range": 0.02,

|

| 153 |

+

"intermediate_size": 4096,

|

| 154 |

+

"is_decoder": false,

|

| 155 |

+

"is_encoder_decoder": false,

|

| 156 |

+

"label2id": {

|

| 157 |

+

"LABEL_0": 0,

|

| 158 |

+

"LABEL_1": 1

|

| 159 |

+

},

|

| 160 |

+

"layer_norm_eps": 1e-06,

|

| 161 |

+

"length_penalty": 1.0,

|

| 162 |

+

"max_length": 20,

|

| 163 |

+

"min_length": 0,

|

| 164 |

+

"model_type": "intern_vit_6b",

|

| 165 |

+

"no_repeat_ngram_size": 0,

|

| 166 |

+

"norm_type": "layer_norm",

|

| 167 |

+

"num_attention_heads": 16,

|

| 168 |

+

"num_beam_groups": 1,

|

| 169 |

+

"num_beams": 1,

|

| 170 |

+

"num_channels": 3,

|

| 171 |

+

"num_hidden_layers": 24,

|

| 172 |

+

"num_return_sequences": 1,

|

| 173 |

+

"output_attentions": false,

|

| 174 |

+

"output_hidden_states": false,

|

| 175 |

+

"output_scores": false,

|

| 176 |

+

"pad_token_id": null,

|

| 177 |

+

"patch_size": 14,

|

| 178 |

+

"prefix": null,

|

| 179 |

+

"problem_type": null,

|

| 180 |

+

"pruned_heads": {},

|

| 181 |

+

"qk_normalization": false,

|

| 182 |

+

"qkv_bias": true,

|

| 183 |

+

"remove_invalid_values": false,

|

| 184 |

+

"repetition_penalty": 1.0,

|

| 185 |

+

"return_dict": true,

|

| 186 |

+

"return_dict_in_generate": false,

|

| 187 |

+

"sep_token_id": null,

|

| 188 |

+

"suppress_tokens": null,

|

| 189 |

+

"task_specific_params": null,

|

| 190 |

+

"temperature": 1.0,

|

| 191 |

+

"tf_legacy_loss": false,

|

| 192 |

+

"tie_encoder_decoder": false,

|

| 193 |

+

"tie_word_embeddings": true,

|

| 194 |

+

"tokenizer_class": null,

|

| 195 |

+

"top_k": 50,

|

| 196 |

+

"top_p": 1.0,

|

| 197 |

+

"torch_dtype": "bfloat16",

|

| 198 |

+

"torchscript": false,

|

| 199 |

+

"transformers_version": "4.37.2",

|

| 200 |

+

"typical_p": 1.0,

|

| 201 |

+

"use_bfloat16": false,

|

| 202 |

+

"use_flash_attn": true

|

| 203 |

+

},

|

| 204 |

+

"mixin_config":{

|

| 205 |

+

"mixin_every_n_layers": 4,

|

| 206 |

+

"language_dim": 2048,

|

| 207 |

+

"vision_dim": 2048,

|

| 208 |

+

"head_dim": 128,

|

| 209 |

+

"num_heads": 16,

|

| 210 |

+

"intermediate_size": 8192

|

| 211 |

+

}

|

| 212 |

+

}

|
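Some of the values in this config can be related by simple arithmetic. The sketch below assumes the InternVL-style pixel-shuffle rule, in which each tile contributes `(image_size // patch_size)**2 * downsample_ratio**2` visual tokens; the modeling code is not shown in this excerpt, so that formula and the function names are assumptions rather than the confirmed CoMemo implementation.

```python
def tokens_per_tile(image_size: int = 448, patch_size: int = 14,
                    downsample_ratio: float = 0.5) -> int:
    """Visual tokens per 448x448 tile under the assumed pixel-shuffle rule."""
    patches_per_side = image_size // patch_size  # 448 // 14 = 32
    return int(patches_per_side ** 2 * downsample_ratio ** 2)


def max_visual_tokens(max_dynamic_patch: int = 12, use_thumbnail: bool = True) -> int:
    """Upper bound on visual tokens for one image at the configured limits."""
    thumbnail = 1 if use_thumbnail and max_dynamic_patch > 1 else 0
    return (max_dynamic_patch + thumbnail) * tokens_per_tile()


def attention_head_dim(hidden_size: int = 2048, num_attention_heads: int = 16) -> int:
    """Per-head width implied by llm_config."""
    return hidden_size // num_attention_heads
```

Under these assumptions one tile yields 256 tokens, a fully tiled image (12 tiles plus thumbnail) yields 3328, and the implied head width of 128 matches `mixin_config.head_dim`.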

configuration_comemo_chat.py

ADDED

|

@@ -0,0 +1,102 @@

|

# --------------------------------------------------------
# InternVL
# Copyright (c) 2024 OpenGVLab
# Licensed under The MIT License [see LICENSE for details]
# --------------------------------------------------------

import copy

from transformers import AutoConfig, LlamaConfig
from transformers.configuration_utils import PretrainedConfig
from transformers.utils import logging

from .configuration_internlm2 import InternLM2Config

from .configuration_intern_vit import InternVisionConfig

from .configuration_mixin import MixinConfig

logger = logging.get_logger(__name__)


class CoMemoChatConfig(PretrainedConfig):
    model_type = 'comemo_chat'
    is_composition = True

    def __init__(
            self,
            vision_config=None,
            llm_config=None,
            mixin_config=None,
            use_backbone_lora=0,
            use_llm_lora=0,
            select_layer=-1,
            force_image_size=None,
            downsample_ratio=0.5,
            template=None,
            dynamic_image_size=False,
            use_thumbnail=False,
            ps_version='v1',
            min_dynamic_patch=1,
            max_dynamic_patch=6,
            **kwargs):
        super().__init__(**kwargs)

        if vision_config is None:
            vision_config = {'architectures': ['InternVisionModel']}
            logger.info('vision_config is None. Initializing the InternVisionConfig with default values.')

        if llm_config is None:
            llm_config = {'architectures': ['InternLM2ForCausalLM']}
            logger.info('llm_config is None. Initializing the InternLM2Config with default values.')

        self.vision_config = InternVisionConfig(**vision_config)
        self.mixin_config = MixinConfig(**mixin_config)  # NOTE: raises TypeError if mixin_config is None
        if llm_config.get('architectures')[0] == 'LlamaForCausalLM':
            self.llm_config = LlamaConfig(**llm_config)
        elif llm_config.get('architectures')[0] == 'InternLM2ForCausalLM':
            self.llm_config = InternLM2Config(**llm_config)
        else:
            raise ValueError('Unsupported architecture: {}'.format(llm_config.get('architectures')[0]))
        self.use_backbone_lora = use_backbone_lora
        self.use_llm_lora = use_llm_lora
        self.select_layer = select_layer
        self.force_image_size = force_image_size
        self.downsample_ratio = downsample_ratio
        self.template = template
        self.dynamic_image_size = dynamic_image_size
        self.use_thumbnail = use_thumbnail
        self.ps_version = ps_version  # pixel shuffle version
        self.min_dynamic_patch = min_dynamic_patch
        self.max_dynamic_patch = max_dynamic_patch

        logger.info(f'vision_select_layer: {self.select_layer}')
        logger.info(f'ps_version: {self.ps_version}')
        logger.info(f'min_dynamic_patch: {self.min_dynamic_patch}')
        logger.info(f'max_dynamic_patch: {self.max_dynamic_patch}')

    def to_dict(self):
        """
        Serializes this instance to a Python dictionary. Override the default [`~PretrainedConfig.to_dict`].

        Returns:
            `Dict[str, Any]`: Dictionary of all the attributes that make up this configuration instance.
        """
        output = copy.deepcopy(self.__dict__)
        output['vision_config'] = self.vision_config.to_dict()
        output['llm_config'] = self.llm_config.to_dict()
        output['mixin_config'] = self.mixin_config.to_dict()
        output['model_type'] = self.__class__.model_type
        output['use_backbone_lora'] = self.use_backbone_lora
        output['use_llm_lora'] = self.use_llm_lora
        output['select_layer'] = self.select_layer
        output['force_image_size'] = self.force_image_size
        output['downsample_ratio'] = self.downsample_ratio
        output['template'] = self.template
        output['dynamic_image_size'] = self.dynamic_image_size
        output['use_thumbnail'] = self.use_thumbnail
        output['ps_version'] = self.ps_version
        output['min_dynamic_patch'] = self.min_dynamic_patch
        output['max_dynamic_patch'] = self.max_dynamic_patch

        return output

|
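The `to_dict` override above copies the instance attributes and then replaces each nested config object with its own dictionary, so the result contains only plain, serializable values. The following is a minimal sketch of that pattern. `SubConfig`, `CompositeConfig`, and the `composite_demo` model type are hypothetical stand-ins written for illustration; they are not classes from this repository and do not depend on `transformers`.

```python
import copy
import json


class SubConfig:
    """Hypothetical stand-in for a nested config (e.g. the vision or LLM config)."""

    def __init__(self, hidden_size):
        self.hidden_size = hidden_size

    def to_dict(self):
        return copy.deepcopy(self.__dict__)


class CompositeConfig:
    """Hypothetical stand-in for a composite config that owns nested configs."""

    model_type = 'composite_demo'  # illustrative value, not the real model_type

    def __init__(self, vision_config, llm_config, select_layer=-1):
        self.vision_config = vision_config
        self.llm_config = llm_config
        self.select_layer = select_layer

    def to_dict(self):
        # Start from a deep copy so the caller cannot mutate the live config.
        output = copy.deepcopy(self.__dict__)
        # Replace nested config objects with plain dictionaries.
        output['vision_config'] = self.vision_config.to_dict()
        output['llm_config'] = self.llm_config.to_dict()
        # model_type is a class attribute, so it has to be added explicitly.
        output['model_type'] = self.__class__.model_type
        return output


config = CompositeConfig(SubConfig(1024), SubConfig(4096))
as_dict = config.to_dict()
serialized = json.dumps(as_dict, sort_keys=True)
```

Without the nested `to_dict()` calls, `json.dumps` would fail on the `SubConfig` objects, which is presumably why the override exists.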

configuration_intern_vit.py

ADDED

|

@@ -0,0 +1,119 @@

|

|

| 1 |

+

# --------------------------------------------------------

|

| 2 |

+

# InternVL

|

| 3 |

+

# Copyright (c) 2024 OpenGVLab

|

| 4 |

+

# Licensed under The MIT License [see LICENSE for details]

|

| 5 |

+

# --------------------------------------------------------

|

| 6 |

+

import os

|

| 7 |

+

from typing import Union

|

| 8 |

+

|

| 9 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 10 |

+

from transformers.utils import logging

|

| 11 |

+

|

| 12 |

+

logger = logging.get_logger(__name__)

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

class InternVisionConfig(PretrainedConfig):

|

| 16 |

+

r"""

|

| 17 |

+

This is the configuration class to store the configuration of an [`InternVisionModel`]. It is used to

|

| 18 |

+

instantiate a vision encoder according to the specified arguments, defining the model architecture.

|

| 19 |

+

|

| 20 |

+

Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

|

| 21 |

+

documentation from [`PretrainedConfig`] for more information.

|

| 22 |

+

|

| 23 |

+

Args:

|

| 24 |

+

num_channels (`int`, *optional*, defaults to 3):

|

| 25 |

+

Number of color channels in the input images (e.g., 3 for RGB).

|

| 26 |

+

patch_size (`int`, *optional*, defaults to 14):

|

| 27 |

+

The size (resolution) of each patch.

|

| 28 |

+

image_size (`int`, *optional*, defaults to 224):

|

| 29 |

+

The size (resolution) of each image.

|

| 30 |

+

qkv_bias (`bool`, *optional*, defaults to `False`):

|

| 31 |

+

Whether to add a bias to the queries, keys and values in the self-attention layers.

|

| 32 |

+

hidden_size (`int`, *optional*, defaults to 3200):

|

| 33 |

+

Dimensionality of the encoder layers and the pooler layer.

|

| 34 |

+

num_attention_heads (`int`, *optional*, defaults to 25):

|

| 35 |

+

Number of attention heads for each attention layer in the Transformer encoder.

|

| 36 |

+

intermediate_size (`int`, *optional*, defaults to 12800):

|

| 37 |

+

Dimensionality of the "intermediate" (i.e., feed-forward) layer in the Transformer encoder.

|

| 38 |

+

qk_normalization (`bool`, *optional*, defaults to `True`):

|

| 39 |

+

Whether to normalize the queries and keys in the self-attention layers.

|

| 40 |

+

num_hidden_layers (`int`, *optional*, defaults to 48):

|

| 41 |

+

Number of hidden layers in the Transformer encoder.

|

| 42 |

+

use_flash_attn (`bool`, *optional*, defaults to `True`):

|

| 43 |

+

Whether to use flash attention mechanism.

|

| 44 |

+

hidden_act (`str` or `function`, *optional*, defaults to `"gelu"`):

|

| 45 |

+

The non-linear activation function (function or string) in the encoder and pooler. If string, `"gelu"`,

|

| 46 |

+

`"relu"`, `"selu"` and `"gelu_new"` are supported.

|

| 47 |

+

layer_norm_eps (`float`, *optional*, defaults to 1e-6):

|

| 48 |

+

The epsilon used by the layer normalization layers.

|

| 49 |

+

dropout (`float`, *optional*, defaults to 0.0):

|

| 50 |

+

The dropout probability for all fully connected layers in the embeddings, encoder, and pooler.

|

| 51 |

+

drop_path_rate (`float`, *optional*, defaults to 0.0):

|

| 52 |

+

Dropout rate for stochastic depth.

|

| 53 |

+

attention_dropout (`float`, *optional*, defaults to 0.0):

|

| 54 |

+

The dropout ratio for the attention probabilities.

|

| 55 |

+

initializer_range (`float`, *optional*, defaults to 0.02):

|

| 56 |

+

The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

|

| 57 |

+

initializer_factor (`float`, *optional*, defaults to 0.1):

|

| 58 |

+

A factor for layer scale.

|

| 59 |

+

"""

|

| 60 |

+

|

| 61 |

+

model_type = 'intern_vit_6b'

|

| 62 |

+

|

| 63 |

+

def __init__(

|

| 64 |

+

self,

|

| 65 |

+

num_channels=3,

|

| 66 |

+

patch_size=14,

|

| 67 |

+

image_size=224,

|

| 68 |

+

qkv_bias=False,

|

| 69 |

+

hidden_size=3200,

|

| 70 |

+

num_attention_heads=25,

|

| 71 |

+

intermediate_size=12800,

|

| 72 |

+

qk_normalization=True,

|

| 73 |

+

num_hidden_layers=48,

|

| 74 |

+

use_flash_attn=True,

|

| 75 |

+

hidden_act='gelu',

|

| 76 |

+

norm_type='rms_norm',

|

| 77 |

+

layer_norm_eps=1e-6,

|

| 78 |

+

dropout=0.0,

|

| 79 |

+

drop_path_rate=0.0,

|

| 80 |

+

attention_dropout=0.0,

|

| 81 |

+

initializer_range=0.02,

|

| 82 |

+

initializer_factor=0.1,

|

| 83 |

+

**kwargs,

|

| 84 |

+

):

|

| 85 |

+

super().__init__(**kwargs)

|

| 86 |

+

|

| 87 |

+

self.hidden_size = hidden_size

|

| 88 |

+

self.intermediate_size = intermediate_size

|

| 89 |

+

self.dropout = dropout

|

| 90 |

+

self.drop_path_rate = drop_path_rate

|

| 91 |

+

self.num_hidden_layers = num_hidden_layers

|

| 92 |

+

self.num_attention_heads = num_attention_heads

|

| 93 |

+

self.num_channels = num_channels

|

| 94 |

+

self.patch_size = patch_size

|

| 95 |

+

self.image_size = image_size

|

| 96 |

+

self.initializer_range = initializer_range

|

| 97 |

+

self.initializer_factor = initializer_factor

|

| 98 |

+

self.attention_dropout = attention_dropout

|

| 99 |

+

self.layer_norm_eps = layer_norm_eps

|

| 100 |

+

self.hidden_act = hidden_act

|

| 101 |

+

self.norm_type = norm_type

|

| 102 |

+

self.qkv_bias = qkv_bias

|

| 103 |

+

self.qk_normalization = qk_normalization

|

| 104 |

+

self.use_flash_attn = use_flash_attn

|

| 105 |

+

|

| 106 |

+

@classmethod

|

| 107 |

+

def from_pretrained(cls, pretrained_model_name_or_path: Union[str, os.PathLike], **kwargs) -> 'PretrainedConfig':

|

| 108 |

+

config_dict, kwargs = cls.get_config_dict(pretrained_model_name_or_path, **kwargs)

|

| 109 |

+

|

| 110 |

+

if 'vision_config' in config_dict:

|

| 111 |

+

config_dict = config_dict['vision_config']

|

| 112 |

+

|

| 113 |

+

if 'model_type' in config_dict and hasattr(cls, 'model_type') and config_dict['model_type'] != cls.model_type:

|

| 114 |

+

logger.warning(

|

| 115 |

+

f"You are using a model of type {config_dict['model_type']} to instantiate a model of type "

|

| 116 |

+

f'{cls.model_type}. This is not supported for all configurations of models and can yield errors.'

|

| 117 |

+

)

|

| 118 |

+

|

| 119 |

+

return cls.from_dict(config_dict, **kwargs)

|
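The `from_pretrained` override above accepts either a standalone vision config or a composite config that nests the vision settings under a `vision_config` key, and it warns when the stored `model_type` differs from the class's own. The following is a minimal sketch of that selection step on plain dictionaries. The helper name `select_vision_section` and the `composite_demo` model type are assumptions made for illustration; the real method obtains the dictionary through `get_config_dict` and finishes with `from_dict`.

```python
import warnings

EXPECTED_MODEL_TYPE = 'intern_vit_6b'  # matches the class attribute shown above


def select_vision_section(config_dict, expected_type=EXPECTED_MODEL_TYPE):
    """Return the vision part of a config dict (hypothetical helper)."""
    # A composite config nests the vision settings; a standalone one does not.
    if 'vision_config' in config_dict:
        config_dict = config_dict['vision_config']
    # Warn, rather than fail, when the stored type differs from the expected one.
    found = config_dict.get('model_type')
    if found is not None and found != expected_type:
        warnings.warn(
            f'Config has model_type {found!r} but {expected_type!r} was expected.'
        )
    return config_dict


composite = {
    'model_type': 'composite_demo',  # illustrative value
    'vision_config': {'model_type': 'intern_vit_6b', 'hidden_size': 3200},
}
standalone = {'model_type': 'intern_vit_6b', 'hidden_size': 3200}

from_composite = select_vision_section(composite)
from_standalone = select_vision_section(standalone)
```

Both calls return the same vision settings, so the vision encoder can be loaded from a full checkpoint directory as well as from a vision-only one.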

configuration_internlm2.py

ADDED

|

@@ -0,0 +1,150 @@

|

|

| 1 |

+

# Copyright (c) The InternLM team and The HuggingFace Inc. team. All rights reserved.

|

| 2 |

+

#

|

| 3 |

+

# This code is based on transformers/src/transformers/models/llama/configuration_llama.py

|

| 4 |

+

#

|

| 5 |

+

# Licensed under the Apache License, Version 2.0 (the "License");

|

| 6 |

+

# you may not use this file except in compliance with the License.

|

| 7 |

+

# You may obtain a copy of the License at

|

| 8 |

+

#

|

| 9 |

+

# http://www.apache.org/licenses/LICENSE-2.0

|

| 10 |

+

#

|

| 11 |

+

# Unless required by applicable law or agreed to in writing, software

|

| 12 |

+

# distributed under the License is distributed on an "AS IS" BASIS,

|

| 13 |

+

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 14 |

+

# See the License for the specific language governing permissions and

|

| 15 |

+

# limitations under the License.

|

| 16 |

+

""" InternLM2 model configuration"""

|

| 17 |

+

|

| 18 |

+

from transformers.configuration_utils import PretrainedConfig

|

| 19 |

+

from transformers.utils import logging

|

| 20 |

+

|

| 21 |

+

logger = logging.get_logger(__name__)

|

| 22 |

+

|

| 23 |

+

INTERNLM2_PRETRAINED_CONFIG_ARCHIVE_MAP = {}

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

# Modified from transformers.models.llama.configuration_llama.LlamaConfig

|

| 27 |

+

class InternLM2Config(PretrainedConfig):

|

| 28 |

+

r"""

|

| 29 |

+

This is the configuration class to store the configuration of an [`InternLM2Model`]. It is used to instantiate

|

| 30 |

+

an InternLM2 model according to the specified arguments, defining the model architecture. Instantiating a

|

| 31 |

+

configuration with the defaults will yield a similar configuration to that of the InternLM2-7B.

|

| 32 |

+

|

| 33 |

+

Configuration objects inherit from [`PretrainedConfig`] and can be used to control the model outputs. Read the

|

| 34 |

+

documentation from [`PretrainedConfig`] for more information.

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

Args:

|

| 38 |

+

vocab_size (`int`, *optional*, defaults to 103168):

|

| 39 |

+

Vocabulary size of the InternLM2 model. Defines the number of different tokens that can be represented by the

|

| 40 |

+

`input_ids` passed when calling [`InternLM2Model`].

|

| 41 |

+

hidden_size (`int`, *optional*, defaults to 4096):

|

| 42 |

+

Dimension of the hidden representations.

|

| 43 |

+

intermediate_size (`int`, *optional*, defaults to 11008):

|

| 44 |

+

Dimension of the MLP representations.

|

| 45 |

+

num_hidden_layers (`int`, *optional*, defaults to 32):

|

| 46 |

+

Number of hidden layers in the Transformer decoder.

|

| 47 |

+

num_attention_heads (`int`, *optional*, defaults to 32):

|

| 48 |

+

Number of attention heads for each attention layer in the Transformer decoder.

|

| 49 |

+

num_key_value_heads (`int`, *optional*):

|

| 50 |

+

This is the number of key_value heads that should be used to implement Grouped Query Attention. If

|

| 51 |

+

`num_key_value_heads=num_attention_heads`, the model will use Multi Head Attention (MHA), if

|

| 52 |

+

`num_key_value_heads=1`, the model will use Multi Query Attention (MQA); otherwise GQA is used. When

|

| 53 |

+

converting a multi-head checkpoint to a GQA checkpoint, each group key and value head should be constructed

|

| 54 |

+

by mean-pooling all the original heads within that group. For more details, check out [this

|

| 55 |

+

paper](https://arxiv.org/pdf/2305.13245.pdf). If it is not specified, will default to

|

| 56 |

+

`num_attention_heads`.

|

| 57 |

+

hidden_act (`str` or `function`, *optional*, defaults to `"silu"`):

|

| 58 |

+

The non-linear activation function (function or string) in the decoder.

|

| 59 |

+

max_position_embeddings (`int`, *optional*, defaults to 2048):

|

| 60 |

+

The maximum sequence length that this model might ever be used with. Typically set this to something large

|

| 61 |

+

just in case (e.g., 512 or 1024 or 2048).

|

| 62 |

+

initializer_range (`float`, *optional*, defaults to 0.02):

|

| 63 |

+

The standard deviation of the truncated_normal_initializer for initializing all weight matrices.

|

| 64 |

+

rms_norm_eps (`float`, *optional*, defaults to 1e-6):

|

| 65 |

+

The epsilon used by the rms normalization layers.

|

| 66 |

+

use_cache (`bool`, *optional*, defaults to `True`):

|

| 67 |

+

Whether or not the model should return the last key/values attentions (not used by all models). Only

|

| 68 |

+

relevant if `config.is_decoder=True`.

|

| 69 |

+

tie_word_embeddings (`bool`, *optional*, defaults to `False`):

|

| 70 |

+

Whether to tie the input and output word embeddings.

|

| 71 |

+

Example:

|

| 72 |

+

|

| 73 |

+

"""

|

| 74 |

+

model_type = 'internlm2'

|

| 75 |

+

_auto_class = 'AutoConfig'

|

| 76 |

+

|

| 77 |

+

def __init__( # pylint: disable=W0102

|

| 78 |

+

self,

|

| 79 |

+

vocab_size=103168,

|

| 80 |

+

hidden_size=4096,

|

| 81 |

+

intermediate_size=11008,

|

| 82 |

+

num_hidden_layers=32,

|

| 83 |

+

num_attention_heads=32,

|

| 84 |

+

num_key_value_heads=None,

|

| 85 |

+

hidden_act='silu',

|

| 86 |

+

max_position_embeddings=2048,

|

| 87 |

+

initializer_range=0.02,

|

| 88 |

+

rms_norm_eps=1e-6,

|

| 89 |

+

use_cache=True,

|

| 90 |

+

pad_token_id=0,

|

| 91 |

+

bos_token_id=1,

|

| 92 |

+

eos_token_id=2,

|

| 93 |

+

tie_word_embeddings=False,

|

| 94 |

+

bias=True,

|

| 95 |

+

rope_theta=10000,

|

| 96 |

+

rope_scaling=None,

|

| 97 |

+

attn_implementation='eager',

|

| 98 |

+

**kwargs,

|

| 99 |

+

):

|

| 100 |

+

self.vocab_size = vocab_size

|

| 101 |

+

self.max_position_embeddings = max_position_embeddings

|

| 102 |

+

self.hidden_size = hidden_size

|

| 103 |

+

self.intermediate_size = intermediate_size

|

| 104 |

+

self.num_hidden_layers = num_hidden_layers

|

| 105 |

+

self.num_attention_heads = num_attention_heads

|

| 106 |

+

self.bias = bias

|

| 107 |

+

|

| 108 |

+

if num_key_value_heads is None:

|

| 109 |

+

num_key_value_heads = num_attention_heads

|

| 110 |

+

self.num_key_value_heads = num_key_value_heads

|

| 111 |

+

|

| 112 |

+

self.hidden_act = hidden_act

|

| 113 |

+

self.initializer_range = initializer_range

|

| 114 |

+

self.rms_norm_eps = rms_norm_eps

|

| 115 |

+

self.use_cache = use_cache

|

| 116 |

+

self.rope_theta = rope_theta

|

| 117 |

+

self.rope_scaling = rope_scaling

|

| 118 |

+

self._rope_scaling_validation()

|

| 119 |

+

|

| 120 |

+

self.attn_implementation = attn_implementation

|

| 121 |

+

if self.attn_implementation is None:

|

| 122 |

+

self.attn_implementation = 'eager'

|

| 123 |

+

super().__init__(

|

| 124 |

+

pad_token_id=pad_token_id,

|

| 125 |

+

bos_token_id=bos_token_id,

|

| 126 |

+

eos_token_id=eos_token_id,

|

| 127 |

+

tie_word_embeddings=tie_word_embeddings,

|

| 128 |

+

**kwargs,

|

| 129 |

+