Update README.md

README.md

@@ -1,6 +1,8 @@

---

tags:

- unsloth

base_model:

- Qwen/Qwen3-Coder-30B-A3B-Instruct

library_name: transformers

@@ -9,12 +11,17 @@ license_link: https://huggingface.co/Qwen/Qwen3-Coder-30B-A3B-Instruct/blob/main

pipeline_tag: text-generation

---

> [!NOTE]

->

>

-

<div>

|

|

|

|

|

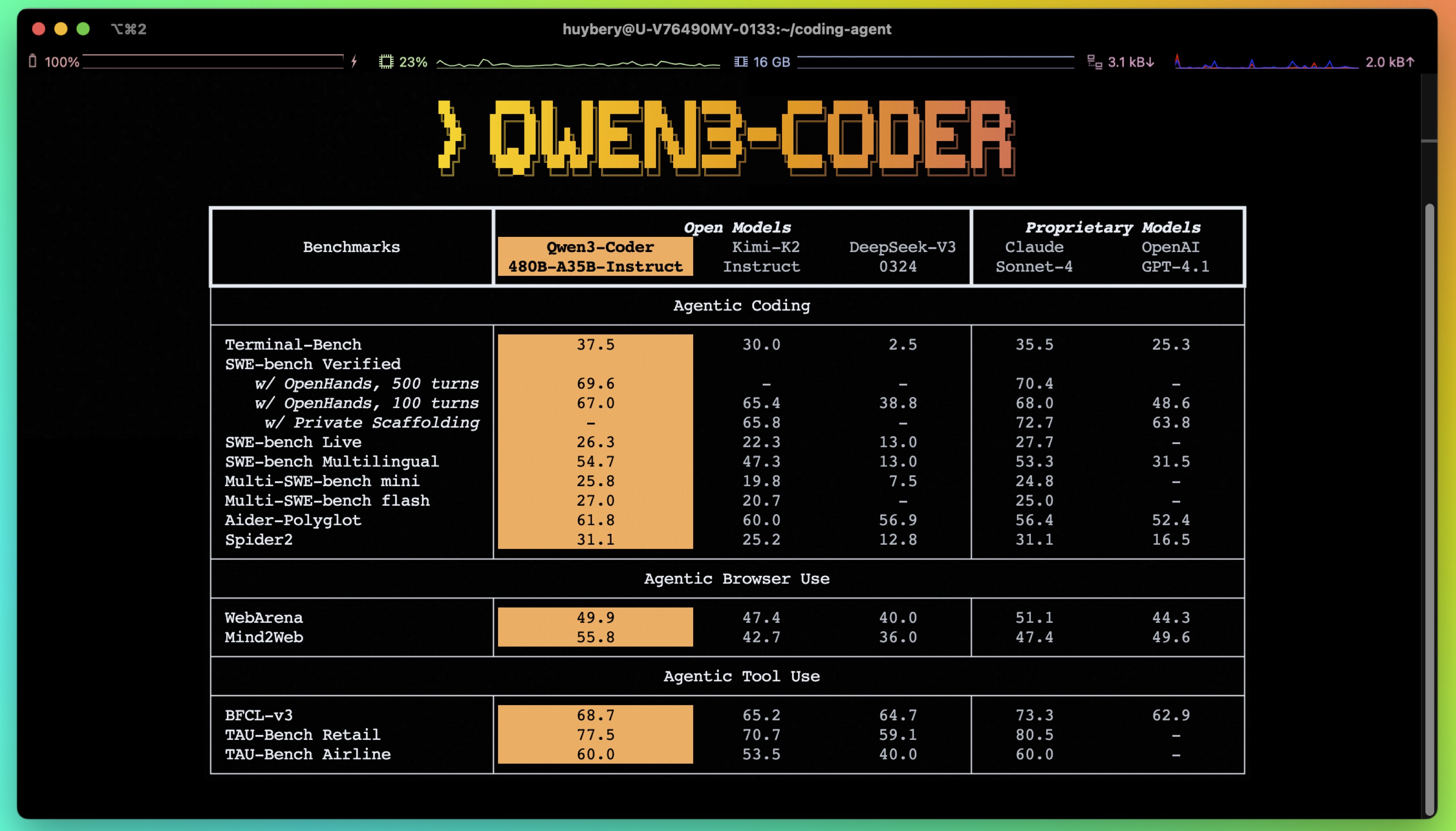

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 16 |

<p style="margin-top: 0;margin-bottom: 0;">

|

| 17 |

-

|

| 18 |

</p>

|

| 19 |

<div style="display: flex; gap: 5px; align-items: center; ">

|

| 20 |

<a href="https://github.com/unslothai/unsloth/">

|

|

@@ -23,37 +30,50 @@ pipeline_tag: text-generation

<a href="https://discord.gg/unsloth">

<img src="https://github.com/unslothai/unsloth/raw/main/images/Discord%20button.png" width="173">

</a>

-<a href="https://docs.unsloth.ai/">

<img src="https://raw.githubusercontent.com/unslothai/unsloth/refs/heads/main/images/documentation%20green%20button.png" width="143">

</a>

</div>

</div>

-

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

</a>

## Highlights

-**Qwen3-Coder** is available in multiple sizes

-- **Significant Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks.

- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using Yarn, optimized for repository-scale understanding.

-- **Agentic Coding** supporting for most

-:

-- Number of Experts:

- Number of Activated Experts: 8

- Context Length: **262,144 natively**.

@@ -75,7 +95,7 @@ The following contains a code snippet illustrating how to use the model generate

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

-model_name = "Qwen/Qwen3-

# load the tokenizer and the model

tokenizer = AutoTokenizer.from_pretrained(model_name)

@@ -156,7 +176,7 @@ messages = [{'role': 'user', 'content': 'square the number 1024'}]

completion = client.chat.completions.create(

    messages=messages,

-    model="Qwen3-

    max_tokens=65536,

    tools=tools,

)

@@ -188,4 +208,4 @@ If you find our work helpful, feel free to give us a cite.

primaryClass={cs.CL},

url={https://arxiv.org/abs/2505.09388},

}

-

```

---

tags:

- unsloth

+- qwen3

+- qwen

base_model:

- Qwen/Qwen3-Coder-30B-A3B-Instruct

library_name: transformers

pipeline_tag: text-generation

---

> [!NOTE]

+> Extends context length from 256K to 1 million

>

<div>

+<p style="margin-bottom: 0; margin-top: 0;">

+<strong>See <a href="https://huggingface.co/collections/unsloth/qwen3-680edabfb790c8c34a242f95">our collection</a> for all versions of Qwen3 including GGUF, 4-bit & 16-bit formats.</strong>

+</p>

+<p style="margin-bottom: 0;">

+<em>Learn to run Qwen3-Coder correctly - <a href="https://docs.unsloth.ai/basics/qwen3-coder">Read our Guide</a>.</em>

+</p>

<p style="margin-top: 0;margin-bottom: 0;">

+<em>See <a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0 GGUFs</a> for our quantization benchmarks.</em>

</p>

<div style="display: flex; gap: 5px; align-items: center; ">

<a href="https://github.com/unslothai/unsloth/">

<a href="https://discord.gg/unsloth">

<img src="https://github.com/unslothai/unsloth/raw/main/images/Discord%20button.png" width="173">

</a>

+<a href="https://docs.unsloth.ai/basics/qwen3-coder">

<img src="https://raw.githubusercontent.com/unslothai/unsloth/refs/heads/main/images/documentation%20green%20button.png" width="143">

</a>

</div>

+<h1 style="margin-top: 0rem;">✨ Read our Qwen3-Coder Guide <a href="https://docs.unsloth.ai/basics/qwen3-coder">here</a>!</h1>

</div>

+- Fine-tune Qwen3 (14B) for free using our Google [Colab notebook](https://docs.unsloth.ai/get-started/unsloth-notebooks)!

+- Read our Blog about Qwen3 support: [unsloth.ai/blog/qwen3](https://unsloth.ai/blog/qwen3)

+- View the rest of our notebooks in our [docs here](https://docs.unsloth.ai/get-started/unsloth-notebooks).

+- Run & export your fine-tuned model to Ollama, llama.cpp or HF.

+| Unsloth supports | Free Notebooks | Performance | Memory use |

+|-----------------|--------------------------------------------------------------------------------------------------------------------------|-------------|----------|

+| **Qwen3 (14B)** | [▶️ Start on Colab](https://docs.unsloth.ai/get-started/unsloth-notebooks) | 3x faster | 70% less |

+| **GRPO with Qwen3 (8B)** | [▶️ Start on Colab](https://docs.unsloth.ai/get-started/unsloth-notebooks) | 3x faster | 80% less |

+| **Llama-3.2 (3B)** | [▶️ Start on Colab](https://colab.research.google.com/github/unslothai/notebooks/blob/main/nb/Llama3.2_(1B_and_3B)-Conversational.ipynb) | 2.4x faster | 58% less |

+| **Llama-3.2 (11B vision)** | [▶️ Start on Colab](https://colab.research.google.com/github/unslothai/notebooks/blob/main/nb/Llama3.2_(11B)-Vision.ipynb) | 2x faster | 60% less |

+| **Qwen2.5 (7B)** | [▶️ Start on Colab](https://colab.research.google.com/github/unslothai/notebooks/blob/main/nb/Qwen2.5_(7B)-Alpaca.ipynb) | 2x faster | 60% less |

+

+# Qwen3-Coder-480B-A35B-Instruct

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

</a>

## Highlights

+Today, we're announcing **Qwen3-Coder**, our most agentic code model to date. **Qwen3-Coder** is available in multiple sizes, but we're excited to introduce its most powerful variant first: **Qwen3-Coder-480B-A35B-Instruct**, featuring the following key enhancements:

+- **Significant Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks, achieving results comparable to Claude Sonnet.

- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using Yarn, optimized for repository-scale understanding.

+- **Agentic Coding** supporting most platforms, such as **Qwen Code** and **CLINE**, featuring a specially designed function call format.

+

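The long-context bullet (and the updated note about going from 256K to 1 million) rests on simple arithmetic: YaRN rescales the rotary position embeddings by a factor equal to the target length divided by the native length. A minimal sketch, assuming "1M" means 2^20 = 1,048,576 tokens. The helper `yarn_factor` is hypothetical, and the `rope_scaling` keys (`rope_type`, `factor`, `original_max_position_embeddings`) follow the YaRN format read by Hugging Face transformers; whether this checkpoint ships or recommends exactly these values is an assumption the diff does not state.

```python
# Hypothetical helper, not taken from Qwen or Unsloth code.
NATIVE_CONTEXT = 262_144    # "Context Length: 262,144 natively" (256K)
TARGET_CONTEXT = 1_048_576  # assumption: "1M" read as 2**20 tokens


def yarn_factor(native: int, target: int) -> float:
    """Return the RoPE scaling factor YaRN needs to reach `target` positions."""
    if target <= native:
        return 1.0  # no extension needed
    return target / native


factor = yarn_factor(NATIVE_CONTEXT, TARGET_CONTEXT)

# Key names follow the rope_scaling format that transformers reads for YaRN;
# the values recommended for this particular checkpoint are an assumption.
rope_scaling = {
    "rope_type": "yarn",
    "factor": factor,
    "original_max_position_embeddings": NATIVE_CONTEXT,
}

print(rope_scaling)
print(int(NATIVE_CONTEXT * factor))  # extended context length in tokens
```

Read as 1,000,000 tokens instead, `yarn_factor(262_144, 1_000_000)` gives about 3.81, so a factor of 4 covers either reading.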
## Model Overview

+**Qwen3-Coder-480B-A35B-Instruct** has the following features:

- Type: Causal Language Models

- Training Stage: Pretraining & Post-training

+- Number of Parameters: 480B in total and 35B activated

+- Number of Layers: 62

+- Number of Attention Heads (GQA): 96 for Q and 8 for KV

+- Number of Experts: 160

- Number of Activated Experts: 8

- Context Length: **262,144 natively**.

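The Model Overview numbers can be cross-checked with a few lines of arithmetic. This sketch only restates the listed figures (variable names are illustrative): it computes how many query heads share each key/value head under grouped-query attention (GQA), the resulting KV-cache saving relative to full multi-head attention (assuming equal head dimensions), and the share of experts and of total parameters used per token.

```python
# Numbers copied from the Model Overview list; variable names are illustrative.
TOTAL_PARAMS_B = 480   # parameters in total, in billions
ACTIVE_PARAMS_B = 35   # parameters activated per token, in billions
NUM_EXPERTS = 160
ACTIVE_EXPERTS = 8
Q_HEADS = 96
KV_HEADS = 8

# GQA: query heads are split into equal groups, one group per key/value head.
assert Q_HEADS % KV_HEADS == 0
queries_per_kv_head = Q_HEADS // KV_HEADS

# Compared with full multi-head attention (96 KV heads), the KV cache shrinks
# by the same ratio, assuming every head has the same dimension.
kv_cache_reduction = Q_HEADS / KV_HEADS

# MoE routing: share of experts, and of all weights, used for one token.
expert_fraction = ACTIVE_EXPERTS / NUM_EXPERTS
param_fraction = ACTIVE_PARAMS_B / TOTAL_PARAMS_B

print(f"query heads per KV head: {queries_per_kv_head}")
print(f"KV cache vs. full MHA: 1/{kv_cache_reduction:.0f}")
print(f"experts active per token: {expert_fraction:.1%}")
print(f"parameters active per token: {param_fraction:.1%}")
```

The parameter share (about 7.3%) exceeds the expert share (5.0%), which is what one would expect if components such as attention and embeddings run for every token; the card itself does not give that breakdown.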

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

+model_name = "Qwen/Qwen3-Coder-480B-A35B-Instruct"

# load the tokenizer and the model

tokenizer = AutoTokenizer.from_pretrained(model_name)

completion = client.chat.completions.create(

    messages=messages,

+    model="Qwen3-480B-A35B-Instruct",

    max_tokens=65536,

    tools=tools,

)

primaryClass={cs.CL},

url={https://arxiv.org/abs/2505.09388},

}

+

```
