Upload folder using huggingface_hub

- .gitattributes +1 -0
- LICENSE +202 -0
- README.md +249 -0
- added_tokens.json +28 -0
- chat_template.jinja +99 -0
- config.json +333 -0
- generation_config.json +13 -0
- merges.txt +0 -0
- model-00001-of-00024.safetensors +3 -0
- model-00002-of-00024.safetensors +3 -0
- model-00003-of-00024.safetensors +3 -0
- model-00004-of-00024.safetensors +3 -0
- model-00005-of-00024.safetensors +3 -0
- model-00006-of-00024.safetensors +3 -0
- model-00007-of-00024.safetensors +3 -0
- model-00008-of-00024.safetensors +3 -0
- model-00009-of-00024.safetensors +3 -0
- model-00010-of-00024.safetensors +3 -0
- model-00011-of-00024.safetensors +3 -0
- model-00012-of-00024.safetensors +3 -0
- model-00013-of-00024.safetensors +3 -0
- model-00014-of-00024.safetensors +3 -0
- model-00015-of-00024.safetensors +3 -0
- model-00016-of-00024.safetensors +3 -0
- model-00017-of-00024.safetensors +3 -0
- model-00018-of-00024.safetensors +3 -0
- model-00019-of-00024.safetensors +3 -0
- model-00020-of-00024.safetensors +3 -0
- model-00021-of-00024.safetensors +3 -0
- model-00022-of-00024.safetensors +3 -0
- model-00023-of-00024.safetensors +3 -0
- model-00024-of-00024.safetensors +3 -0
- model.safetensors.index.json +0 -0
- special_tokens_map.json +31 -0
- tokenizer.json +3 -0
- tokenizer_config.json +241 -0
- vocab.json +0 -0
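
The 24 `model-000NN-of-00024.safetensors` shards are tied together by `model.safetensors.index.json`, whose contents are not rendered in this diff. A minimal sketch of sanity-checking a sharded checkpoint, assuming the usual Hugging Face index layout (a `weight_map` object mapping each tensor name to the shard file that stores it). The index below is a made-up, truncated stand-in, not this repository's file.

```python
import json
from collections import defaultdict


def shard_names(total: int) -> list[str]:
    """Build shard filenames following the model-XXXXX-of-YYYYY.safetensors pattern."""
    return [f"model-{i:05d}-of-{total:05d}.safetensors" for i in range(1, total + 1)]


def tensors_by_shard(index_text: str) -> dict[str, list[str]]:
    """Group tensor names by the shard file the index says stores them."""
    index = json.loads(index_text)
    groups: dict[str, list[str]] = defaultdict(list)
    for tensor_name, shard_file in index["weight_map"].items():
        groups[shard_file].append(tensor_name)
    return dict(groups)


# Hypothetical, heavily truncated index: tensor names and total_size are illustrative.
example_index = json.dumps({
    "metadata": {"total_size": 0},
    "weight_map": {
        "model.embed_tokens.weight": "model-00001-of-00024.safetensors",
        "model.layers.0.self_attn.q_proj.weight": "model-00001-of-00024.safetensors",
        "lm_head.weight": "model-00024-of-00024.safetensors",
    },
})

expected = set(shard_names(24))
groups = tensors_by_shard(example_index)
# Every shard the index points at should be one of the 24 uploaded files.
missing = set(groups) - expected
print(sorted(groups), "unexpected shards:", missing)
```

Run against the real index, a non-empty `missing` set would point at a shard that the index references but that was never uploaded.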

.gitattributes

CHANGED

    @@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
     *.zip filter=lfs diff=lfs merge=lfs -text
     *.zst filter=lfs diff=lfs merge=lfs -text
     *tfevents* filter=lfs diff=lfs merge=lfs -text
    +tokenizer.json filter=lfs diff=lfs merge=lfs -text
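
Paths that match these patterns are committed as Git LFS pointer files instead of their contents. That is consistent with `tokenizer.json` and every `.safetensors` shard showing up as `+3 -0` in the file list: the repository itself only records a three-line pointer. A minimal parser sketch, assuming the Git LFS v1 pointer layout (`version`, `oid` and `size` lines, each `key value`). The digest and size below are placeholders.

```python
def parse_lfs_pointer(text: str) -> dict[str, str]:
    """Parse a Git LFS pointer file into a {key: value} dict."""
    fields: dict[str, str] = {}
    for line in text.strip().splitlines():
        # Each line is "<key> <value>", split on the first space.
        key, _, value = line.partition(" ")
        fields[key] = value
    if not fields.get("version", "").startswith("https://git-lfs.github.com/spec/"):
        raise ValueError("not a Git LFS pointer file")
    return fields


# Placeholder pointer: the digest and size are illustrative, not this commit's values.
pointer = (
    "version https://git-lfs.github.com/spec/v1\n"
    f"oid sha256:{'0' * 64}\n"
    "size 12345\n"
)

info = parse_lfs_pointer(pointer)
algorithm, _, digest = info["oid"].partition(":")
print(algorithm, len(digest), int(info["size"]))  # sha256 64 12345
```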

LICENSE

ADDED

@@ -0,0 +1,202 @@


Apache License
Version 2.0, January 2004
http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,
and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by
the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all
other entities that control, are controlled by, or are under common
control with that entity. For the purposes of this definition,
"control" means (i) the power, direct or indirect, to cause the
direction or management of such entity, whether by contract or
otherwise, or (ii) ownership of fifty percent (50%) or more of the
outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity
exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,
including but not limited to software source code, documentation
source, and configuration files.

"Object" form shall mean any form resulting from mechanical
transformation or translation of a Source form, including but
not limited to compiled object code, generated documentation,
and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or
Object form, made available under the License, as indicated by a
copyright notice that is included in or attached to the work
(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object
form, that is based on (or derived from) the Work and for which the
editorial revisions, annotations, elaborations, or other modifications
represent, as a whole, an original work of authorship. For the purposes
of this License, Derivative Works shall not include works that remain
separable from, or merely link (or bind by name) to the interfaces of,
the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including
the original version of the Work and any modifications or additions
to that Work or Derivative Works thereof, that is intentionally
submitted to Licensor for inclusion in the Work by the copyright owner
or by an individual or Legal Entity authorized to submit on behalf of
the copyright owner. For the purposes of this definition, "submitted"
means any form of electronic, verbal, or written communication sent
to the Licensor or its representatives, including but not limited to
communication on electronic mailing lists, source code control systems,
and issue tracking systems that are managed by, or on behalf of, the
Licensor for the purpose of discussing and improving the Work, but
excluding communication that is conspicuously marked or otherwise
designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity
on behalf of whom a Contribution has been received by Licensor and
subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
copyright license to reproduce, prepare Derivative Works of,
publicly display, publicly perform, sublicense, and distribute the
Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
(except as stated in this section) patent license to make, have made,
use, offer to sell, sell, import, and otherwise transfer the Work,
where such license applies only to those patent claims licensable
by such Contributor that are necessarily infringed by their
Contribution(s) alone or by combination of their Contribution(s)
with the Work to which such Contribution(s) was submitted. If You
institute patent litigation against any entity (including a
cross-claim or counterclaim in a lawsuit) alleging that the Work
or a Contribution incorporated within the Work constitutes direct
or contributory patent infringement, then any patent licenses
granted to You under this License for that Work shall terminate
as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the
Work or Derivative Works thereof in any medium, with or without
modifications, and in Source or Object form, provided that You
meet the following conditions:

(a) You must give any other recipients of the Work or
Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices
stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works
that You distribute, all copyright, patent, trademark, and
attribution notices from the Source form of the Work,
excluding those notices that do not pertain to any part of
the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its
distribution, then any Derivative Works that You distribute must
include a readable copy of the attribution notices contained
within such NOTICE file, excluding those notices that do not
pertain to any part of the Derivative Works, in at least one
of the following places: within a NOTICE text file distributed
as part of the Derivative Works; within the Source form or
documentation, if provided along with the Derivative Works; or,
within a display generated by the Derivative Works, if and
wherever such third-party notices normally appear. The contents
of the NOTICE file are for informational purposes only and
do not modify the License. You may add Your own attribution
notices within Derivative Works that You distribute, alongside
or as an addendum to the NOTICE text from the Work, provided
that such additional attribution notices cannot be construed
as modifying the License.

You may add Your own copyright statement to Your modifications and
may provide additional or different license terms and conditions
for use, reproduction, or distribution of Your modifications, or
for any such Derivative Works as a whole, provided Your use,
reproduction, and distribution of the Work otherwise complies with
the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,
any Contribution intentionally submitted for inclusion in the Work
by You to the Licensor shall be under the terms and conditions of
this License, without any additional terms or conditions.
Notwithstanding the above, nothing herein shall supersede or modify
the terms of any separate license agreement you may have executed
with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade
names, trademarks, service marks, or product names of the Licensor,
except as required for reasonable and customary use in describing the
origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or
agreed to in writing, Licensor provides the Work (and each
Contributor provides its Contributions) on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
implied, including, without limitation, any warranties or conditions
of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
PARTICULAR PURPOSE. You are solely responsible for determining the
appropriateness of using or redistributing the Work and assume any
risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,
whether in tort (including negligence), contract, or otherwise,
unless required by applicable law (such as deliberate and grossly
negligent acts) or agreed to in writing, shall any Contributor be
liable to You for damages, including any direct, indirect, special,
incidental, or consequential damages of any character arising as a
result of this License or out of the use or inability to use the
Work (including but not limited to damages for loss of goodwill,
work stoppage, computer failure or malfunction, or any and all
other commercial damages or losses), even if such Contributor
has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing
the Work or Derivative Works thereof, You may choose to offer,
and charge a fee for, acceptance of support, warranty, indemnity,
or other liability obligations and/or rights consistent with this
License. However, in accepting such obligations, You may act only
on Your own behalf and on Your sole responsibility, not on behalf
of any other Contributor, and only if You agree to indemnify,
defend, and hold each Contributor harmless for any liability
incurred by, or claims asserted against, such Contributor by reason
of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following
boilerplate notice, with the fields enclosed by brackets "[]"
replaced with your own identifying information. (Don't include
the brackets!) The text should be enclosed in the appropriate
comment syntax for the file format. We also recommend that a
file or class name and description of purpose be included on the
same "printed page" as the copyright notice for easier
identification within third-party archives.

Copyright 2024 Alibaba Cloud

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

README.md

ADDED

@@ -0,0 +1,249 @@


---
tags:
- unsloth
library_name: transformers
license: apache-2.0
license_link: https://huggingface.co/Qwen/Qwen3-235B-A22B-Instruct-2507-FP8/blob/main/LICENSE
pipeline_tag: text-generation
base_model:
- Qwen/Qwen3-235B-A22B-Instruct-2507-FP8
---

> [!NOTE]
> Includes Unsloth **chat template fixes**! <br> For `llama.cpp`, use `--jinja`
>

<div>
<p style="margin-top: 0;margin-bottom: 0;">
<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>
</p>
<div style="display: flex; gap: 5px; align-items: center; ">
<a href="https://github.com/unslothai/unsloth/">
<img src="https://github.com/unslothai/unsloth/raw/main/images/unsloth%20new%20logo.png" width="133">
</a>
<a href="https://discord.gg/unsloth">
<img src="https://github.com/unslothai/unsloth/raw/main/images/Discord%20button.png" width="173">
</a>
<a href="https://docs.unsloth.ai/">
<img src="https://raw.githubusercontent.com/unslothai/unsloth/refs/heads/main/images/documentation%20green%20button.png" width="143">
</a>
</div>
</div>

| 33 |

+

# Qwen3-235B-A22B-Instruct-2507-FP8

|

| 34 |

+

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

|

| 35 |

+

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 36 |

+

</a>

|

| 37 |

+

|

| 38 |

+

## Highlights

|

| 39 |

+

|

| 40 |

+

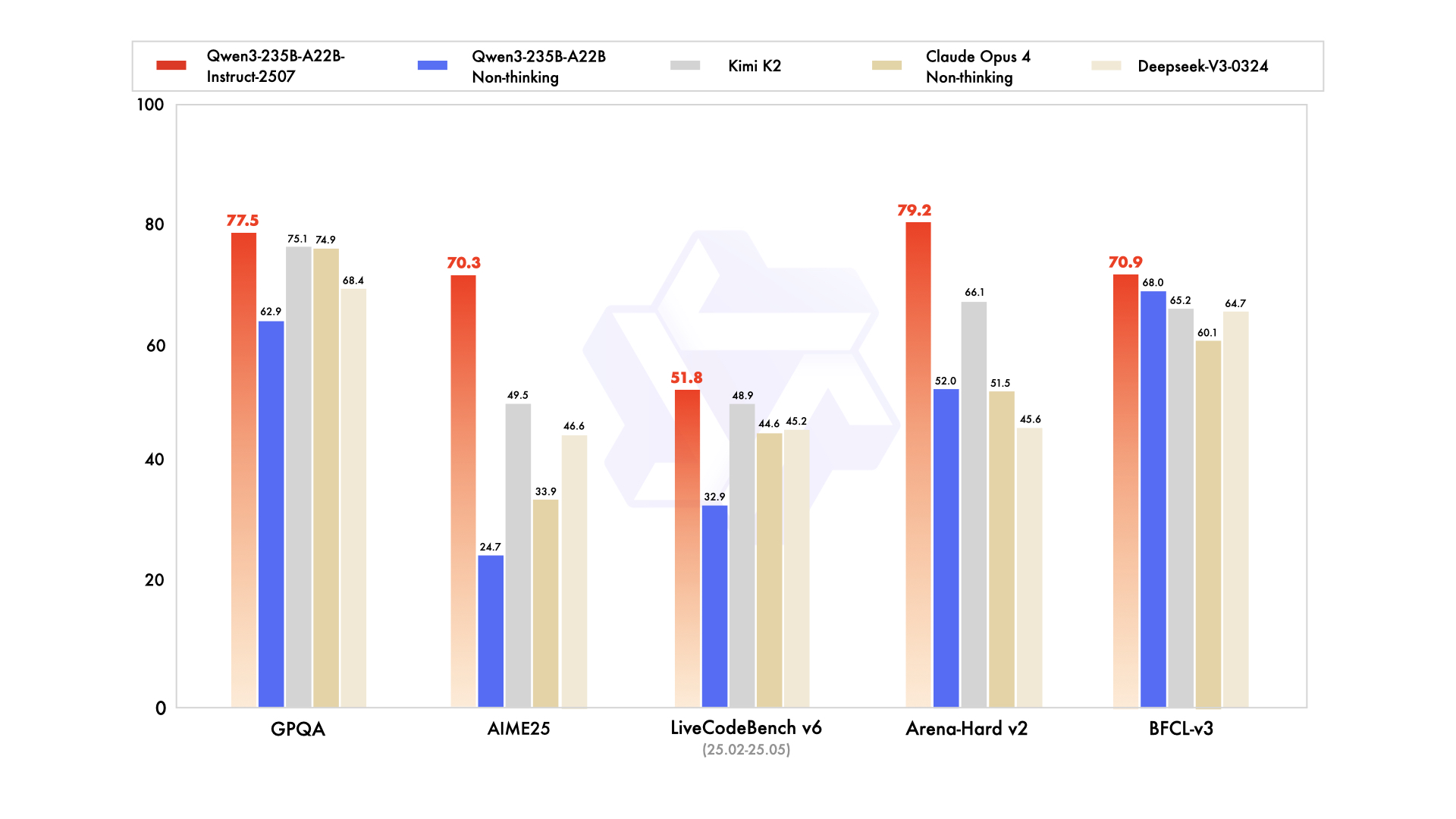

We introduce the updated version of the **Qwen3-235B-A22B-FP8 non-thinking mode**, named **Qwen3-235B-A22B-Instruct-2507-FP8**, featuring the following key enhancements:

|

- **Significant improvements** in general capabilities, including **instruction following, logical reasoning, text comprehension, mathematics, science, coding and tool usage**.
- **Substantial gains** in long-tail knowledge coverage across **multiple languages**.
- **Markedly better alignment** with user preferences in **subjective and open-ended tasks**, enabling more helpful responses and higher-quality text generation.
- **Enhanced capabilities** in **256K long-context understanding**.

## Model Overview

This repo contains the FP8 version of **Qwen3-235B-A22B-Instruct-2507**, which has the following features:
- Type: Causal Language Models
- Training Stage: Pretraining & Post-training
- Number of Parameters: 235B in total and 22B activated
- Number of Parameters (Non-Embedding): 234B
- Number of Layers: 94
- Number of Attention Heads (GQA): 64 for Q and 4 for KV
- Number of Experts: 128
- Number of Activated Experts: 8
- Context Length: **262,144 natively**.
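
The expert counts above mean that each token's feed-forward computation is handled by 8 of the 128 experts. A minimal, framework-free sketch of a generic top-k softmax router, assuming a softmax over all expert logits followed by renormalizing the kept weights. It illustrates the idea only and is not the routing code used by `transformers` or this checkpoint (that behavior is set by `config.json` and the Qwen3-MoE implementation); the random logits stand in for a learned router's output.

```python
import math
import random

NUM_EXPERTS = 128  # "Number of Experts" in the overview
TOP_K = 8          # "Number of Activated Experts"


def route(logits: list[float], k: int = TOP_K) -> list[tuple[int, float]]:
    """Return (expert_id, weight) for the k highest-probability experts."""
    # Numerically stable softmax over every expert's router logit.
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    # Keep the k most probable experts, best first.
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    # Renormalize so the selected experts' mixing weights sum to 1.
    kept_mass = sum(probs[i] for i in top)
    return [(i, probs[i] / kept_mass) for i in top]


rng = random.Random(0)
token_logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
chosen = route(token_logits)
print("experts:", [expert for expert, _ in chosen])
print(f"fraction of experts used per token: {TOP_K / NUM_EXPERTS:.2%}")  # 6.25%
print(f"query heads per KV head (GQA): {64 // 4}")  # 16
```

The same overview numbers give the GQA ratio: 64 query heads over 4 KV heads means each KV head is shared by 16 query heads, so the KV cache is 16 times smaller than it would be with one KV head per query head.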

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. Meanwhile, specifying `enable_thinking=False` is no longer required.**

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwenlm.github.io/blog/qwen3/), [GitHub](https://github.com/QwenLM/Qwen3), and [Documentation](https://qwen.readthedocs.io/en/latest/).

## Performance

| | Deepseek-V3-0324 | GPT-4o-0327 | Claude Opus 4 Non-thinking | Kimi K2 | Qwen3-235B-A22B Non-thinking | Qwen3-235B-A22B-Instruct-2507 |
| --- | --- | --- | --- | --- | --- | --- |
| **Knowledge** | | | | | | |
| MMLU-Pro | 81.2 | 79.8 | **86.6** | 81.1 | 75.2 | 83.0 |
| MMLU-Redux | 90.4 | 91.3 | **94.2** | 92.7 | 89.2 | 93.1 |
| GPQA | 68.4 | 66.9 | 74.9 | 75.1 | 62.9 | **77.5** |
| SuperGPQA | 57.3 | 51.0 | 56.5 | 57.2 | 48.2 | **62.6** |
| SimpleQA | 27.2 | 40.3 | 22.8 | 31.0 | 12.2 | **54.3** |
| CSimpleQA | 71.1 | 60.2 | 68.0 | 74.5 | 60.8 | **84.3** |
| **Reasoning** | | | | | | |
| AIME25 | 46.6 | 26.7 | 33.9 | 49.5 | 24.7 | **70.3** |
| HMMT25 | 27.5 | 7.9 | 15.9 | 38.8 | 10.0 | **55.4** |
| ARC-AGI | 9.0 | 8.8 | 30.3 | 13.3 | 4.3 | **41.8** |
| ZebraLogic | 83.4 | 52.6 | - | 89.0 | 37.7 | **95.0** |
| LiveBench 20241125 | 66.9 | 63.7 | 74.6 | **76.4** | 62.5 | 75.4 |
| **Coding** | | | | | | |
| LiveCodeBench v6 (25.02-25.05) | 45.2 | 35.8 | 44.6 | 48.9 | 32.9 | **51.8** |
| MultiPL-E | 82.2 | 82.7 | **88.5** | 85.7 | 79.3 | 87.9 |
| Aider-Polyglot | 55.1 | 45.3 | **70.7** | 59.0 | 59.6 | 57.3 |
| **Alignment** | | | | | | |
| IFEval | 82.3 | 83.9 | 87.4 | **89.8** | 83.2 | 88.7 |
| Arena-Hard v2* | 45.6 | 61.9 | 51.5 | 66.1 | 52.0 | **79.2** |
| Creative Writing v3 | 81.6 | 84.9 | 83.8 | **88.1** | 80.4 | 87.5 |
| WritingBench | 74.5 | 75.5 | 79.2 | **86.2** | 77.0 | 85.2 |
| **Agent** | | | | | | |
| BFCL-v3 | 64.7 | 66.5 | 60.1 | 65.2 | 68.0 | **70.9** |
| TAU-Retail | 49.6 | 60.3# | **81.4** | 70.7 | 65.2 | 71.3 |
| TAU-Airline | 32.0 | 42.8# | **59.6** | 53.5 | 32.0 | 44.0 |
| **Multilingualism** | | | | | | |
| MultiIF | 66.5 | 70.4 | - | 76.2 | 70.2 | **77.5** |
| MMLU-ProX | 75.8 | 76.2 | - | 74.5 | 73.2 | **79.4** |
| INCLUDE | 80.1 | **82.1** | - | 76.9 | 75.6 | 79.5 |
| PolyMATH | 32.2 | 25.5 | 30.0 | 44.8 | 27.0 | **50.2** |

*: For reproducibility, we report the win rates evaluated by GPT-4.1.

\#: Results were generated using GPT-4o-20241120, as access to the native function calling API of GPT-4o-0327 was unavailable.

## Quickstart

Support for Qwen3-MoE is included in recent releases of Hugging Face `transformers`; we advise you to use the latest version of `transformers`.

With `transformers<4.51.0`, you will encounter the following error:
```
KeyError: 'qwen3_moe'
```
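
Because this error only surfaces when the model config is loaded, it can help to check the installed version up front. A standard-library sketch; it assumes plain `X.Y.Z` release strings and treats pre-release suffixes conservatively (`4.51.0rc1` parses as `(4, 51)` and is rejected), where `packaging.version` would be the more thorough choice.

```python
from importlib import metadata

# First release that recognizes the 'qwen3_moe' model type, per this README.
MIN_TRANSFORMERS = (4, 51, 0)


def parse_release(version: str) -> tuple[int, ...]:
    """Parse the leading numeric parts of a version string, e.g. '4.51.3' -> (4, 51, 3)."""
    parts = []
    for piece in version.split("."):
        if not piece.isdigit():
            break  # stop at suffixes such as 'dev0' or '0rc1'
        parts.append(int(piece))
    return tuple(parts)


def supports_qwen3_moe(version: str) -> bool:
    return parse_release(version) >= MIN_TRANSFORMERS


def check_installed_transformers() -> None:
    """Fail early with a clear message instead of a KeyError at load time."""
    try:
        installed = metadata.version("transformers")
    except metadata.PackageNotFoundError:
        raise SystemExit("transformers is not installed") from None
    if not supports_qwen3_moe(installed):
        raise SystemExit(f"transformers {installed} is too old; install >= 4.51.0")


print(supports_qwen3_moe("4.50.3"), supports_qwen3_moe("4.51.0"))  # False True
```

Calling `check_installed_transformers()` before `from_pretrained` turns the opaque `KeyError` into an actionable message.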

The following code snippet illustrates how to use the model to generate content based on given inputs.
```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen3-235B-A22B-Instruct-2507-FP8"

# load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)

# prepare the model input
prompt = "Give me a short introduction to large language model."
messages = [
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True,
)
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# conduct text completion
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=16384
)
# keep only the newly generated tokens (drop the echoed prompt)
output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

content = tokenizer.decode(output_ids, skip_special_tokens=True)

print("content:", content)
```
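
`apply_chat_template(..., tokenize=False, add_generation_prompt=True)` turns the message list into one prompt string, and the slice `generated_ids[0][len(model_inputs.input_ids[0]):]` drops the echoed prompt so that only the reply is decoded. The sketch below imitates both steps without loading the model. It assumes a ChatML-style layout with `<|im_start|>`/`<|im_end|>` markers and no system message; the authoritative rendering is this repository's `chat_template.jinja`, which also handles system prompts and tools. The token IDs are made up.

```python
def render_chatml(messages: list[dict[str, str]], add_generation_prompt: bool = True) -> str:
    """Approximate a ChatML-style chat template for plain text messages."""
    text = "".join(
        f"<|im_start|>{m['role']}\n{m['content']}<|im_end|>\n" for m in messages
    )
    if add_generation_prompt:
        # Open an assistant turn so the model continues as the assistant.
        text += "<|im_start|>assistant\n"
    return text


def strip_prompt(generated: list[int], prompt: list[int]) -> list[int]:
    """Keep only the newly generated tokens, mirroring the Quickstart slice."""
    return generated[len(prompt):]


messages = [{"role": "user", "content": "Give me a short introduction to large language model."}]
print(render_chatml(messages))

prompt_ids = [101, 102, 103]            # hypothetical prompt token IDs
generated_ids = prompt_ids + [7, 8, 9]  # generate() returns prompt + continuation
print(strip_prompt(generated_ids, prompt_ids))  # [7, 8, 9]
```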

For deployment, you can use `sglang>=0.4.6.post1` or `vllm>=0.8.5` to create an OpenAI-compatible API endpoint:
- SGLang:
    ```shell
    python -m sglang.launch_server --model-path Qwen/Qwen3-235B-A22B-Instruct-2507-FP8 --tp 4 --context-length 262144
    ```
- vLLM:
    ```shell
    vllm serve Qwen/Qwen3-235B-A22B-Instruct-2507-FP8 --tensor-parallel-size 4 --max-model-len 262144
    ```
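
Once either server is running, any OpenAI-compatible client can talk to it. A standard-library sketch that posts to `/v1/chat/completions`, assuming the server listens on `http://localhost:8000` (vLLM's default port; SGLang defaults to 30000, so adjust `base_url`) and serves the model under its repository path. `top_k` and `min_p` are not part of the OpenAI schema; vLLM and SGLang accept them as extra request fields, but treat that as an assumption for other servers. The sampling values are the ones this README recommends under Best Practices.

```python
import json
import urllib.request

# Assumption: the server was started with the model path shown above,
# which is then also the model name it serves.
MODEL = "Qwen/Qwen3-235B-A22B-Instruct-2507-FP8"


def build_chat_request(prompt: str, model: str = MODEL) -> dict:
    """Chat-completions payload using the recommended sampling settings."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.7,
        "top_p": 0.8,
        "top_k": 20,    # extra field outside the OpenAI schema
        "min_p": 0.0,   # extra field outside the OpenAI schema
        "max_tokens": 16384,
    }


def chat(prompt: str, base_url: str = "http://localhost:8000/v1") -> str:
    """POST the payload to an OpenAI-compatible server and return the reply text."""
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(build_chat_request(prompt)).encode("utf-8"),
        headers={"Content-Type": "application/json", "Authorization": "Bearer EMPTY"},
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]


print(json.dumps(build_chat_request("Hello"), indent=2))
```

With a server up, `chat("Give me a short introduction to large language models.")` returns the reply text. The prompt plus `max_tokens` must still fit within the server's `--max-model-len` or `--context-length`.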

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**
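
Much of that memory pressure comes from the KV cache, which grows linearly with context length. A back-of-the-envelope sketch using the layer count (94) and KV-head count (4) from the Model Overview. The head dimension of 128 and the 2-byte (BF16/FP16) cache entries are assumptions not stated in this README, so check `config.json` and your server's KV-cache dtype.

```python
NUM_LAYERS = 94      # from the Model Overview
NUM_KV_HEADS = 4     # GQA: 4 KV heads shared by 64 query heads
HEAD_DIM = 128       # assumption: not stated in this README
BYTES_PER_VALUE = 2  # assumption: BF16/FP16 KV cache


def kv_cache_bytes(num_tokens: int) -> int:
    """Bytes needed to cache keys and values for one sequence of num_tokens."""
    # Factor 2: one key vector and one value vector per layer and KV head.
    per_token = 2 * NUM_LAYERS * NUM_KV_HEADS * HEAD_DIM * BYTES_PER_VALUE
    return per_token * num_tokens


for ctx in (32_768, 262_144):
    print(f"{ctx:>7} tokens -> {kv_cache_bytes(ctx) / 2**30:.3f} GiB per sequence")
```

Under these assumptions one sequence needs 5.875 GiB of KV cache at 32,768 tokens versus 47 GiB at the full 262,144 tokens, on top of the model weights; a 1-byte cache dtype would halve both figures.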

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

## Note on FP8

For convenience and performance, we have provided an `fp8`-quantized model checkpoint for Qwen3, whose name ends with `-FP8`. The quantization method is fine-grained `fp8` quantization with a block size of 128. You can find more details in the `quantization_config` field in `config.json`.

You can use the Qwen3-235B-A22B-Instruct-2507-FP8 model with several inference frameworks, including `transformers`, `sglang`, and `vllm`, just as with the original bfloat16 model.
However, please pay attention to the following known issues:
- `transformers`:
    - There are currently issues with the "fine-grained fp8" method in `transformers` for distributed inference. You may need to set the environment variable `CUDA_LAUNCH_BLOCKING=1` if multiple devices are used in inference.
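
Fine-grained (block-wise) FP8 quantization gives every block of weights its own scale instead of one scale per tensor, so an outlier only coarsens the block it sits in. A pure-Python sketch of that arithmetic over a flat list, assuming the E4M3 FP8 format (largest finite value 448) and a per-block scale of max|x| / 448. The real checkpoint stores 2-D weight tiles in actual FP8 tensors, and its exact settings live in `quantization_config`.

```python
import math

E4M3_MAX = 448.0  # assumption: E4M3 format, whose largest finite value is 448
BLOCK_SIZE = 128  # block size stated above


def round_to_e4m3(x: float) -> float:
    """Round x to the nearest value representable in FP8 E4M3 (3 mantissa bits)."""
    if x == 0.0:
        return 0.0
    _, e = math.frexp(abs(x))     # abs(x) = m * 2**e with 0.5 <= m < 1
    exponent = max(e - 1, -6)     # below 2**-6 the format is subnormal with fixed spacing
    step = 2.0 ** (exponent - 3)  # gap between neighbouring representable values
    q = min(round(abs(x) / step) * step, E4M3_MAX)
    return math.copysign(q, x)


def quantize_blocks(values: list[float], block: int = BLOCK_SIZE) -> tuple[list[float], list[float]]:
    """Scale each block so its max |value| maps to E4M3_MAX, then round to E4M3."""
    quantized: list[float] = []
    scales: list[float] = []
    for start in range(0, len(values), block):
        chunk = values[start:start + block]
        amax = max(abs(v) for v in chunk)
        scale = amax / E4M3_MAX if amax > 0 else 1.0
        scales.append(scale)
        quantized.extend(round_to_e4m3(v / scale) for v in chunk)
    return quantized, scales


def dequantize_blocks(quantized: list[float], scales: list[float], block: int = BLOCK_SIZE) -> list[float]:
    """Multiply each value by its block's scale to approximate the original."""
    return [q * scales[i // block] for i, q in enumerate(quantized)]


weights = [0.01 * i for i in range(-100, 100)] + [5.0]  # outlier lands in the second block
q, s = quantize_blocks(weights)
restored = dequantize_blocks(q, s)
print(len(s), "blocks; max abs error:", max(abs(a - b) for a, b in zip(weights, restored)))
```

Because the outlier `5.0` only sets the scale of its own block, the first block keeps the finer scale 1.0 / 448; with a single per-tensor scale every value would share the coarser 5.0 / 448.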

## Agentic Use

Qwen3 excels at tool calling. We recommend using [Qwen-Agent](https://github.com/QwenLM/Qwen-Agent) to make the best use of Qwen3's agentic abilities. Qwen-Agent encapsulates tool-calling templates and tool-calling parsers internally, greatly reducing coding complexity.

To define the available tools, you can use an MCP configuration file, use Qwen-Agent's built-in tools, or integrate other tools yourself.
```python
from qwen_agent.agents import Assistant

# Define LLM
llm_cfg = {
    # Must match the model name served by the endpoint (vLLM/SGLang default to the model path)
    'model': 'Qwen/Qwen3-235B-A22B-Instruct-2507-FP8',

    # Use a custom endpoint compatible with OpenAI API:
    'model_server': 'http://localhost:8000/v1',  # api_base
    'api_key': 'EMPTY',
}

# Define Tools
tools = [
    {'mcpServers': {  # You can specify the MCP configuration file
        'time': {
            'command': 'uvx',
            'args': ['mcp-server-time', '--local-timezone=Asia/Shanghai']
        },
        "fetch": {
            "command": "uvx",
            "args": ["mcp-server-fetch"]
        }
    }},
    'code_interpreter',  # Built-in tools
]

# Define Agent
bot = Assistant(llm=llm_cfg, function_list=tools)

# Streaming generation
messages = [{'role': 'user', 'content': 'https://qwenlm.github.io/blog/ Introduce the latest developments of Qwen'}]
for responses in bot.run(messages=messages):
    pass
print(responses)
```
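
Qwen-Agent hides the parsing step, but when calling the model directly the tool calls arrive as text between the `<tool_call>` and `</tool_call>` special tokens listed in `added_tokens.json`. A minimal parser sketch, assuming each call is a JSON object with `name` and `arguments` keys placed between those tags, the layout used by Qwen's chat templates; confirm it against this repository's `chat_template.jinja` before relying on it. The sample model output is invented.

```python
import json
import re

# Non-greedy body followed by the closing tag, so nested JSON braces are kept intact.
TOOL_CALL_RE = re.compile(r"<tool_call>\s*(\{.*?\})\s*</tool_call>", re.DOTALL)


def parse_tool_calls(text: str) -> list[dict]:
    """Extract every JSON tool call wrapped in <tool_call>...</tool_call> tags."""
    calls = []
    for payload in TOOL_CALL_RE.findall(text):
        call = json.loads(payload)
        calls.append({"name": call["name"], "arguments": call.get("arguments", {})})
    return calls


# Invented model output, used only to exercise the parser.
reply = (
    "Let me check the time.\n"
    "<tool_call>\n"
    '{"name": "get_current_time", "arguments": {"timezone": "Asia/Shanghai"}}\n'
    "</tool_call>"
)
print(parse_tool_calls(reply))
```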

## Best Practices

To achieve optimal performance, we recommend the following settings:

1. **Sampling Parameters**:
    - We suggest using `Temperature=0.7`, `TopP=0.8`, `TopK=20`, and `MinP=0`.
    - For supported frameworks, you can adjust the `presence_penalty` parameter between 0 and 2 to reduce endless repetitions. However, using a higher value may occasionally result in language mixing and a slight decrease in model performance.

2. **Adequate Output Length**: We recommend using an output length of 16,384 tokens for most queries, which is adequate for instruct models.

3. **Standardize Output Format**: We recommend using prompts to standardize model outputs when benchmarking.
    - **Math Problems**: Include "Please reason step by step, and put your final answer within \boxed{}." in the prompt.
    - **Multiple-Choice Questions**: Add the following JSON structure to the prompt to standardize responses: "Please show your choice in the `answer` field with only the choice letter, e.g., `"answer": "C"`."

| 234 |

+

|

| 235 |

+
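The snippet below is a rough sketch of applying these settings when benchmarking against an OpenAI-compatible endpoint, such as the local server in the Qwen-Agent example. It builds a request body and pulls the standardized answer back out of a response. It only builds dictionaries and parses strings, and sends nothing over the network.

- **Hypothetical names:** the helpers `build_request`, `extract_boxed` and `extract_choice` are illustrative only.
- **Assumed request fields:** passing `top_k` and `min_p` as top-level request fields is an assumption. They are not part of the standard OpenAI API, and whether a server accepts them depends on the serving framework.

```python
import json
import re

MODEL = "Qwen3-235B-A22B-Instruct-2507-FP8"

# Recommended settings from the list above. top_k / min_p are framework
# extensions (assumption: the server accepts them as extra JSON fields).
RECOMMENDED_SAMPLING = {
    "temperature": 0.7,
    "top_p": 0.8,
    "top_k": 20,
    "min_p": 0.0,
    "presence_penalty": 0.0,  # may be raised toward 2 to curb endless repetition
    "max_tokens": 16384,      # adequate output length for most queries
}

# Prompt suffixes that standardize the output format for benchmarking.
FORMAT_INSTRUCTIONS = {
    "math": "Please reason step by step, and put your final answer within \\boxed{}.",
    "mcq": 'Please show your choice in the `answer` field with only the choice letter, e.g., `"answer": "C"`.',
}


def build_request(question: str, kind: str) -> dict:
    """Hypothetical helper: a chat-completions body with the format instruction appended."""
    prompt = f"{question}\n{FORMAT_INSTRUCTIONS[kind]}"
    return {"model": MODEL, "messages": [{"role": "user", "content": prompt}], **RECOMMENDED_SAMPLING}


def extract_boxed(text: str):
    # Return the contents of the last \boxed{...}, tracking nested braces.
    start = text.rfind("\\boxed{")
    if start == -1:
        return None
    i = start + len("\\boxed{")
    depth = 1
    for j in range(i, len(text)):
        if text[j] == "{":
            depth += 1
        elif text[j] == "}":
            depth -= 1
            if depth == 0:
                return text[i:j]
    return None  # unbalanced braces


def extract_choice(text: str):
    # Return the letter from the last `"answer": "X"` field, if any.
    matches = re.findall(r'"answer"\s*:\s*"([A-Z])"', text)
    return matches[-1] if matches else None


print(json.dumps(build_request("What is 1 + 1?", "math"), indent=2))
```

Taking the *last* `\boxed{}` or `answer` field is a design choice for this sketch. It tolerates intermediate boxed expressions in step-by-step reasoning.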

### Citation

If you find our work helpful, feel free to cite us.

```
@misc{qwen3technicalreport,
      title={Qwen3 Technical Report},
      author={Qwen Team},
      year={2025},
      eprint={2505.09388},
      archivePrefix={arXiv},
      primaryClass={cs.CL},
      url={https://arxiv.org/abs/2505.09388},
}
```

added_tokens.json

ADDED

@@ -0,0 +1,28 @@

{
  "</think>": 151668,
  "</tool_call>": 151658,
  "</tool_response>": 151666,
  "<think>": 151667,
  "<tool_call>": 151657,
  "<tool_response>": 151665,
  "<|box_end|>": 151649,
  "<|box_start|>": 151648,
  "<|endoftext|>": 151643,
  "<|file_sep|>": 151664,
  "<|fim_middle|>": 151660,
  "<|fim_pad|>": 151662,
  "<|fim_prefix|>": 151659,
  "<|fim_suffix|>": 151661,
  "<|im_end|>": 151645,
  "<|im_start|>": 151644,
  "<|image_pad|>": 151655,
  "<|object_ref_end|>": 151647,
  "<|object_ref_start|>": 151646,
  "<|quad_end|>": 151651,
  "<|quad_start|>": 151650,
  "<|repo_name|>": 151663,
  "<|video_pad|>": 151656,
  "<|vision_end|>": 151653,
  "<|vision_pad|>": 151654,
  "<|vision_start|>": 151652
}
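The special-token IDs above form one contiguous block. Two of them are referenced by `config.json` in this upload: `eos_token_id` is 151645 and `pad_token_id` is 151654. The small standard-library check below makes this explicit. The mapping is copied inline rather than loaded from disk, so the sketch runs on its own.

```python
# Contents of added_tokens.json, copied inline so the sketch is self-contained.
ADDED_TOKENS = {
    "</think>": 151668, "</tool_call>": 151658, "</tool_response>": 151666,
    "<think>": 151667, "<tool_call>": 151657, "<tool_response>": 151665,
    "<|box_end|>": 151649, "<|box_start|>": 151648, "<|endoftext|>": 151643,
    "<|file_sep|>": 151664, "<|fim_middle|>": 151660, "<|fim_pad|>": 151662,
    "<|fim_prefix|>": 151659, "<|fim_suffix|>": 151661, "<|im_end|>": 151645,
    "<|im_start|>": 151644, "<|image_pad|>": 151655, "<|object_ref_end|>": 151647,
    "<|object_ref_start|>": 151646, "<|quad_end|>": 151651, "<|quad_start|>": 151650,
    "<|repo_name|>": 151663, "<|video_pad|>": 151656, "<|vision_end|>": 151653,
    "<|vision_pad|>": 151654, "<|vision_start|>": 151652,
}

# Invert the mapping so IDs from config.json can be looked up by value.
ID_TO_TOKEN = {token_id: token for token, token_id in ADDED_TOKENS.items()}

ids = sorted(ID_TO_TOKEN)
is_contiguous = ids == list(range(ids[0], ids[-1] + 1))

print(len(ids), ids[0], ids[-1], is_contiguous)  # 26 151643 151668 True
print(ID_TO_TOKEN[151645])  # <|im_end|>  (config.json eos_token_id)
print(ID_TO_TOKEN[151654])  # <|vision_pad|>  (config.json pad_token_id)
```

The EOS token is `<|im_end|>`, which is also the token that closes every turn in the chat template. Generation therefore stops at the end of the assistant's turn.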

chat_template.jinja

ADDED

@@ -0,0 +1,99 @@

{%- if tools %}
    {{- '<|im_start|>system\n' }}
    {%- if messages[0].role == 'system' %}
        {{- messages[0].content + '\n\n' }}
    {%- endif %}
    {{- "# Tools\n\nYou may call one or more functions to assist with the user query.\n\nYou are provided with function signatures within <tools></tools> XML tags:\n<tools>" }}
    {%- for tool in tools %}
        {{- "\n" }}
        {{- tool | tojson }}
    {%- endfor %}
    {{- "\n</tools>\n\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\n<tool_call>\n{\"name\": <function-name>, \"arguments\": <args-json-object>}\n</tool_call><|im_end|>\n" }}
{%- else %}
    {%- if messages[0].role == 'system' %}
        {{- '<|im_start|>system\n' + messages[0].content + '<|im_end|>\n' }}
    {%- endif %}
{%- endif %}
{%- set ns = namespace(multi_step_tool=true, last_query_index=messages|length - 1) %}
{%- for forward_message in messages %}
    {%- set index = (messages|length - 1) - loop.index0 %}
    {%- set message = messages[index] %}
    {%- set current_content = message.content if message.content is defined and message.content is not none else '' %}
    {%- set tool_start = '<tool_response>' %}
    {%- set tool_start_length = tool_start|length %}
    {%- set start_of_message = current_content[:tool_start_length] %}
    {%- set tool_end = '</tool_response>' %}
    {%- set tool_end_length = tool_end|length %}
    {%- set start_pos = (current_content|length) - tool_end_length %}
    {%- if start_pos < 0 %}
        {%- set start_pos = 0 %}
    {%- endif %}
    {%- set end_of_message = current_content[start_pos:] %}
    {%- if ns.multi_step_tool and message.role == "user" and not(start_of_message == tool_start and end_of_message == tool_end) %}
        {%- set ns.multi_step_tool = false %}
        {%- set ns.last_query_index = index %}
    {%- endif %}
{%- endfor %}
{%- for message in messages %}
    {%- if (message.role == "user") or (message.role == "system" and not loop.first) %}
        {{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>' + '\n' }}
    {%- elif message.role == "assistant" %}
        {%- set m_content = message.content if message.content is defined and message.content is not none else '' %}
        {%- set content = m_content %}
        {%- set reasoning_content = '' %}
        {%- if message.reasoning_content is defined and message.reasoning_content is not none %}
            {%- set reasoning_content = message.reasoning_content %}
        {%- else %}
            {%- if '</think>' in m_content %}
                {%- set content = (m_content.split('</think>')|last).lstrip('\n') %}
                {%- set reasoning_content = (m_content.split('</think>')|first).rstrip('\n') %}
                {%- set reasoning_content = (reasoning_content.split('<think>')|last).lstrip('\n') %}
            {%- endif %}
        {%- endif %}
        {%- if loop.index0 > ns.last_query_index %}
            {%- if loop.last or (not loop.last and (not reasoning_content.strip() == '')) %}
                {{- '<|im_start|>' + message.role + '\n<think>\n' + reasoning_content.strip('\n') + '\n</think>\n\n' + content.lstrip('\n') }}
            {%- else %}
                {{- '<|im_start|>' + message.role + '\n' + content }}
            {%- endif %}
        {%- else %}
            {{- '<|im_start|>' + message.role + '\n' + content }}
        {%- endif %}
        {%- if message.tool_calls %}
            {%- for tool_call in message.tool_calls %}
                {%- if (loop.first and content) or (not loop.first) %}
                    {{- '\n' }}
                {%- endif %}
                {%- if tool_call.function %}
                    {%- set tool_call = tool_call.function %}
                {%- endif %}
                {{- '<tool_call>\n{"name": "' }}
                {{- tool_call.name }}
                {{- '", "arguments": ' }}
                {%- if tool_call.arguments is string %}
                    {{- tool_call.arguments }}
                {%- else %}
                    {{- tool_call.arguments | tojson }}
                {%- endif %}
                {{- '}\n</tool_call>' }}
            {%- endfor %}
        {%- endif %}
        {{- '<|im_end|>\n' }}
    {%- elif message.role == "tool" %}
        {%- if loop.first or (messages[loop.index0 - 1].role != "tool") %}
            {{- '<|im_start|>user' }}
        {%- endif %}
        {{- '\n<tool_response>\n' }}
        {{- message.content }}
        {{- '\n</tool_response>' }}
        {%- if loop.last or (messages[loop.index0 + 1].role != "tool") %}
            {{- '<|im_end|>\n' }}
        {%- endif %}
    {%- endif %}
{%- endfor %}
{%- if add_generation_prompt %}
    {{- '<|im_start|>assistant\n' }}
    {%- if enable_thinking is defined and enable_thinking is false %}
        {{- '<think>\n\n</think>\n\n' }}
    {%- endif %}
{%- endif %}
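The template's control flow is easier to follow in plain Python. Below is a simplified port of its no-tools path, written for illustration only; the function name `render_chat` is hypothetical. It reproduces three behaviours of the template:

- It finds the last genuine user query, skipping user messages that only wrap a `<tool_response>`.
- It drops reasoning from earlier assistant turns.
- It groups consecutive tool results into one user turn.

It omits the `tools` system preamble and the rendering of `tool_calls`. For real use, render the actual template, for example through `tokenizer.apply_chat_template` in Transformers.

```python
def render_chat(messages, add_generation_prompt=True, enable_thinking=None):
    """Simplified port of chat_template.jinja (no `tools`, no `tool_calls`)."""
    parts = []
    n = len(messages)

    # A leading system message is emitted once, before the main loop.
    if n and messages[0]["role"] == "system":
        parts.append("<|im_start|>system\n" + messages[0]["content"] + "<|im_end|>\n")

    # Scan backwards for the last genuine user query: a user message that is
    # not just a wrapped <tool_response>...</tool_response>.
    last_query_index = n - 1
    for i in range(n - 1, -1, -1):
        text = messages[i].get("content") or ""
        if messages[i]["role"] == "user" and not (
            text.startswith("<tool_response>") and text.endswith("</tool_response>")
        ):
            last_query_index = i
            break

    for i, msg in enumerate(messages):
        role = msg["role"]
        content = msg.get("content") or ""
        if role == "user" or (role == "system" and i != 0):
            parts.append("<|im_start|>" + role + "\n" + content + "<|im_end|>\n")
        elif role == "assistant":
            reasoning = msg.get("reasoning_content")
            if reasoning is None:
                reasoning = ""
                if "</think>" in content:
                    # Split inline <think>...</think> reasoning away from the answer.
                    reasoning = content.split("</think>")[0].rstrip("\n")
                    reasoning = reasoning.split("<think>")[-1].lstrip("\n")
                    content = content.split("</think>")[-1].lstrip("\n")
            # Only turns after the last query keep a <think> block;
            # earlier turns are rendered with their reasoning dropped.
            if i > last_query_index and (i == n - 1 or reasoning.strip() != ""):
                parts.append(
                    "<|im_start|>assistant\n<think>\n" + reasoning.strip("\n")
                    + "\n</think>\n\n" + content.lstrip("\n")
                )
            else:
                parts.append("<|im_start|>assistant\n" + content)
            parts.append("<|im_end|>\n")
        elif role == "tool":
            # Consecutive tool results are grouped into a single user turn.
            if i == 0 or messages[i - 1]["role"] != "tool":
                parts.append("<|im_start|>user")
            parts.append("\n<tool_response>\n" + content + "\n</tool_response>")
            if i == n - 1 or messages[i + 1]["role"] != "tool":
                parts.append("<|im_end|>\n")

    if add_generation_prompt:
        parts.append("<|im_start|>assistant\n")
        if enable_thinking is False:
            parts.append("<think>\n\n</think>\n\n")
    return "".join(parts)


example = [
    {"role": "user", "content": "Hi"},
    {"role": "assistant", "content": "<think>\nx\n</think>\n\nHello"},
    {"role": "user", "content": "Bye"},
]
print(render_chat(example))
```

In the example, the assistant turn precedes the last user query, so its `<think>` block is stripped and only `Hello` is rendered.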

config.json

ADDED

@@ -0,0 +1,333 @@

{
  "architectures": [
    "Qwen3MoeForCausalLM"
  ],
  "attention_bias": false,
  "attention_dropout": 0.0,
  "decoder_sparse_step": 1,
  "eos_token_id": 151645,
  "head_dim": 128,
  "hidden_act": "silu",
  "hidden_size": 4096,
  "initializer_range": 0.02,
  "intermediate_size": 12288,
  "max_position_embeddings": 262144,
  "max_window_layers": 94,
  "mlp_only_layers": [],
  "model_type": "qwen3_moe",
  "moe_intermediate_size": 1536,
  "norm_topk_prob": true,
  "num_attention_heads": 64,
  "num_experts": 128,
  "num_experts_per_tok": 8,
  "num_hidden_layers": 94,
  "num_key_value_heads": 4,
  "output_router_logits": false,
  "pad_token_id": 151654,
  "quantization_config": {
    "activation_scheme": "dynamic",
    "fmt": "e4m3",
    "modules_to_not_convert": [
      "lm_head",
      "model.layers.0.input_layernorm",
      "model.layers.0.mlp.gate",
      "model.layers.0.post_attention_layernorm",
      "model.layers.1.input_layernorm",
      "model.layers.1.mlp.gate",
      "model.layers.1.post_attention_layernorm",
      "model.layers.2.input_layernorm",
      "model.layers.2.mlp.gate",
      "model.layers.2.post_attention_layernorm",
      "model.layers.3.input_layernorm",
      "model.layers.3.mlp.gate",
      "model.layers.3.post_attention_layernorm",
      "model.layers.4.input_layernorm",
      "model.layers.4.mlp.gate",
      "model.layers.4.post_attention_layernorm",
      "model.layers.5.input_layernorm",
      "model.layers.5.mlp.gate",
      "model.layers.5.post_attention_layernorm",
      "model.layers.6.input_layernorm",
      "model.layers.6.mlp.gate",
      "model.layers.6.post_attention_layernorm",
      "model.layers.7.input_layernorm",
      "model.layers.7.mlp.gate",
      "model.layers.7.post_attention_layernorm",
      "model.layers.8.input_layernorm",
      "model.layers.8.mlp.gate",
      "model.layers.8.post_attention_layernorm",
      "model.layers.9.input_layernorm",
      "model.layers.9.mlp.gate",
      "model.layers.9.post_attention_layernorm",
      "model.layers.10.input_layernorm",
      "model.layers.10.mlp.gate",
      "model.layers.10.post_attention_layernorm",
      "model.layers.11.input_layernorm",
      "model.layers.11.mlp.gate",
      "model.layers.11.post_attention_layernorm",
      "model.layers.12.input_layernorm",
      "model.layers.12.mlp.gate",
      "model.layers.12.post_attention_layernorm",
      "model.layers.13.input_layernorm",
      "model.layers.13.mlp.gate",
      "model.layers.13.post_attention_layernorm",
      "model.layers.14.input_layernorm",
      "model.layers.14.mlp.gate",
      "model.layers.14.post_attention_layernorm",
      "model.layers.15.input_layernorm",
      "model.layers.15.mlp.gate",
      "model.layers.15.post_attention_layernorm",
      "model.layers.16.input_layernorm",
      "model.layers.16.mlp.gate",
      "model.layers.16.post_attention_layernorm",
      "model.layers.17.input_layernorm",
      "model.layers.17.mlp.gate",
      "model.layers.17.post_attention_layernorm",
      "model.layers.18.input_layernorm",
      "model.layers.18.mlp.gate",
      "model.layers.18.post_attention_layernorm",
      "model.layers.19.input_layernorm",