---

library_name: pytorch

license: apache-2.0

pipeline_tag: object-detection

tags:

- real_time

- android

---

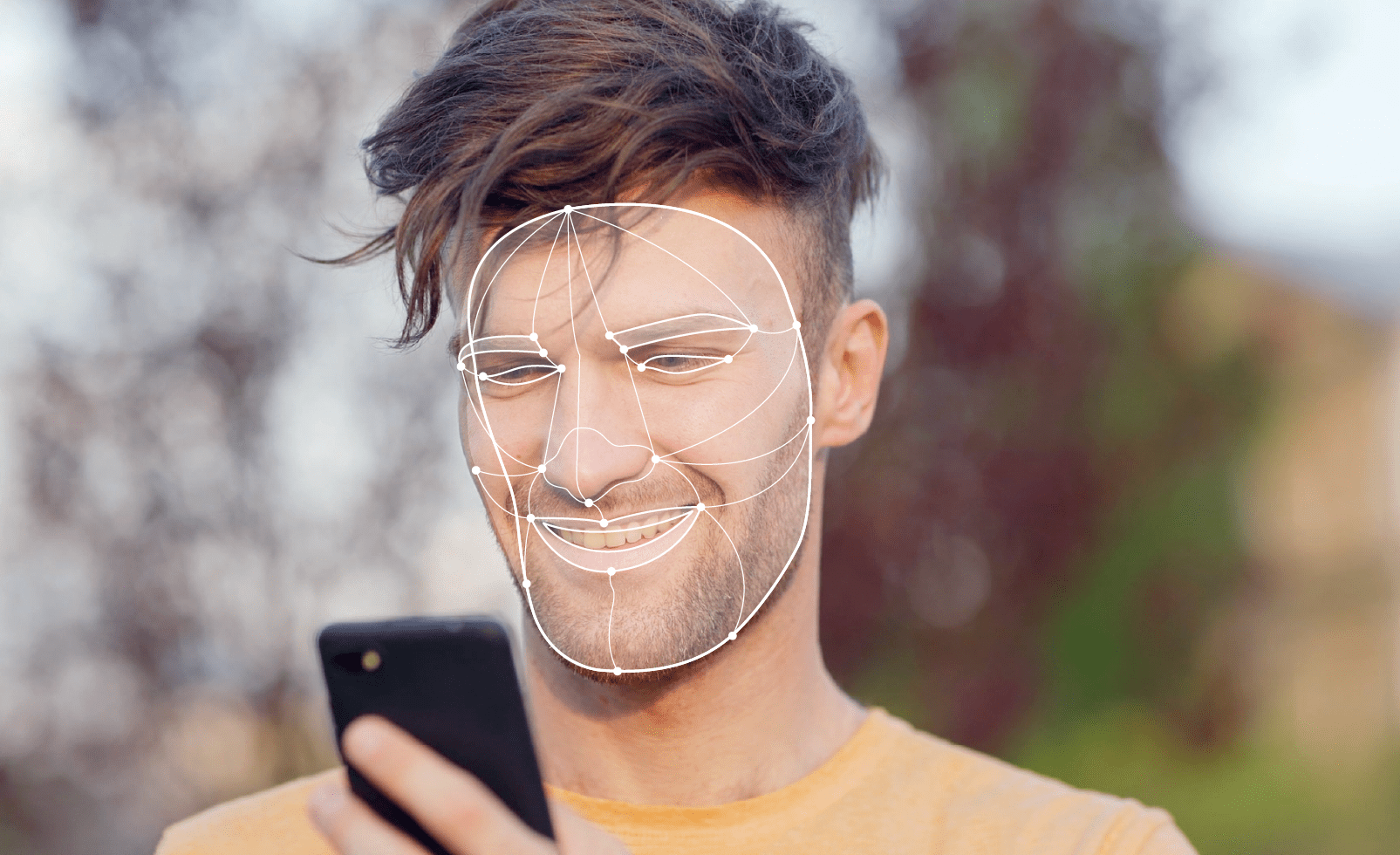

# MediaPipe-Face-Detection: Optimized for Mobile Deployment

## Detect faces and locate facial features in real-time video and image streams

Designed for sub-millisecond processing, this model predicts bounding boxes and pose skeletons (left eye, right eye, nose tip, mouth, left eye tragion, and right eye tragion) of faces in an image.

This model is an implementation of MediaPipe-Face-Detection found [here](https://github.com/zmurez/MediaPipePyTorch/).

This repository provides scripts to run MediaPipe-Face-Detection on Qualcomm® devices.

More details on model performance across various devices can be found
[here](https://aihub.qualcomm.com/models/mediapipe_face).

### Model Details

- **Model Type:** Object detection

- **Model Stats:**
  - Input resolution: 256x256
  - Number of parameters (MediaPipeFaceDetector): 135K
  - Model size (MediaPipeFaceDetector): 565 KB
  - Number of parameters (MediaPipeFaceLandmarkDetector): 603K
  - Model size (MediaPipeFaceLandmarkDetector): 2.34 MB
  - Number of output classes: 6

| Model | Device | Chipset | Target Runtime | Inference Time (ms) | Peak Memory Range (MB) | Precision | Primary Compute Unit | Target Model |

|---|---|---|---|---|---|---|---|---|

| MediaPipeFaceDetector | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 | TFLITE | 0.548 ms | 0 - 1 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 | QNN | 0.626 ms | 1 - 6 MB | FP16 | NPU | [MediaPipe-Face-Detection.so](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.so) |

| MediaPipeFaceDetector | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 | ONNX | 1.017 ms | 0 - 3 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.onnx) |

| MediaPipeFaceDetector | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 | TFLITE | 0.407 ms | 0 - 34 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 | QNN | 0.463 ms | 1 - 13 MB | FP16 | NPU | [MediaPipe-Face-Detection.so](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.so) |

| MediaPipeFaceDetector | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 | ONNX | 0.736 ms | 0 - 39 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.onnx) |

| MediaPipeFaceDetector | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite | TFLITE | 0.434 ms | 0 - 24 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite | QNN | 0.458 ms | 0 - 12 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite | ONNX | 0.751 ms | 0 - 28 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.onnx) |

| MediaPipeFaceDetector | QCS8550 (Proxy) | QCS8550 Proxy | TFLITE | 0.545 ms | 0 - 1 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | QCS8550 (Proxy) | QCS8550 Proxy | QNN | 0.603 ms | 1 - 2 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | SA8255 (Proxy) | SA8255P Proxy | TFLITE | 0.549 ms | 0 - 71 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | SA8255 (Proxy) | SA8255P Proxy | QNN | 0.604 ms | 1 - 2 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | SA8775 (Proxy) | SA8775P Proxy | TFLITE | 0.546 ms | 0 - 3 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | SA8775 (Proxy) | SA8775P Proxy | QNN | 0.605 ms | 1 - 3 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | SA8650 (Proxy) | SA8650P Proxy | TFLITE | 0.549 ms | 0 - 1 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | SA8650 (Proxy) | SA8650P Proxy | QNN | 0.609 ms | 1 - 2 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | SA8295P ADP | SA8295P | TFLITE | 1.163 ms | 0 - 21 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | SA8295P ADP | SA8295P | QNN | 1.261 ms | 1 - 6 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | QCS8450 (Proxy) | QCS8450 Proxy | TFLITE | 0.747 ms | 0 - 32 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.tflite) |

| MediaPipeFaceDetector | QCS8450 (Proxy) | QCS8450 Proxy | QNN | 0.838 ms | 1 - 15 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN | 0.755 ms | 1 - 1 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceDetector | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 1.032 ms | 2 - 2 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceDetector.onnx) |

| MediaPipeFaceLandmarkDetector | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 | TFLITE | 0.184 ms | 0 - 1 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 | QNN | 0.277 ms | 0 - 7 MB | FP16 | NPU | [MediaPipe-Face-Detection.so](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.so) |

| MediaPipeFaceLandmarkDetector | Samsung Galaxy S23 | Snapdragon® 8 Gen 2 | ONNX | 0.501 ms | 0 - 3 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.onnx) |

| MediaPipeFaceLandmarkDetector | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 | TFLITE | 0.148 ms | 0 - 28 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 | QNN | 0.206 ms | 0 - 12 MB | FP16 | NPU | [MediaPipe-Face-Detection.so](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.so) |

| MediaPipeFaceLandmarkDetector | Samsung Galaxy S24 | Snapdragon® 8 Gen 3 | ONNX | 0.385 ms | 0 - 31 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.onnx) |

| MediaPipeFaceLandmarkDetector | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite | TFLITE | 0.123 ms | 0 - 18 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite | QNN | 0.175 ms | 0 - 9 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | Snapdragon 8 Elite QRD | Snapdragon® 8 Elite | ONNX | 0.319 ms | 0 - 18 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.onnx) |

| MediaPipeFaceLandmarkDetector | QCS8550 (Proxy) | QCS8550 Proxy | TFLITE | 0.194 ms | 0 - 1 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | QCS8550 (Proxy) | QCS8550 Proxy | QNN | 0.274 ms | 0 - 2 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | SA8255 (Proxy) | SA8255P Proxy | TFLITE | 0.226 ms | 0 - 2 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | SA8255 (Proxy) | SA8255P Proxy | QNN | 0.282 ms | 0 - 1 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | SA8775 (Proxy) | SA8775P Proxy | TFLITE | 0.195 ms | 0 - 2 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | SA8775 (Proxy) | SA8775P Proxy | QNN | 0.276 ms | 0 - 2 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | SA8650 (Proxy) | SA8650P Proxy | TFLITE | 0.199 ms | 0 - 1 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | SA8650 (Proxy) | SA8650P Proxy | QNN | 0.273 ms | 0 - 2 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | SA8295P ADP | SA8295P | TFLITE | 0.589 ms | 0 - 17 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | SA8295P ADP | SA8295P | QNN | 0.782 ms | 0 - 6 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | QCS8450 (Proxy) | QCS8450 Proxy | TFLITE | 0.277 ms | 0 - 29 MB | FP16 | NPU | [MediaPipe-Face-Detection.tflite](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.tflite) |

| MediaPipeFaceLandmarkDetector | QCS8450 (Proxy) | QCS8450 Proxy | QNN | 0.379 ms | 0 - 12 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | Snapdragon X Elite CRD | Snapdragon® X Elite | QNN | 0.402 ms | 0 - 0 MB | FP16 | NPU | Use Export Script |

| MediaPipeFaceLandmarkDetector | Snapdragon X Elite CRD | Snapdragon® X Elite | ONNX | 0.516 ms | 2 - 2 MB | FP16 | NPU | [MediaPipe-Face-Detection.onnx](https://huggingface.co/qualcomm/MediaPipe-Face-Detection/blob/main/MediaPipeFaceLandmarkDetector.onnx) |
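
To put the latencies above in perspective, the following is a rough back-of-the-envelope sketch that converts per-model inference times into an upper bound on frame rate. It uses the Samsung Galaxy S23 TFLITE rows from the table. The assumption that a frame costs one detector pass plus one landmark pass per detected face is ours, and the estimate ignores pre-processing, post-processing, and data transfer, so real throughput will be lower.

```python
# Latencies (ms) copied from the table above: Samsung Galaxy S23, TFLITE runtime.
DETECTOR_MS = 0.548
LANDMARK_MS = 0.184


def pipeline_latency_ms(num_faces: int) -> float:
    """Model-only latency per frame.

    Assumption (not stated in the table): one detector pass per frame,
    plus one landmark pass for each detected face.
    """
    return DETECTOR_MS + num_faces * LANDMARK_MS


def max_fps(latency_ms: float) -> float:
    """Upper bound on frames per second for a given per-frame latency."""
    return 1000.0 / latency_ms


for faces in (1, 2, 4):
    latency = pipeline_latency_ms(faces)
    print(f"{faces} face(s): {latency:.3f} ms per frame, at most {max_fps(latency):.0f} FPS")
```

Swapping in the values from another row gives the same estimate for a different device or runtime.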

## Installation

This model can be installed as a Python package via pip.

```bash

pip install qai-hub-models

```

## Configure Qualcomm® AI Hub to run this model on a cloud-hosted device

Sign in to [Qualcomm® AI Hub](https://app.aihub.qualcomm.com/) with your
Qualcomm® ID. Once signed in, navigate to `Account -> Settings -> API Token`.

With this API token, you can configure your client to run models on the
cloud-hosted devices.

```bash

qai-hub configure --api_token API_TOKEN

```

Navigate to [docs](https://app.aihub.qualcomm.com/docs/) for more information.

## Demo off target

The package contains a simple end-to-end demo that downloads pre-trained

weights and runs this model on a sample input.

```bash

python -m qai_hub_models.models.mediapipe_face.demo

```

The above demo runs a reference implementation of pre-processing, model
inference, and post-processing.

**NOTE**: If you are running in a Jupyter Notebook or a Google Colab-like
environment, add the following to your cell (instead of the above).

```

%run -m qai_hub_models.models.mediapipe_face.demo

```
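
As an illustration of what the pre-processing stage has to accomplish, here is a minimal sketch that fits an arbitrary image into the 256x256 input resolution listed under Model Details. It is not the demo's actual code: the letterbox padding, nearest-neighbour resize, `[0, 1]` scaling, and NCHW layout are all assumptions made for this example, and the function names are hypothetical.

```python
import numpy as np


def letterbox_to_square(image_hwc: np.ndarray, size: int = 256):
    """Resize an HxWxC image to fit a size x size canvas, padding with zeros.

    Returns the canvas, the scale factor, and the (left, top) padding so that
    predicted coordinates can later be mapped back to the original image.
    """
    h, w, c = image_hwc.shape
    scale = size / max(h, w)
    new_h = min(size, max(1, round(h * scale)))
    new_w = min(size, max(1, round(w * scale)))

    # Nearest-neighbour resize using index lookup (keeps the sketch NumPy-only).
    rows = np.minimum((np.arange(new_h) / scale).astype(int), h - 1)
    cols = np.minimum((np.arange(new_w) / scale).astype(int), w - 1)
    resized = image_hwc[rows][:, cols]

    canvas = np.zeros((size, size, c), dtype=image_hwc.dtype)
    top = (size - new_h) // 2
    left = (size - new_w) // 2
    canvas[top:top + new_h, left:left + new_w] = resized
    return canvas, scale, (left, top)


def to_model_input(image_hwc: np.ndarray):
    """Assumed input format: float32, values in [0, 1], shape (1, C, 256, 256)."""
    canvas, scale, pad = letterbox_to_square(image_hwc)
    x = canvas.astype(np.float32) / 255.0
    x = np.transpose(x, (2, 0, 1))[None]
    return x, scale, pad


# Example with a synthetic 480x640 RGB frame standing in for a camera image.
frame = np.random.randint(0, 256, size=(480, 640, 3), dtype=np.uint8)
x, scale, pad = to_model_input(frame)
print(x.shape, scale, pad)
```

Returning the scale and padding alongside the tensor makes it straightforward to undo the transform on predicted boxes and keypoints.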

### Run model on a cloud-hosted device

In addition to the demo, you can also run the model on a cloud-hosted Qualcomm®

device. This script does the following:

* Runs a performance check on a cloud-hosted device.

* Downloads compiled assets that can be deployed on-device for Android.

* Runs an accuracy check between PyTorch and on-device outputs.

```bash

python -m qai_hub_models.models.mediapipe_face.export

```

```

Profiling Results

------------------------------------------------------------

MediaPipeFaceDetector

Device : Samsung Galaxy S23 (13)

Runtime : TFLITE

Estimated inference time (ms) : 0.5

Estimated peak memory usage (MB): [0, 1]

Total # Ops : 111

Compute Unit(s) : NPU (111 ops)

------------------------------------------------------------

MediaPipeFaceLandmarkDetector

Device : Samsung Galaxy S23 (13)

Runtime : TFLITE

Estimated inference time (ms) : 0.2

Estimated peak memory usage (MB): [0, 1]

Total # Ops : 100

Compute Unit(s) : NPU (100 ops)

```

## How does this work?

This [export script](https://aihub.qualcomm.com/models/mediapipe_face/qai_hub_models/models/MediaPipe-Face-Detection/export.py)

leverages [Qualcomm® AI Hub](https://aihub.qualcomm.com/) to optimize, validate, and deploy this model

on-device. Let's go through each step below in detail:

Step 1: **Compile model for on-device deployment**

To compile a PyTorch model for on-device deployment, we first trace the model
in memory using `torch.jit.trace` and then call the `submit_compile_job` API.

```python

import torch

import qai_hub as hub
from qai_hub_models.models.mediapipe_face import (
    MediaPipeFaceDetector,
    MediaPipeFaceLandmarkDetector,
)

# Load the models
face_detector_model = MediaPipeFaceDetector.from_pretrained()
face_landmark_detector_model = MediaPipeFaceLandmarkDetector.from_pretrained()

# Target device
device = hub.Device("Samsung Galaxy S23")

# Trace the face detector
face_detector_input_shape = face_detector_model.get_input_spec()
face_detector_sample_inputs = face_detector_model.sample_inputs()
traced_face_detector_model = torch.jit.trace(
    face_detector_model,
    [torch.tensor(data[0]) for _, data in face_detector_sample_inputs.items()],
)

# Compile the face detector for the target device
face_detector_compile_job = hub.submit_compile_job(
    model=traced_face_detector_model,
    device=device,
    input_specs=face_detector_model.get_input_spec(),
)

# Get the target model to run on-device
face_detector_target_model = face_detector_compile_job.get_target_model()

# Trace the face landmark detector
face_landmark_detector_input_shape = face_landmark_detector_model.get_input_spec()
face_landmark_detector_sample_inputs = face_landmark_detector_model.sample_inputs()
traced_face_landmark_detector_model = torch.jit.trace(
    face_landmark_detector_model,
    [torch.tensor(data[0]) for _, data in face_landmark_detector_sample_inputs.items()],
)

# Compile the face landmark detector for the target device
face_landmark_detector_compile_job = hub.submit_compile_job(
    model=traced_face_landmark_detector_model,
    device=device,
    input_specs=face_landmark_detector_model.get_input_spec(),
)

# Get the target model to run on-device
face_landmark_detector_target_model = face_landmark_detector_compile_job.get_target_model()

```

Step 2: **Performance profiling on cloud-hosted device**

After compiling the models in step 1, you can profile them on-device using each
`target_model`. Note that this script runs the model on a device automatically
provisioned in the cloud. Once the job is submitted, you can navigate to the
provided job URL to view a variety of on-device performance metrics.

```python

face_detector_profile_job = hub.submit_profile_job(
    model=face_detector_target_model,
    device=device,
)

face_landmark_detector_profile_job = hub.submit_profile_job(
    model=face_landmark_detector_target_model,
    device=device,
)

```

Step 3: **Verify on-device accuracy**

To verify the accuracy of the model on-device, you can run on-device inference
on sample input data on the same cloud-hosted device.

```python

face_detector_input_data = face_detector_model.sample_inputs()
face_detector_inference_job = hub.submit_inference_job(
    model=face_detector_target_model,
    device=device,
    inputs=face_detector_input_data,
)
face_detector_inference_job.download_output_data()

face_landmark_detector_input_data = face_landmark_detector_model.sample_inputs()
face_landmark_detector_inference_job = hub.submit_inference_job(
    model=face_landmark_detector_target_model,
    device=device,
    inputs=face_landmark_detector_input_data,
)
face_landmark_detector_inference_job.download_output_data()

```

With the output of the model, you can compute metrics such as PSNR or relative
error, or spot-check the output against the expected output.
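
The comparison mentioned above could be sketched as follows. This is a generic NumPy example, not the export script's own accuracy check: the function names are hypothetical, the PSNR peak defaults to the value range of the reference array (one common convention), and the arrays below are synthetic stand-ins for a PyTorch output and the matching on-device output.

```python
import numpy as np


def psnr(reference, candidate, data_range=None) -> float:
    """Peak signal-to-noise ratio in dB between two arrays of equal shape."""
    reference = np.asarray(reference, dtype=np.float64)
    candidate = np.asarray(candidate, dtype=np.float64)
    mse = np.mean((reference - candidate) ** 2)
    if mse == 0:
        return float("inf")  # identical outputs
    if data_range is None:
        # Assumption: use the reference's value range as the signal peak.
        data_range = reference.max() - reference.min()
    return float(10.0 * np.log10(data_range ** 2 / mse))


def relative_error(reference, candidate, eps: float = 1e-12) -> float:
    """L2 norm of the difference, relative to the L2 norm of the reference."""
    reference = np.asarray(reference, dtype=np.float64)
    candidate = np.asarray(candidate, dtype=np.float64)
    diff_norm = np.linalg.norm(reference - candidate)
    return float(diff_norm / (np.linalg.norm(reference) + eps))


# Synthetic stand-ins: a reference output and a slightly perturbed copy.
rng = np.random.default_rng(0)
torch_output = rng.standard_normal((1, 896, 16))
device_output = torch_output + 1e-3 * rng.standard_normal(torch_output.shape)

print(f"PSNR: {psnr(torch_output, device_output):.1f} dB")
print(f"Relative error: {relative_error(torch_output, device_output):.2e}")
```

A higher PSNR and a lower relative error both indicate closer agreement; what counts as acceptable depends on the precision of the compiled model and on the application.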

**Note**: This on-device profiling and inference requires access to Qualcomm®

AI Hub. [Sign up for access](https://myaccount.qualcomm.com/signup).

## Deploying compiled model to Android

The models can be deployed using multiple runtimes:

- TensorFlow Lite (`.tflite` export): [This

tutorial](https://www.tensorflow.org/lite/android/quickstart) provides a

guide to deploy the .tflite model in an Android application.

- QNN (`.so` export): This [sample

app](https://docs.qualcomm.com/bundle/publicresource/topics/80-63442-50/sample_app.html)

provides instructions on how to use the `.so` shared library in an Android application.

## View on Qualcomm® AI Hub

Get more details on MediaPipe-Face-Detection's performance across various devices [here](https://aihub.qualcomm.com/models/mediapipe_face).

Explore all available models on [Qualcomm® AI Hub](https://aihub.qualcomm.com/).

## License

* The license for the original implementation of MediaPipe-Face-Detection can be found [here](https://github.com/zmurez/MediaPipePyTorch/blob/master/LICENSE).

* The license for the compiled assets for on-device deployment can be found [here](https://qaihub-public-assets.s3.us-west-2.amazonaws.com/qai-hub-models/Qualcomm+AI+Hub+Proprietary+License.pdf)

## References

* [BlazeFace: Sub-millisecond Neural Face Detection on Mobile GPUs](https://arxiv.org/abs/1907.05047)

* [Source Model Implementation](https://github.com/zmurez/MediaPipePyTorch/)

## Community

* Join [our AI Hub Slack community](https://aihub.qualcomm.com/community/slack) to collaborate, post questions and learn more about on-device AI.

* For questions or feedback please [reach out to us](mailto:[email protected]).
