Upload folder using huggingface_hub

- .gitattributes +1 -0

- README.md +168 -0

- added_tokens.json +28 -0

- chat_template.jinja +131 -0

- config.json +119 -0

- generation_config.json +13 -0

- merges.txt +0 -0

- model-00001-of-00004.safetensors +3 -0

- model-00002-of-00004.safetensors +3 -0

- model-00003-of-00004.safetensors +3 -0

- model-00004-of-00004.safetensors +3 -0

- model.safetensors.index.json +0 -0

- qwen3coder_tool_parser.py +675 -0

- recipe.yaml +10 -0

- special_tokens_map.json +31 -0

- tokenizer.json +3 -0

- tokenizer_config.json +239 -0

- vocab.json +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text

README.md

ADDED

|

@@ -0,0 +1,168 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

library_name: transformers

|

| 3 |

+

license: apache-2.0

|

| 4 |

+

license_link: https://huggingface.co/Qwen/Qwen3-Coder-30B-A3B-Instruct/blob/main/LICENSE

|

| 5 |

+

pipeline_tag: text-generation

|

| 6 |

+

base_model:

|

| 7 |

+

- Qwen/Qwen3-Coder-30B-A3B-Instruct

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

# Qwen3-Coder-30B-A3B-Instruct

|

| 11 |

+

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

|

| 12 |

+

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 13 |

+

</a>

|

| 14 |

+

|

| 15 |

+

## Highlights

|

| 16 |

+

|

| 17 |

+

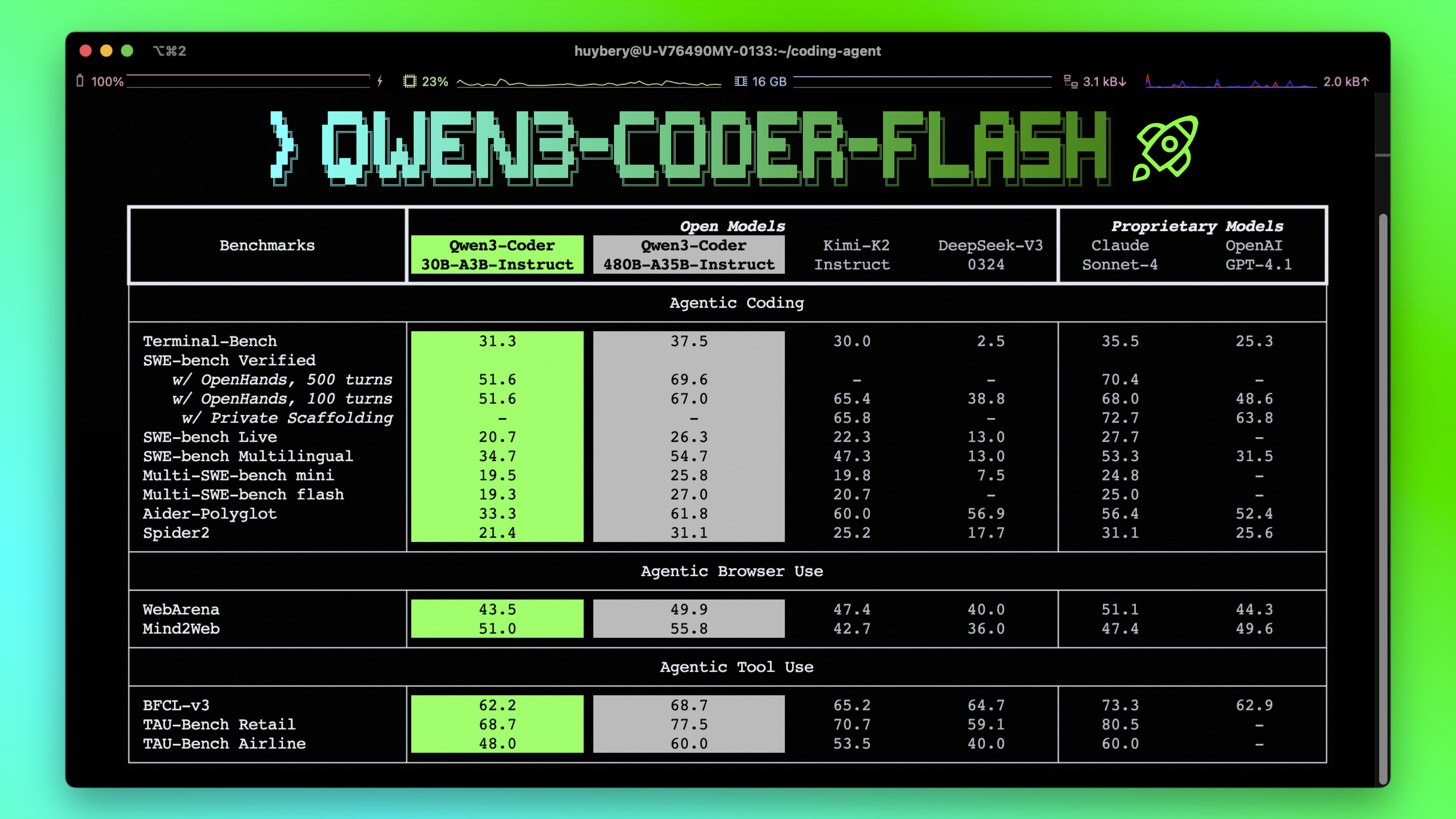

**Qwen3-Coder** is available in multiple sizes. Today, we're excited to introduce **Qwen3-Coder-30B-A3B-Instruct**. This streamlined model maintains impressive performance and efficiency, featuring the following key enhancements:

|

| 18 |

+

|

| 19 |

+

- **Significant Performance** among open models on **Agentic Coding**, **Agentic Browser-Use**, and other foundational coding tasks.

|

| 20 |

+

- **Long-context Capabilities** with native support for **256K** tokens, extendable up to **1M** tokens using Yarn, optimized for repository-scale understanding.

|

| 21 |

+

- **Agentic Coding** supporting for most platform such as **Qwen Code**, **CLINE**, featuring a specially designed function call format.

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

## Model Overview

|

| 26 |

+

|

| 27 |

+

**Qwen3-Coder-30B-A3B-Instruct** has the following features:

|

| 28 |

+

- Type: Causal Language Models

|

| 29 |

+

- Training Stage: Pretraining & Post-training

|

| 30 |

+

- Number of Parameters: 30.5B in total and 3.3B activated

|

| 31 |

+

- Number of Layers: 48

|

| 32 |

+

- Number of Attention Heads (GQA): 32 for Q and 4 for KV

|

| 33 |

+

- Number of Experts: 128

|

| 34 |

+

- Number of Activated Experts: 8

|

| 35 |

+

- Context Length: **262,144 natively**.

|

| 36 |

+

|

| 37 |

+

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. Meanwhile, specifying `enable_thinking=False` is no longer required.**

|

| 38 |

+

|

| 39 |

+

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwenlm.github.io/blog/qwen3-coder/), [GitHub](https://github.com/QwenLM/Qwen3-Coder), and [Documentation](https://qwen.readthedocs.io/en/latest/).

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

## Quickstart

|

| 43 |

+

|

| 44 |

+

We advise you to use the latest version of `transformers`.

|

| 45 |

+

|

| 46 |

+

With `transformers<4.51.0`, you will encounter the following error:

|

| 47 |

+

```

|

| 48 |

+

KeyError: 'qwen3_moe'

|

| 49 |

+

```

|

| 50 |

+

|

| 51 |

+

The following contains a code snippet illustrating how to use the model generate content based on given inputs.

|

| 52 |

+

```python

|

| 53 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 54 |

+

|

| 55 |

+

model_name = "Qwen/Qwen3-Coder-30B-A3B-Instruct"

|

| 56 |

+

|

| 57 |

+

# load the tokenizer and the model

|

| 58 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name)

|

| 59 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 60 |

+

model_name,

|

| 61 |

+

torch_dtype="auto",

|

| 62 |

+

device_map="auto"

|

| 63 |

+

)

|

| 64 |

+

|

| 65 |

+

# prepare the model input

|

| 66 |

+

prompt = "Write a quick sort algorithm."

|

| 67 |

+

messages = [

|

| 68 |

+

{"role": "user", "content": prompt}

|

| 69 |

+

]

|

| 70 |

+

text = tokenizer.apply_chat_template(

|

| 71 |

+

messages,

|

| 72 |

+

tokenize=False,

|

| 73 |

+

add_generation_prompt=True,

|

| 74 |

+

)

|

| 75 |

+

model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

|

| 76 |

+

|

| 77 |

+

# conduct text completion

|

| 78 |

+

generated_ids = model.generate(

|

| 79 |

+

**model_inputs,

|

| 80 |

+

max_new_tokens=65536

|

| 81 |

+

)

|

| 82 |

+

output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

|

| 83 |

+

|

| 84 |

+

content = tokenizer.decode(output_ids, skip_special_tokens=True)

|

| 85 |

+

|

| 86 |

+

print("content:", content)

|

| 87 |

+

```

|

| 88 |

+

|

| 89 |

+

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**
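
To see why a shorter context relieves memory pressure, a back-of-the-envelope estimate of the KV-cache size can help. The sketch below is only an approximation under stated assumptions: a bf16 cache (2 bytes per value), a single sequence, and a head dimension of 128 (taken from this repository's `config.json` rather than from the README). The function name `kv_cache_bytes` is illustrative, and real runtimes add their own overhead or may page or quantize the cache.

```python
def kv_cache_bytes(num_tokens: int,
                   num_layers: int = 48,
                   num_kv_heads: int = 4,
                   head_dim: int = 128,
                   bytes_per_value: int = 2) -> int:
    """Approximate KV-cache size for one sequence (keys + values, every layer)."""
    # Factor 2 accounts for storing both a key and a value vector per head.
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_value
    return num_tokens * per_token


GIB = 1024 ** 3
for context_len in (32_768, 262_144):
    size_gib = kv_cache_bytes(context_len) / GIB
    print(f"{context_len:>7} tokens -> {size_gib:.1f} GiB of KV cache")
```

Under these assumptions the cache grows linearly with context length, so cutting the context from 262,144 to 32,768 tokens shrinks it by a factor of eight, independently of the memory taken by the weights.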

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

## Agentic Coding

Qwen3-Coder excels in tool calling capabilities.

You can simply define or use any tools, as in the following example.
```python
# Your tool implementation
def square_the_number(input_num: float) -> float:
    return input_num ** 2

# Define Tools
tools=[
    {
        "type":"function",
        "function":{
            "name": "square_the_number",
            "description": "output the square of the number.",
            "parameters": {
                "type": "object",
                "required": ["input_num"],
                "properties": {
                    'input_num': {
                        'type': 'number',
                        'description': 'input_num is a number that will be squared'
                    }
                },
            }
        }
    }
]

from openai import OpenAI
# Define LLM
client = OpenAI(
    # Use a custom endpoint compatible with OpenAI API
    base_url='http://localhost:8000/v1',  # api_base
    api_key="EMPTY"
)

messages = [{'role': 'user', 'content': 'square the number 1024'}]

completion = client.chat.completions.create(
    messages=messages,
    model="Qwen3-Coder-30B-A3B-Instruct",
    max_tokens=65536,
    tools=tools,
)

print(completion.choices[0])
```
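
The example above stops at printing the model's reply. As a minimal sketch of the next step, the snippet below executes one tool call and builds the follow-up `tool` message. It assumes the OpenAI-compatible shape in which each call carries a function name and JSON-encoded arguments; `TOOL_REGISTRY`, `run_tool_call`, and the hand-written `example_call` dict are hypothetical stand-ins so that the sketch runs without a server.

```python
import json


def square_the_number(input_num: float) -> float:
    return input_num ** 2


# Hypothetical registry mapping tool names to local callables.
TOOL_REGISTRY = {"square_the_number": square_the_number}


def run_tool_call(tool_call: dict) -> dict:
    """Execute one tool call and build the follow-up `tool` message."""
    fn = tool_call["function"]
    args = json.loads(fn["arguments"])  # arguments arrive as a JSON string
    result = TOOL_REGISTRY[fn["name"]](**args)
    return {
        "role": "tool",
        "tool_call_id": tool_call["id"],
        "content": json.dumps(result),
    }


# Stand-in for one entry of `completion.choices[0].message.tool_calls`,
# written as a plain dict (an assumption made for illustration).
example_call = {
    "id": "call_0",
    "type": "function",
    "function": {"name": "square_the_number", "arguments": '{"input_num": 1024}'},
}

tool_message = run_tool_call(example_call)
print(tool_message)
```

With the real client the entries are objects rather than dicts, so attribute access would replace the key lookups. The resulting message would then be appended to `messages`, after the assistant turn that requested the call, before asking the model for its final answer.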

## Best Practices

To achieve optimal performance, we recommend the following settings:

1. **Sampling Parameters**:
   - We suggest using `temperature=0.7`, `top_p=0.8`, `top_k=20`, `repetition_penalty=1.05`.

2. **Adequate Output Length**: We recommend using an output length of 65,536 tokens for most queries, which is adequate for instruct models.
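
To make the effect of the sampling parameters concrete, here is a small, library-free sketch of how temperature, top-k, and top-p narrow a next-token distribution. It assumes one common ordering (temperature, then top-k, then top-p on the renormalized survivors) and omits the repetition penalty; `filter_distribution` and the toy logits are illustrative, not the code path any particular inference engine uses.

```python
import math


def filter_distribution(logits, temperature=0.7, top_k=20, top_p=0.8):
    """Return {token_index: probability} after temperature, top-k, then top-p."""
    # A temperature below 1 sharpens the distribution before the softmax.
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    weights = [math.exp(x - peak) for x in scaled]  # unnormalized softmax

    # top-k: keep only the k highest-scoring tokens.
    ranked = sorted(range(len(weights)), key=lambda i: weights[i], reverse=True)[:top_k]
    total = sum(weights[i] for i in ranked)

    # top-p: keep the smallest prefix whose cumulative probability reaches top_p.
    kept, cumulative = [], 0.0
    for i in ranked:
        kept.append(i)
        cumulative += weights[i] / total
        if cumulative >= top_p:
            break

    # Renormalize so the surviving candidates form a proper distribution.
    norm = sum(weights[i] for i in kept)
    return {i: weights[i] / norm for i in kept}


toy_logits = [2.0, 1.0, 0.5, -1.0]
candidates = filter_distribution(toy_logits, top_k=3)
print(candidates)
```

On these toy logits only the two most likely tokens survive, which illustrates why `top_p=0.8` tends to keep generations focused while still leaving room for some variation.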

### Citation

If you find our work helpful, feel free to cite us.

```
@misc{qwen3technicalreport,
      title={Qwen3 Technical Report},
      author={Qwen Team},
      year={2025},
      eprint={2505.09388},
      archivePrefix={arXiv},
      primaryClass={cs.CL},
      url={https://arxiv.org/abs/2505.09388},
}
```

added_tokens.json

ADDED

@@ -0,0 +1,28 @@
{
  "</think>": 151668,
  "</tool_call>": 151658,
  "</tool_response>": 151666,
  "<think>": 151667,
  "<tool_call>": 151657,
  "<tool_response>": 151665,
  "<|box_end|>": 151649,
  "<|box_start|>": 151648,
  "<|endoftext|>": 151643,
  "<|file_sep|>": 151664,
  "<|fim_middle|>": 151660,
  "<|fim_pad|>": 151662,
  "<|fim_prefix|>": 151659,
  "<|fim_suffix|>": 151661,
  "<|im_end|>": 151645,
  "<|im_start|>": 151644,
  "<|image_pad|>": 151655,
  "<|object_ref_end|>": 151647,
  "<|object_ref_start|>": 151646,
  "<|quad_end|>": 151651,
  "<|quad_start|>": 151650,
  "<|repo_name|>": 151663,
  "<|video_pad|>": 151656,
  "<|vision_end|>": 151653,
  "<|vision_pad|>": 151654,
  "<|vision_start|>": 151652
}

chat_template.jinja

ADDED

@@ -0,0 +1,131 @@
{% macro render_item_list(item_list, tag_name='required') %}
{%- if item_list is defined and item_list is iterable and item_list | length > 0 %}
{%- if tag_name %}{{- '\n<' ~ tag_name ~ '>' -}}{% endif %}
{{- '[' }}
{%- for item in item_list -%}
{%- if loop.index > 1 %}{{- ", "}}{% endif -%}
{%- if item is string -%}
{{ "`" ~ item ~ "`" }}
{%- else -%}
{{ item }}
{%- endif -%}
{%- endfor -%}
{{- ']' }}
{%- if tag_name %}{{- '</' ~ tag_name ~ '>' -}}{% endif %}
{%- endif %}
{% endmacro %}

{%- if messages[0]["role"] == "system" %}
{%- set system_message = messages[0]["content"] %}
{%- set loop_messages = messages[1:] %}
{%- else %}
{%- set loop_messages = messages %}
{%- endif %}

{%- if not tools is defined %}
{%- set tools = [] %}
{%- endif %}

{%- if system_message is defined %}
{{- "<|im_start|>system\n" + system_message }}
{%- else %}
{%- if tools is iterable and tools | length > 0 %}
{{- "<|im_start|>system\nYou are Qwen, a helpful AI assistant that can interact with a computer to solve tasks." }}
{%- endif %}
{%- endif %}
{%- if tools is iterable and tools | length > 0 %}
{{- "\n\nYou have access to the following functions:\n\n" }}
{{- "<tools>" }}
{%- for tool in tools %}
{%- if tool.function is defined %}
{%- set tool = tool.function %}
{%- endif %}
{{- "\n<function>\n<name>" ~ tool.name ~ "</name>" }}
{{- '\n<description>' ~ (tool.description | trim) ~ '</description>' }}
{{- '\n<parameters>' }}
{%- for param_name, param_fields in tool.parameters.properties|items %}
{{- '\n<parameter>' }}
{{- '\n<name>' ~ param_name ~ '</name>' }}
{%- if param_fields.type is defined %}
{{- '\n<type>' ~ (param_fields.type | string) ~ '</type>' }}
{%- endif %}
{%- if param_fields.description is defined %}
{{- '\n<description>' ~ (param_fields.description | trim) ~ '</description>' }}
{%- endif %}
{{- render_item_list(param_fields.enum, 'enum') }}
{%- set handled_keys = ['type', 'description', 'enum', 'required'] %}
{%- for json_key in param_fields.keys() | reject("in", handled_keys) %}
{%- set normed_json_key = json_key | replace("-", "_") | replace(" ", "_") | replace("$", "") %}
{%- if param_fields[json_key] is mapping %}
{{- '\n<' ~ normed_json_key ~ '>' ~ (param_fields[json_key] | tojson | safe) ~ '</' ~ normed_json_key ~ '>' }}
{%- else %}
{{-'\n<' ~ normed_json_key ~ '>' ~ (param_fields[json_key] | string) ~ '</' ~ normed_json_key ~ '>' }}
{%- endif %}
{%- endfor %}
{{- render_item_list(param_fields.required, 'required') }}
{{- '\n</parameter>' }}
{%- endfor %}
{{- render_item_list(tool.parameters.required, 'required') }}
{{- '\n</parameters>' }}
{%- if tool.return is defined %}
{%- if tool.return is mapping %}
{{- '\n<return>' ~ (tool.return | tojson | safe) ~ '</return>' }}
{%- else %}
{{- '\n<return>' ~ (tool.return | string) ~ '</return>' }}
{%- endif %}
{%- endif %}
{{- '\n</function>' }}
{%- endfor %}
{{- "\n</tools>" }}
{{- '\n\nIf you choose to call a function ONLY reply in the following format with NO suffix:\n\n<tool_call>\n<function=example_function_name>\n<parameter=example_parameter_1>\nvalue_1\n</parameter>\n<parameter=example_parameter_2>\nThis is the value for the second parameter\nthat can span\nmultiple lines\n</parameter>\n</function>\n</tool_call>\n\n<IMPORTANT>\nReminder:\n- Function calls MUST follow the specified format: an inner <function=...></function> block must be nested within <tool_call></tool_call> XML tags\n- Required parameters MUST be specified\n- You may provide optional reasoning for your function call in natural language BEFORE the function call, but NOT after\n- If there is no function call available, answer the question like normal with your current knowledge and do not tell the user about function calls\n</IMPORTANT>' }}
{%- endif %}
{%- if system_message is defined %}
{{- '<|im_end|>\n' }}
{%- else %}
{%- if tools is iterable and tools | length > 0 %}
{{- '<|im_end|>\n' }}
{%- endif %}
{%- endif %}
{%- for message in loop_messages %}
{%- if message.role == "assistant" and message.tool_calls is defined and message.tool_calls is iterable and message.tool_calls | length > 0 %}
{{- '<|im_start|>' + message.role }}
{%- if message.content is defined and message.content is string and message.content | trim | length > 0 %}
{{- '\n' + message.content | trim + '\n' }}
{%- endif %}
{%- for tool_call in message.tool_calls %}
{%- if tool_call.function is defined %}
{%- set tool_call = tool_call.function %}
{%- endif %}
{{- '\n<tool_call>\n<function=' + tool_call.name + '>\n' }}
{%- if tool_call.arguments is defined %}
{%- for args_name, args_value in tool_call.arguments|items %}
{{- '<parameter=' + args_name + '>\n' }}
{%- set args_value = args_value if args_value is string else args_value | string %}
{{- args_value }}
{{- '\n</parameter>\n' }}
{%- endfor %}
{%- endif %}
{{- '</function>\n</tool_call>' }}
{%- endfor %}
{{- '<|im_end|>\n' }}
{%- elif message.role == "user" or message.role == "system" or message.role == "assistant" %}
{{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>' + '\n' }}
{%- elif message.role == "tool" %}
{%- if loop.previtem and loop.previtem.role != "tool" %}
{{- '<|im_start|>user\n' }}
{%- endif %}
{{- '<tool_response>\n' }}
{{- message.content }}
{{- '\n</tool_response>\n' }}
{%- if not loop.last and loop.nextitem.role != "tool" %}
{{- '<|im_end|>\n' }}
{%- elif loop.last %}
{{- '<|im_end|>\n' }}
{%- endif %}
{%- else %}
{{- '<|im_start|>' + message.role + '\n' + message.content + '<|im_end|>\n' }}
{%- endif %}
{%- endfor %}
{%- if add_generation_prompt %}
{{- '<|im_start|>assistant\n' }}
{%- endif %}
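
The template above instructs the model to emit calls as `<tool_call>` blocks containing `<function=...>` and `<parameter=...>` tags. As a rough sketch of how such output could be read back, the snippet below extracts function names and parameters with regular expressions. It is not the `qwen3coder_tool_parser.py` shipped in this commit (whose contents are not shown here); `parse_tool_calls` and the sample text are hypothetical, and parameter values are left as raw strings without any type conversion.

```python
import re

TOOL_CALL_RE = re.compile(r"<tool_call>(.*?)</tool_call>", re.DOTALL)
FUNCTION_RE = re.compile(r"<function=([^>\n]+)>(.*?)</function>", re.DOTALL)
# The optional newlines strip the line breaks the template puts around each value.
PARAMETER_RE = re.compile(r"<parameter=([^>\n]+)>\n?(.*?)\n?</parameter>", re.DOTALL)


def parse_tool_calls(text: str) -> list[dict]:
    """Collect every function call found inside <tool_call> blocks."""
    calls = []
    for block in TOOL_CALL_RE.findall(text):
        for name, body in FUNCTION_RE.findall(block):
            arguments = {key: value for key, value in PARAMETER_RE.findall(body)}
            calls.append({"name": name, "arguments": arguments})
    return calls


sample = (
    "I will square the number.\n"
    "<tool_call>\n<function=square_the_number>\n"
    "<parameter=input_num>\n1024\n</parameter>\n"
    "</function>\n</tool_call>"
)
print(parse_tool_calls(sample))
```

A production parser would also need to handle streaming output, malformed tags, and conversion of values to the types declared in the tool schema, none of which this sketch attempts.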

config.json

ADDED

@@ -0,0 +1,119 @@
{
  "architectures": [
    "Qwen3MoeForCausalLM"
  ],
  "attention_bias": false,
  "attention_dropout": 0.0,
  "decoder_sparse_step": 1,
  "eos_token_id": 151645,
  "head_dim": 128,
  "hidden_act": "silu",
  "hidden_size": 2048,
  "initializer_range": 0.02,
  "intermediate_size": 5472,
  "max_position_embeddings": 262144,
  "max_window_layers": 28,
  "mlp_only_layers": [],
  "model_type": "qwen3_moe",
  "moe_intermediate_size": 768,
  "norm_topk_prob": true,
  "num_attention_heads": 32,
  "num_experts": 128,
  "num_experts_per_tok": 8,
  "num_hidden_layers": 48,
  "num_key_value_heads": 4,
  "output_router_logits": false,
  "qkv_bias": false,
  "quantization_config": {
    "config_groups": {
      "group_0": {
        "input_activations": null,
        "output_activations": null,
        "targets": [
          "Linear"
        ],
        "weights": {
          "actorder": null,
          "block_structure": null,
          "dynamic": false,
          "group_size": 128,
          "num_bits": 4,
          "observer": "minmax",
          "observer_kwargs": {},
          "strategy": "group",
          "symmetric": true,
          "type": "int"
        }
      }
    },
    "format": "pack-quantized",
    "global_compression_ratio": null,
    "ignore": [
      "model.layers.0.mlp.gate",
      "model.layers.1.mlp.gate",
      "model.layers.2.mlp.gate",
      "model.layers.3.mlp.gate",
      "model.layers.4.mlp.gate",
      "model.layers.5.mlp.gate",
      "model.layers.6.mlp.gate",
      "model.layers.7.mlp.gate",
      "model.layers.8.mlp.gate",
      "model.layers.9.mlp.gate",
      "model.layers.10.mlp.gate",
      "model.layers.11.mlp.gate",
      "model.layers.12.mlp.gate",
      "model.layers.13.mlp.gate",
      "model.layers.14.mlp.gate",
      "model.layers.15.mlp.gate",
      "model.layers.16.mlp.gate",
      "model.layers.17.mlp.gate",
      "model.layers.18.mlp.gate",
      "model.layers.19.mlp.gate",
      "model.layers.20.mlp.gate",
      "model.layers.21.mlp.gate",
      "model.layers.22.mlp.gate",
      "model.layers.23.mlp.gate",
      "model.layers.24.mlp.gate",
      "model.layers.25.mlp.gate",
      "model.layers.26.mlp.gate",
      "model.layers.27.mlp.gate",
      "model.layers.28.mlp.gate",
      "model.layers.29.mlp.gate",
      "model.layers.30.mlp.gate",
      "model.layers.31.mlp.gate",
      "model.layers.32.mlp.gate",
      "model.layers.33.mlp.gate",
      "model.layers.34.mlp.gate",
      "model.layers.35.mlp.gate",
      "model.layers.36.mlp.gate",
      "model.layers.37.mlp.gate",
      "model.layers.38.mlp.gate",
      "model.layers.39.mlp.gate",
      "model.layers.40.mlp.gate",
      "model.layers.41.mlp.gate",
      "model.layers.42.mlp.gate",
      "model.layers.43.mlp.gate",
      "model.layers.44.mlp.gate",
      "model.layers.45.mlp.gate",
      "model.layers.46.mlp.gate",
      "model.layers.47.mlp.gate",
      "lm_head"
    ],
    "kv_cache_scheme": null,
    "quant_method": "compressed-tensors",
    "quantization_status": "compressed"
  },
  "rms_norm_eps": 1e-06,
  "rope_scaling": null,
  "rope_theta": 10000000,
  "router_aux_loss_coef": 0.0,
  "shared_expert_intermediate_size": 0,
  "sliding_window": null,
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.55.0.dev0",
  "use_cache": true,
  "use_qk_norm": true,
  "use_sliding_window": false,
  "vocab_size": 151936
}
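
The `quantization_config` above describes 4-bit, symmetric, group-wise integer weights with a group size of 128. The sketch below illustrates that general idea in plain Python: each group of 128 weights shares one scale, and codes are clipped to the int4 range. It is an assumption-laden illustration, not the compressed-tensors implementation; in particular, deriving the scale from the largest absolute value is one plausible reading of the `minmax` observer, and the function names are hypothetical.

```python
import math


def quantize_group(weights, num_bits=4):
    """Symmetric quantization of one group: shared scale, zero point fixed at 0."""
    qmax = 2 ** (num_bits - 1) - 1   # 7 for int4
    qmin = -(2 ** (num_bits - 1))    # -8 for int4
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / qmax if max_abs > 0 else 1.0
    codes = [min(qmax, max(qmin, round(w / scale))) for w in weights]
    return codes, scale


def quantize_row(row, group_size=128, num_bits=4):
    """Split a weight row into consecutive groups and quantize each one."""
    return [quantize_group(row[i:i + group_size], num_bits)
            for i in range(0, len(row), group_size)]


def dequantize_row(groups):
    """Rebuild approximate weights from integer codes and per-group scales."""
    return [code * scale for codes, scale in groups for code in codes]


# Toy row: the second group has ten times the magnitude of the first.
row = [math.sin(i) * (1.0 if i < 128 else 10.0) for i in range(256)]
groups = quantize_row(row)
restored = dequantize_row(groups)
worst = max(abs(a - b) for a, b in zip(row, restored))
print(len(groups), "groups, worst absolute error:", worst)
```

Because every group gets its own scale, the small-magnitude first group is not forced onto the coarse grid needed by the second group, which is the main motivation for group-wise schemes over a single per-tensor scale.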

generation_config.json

ADDED

@@ -0,0 +1,13 @@
{
  "do_sample": true,
  "eos_token_id": [
    151645,
    151643
  ],
  "pad_token_id": 151643,
  "repetition_penalty": 1.05,
  "temperature": 0.7,
  "top_k": 20,
  "top_p": 0.8,
  "transformers_version": "4.55.0.dev0"
}
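
The numeric IDs in `generation_config.json` are easier to read once mapped back to token strings. The short sketch below does that using values copied from `added_tokens.json` and `generation_config.json` in this commit; the dictionaries are hand-transcribed excerpts for illustration rather than files loaded from disk.

```python
# Excerpt of added_tokens.json (token string -> id), copied from this commit.
added_tokens = {
    "<|endoftext|>": 151643,
    "<|im_start|>": 151644,
    "<|im_end|>": 151645,
}

# Relevant fields of generation_config.json, copied from this commit.
generation_config = {"eos_token_id": [151645, 151643], "pad_token_id": 151643}

# Invert the mapping so IDs can be looked up by number.
id_to_token = {token_id: token for token, token_id in added_tokens.items()}

eos_tokens = [id_to_token[i] for i in generation_config["eos_token_id"]]
pad_token = id_to_token[generation_config["pad_token_id"]]
print("stop on:", eos_tokens, "| pad with:", pad_token)
```

In other words, generation stops on either the end-of-turn marker or the end-of-text marker, and padding reuses the end-of-text token.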

merges.txt

ADDED

The diff for this file is too large to render.

model-00001-of-00004.safetensors

ADDED

@@ -0,0 +1,3 @@
version https://git-lfs.github.com/spec/v1
oid sha256:2e1a42fe73604e458f1a82f159d8cd1709e4f15811f6435814d7d3f30685bbe9
size 5001524144

model-00002-of-00004.safetensors

ADDED

@@ -0,0 +1,3 @@
version https://git-lfs.github.com/spec/v1
oid sha256:ab7c9b80ef26a38e6579b2555c5a04af644a37d987313c93c2f515114a5f99d0
size 5001803304

model-00003-of-00004.safetensors

ADDED

@@ -0,0 +1,3 @@
version https://git-lfs.github.com/spec/v1
oid sha256:a4cfbaf0e35bde6aa08fb2be8a0e686fd7b2b67109f030cc8be8f2ea0574628a
size 5002084152

model-00004-of-00004.safetensors

ADDED

@@ -0,0 +1,3 @@
version https://git-lfs.github.com/spec/v1
oid sha256:5f121b899487ba1db587a90cb6356853ee6af54ce7d8f9ee14374e5a3504ee8b
size 1687667728
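
Each safetensors entry above is a Git LFS pointer rather than the weights themselves: three `key value` lines giving the spec version, the SHA-256 object ID, and the size in bytes. As a small sketch, the snippet below parses one pointer and totals the four shard sizes listed in this commit; `parse_lfs_pointer` is an illustrative helper, not part of any Git LFS tooling.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the `key value` lines of a Git LFS pointer file."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


pointer = """version https://git-lfs.github.com/spec/v1
oid sha256:2e1a42fe73604e458f1a82f159d8cd1709e4f15811f6435814d7d3f30685bbe9
size 5001524144
"""

# Shard sizes in bytes, copied from the four pointers in this commit.
shard_sizes = [5001524144, 5001803304, 5002084152, 1687667728]

info = parse_lfs_pointer(pointer)
total = sum(shard_sizes)
print(info["algo"], info["size"], f"| all shards: {total / 1e9:.2f} GB")
```

The total of roughly 16.7 GB is in line with what one would expect from a 30.5B-parameter model stored mostly as 4-bit weights plus scales and a few unquantized tensors.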

model.safetensors.index.json

ADDED

The diff for this file is too large to render.

qwen3coder_tool_parser.py

ADDED

@@ -0,0 +1,675 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

# SPDX-License-Identifier: Apache-2.0

import json
import re
import uuid
from collections.abc import Sequence
from typing import Union, Optional, Any, List, Dict
from enum import Enum

from vllm.entrypoints.openai.protocol import (
    ChatCompletionRequest,
    ChatCompletionToolsParam,
    DeltaMessage,
    DeltaToolCall,
    DeltaFunctionCall,
    ExtractedToolCallInformation,
    FunctionCall,
    ToolCall,
)
from vllm.entrypoints.openai.tool_parsers.abstract_tool_parser import (
    ToolParser,
    ToolParserManager,
)
from vllm.logger import init_logger
from vllm.transformers_utils.tokenizer import AnyTokenizer

logger = init_logger(__name__)



@ToolParserManager.register_module("qwen3_xml")
class Qwen3XMLToolParser(ToolParser):
    def __init__(self, tokenizer: AnyTokenizer):
        super().__init__(tokenizer)

        self.current_tool_name_sent: bool = False
        self.prev_tool_call_arr: list[dict] = []
        self.current_tool_id: int = -1
        self.streamed_args_for_tool: list[str] = []

        # Sentinel tokens for streaming mode
        self.tool_call_start_token: str = "<tool_call>"
        self.tool_call_end_token: str = "</tool_call>"
        self.tool_call_prefix: str = "<function="
        self.function_end_token: str = "</function>"
        self.parameter_prefix: str = "<parameter="
        self.parameter_end_token: str = "</parameter>"
        self.is_tool_call_started: bool = False
        self.failed_count: int = 0

        # Enhanced streaming state - reset for each new message
        self._reset_streaming_state()

        # Regex patterns; each also matches an unterminated trailing tag
        # so that partially generated output can still be parsed.
        self.tool_call_complete_regex = re.compile(
            r"<tool_call>(.*?)</tool_call>", re.DOTALL
        )
        self.tool_call_regex = re.compile(
            r"<tool_call>(.*?)</tool_call>|<tool_call>(.*?)$", re.DOTALL
        )
        self.tool_call_function_regex = re.compile(
            r"<function=(.*?)</function>|<function=(.*)$", re.DOTALL
        )
        self.tool_call_parameter_regex = re.compile(
            r"<parameter=(.*?)</parameter>|<parameter=(.*?)$", re.DOTALL
        )

        if not self.model_tokenizer:
            raise ValueError(
                "The model tokenizer must be passed to the ToolParser "
                "constructor during construction."
            )

        self.tool_call_start_token_id = self.vocab.get(self.tool_call_start_token)
        self.tool_call_end_token_id = self.vocab.get(self.tool_call_end_token)

        if self.tool_call_start_token_id is None or self.tool_call_end_token_id is None:
            raise RuntimeError(
                "Qwen3 XML Tool parser could not locate tool call start/end "
                "tokens in the tokenizer!"
            )

        logger.info(f"vLLM successfully imported tool parser {self.__class__.__name__}!")


    def _generate_tool_call_id(self) -> str:
        """Generate a unique tool call ID."""
        return f"call_{uuid.uuid4().hex[:24]}"

    def _reset_streaming_state(self):
        """Reset all streaming state."""
        self.current_tool_index = 0
        self.is_tool_call_started = False
        self.header_sent = False
        self.current_tool_id = None
        self.current_function_name = None
        self.current_param_name = None
        self.current_param_value = ""
        self.param_count = 0
        self.in_param = False
        self.in_function = False
        self.accumulated_text = ""
        self.json_started = False
        self.json_closed = False


    def _parse_xml_function_call(
        self, function_call_str: str, tools: Optional[list[ChatCompletionToolsParam]]
    ) -> Optional[ToolCall]:
        def get_arguments_config(func_name: str) -> dict:
            if tools is None:
                return {}
            for config in tools:
                if not hasattr(config, "type") or not (
                    hasattr(config, "function") and hasattr(config.function, "name")
                ):
                    continue
                if config.type == "function" and config.function.name == func_name:
                    if not hasattr(config.function, "parameters"):
                        return {}
                    params = config.function.parameters
                    if isinstance(params, dict) and "properties" in params:
                        return params["properties"]
                    elif isinstance(params, dict):
                        return params
                    else:
                        return {}
            logger.warning(f"Tool '{func_name}' is not defined in the tools list.")
            return {}


        def convert_param_value(
            param_value: str, param_name: str, param_config: dict, func_name: str
        ) -> Any:
            # Handle null value for any type
            if param_value.lower() == "null":
                return None

            if param_name not in param_config:
                if param_config != {}:
                    logger.warning(
                        f"Parsed parameter '{param_name}' is not defined in the tool "
                        f"parameters for tool '{func_name}', directly returning the string value."
                    )
                return param_value

            if (
                isinstance(param_config[param_name], dict)
                and "type" in param_config[param_name]
            ):
                param_type = str(param_config[param_name]["type"]).strip().lower()
            else:
                param_type = "string"
            if param_type in ["string", "str", "text", "varchar", "char", "enum"]:
                return param_value
            elif (
                param_type.startswith("int")
                or param_type.startswith("uint")
                or param_type.startswith("long")
                or param_type.startswith("short")
                or param_type.startswith("unsigned")
            ):
                try:
                    param_value = int(param_value)
                except Exception:
                    logger.warning(
                        f"Parsed value '{param_value}' of parameter '{param_name}' is not an integer in tool "
                        f"'{func_name}', degenerating to string."
                    )
                return param_value
            elif param_type.startswith("num") or param_type.startswith("float"):
                try:
                    float_param_value = float(param_value)
                    # Return an int when the value has no fractional part.
                    param_value = (
                        float_param_value
                        if float_param_value - int(float_param_value) != 0
                        else int(float_param_value)
                    )
                except Exception:
                    logger.warning(
                        f"Parsed value '{param_value}' of parameter '{param_name}' is not a float in tool "
                        f"'{func_name}', degenerating to string."
                    )
                return param_value
            elif param_type in ["boolean", "bool", "binary"]:
                param_value = param_value.lower()
                if param_value not in ["true", "false"]:
                    logger.warning(
                        f"Parsed value '{param_value}' of parameter '{param_name}' is not a boolean "
                        f"(`true` or `false`) in tool '{func_name}', degenerating to false."
                    )
                return param_value == "true"
            else:
                if param_type == "object" or param_type.startswith("dict"):
                    try:
                        param_value = json.loads(param_value)
                        return param_value
                    except Exception:
                        logger.warning(
                            f"Parsed value '{param_value}' of parameter '{param_name}' is not a valid JSON object in tool "
                            f"'{func_name}', will try other methods to parse it."
                        )
                # NOTE: eval() executes arbitrary expressions taken from model
                # output; a literal-only parser such as ast.literal_eval would
                # be a safer choice here.
                try:
                    param_value = eval(param_value)
                except Exception:
                    logger.warning(
                        f"Parsed value '{param_value}' of parameter '{param_name}' cannot be converted "
                        f"via Python `eval()` in tool '{func_name}', degenerating to string."
                    )
                return param_value


        # Extract function name
        end_index = function_call_str.index(">")
        function_name = function_call_str[:end_index]
        param_config = get_arguments_config(function_name)
        parameters = function_call_str[end_index + 1 :]
        param_dict = {}
        for match in self.tool_call_parameter_regex.findall(parameters):

|

| 209 |

+