Update README.md

README.md

CHANGED

|

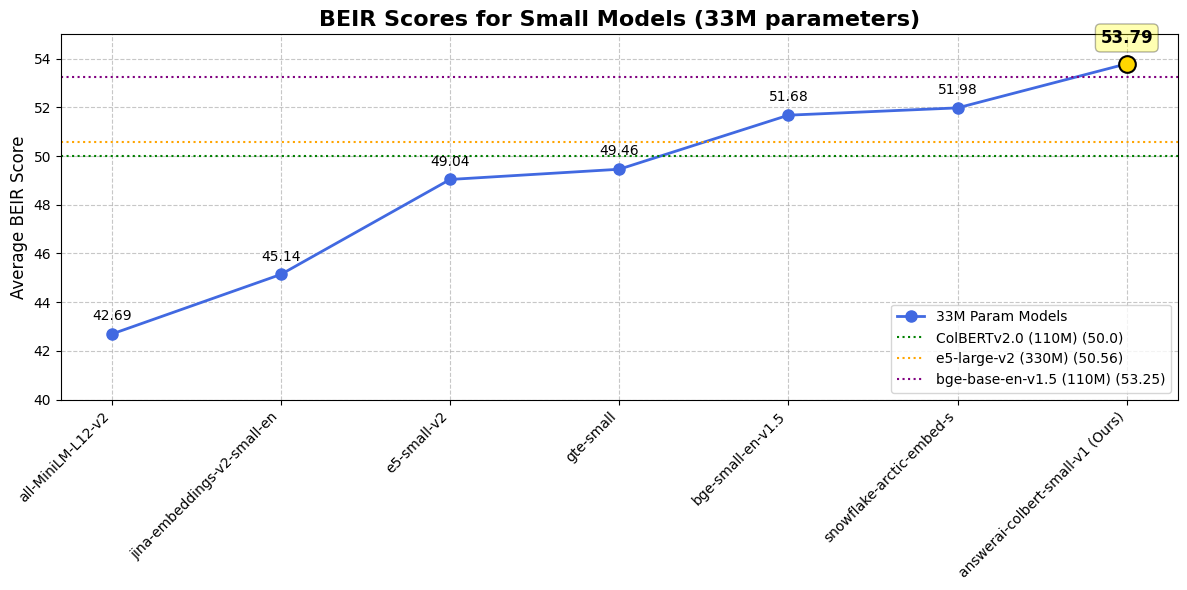

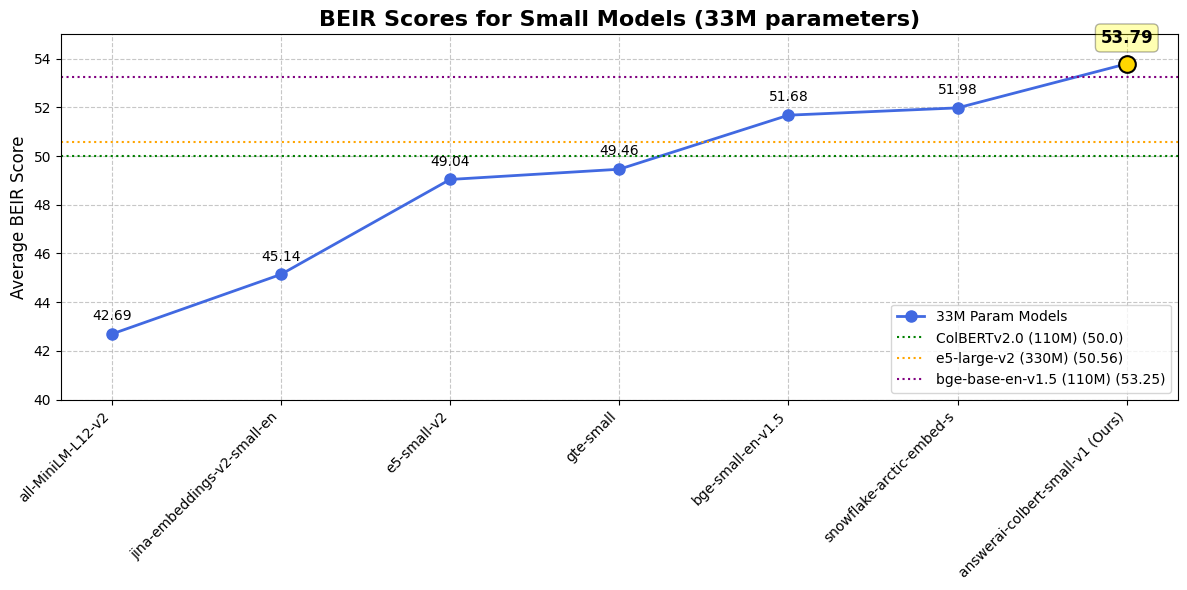

@@ -16,52 +16,6 @@ While being MiniLM-sized, it outperforms all previous similarly-sized models on

 For more information about this model or how it was trained, head over to the [announcement blogpost](https://www.answer.ai/posts/2024-08-13-small-but-mighty-colbert.html).

-## Results
-
-### Against single-vector models
-
-
-
-
-| Dataset / Model | answer-colbert-s | snowflake-s | bge-small-en | bge-base-en |
-|:-----------------|:-----------------:|:-------------:|:-------------:|:-------------:|
-| **Size** | 33M (1x) | 33M (1x) | 33M (1x) | **109M (3.3x)** |
-| **BEIR AVG** | **53.79** | 51.99 | 51.68 | 53.25 |
-| **FiQA2018** | **41.15** | 40.65 | 40.34 | 40.65 |
-| **HotpotQA** | **76.11** | 66.54 | 69.94 | 72.6 |
-| **MSMARCO** | **43.5** | 40.23 | 40.83 | 41.35 |
-| **NQ** | **59.1** | 50.9 | 50.18 | 54.15 |
-| **TRECCOVID** | **84.59** | 80.12 | 75.9 | 78.07 |
-| **ArguAna** | 50.09 | 57.59 | 59.55 | **63.61** |
-| **ClimateFEVER** | 33.07 | **35.2** | 31.84 | 31.17 |
-| **CQADupstackRetrieval** | 38.75 | 39.65 | 39.05 | **42.35** |
-| **DBPedia** | **45.58** | 41.02 | 40.03 | 40.77 |
-| **FEVER** | **90.96** | 87.13 | 86.64 | 86.29 |
-| **NFCorpus** | 37.3 | 34.92 | 34.3 | **37.39** |
-| **QuoraRetrieval** | 87.72 | 88.41 | 88.78 | **88.9** |
-| **SCIDOCS** | 18.42 | **21.82** | 20.52 | 21.73 |
-| **SciFact** | **74.77** | 72.22 | 71.28 | 74.04 |
-| **Touche2020** | 25.69 | 23.48 | **26.04** | 25.7 |
-
-### Against ColBERTv2.0
-
-| Dataset / Model | answerai-colbert-small-v1 | ColBERTv2.0 |
-|:-----------------|:-----------------------:|:------------:|
-| **BEIR AVG** | **53.79** | 50.02 |
-| **DBPedia** | **45.58** | 44.6 |
-| **FiQA2018** | **41.15** | 35.6 |
-| **NQ** | **59.1** | 56.2 |
-| **HotpotQA** | **76.11** | 66.7 |
-| **NFCorpus** | **37.3** | 33.8 |
-| **TRECCOVID** | **84.59** | 73.3 |
-| **Touche2020** | 25.69 | **26.3** |
-| **ArguAna** | **50.09** | 46.3 |
-| **ClimateFEVER** | **33.07** | 17.6 |
-| **FEVER** | **90.96** | 78.5 |
-| **QuoraRetrieval** | **87.72** | 85.2 |
-| **SCIDOCS** | **18.42** | 15.4 |
-| **SciFact** | **74.77** | 69.3 |
-
 ## Usage

 ### Installation
@@ -93,6 +47,19 @@ ranker.rank(query=query, docs=docs)

 ### RAGatouille

 ### Stanford ColBERT

 #### Indexing
@@ -149,4 +116,51 @@ from colbert.modeling.checkpoint import Checkpoint

 ckpt = Checkpoint("answerdotai/answerai-colbert-small-v1", colbert_config=ColBERTConfig())
 embedded_query = ckpt.queryFromText(["Who dubs Howl's in English?"], bsize=16)
-```

@@ -16,52 +16,6 @@ While being MiniLM-sized, it outperforms all previous similarly-sized models on

 For more information about this model or how it was trained, head over to the [announcement blogpost](https://www.answer.ai/posts/2024-08-13-small-but-mighty-colbert.html).

 ## Usage

 ### Installation
@@ -93,6 +47,19 @@ ranker.rank(query=query, docs=docs)

 ### RAGatouille

+```python
+from ragatouille import RAGPretrainedModel
+
+RAG = RAGPretrainedModel.from_pretrained("answerdotai/answerai-colbert-small-v1")
+
+docs = ['Hayao Miyazaki is a Japanese director, born on [...]', 'Walt Disney is an American author, director and [...]', ...]
+
+RAG.index(docs, index_name="ghibli")
+
+query = 'Who directed spirited away?'
+results = RAG.search(query)
+```
+
 ### Stanford ColBERT

 #### Indexing
@@ -149,4 +116,51 @@ from colbert.modeling.checkpoint import Checkpoint

 ckpt = Checkpoint("answerdotai/answerai-colbert-small-v1", colbert_config=ColBERTConfig())
 embedded_query = ckpt.queryFromText(["Who dubs Howl's in English?"], bsize=16)
+```
+
+
+## Results
+
+### Against single-vector models
+
+
+
+
+| Dataset / Model | answer-colbert-s | snowflake-s | bge-small-en | bge-base-en |
+|:-----------------|:-----------------:|:-------------:|:-------------:|:-------------:|
+| **Size** | 33M (1x) | 33M (1x) | 33M (1x) | **109M (3.3x)** |
+| **BEIR AVG** | **53.79** | 51.99 | 51.68 | 53.25 |
+| **FiQA2018** | **41.15** | 40.65 | 40.34 | 40.65 |
+| **HotpotQA** | **76.11** | 66.54 | 69.94 | 72.6 |
+| **MSMARCO** | **43.5** | 40.23 | 40.83 | 41.35 |
+| **NQ** | **59.1** | 50.9 | 50.18 | 54.15 |
+| **TRECCOVID** | **84.59** | 80.12 | 75.9 | 78.07 |
+| **ArguAna** | 50.09 | 57.59 | 59.55 | **63.61** |
+| **ClimateFEVER** | 33.07 | **35.2** | 31.84 | 31.17 |
+| **CQADupstackRetrieval** | 38.75 | 39.65 | 39.05 | **42.35** |
+| **DBPedia** | **45.58** | 41.02 | 40.03 | 40.77 |
+| **FEVER** | **90.96** | 87.13 | 86.64 | 86.29 |
+| **NFCorpus** | 37.3 | 34.92 | 34.3 | **37.39** |
+| **QuoraRetrieval** | 87.72 | 88.41 | 88.78 | **88.9** |
+| **SCIDOCS** | 18.42 | **21.82** | 20.52 | 21.73 |
+| **SciFact** | **74.77** | 72.22 | 71.28 | 74.04 |
+| **Touche2020** | 25.69 | 23.48 | **26.04** | 25.7 |
+
+### Against ColBERTv2.0
+
+| Dataset / Model | answerai-colbert-small-v1 | ColBERTv2.0 |
+|:-----------------|:-----------------------:|:------------:|
+| **BEIR AVG** | **53.79** | 50.02 |
+| **DBPedia** | **45.58** | 44.6 |
+| **FiQA2018** | **41.15** | 35.6 |
+| **NQ** | **59.1** | 56.2 |
+| **HotpotQA** | **76.11** | 66.7 |
+| **NFCorpus** | **37.3** | 33.8 |
+| **TRECCOVID** | **84.59** | 73.3 |
+| **Touche2020** | 25.69 | **26.3** |
+| **ArguAna** | **50.09** | 46.3 |
+| **ClimateFEVER** | **33.07** | 17.6 |
+| **FEVER** | **90.96** | 78.5 |
+| **QuoraRetrieval** | **87.72** | 85.2 |
+| **SCIDOCS** | **18.42** | 15.4 |
+| **SciFact** | **74.77** | 69.3 |
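
A quick sanity check on the first Results table: the **BEIR AVG** reported for the ColBERT column appears to be the unweighted mean of the 15 per-dataset scores listed under it. The sketch below copies those scores from the table. The dictionary name and the two-decimal rounding are assumptions made for illustration and are not part of the README.

```python
from statistics import fmean

# Per-dataset scores for the answer-colbert-s column, copied from the
# "Against single-vector models" table. The variable name is illustrative.
colbert_small_scores = {
    "FiQA2018": 41.15,
    "HotpotQA": 76.11,
    "MSMARCO": 43.5,
    "NQ": 59.1,
    "TRECCOVID": 84.59,
    "ArguAna": 50.09,
    "ClimateFEVER": 33.07,
    "CQADupstackRetrieval": 38.75,
    "DBPedia": 45.58,
    "FEVER": 90.96,
    "NFCorpus": 37.3,
    "QuoraRetrieval": 87.72,
    "SCIDOCS": 18.42,
    "SciFact": 74.77,
    "Touche2020": 25.69,
}

# Unweighted mean over all 15 datasets, rounded to two decimals
# (the rounding convention is an assumption).
beir_avg = round(fmean(colbert_small_scores.values()), 2)
print(beir_avg)
```

Under these assumptions the result matches the 53.79 in the table. The ColBERTv2.0 comparison lists only 13 datasets, and averaging just those rows does not reproduce either reported average, so the averages there presumably cover the full dataset list as well.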