End of training

- README.md +2 -1
- all_results.json +12 -0
- eval_results.json +7 -0
- train_results.json +8 -0
- trainer_state.json +0 -0
- training_eval_loss.png +0 -0
- training_loss.png +0 -0

README.md

CHANGED

@@ -4,6 +4,7 @@ license: apache-2.0
 base_model: Qwen/Qwen2.5-Coder-1.5B-Instruct
 tags:
 - llama-factory
+- full
 - generated_from_trainer
 model-index:
 - name: qwen2.5_1.5b_500k_O3_16kcw_2ep_risc
@@ -15,7 +16,7 @@ should probably proofread and complete it, then remove this comment. -->
 
 # qwen2.5_1.5b_500k_O3_16kcw_2ep_risc
 
-This model is a fine-tuned version of [Qwen/Qwen2.5-Coder-1.5B-Instruct](https://huggingface.co/Qwen/Qwen2.5-Coder-1.5B-Instruct) on
+This model is a fine-tuned version of [Qwen/Qwen2.5-Coder-1.5B-Instruct](https://huggingface.co/Qwen/Qwen2.5-Coder-1.5B-Instruct) on the anghabench_o3 dataset.
 It achieves the following results on the evaluation set:
 - Loss: 0.0186
 

all_results.json

ADDED

@@ -0,0 +1,12 @@
+{
+    "epoch": 2.0,
+    "eval_loss": 0.01856272667646408,
+    "eval_runtime": 4.0104,
+    "eval_samples_per_second": 49.87,
+    "eval_steps_per_second": 24.935,
+    "total_flos": 1.1570533976082743e+19,
+    "train_loss": 0.03249076200670694,
+    "train_runtime": 108704.5915,
+    "train_samples_per_second": 9.095,
+    "train_steps_per_second": 2.274
+}
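The throughput figures in all_results.json can be turned into rough run-level quantities with simple arithmetic. The sketch below is illustrative only: the function name `derive_run_summary` and its output keys are hypothetical, the input values are copied from the file in this commit, and the derived numbers are approximate because the reported rates are rounded. Reading samples per step as the effective global batch size is an assumption about how the trainer counts steps, not something the commit states.

```python
import json

# Values copied verbatim from all_results.json in this commit.
ALL_RESULTS = json.loads("""
{
    "epoch": 2.0,
    "eval_loss": 0.01856272667646408,
    "eval_runtime": 4.0104,
    "eval_samples_per_second": 49.87,
    "eval_steps_per_second": 24.935,
    "total_flos": 1.1570533976082743e+19,
    "train_loss": 0.03249076200670694,
    "train_runtime": 108704.5915,
    "train_samples_per_second": 9.095,
    "train_steps_per_second": 2.274
}
""")


def derive_run_summary(results):
    """Derive approximate run-level quantities (hypothetical helper).

    Every value is rate * runtime or a ratio of rates, so it inherits
    the rounding of the reported per-second figures.
    """
    runtime_s = results["train_runtime"]
    samples_seen = results["train_samples_per_second"] * runtime_s
    return {
        # Wall-clock training time in hours.
        "train_hours": runtime_s / 3600,
        # Training samples processed across all epochs.
        "samples_seen": samples_seen,
        # Samples per epoch, using the reported epoch count.
        "samples_per_epoch": samples_seen / results["epoch"],
        # Number of training steps over the whole run.
        "train_steps": results["train_steps_per_second"] * runtime_s,
        # Samples consumed per step (assumed: effective global batch size).
        "samples_per_step": results["train_samples_per_second"]
        / results["train_steps_per_second"],
        # Size of the evaluation set implied by the eval throughput.
        "eval_samples": results["eval_samples_per_second"]
        * results["eval_runtime"],
    }


if __name__ == "__main__":
    for key, value in derive_run_summary(ALL_RESULTS).items():
        print(f"{key}: {value:,.2f}")
```

Under these assumptions the run took roughly 30 hours, consumed about 4 samples per step, and evaluated on about 200 samples. The implied samples per epoch is a little under 500,000, in line with the `500k` in the model name.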

eval_results.json

ADDED

@@ -0,0 +1,7 @@
+{
+    "epoch": 2.0,
+    "eval_loss": 0.01856272667646408,
+    "eval_runtime": 4.0104,
+    "eval_samples_per_second": 49.87,
+    "eval_steps_per_second": 24.935
+}

train_results.json

ADDED

@@ -0,0 +1,8 @@
+{
+    "epoch": 2.0,
+    "total_flos": 1.1570533976082743e+19,
+    "train_loss": 0.03249076200670694,
+    "train_runtime": 108704.5915,
+    "train_samples_per_second": 9.095,
+    "train_steps_per_second": 2.274
+}

trainer_state.json

ADDED

The diff for this file is too large to render.

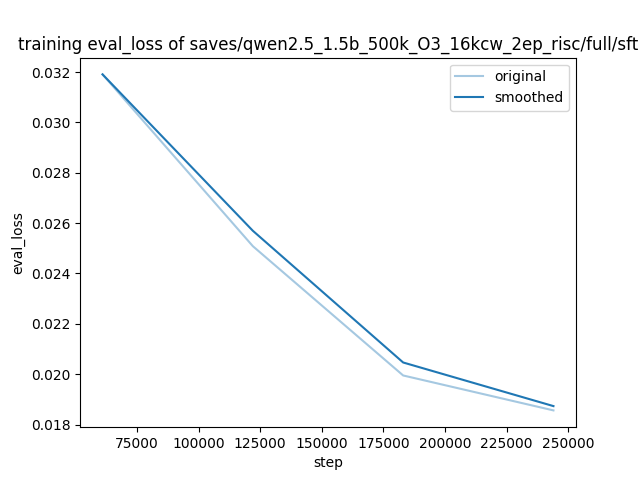

training_eval_loss.png

ADDED


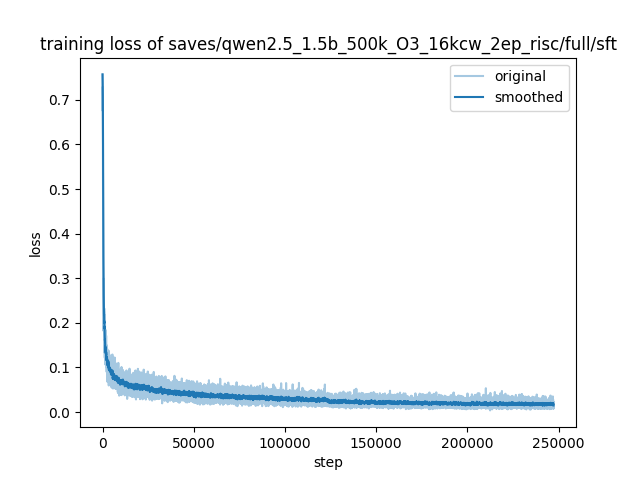

training_loss.png

ADDED
