
---
license: mit
datasets:
- TIGER-Lab/SKGInstruct
language:
- en
---

# 🏗️ StructLM: Towards Building Generalist Models for Structured Knowledge Grounding

Project Page: [https://tiger-ai-lab.github.io/StructLM/](https://tiger-ai-lab.github.io/StructLM/)

Paper: [https://arxiv.org/pdf/2402.16671.pdf](https://arxiv.org/pdf/2402.16671.pdf)

Code: [https://github.com/TIGER-AI-Lab/StructLM](https://github.com/TIGER-AI-Lab/StructLM)

## Introduction

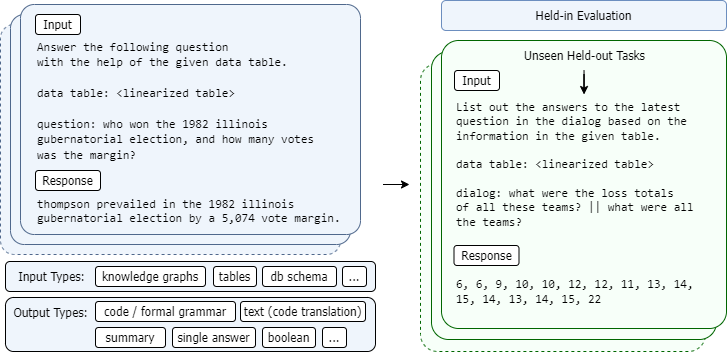

StructLM is a series of open-source large language models (LLMs) fine-tuned for structured knowledge grounding (SKG) tasks.

This model is trained using Mistral as the base model, instead of CodeLlama.

## Training Data

This model is trained on 🤗 [SKGInstruct-skg-only Dataset](https://huggingface.co/datasets/TIGER-Lab/SKGInstruct-skg-only). Check out the dataset card for more details.

## Training Procedure

This model is fine-tuned from a Mistral-v0.2 base model. Each model is trained for 3 epochs, and the best checkpoint is selected.

## Evaluation

See our project page for evaluation results on the 7B-M model.

## Usage

You can use the models through Hugging Face's Transformers library.

Check our GitHub repo for the evaluation code: [https://github.com/TIGER-AI-Lab/StructLM](https://github.com/TIGER-AI-Lab/StructLM)
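A minimal sketch of loading a checkpoint and generating a response with Transformers. The repository ID `TIGER-Lab/StructLM-7B-Mistral`, the helper name `generate_response`, and the generation settings are assumptions made for illustration and are not confirmed by this card; substitute the ID of the checkpoint you are actually using.

```python
# Sketch only: MODEL_ID and the generation settings are assumptions,
# not values confirmed by this model card.
MODEL_ID = "TIGER-Lab/StructLM-7B-Mistral"  # assumed repository ID


def generate_response(prompt: str, model_id: str = MODEL_ID, max_new_tokens: int = 64) -> str:
    """Load the model and return only the text generated after the prompt."""
    # Imported lazily so this module can be imported without the heavy dependencies.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)

    inputs = tokenizer(prompt, return_tensors="pt")
    # Greedy decoding (do_sample=False) keeps the output deterministic.
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)

    # Skip the prompt tokens and decode only the newly generated ones.
    prompt_length = inputs["input_ids"].shape[1]
    return tokenizer.decode(output_ids[0][prompt_length:], skip_special_tokens=True)
```

Calling `generate_response(prompt)` downloads the weights on first use. The prompt is expected to follow the format described under Prompt Format.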

## Prompt Format

**For this 7B model, the prompt format is**

```

Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

### Instruction:

{instruction}

{input}

{question}

### Response:

```
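The template above can be filled programmatically. The following is a minimal sketch; the helper name `build_prompt` is hypothetical, and the exact blank-line placement is an assumption read off the template as displayed here, so it is worth checking against the dataset before relying on it.

```python
PREAMBLE = (
    "Below is an instruction that describes a task, paired with an input that "
    "provides further context. Write a response that appropriately completes "
    "the request."
)


def build_prompt(instruction: str, input_text: str, question: str) -> str:
    """Fill the prompt template; blank-line placement is an assumption."""
    return (
        f"{PREAMBLE}\n\n"
        "### Instruction:\n\n"
        f"{instruction}\n\n"
        f"{input_text}\n\n"
        f"{question}\n\n"
        "### Response:\n"
    )
```

Here `input_text` is the linearized structured data (for example a table), and `question` is the natural-language query about it.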

To see concrete examples of this linearization, you can directly reference the 🤗 [SKGInstruct Dataset](https://huggingface.co/datasets/TIGER-Lab/SKGInstruct) (coming soon).

We will provide code for linearizing this data shortly.

A few examples:

**Tabular data**

```

col : day | kilometers row 1 : tuesday | 0 row 2 : wednesday | 0 row 3 : thursday | 4 row 4 : friday | 0 row 5 : saturday | 0

```
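A table linearization of this shape could be produced as follows. This is a sketch inferred from the example above, not the project's own linearization code; the function name `linearize_table` is hypothetical.

```python
from typing import Sequence


def linearize_table(header: Sequence[str], rows: Sequence[Sequence[object]]) -> str:
    """Flatten a table into 'col : ... row 1 : ... row 2 : ...' form."""
    # Header cells are joined with ' | ' and prefixed with 'col : '.
    parts = ["col : " + " | ".join(str(h) for h in header)]
    # Rows are numbered from 1 and use the same ' | ' cell separator.
    for index, row in enumerate(rows, start=1):
        parts.append(f"row {index} : " + " | ".join(str(cell) for cell in row))
    return " ".join(parts)


table = linearize_table(
    ["day", "kilometers"],
    [["tuesday", 0], ["wednesday", 0], ["thursday", 4], ["friday", 0], ["saturday", 0]],
)
print(table)
```

With the inputs shown, the printed string matches the tabular example above.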

**Knowledge triples (dart)**

```

Hawaii Five-O : notes : Episode: The Flight of the Jewels | [TABLECONTEXT] : [title] : Jeff Daniels | [TABLECONTEXT] : title : Hawaii Five-O

```
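Triples in this form can be joined with a short helper. This is a sketch inferred from the example above (fields separated by ` : `, triples by ` | `); the name `linearize_triples` is hypothetical and this is not the project's own code.

```python
from typing import Iterable, Tuple


def linearize_triples(triples: Iterable[Tuple[str, str, str]]) -> str:
    """Join (subject, predicate, object) triples into a single line."""
    # ' : ' separates the fields of one triple, ' | ' separates triples.
    return " | ".join(" : ".join(triple) for triple in triples)


triples_text = linearize_triples(
    [
        ("Hawaii Five-O", "notes", "Episode: The Flight of the Jewels"),
        ("[TABLECONTEXT]", "[title]", "Jeff Daniels"),
        ("[TABLECONTEXT]", "title", "Hawaii Five-O"),
    ]
)
print(triples_text)
```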

**Knowledge graph schema (grailqa)**

```

top antiquark: m.094nrqp | physics.particle_antiparticle.self_antiparticle physics.particle_family physics.particle.antiparticle physics.particle_family.subclasses physics.subatomic_particle_generation physics.particle_family.particles physics.particle common.image.appears_in_topic_gallery physics.subatomic_particle_generation.particles physics.particle.family physics.particle_family.parent_class physics.particle_antiparticle physics.particle_antiparticle.particle physics.particle.generation

```
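For a single entity, a schema string of this shape could be assembled as below. This is a sketch inferred only from the one example above; the function name `linearize_schema` is hypothetical, and how multiple entities are combined is not specified here, so it is left out.

```python
from typing import Sequence


def linearize_schema(entity_name: str, entity_id: str, schema_items: Sequence[str]) -> str:
    """Format one entity and its schema items as 'name: id | item item ...'."""
    # Schema items (relations and types) are separated by single spaces.
    return f"{entity_name}: {entity_id} | " + " ".join(schema_items)


schema_text = linearize_schema(
    "top antiquark",
    "m.094nrqp",
    ["physics.particle_antiparticle.self_antiparticle", "physics.particle_family"],
)
print(schema_text)
```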

**Example input**

```

Below is an instruction that describes a task, paired with an input that provides further context. Write a response that appropriately completes the request.

### Instruction:

Use the information in the following table to solve the problem, choose between the choices if they are provided. table:

col : day | kilometers row 1 : tuesday | 0 row 2 : wednesday | 0 row 3 : thursday | 4 row 4 : friday | 0 row 5 : saturday | 0

question:

Allie kept track of how many kilometers she walked during the past 5 days. What is the range of the numbers?

### Response:

```

## Intended Uses

These models are trained for research purposes. They are designed to be proficient in interpreting linearized structured input. Downstream uses can potentially include various applications requiring the interpretation of structured data.

## Limitations

While we've tried to build an SKG-specialized model capable of generalizing, we have shown that this is a challenging domain, and the model may lack the performance characteristics needed to be used directly in chat or other applications.

## Citation

If you use the models, data, or code from this project, please cite the original paper:

```
@misc{zhuang2024structlm,
  title={StructLM: Towards Building Generalist Models for Structured Knowledge Grounding},
  author={Alex Zhuang and Ge Zhang and Tianyu Zheng and Xinrun Du and Junjie Wang and Weiming Ren and Stephen W. Huang and Jie Fu and Xiang Yue and Wenhu Chen},
  year={2024},
  eprint={2402.16671},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}
```