Commit bb7cff0

Parent(s): e0cd09a

Upload 2 files

- .gitattributes +1 -0

- README.md +47 -0

- overall.png +3 -0

.gitattributes

CHANGED

|

@@ -34,3 +34,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

tokenizer.json filter=lfs diff=lfs merge=lfs -text


| 37 |

+

overall.png filter=lfs diff=lfs merge=lfs -text

|
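
The line added in this hunk routes `overall.png` through Git LFS, alongside the three existing patterns shown as context. As a rough sketch of what these attribute lines mean, the snippet below parses them and checks which paths they would mark as LFS-tracked. It uses Python's `fnmatch` as an approximation of Git's own pattern matching (an assumption: Git's rules differ in places, for example around `/` and `**`), and the helper names `parse_lfs_patterns` and `is_lfs_tracked` are hypothetical.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# The four attribute lines visible in this hunk (three context lines plus the added one).
GITATTRIBUTES = """\
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
tokenizer.json filter=lfs diff=lfs merge=lfs -text
overall.png filter=lfs diff=lfs merge=lfs -text
"""


def parse_lfs_patterns(text):
    """Return the path patterns whose attributes include filter=lfs."""
    patterns = []
    for line in text.splitlines():
        parts = line.split()
        # Skip blank lines and comments.
        if not parts or parts[0].startswith("#"):
            continue
        if "filter=lfs" in parts[1:]:
            patterns.append(parts[0])
    return patterns


def is_lfs_tracked(path, patterns):
    """Approximate check: match the file's basename against each pattern."""
    name = PurePosixPath(path).name
    return any(fnmatch(name, pattern) for pattern in patterns)


patterns = parse_lfs_patterns(GITATTRIBUTES)
for candidate in ["overall.png", "README.md", "runs/events.out.tfevents.1"]:
    print(candidate, is_lfs_tracked(candidate, patterns))
```

For an authoritative answer in a real checkout, `git check-attr filter -- <path>` reports the attribute Git itself resolves.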

README.md

ADDED

|

@@ -0,0 +1,47 @@


| 1 |

+

---

|

| 2 |

+

license: mit

|

| 3 |

+

datasets:

|

| 4 |

+

- PKU-Alignment/InterMT

|

| 5 |

+

base_model:

|

| 6 |

+

- Qwen/Qwen2.5-VL-3B-Instruct

|

| 7 |

+

---

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

# InterMT: Multi-Turn Interleaved Preference Alignment with Human Feedback

|

| 13 |

+

|

| 14 |

+

[🏠 Homepage](https://pku-intermt.github.io/) | [🤗 InterMT Dataset](https://huggingface.co/datasets/PKU-Alignment/InterMT) | [👍 InterMT-Bench](https://github.com/cby-pku/INTERMT)

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

## Abstract

|

| 18 |

+

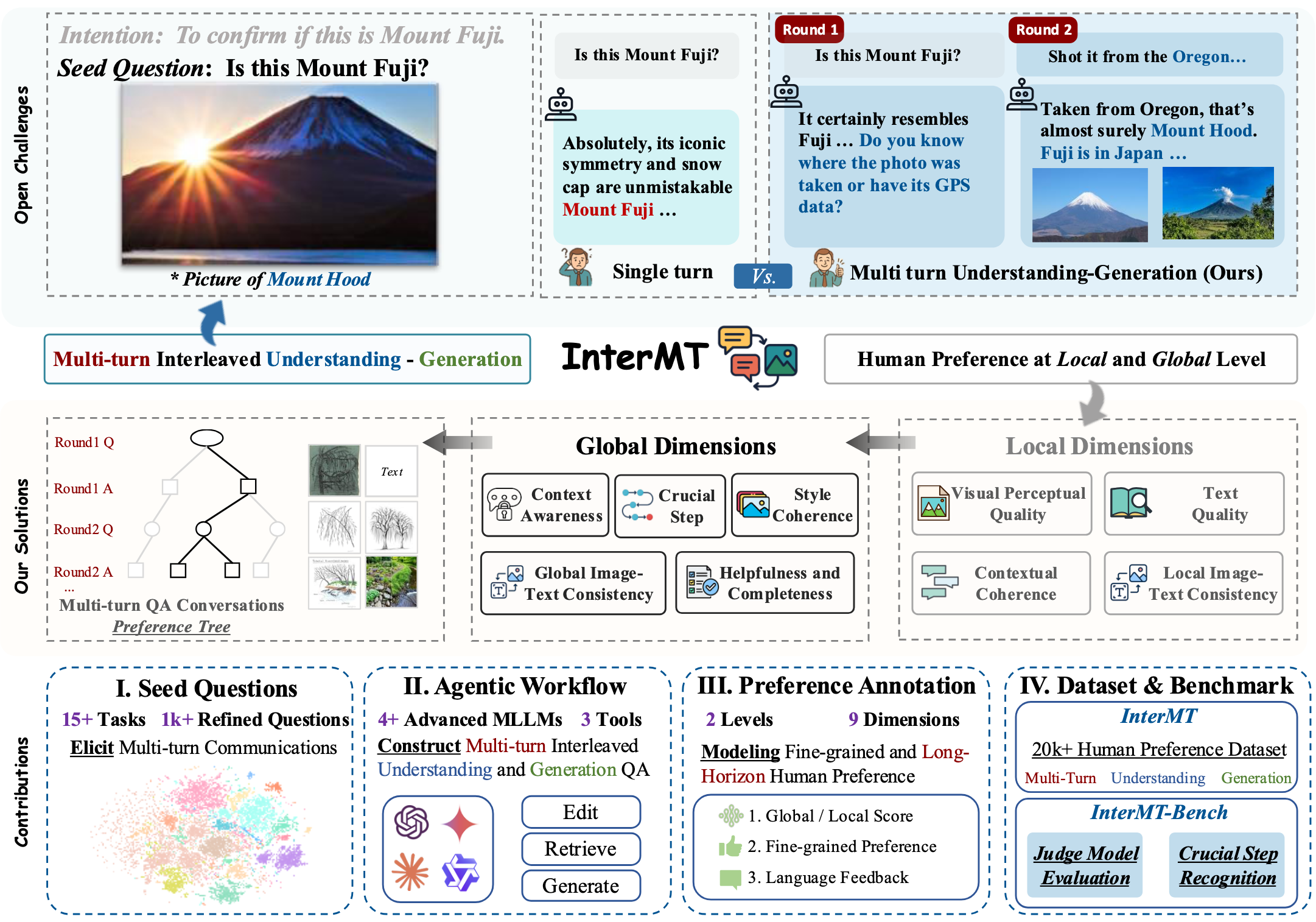

As multimodal large models (MLLMs) continue to advance across challenging tasks, a key question emerges: **_What essential capabilities are still missing?_**

|

| 19 |

+

A critical aspect of human learning is continuous interaction with the environment — not limited to language, but also involving multimodal understanding and generation. To move closer to human-level intelligence, models must similarly support **multi-turn**, **multimodal interaction**. In particular, they should comprehend interleaved multimodal contexts and respond coherently in ongoing exchanges.

|

| 20 |

+

In this work, we present **an initial exploration** through *InterMT*, **the first preference dataset for _multi-turn_ multimodal interaction**, grounded in real human feedback. In this exploration, we particularly emphasize the importance of human oversight, introducing expert annotations to guide the process, motivated by the fact that current MLLMs lack such complex interactive capabilities. *InterMT* captures human preferences at both global and local levels across nine sub-dimensions and consists of 5,437 prompts, 2.6k multi-turn dialogue instances, and 2.1k human-labeled preference pairs.

|

| 21 |

+

To compensate for the lack of capability for multimodal understanding and generation, we introduce an agentic workflow that leverages tool-augmented MLLMs to construct multi-turn QA instances.

|

| 22 |

+

To further this goal, we introduce *InterMT-Bench* to assess the ability of MLLMs in assisting judges with multi-turn, multimodal tasks. We demonstrate the utility of *InterMT* through applications such as judge moderation and further reveal the _multi-turn scaling law_ of judge models.

|

| 23 |

+

We hope that open-sourcing our data will facilitate further research on advancing the alignment of current MLLMs.

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

## InterMT-Judge

|

| 29 |

+

|

| 30 |

+

In this repository, we introduce InterMT-Judge, a model trained from [Qwen2.5-VL-3B-Instruct](https://huggingface.co/Qwen/Qwen2.5-VL-3B-Instruct). It is designed to evaluate the quality of each turn in multi-turn dialogues and achieves a 73.5% agreement rate with human assessments.
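
One common way to read the agreement rate quoted above is as the fraction of comparisons in which the judge prefers the same response as the human annotator. The sketch below computes that fraction under the assumption that each comparison is reduced to a single label (`"A"` or `"B"`). The function name `agreement_rate` and the toy labels are illustrative only; they are not taken from the InterMT code or data, and the README does not state how the 73.5% figure was computed.

```python
def agreement_rate(judge_labels, human_labels):
    """Fraction of items where the judge and the human picked the same label."""
    if len(judge_labels) != len(human_labels):
        raise ValueError("label lists must have the same length")
    if not judge_labels:
        raise ValueError("label lists must not be empty")
    matches = sum(j == h for j, h in zip(judge_labels, human_labels))
    return matches / len(judge_labels)


# Toy data (hypothetical): the judge agrees with the human on 3 of 4 turns.
judge = ["A", "B", "A", "A"]
human = ["A", "B", "B", "A"]
rate = agreement_rate(judge, human)
print(f"{rate:.1%}")  # 75.0%
```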

|

| 31 |

+

|

| 32 |

+

For more details and information, please visit our [website](https://pku-intermt.github.io).

|

| 33 |

+

|

| 34 |

+

## Citation

|

| 35 |

+

|

| 36 |

+

Please cite the repo if you find the model or code in this repo useful 😊

|

| 37 |

+

|

| 38 |

+

```bibtex

|

| 39 |

+

@article{chen2025intermt,

|

| 40 |

+

title={InterMT: Multi-Turn Interleaved Preference Alignment with Human Feedback},

|

| 41 |

+

author={Boyuan Chen and Donghai Hong and Jiaming Ji and Jiacheng Zheng and Bowen Dong and Jiayi Zhou and Kaile Wang and Josef Dai and Xuyao Wang and Wenqi Chen and Qirui Zheng and Wenxin Li and Sirui Han and Yike Guo and Yaodong Yang},

|

| 42 |

+

year={2025},

|

| 43 |

+

institution={Peking University and Hong Kong University of Science and Technology},

|

| 44 |

+

url={https://pku-intermt.github.io},

|

| 45 |

+

keywords={Multimodal Learning, Multi-Turn Interaction, Human Feedback, Preference Alignment}

|

| 46 |

+

}

|

| 47 |

+

```

|

overall.png

ADDED
