James

committed on

Upload folder using huggingface_hub

- .gitattributes +2 -0

- README.md +216 -0

- added_tokens.json +28 -0

- chat_template.jinja +86 -0

- config.json +160 -0

- generation_config.json +13 -0

- hf_quant_config.json +108 -0

- merges.txt +0 -0

- model-00001-of-00027.safetensors +3 -0

- model-00002-of-00027.safetensors +3 -0

- model-00003-of-00027.safetensors +3 -0

- model-00004-of-00027.safetensors +3 -0

- model-00005-of-00027.safetensors +3 -0

- model-00006-of-00027.safetensors +3 -0

- model-00007-of-00027.safetensors +3 -0

- model-00008-of-00027.safetensors +3 -0

- model-00009-of-00027.safetensors +3 -0

- model-00010-of-00027.safetensors +3 -0

- model-00011-of-00027.safetensors +3 -0

- model-00012-of-00027.safetensors +3 -0

- model-00013-of-00027.safetensors +3 -0

- model-00014-of-00027.safetensors +3 -0

- model-00015-of-00027.safetensors +3 -0

- model-00016-of-00027.safetensors +3 -0

- model-00017-of-00027.safetensors +3 -0

- model-00018-of-00027.safetensors +3 -0

- model-00019-of-00027.safetensors +3 -0

- model-00020-of-00027.safetensors +3 -0

- model-00021-of-00027.safetensors +3 -0

- model-00022-of-00027.safetensors +3 -0

- model-00023-of-00027.safetensors +3 -0

- model-00024-of-00027.safetensors +3 -0

- model-00025-of-00027.safetensors +3 -0

- model-00026-of-00027.safetensors +3 -0

- model-00027-of-00027.safetensors +3 -0

- model.safetensors.index.json +3 -0

- special_tokens_map.json +19 -0

- tokenizer.json +3 -0

- tokenizer_config.json +239 -0

- vocab.json +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,5 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|


| 36 |

+

tokenizer.json filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

model.safetensors.index.json filter=lfs diff=lfs merge=lfs -text

|

README.md

ADDED

|

@@ -0,0 +1,216 @@

|


| 1 |

+

---

|

| 2 |

+

library_name: transformers

|

| 3 |

+

license: apache-2.0

|

| 4 |

+

license_link: https://huggingface.co/Qwen/Qwen3-235B-A22B-Instruct-2507/blob/main/LICENSE

|

| 5 |

+

pipeline_tag: text-generation

|

| 6 |

+

---

|

| 7 |

+

|

| 8 |

+

# Qwen3-235B-A22B-Instruct-2507

|

| 9 |

+

<a href="https://chat.qwen.ai/" target="_blank" style="margin: 2px;">

|

| 10 |

+

<img alt="Chat" src="https://img.shields.io/badge/%F0%9F%92%9C%EF%B8%8F%20Qwen%20Chat%20-536af5" style="display: inline-block; vertical-align: middle;"/>

|

| 11 |

+

</a>

|

| 12 |

+

|

| 13 |

+

## Highlights

|

| 14 |

+

|

| 15 |

+

We introduce the updated version of the **Qwen3-235B-A22B non-thinking mode**, named **Qwen3-235B-A22B-Instruct-2507**, featuring the following key enhancements:

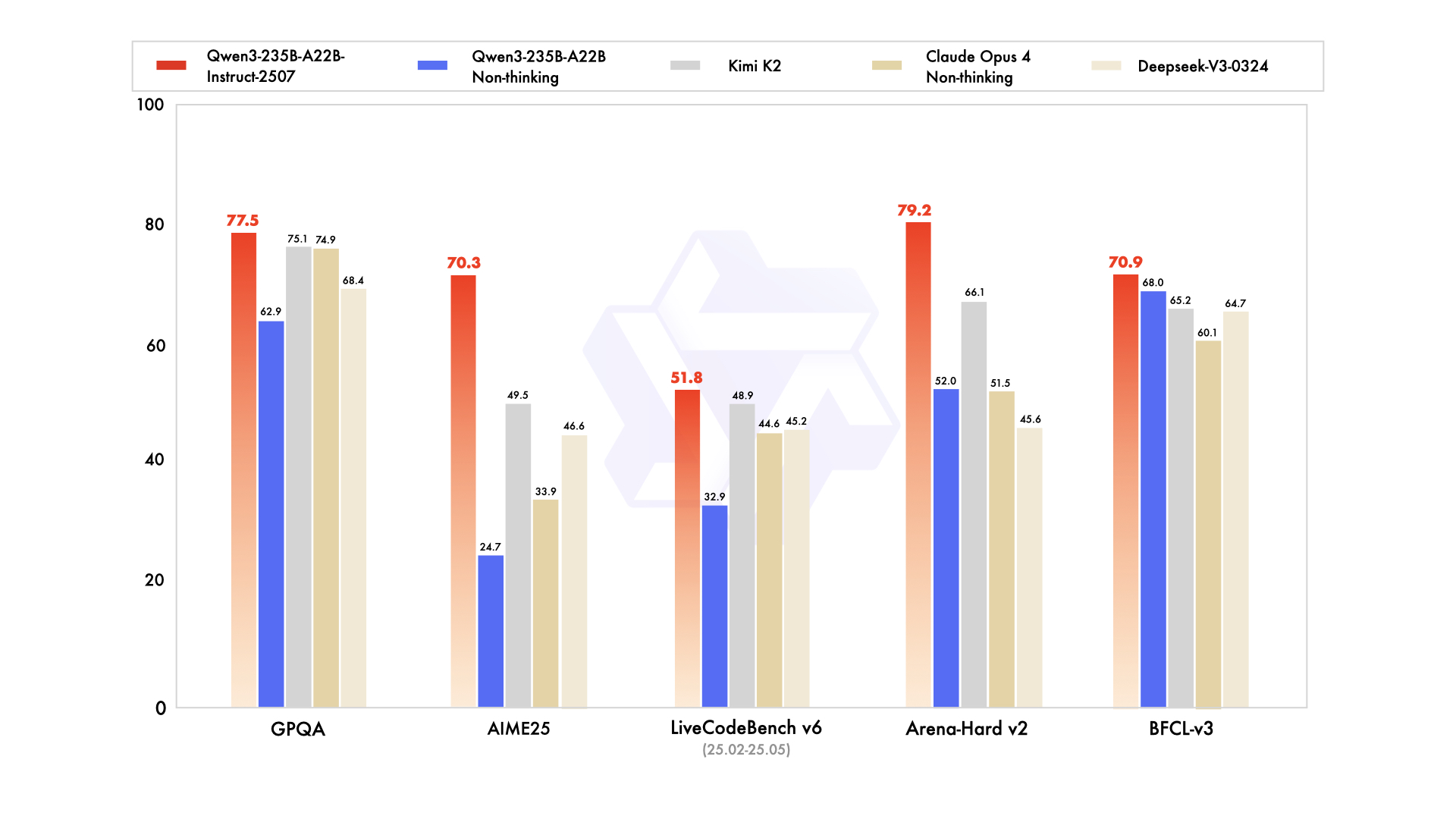

|

| 16 |

+

|

| 17 |

+

- **Significant improvements** in general capabilities, including **instruction following, logical reasoning, text comprehension, mathematics, science, coding and tool usage**.

|

| 18 |

+

- **Substantial gains** in long-tail knowledge coverage across **multiple languages**.

|

| 19 |

+

- **Markedly better alignment** with user preferences in **subjective and open-ended tasks**, enabling more helpful responses and higher-quality text generation.

|

| 20 |

+

- **Enhanced capabilities** in **256K long-context understanding**.

|

| 21 |

+

|

| 22 |

+

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

## Model Overview

|

| 26 |

+

|

| 27 |

+

**Qwen3-235B-A22B-Instruct-2507** has the following features:

|

| 28 |

+

- Type: Causal Language Models

|

| 29 |

+

- Training Stage: Pretraining & Post-training

|

| 30 |

+

- Number of Parameters: 235B in total and 22B activated

|

| 31 |

+

- Number of Parameters (Non-Embedding): 234B

|

| 32 |

+

- Number of Layers: 94

|

| 33 |

+

- Number of Attention Heads (GQA): 64 for Q and 4 for KV

|

| 34 |

+

- Number of Experts: 128

|

| 35 |

+

- Number of Activated Experts: 8

|

| 36 |

+

- Context Length: **262,144 natively**.

|
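As a rough illustration of what the figures above imply for memory, the sketch below estimates KV-cache size from the layer count, KV-head count, and context length listed here, together with `head_dim = 128` from the `config.json` in this commit. The function name and the bytes-per-element choices (2 for bfloat16, 1 for an FP8 cache) are assumptions made for illustration; real serving engines add their own overhead on top.

```python
def kv_cache_bytes(tokens: int, layers: int = 94, kv_heads: int = 4,
                   head_dim: int = 128, bytes_per_elem: int = 2) -> int:
    """Bytes needed to cache keys and values for `tokens` positions."""
    # Factor 2: one key vector and one value vector per KV head per layer.
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens


per_token = kv_cache_bytes(1)                          # bfloat16, one token
full_bf16 = kv_cache_bytes(262_144)                    # bfloat16, full context
full_fp8 = kv_cache_bytes(262_144, bytes_per_elem=1)   # FP8 cache, full context

print(per_token)            # bytes per token
print(full_bf16 / 2**30)    # GiB for one full-length sequence
print(full_fp8 / 2**30)
```

Because only 4 KV heads are cached instead of 64, the cache is 16 times smaller than it would be with full multi-head attention, which is what makes a 262,144-token context plausible on a multi-GPU node.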

| 37 |

+

|

| 38 |

+

**NOTE: This model supports only non-thinking mode and does not generate ``<think></think>`` blocks in its output. Meanwhile, specifying `enable_thinking=False` is no longer required.**

|

| 39 |

+

|

| 40 |

+

For more details, including benchmark evaluation, hardware requirements, and inference performance, please refer to our [blog](https://qwenlm.github.io/blog/qwen3/), [GitHub](https://github.com/QwenLM/Qwen3), and [Documentation](https://qwen.readthedocs.io/en/latest/).

|

| 41 |

+

|

| 42 |

+

|

| 43 |

+

## Performance

|

| 44 |

+

|

| 45 |

+

| | Deepseek-V3-0324 | GPT-4o-0327 | Claude Opus 4 Non-thinking | Kimi K2 | Qwen3-235B-A22B Non-thinking | Qwen3-235B-A22B-Instruct-2507 |

|

| 46 |

+

|--- | --- | --- | --- | --- | --- | ---|

|

| 47 |

+

| **Knowledge** | | | | | | |

|

| 48 |

+

| MMLU-Pro | 81.2 | 79.8 | **86.6** | 81.1 | 75.2 | 83.0 |

|

| 49 |

+

| MMLU-Redux | 90.4 | 91.3 | **94.2** | 92.7 | 89.2 | 93.1 |

|

| 50 |

+

| GPQA | 68.4 | 66.9 | 74.9 | 75.1 | 62.9 | **77.5** |

|

| 51 |

+

| SuperGPQA | 57.3 | 51.0 | 56.5 | 57.2 | 48.2 | **62.6** |

|

| 52 |

+

| SimpleQA | 27.2 | 40.3 | 22.8 | 31.0 | 12.2 | **54.3** |

|

| 53 |

+

| CSimpleQA | 71.1 | 60.2 | 68.0 | 74.5 | 60.8 | **84.3** |

|

| 54 |

+

| **Reasoning** | | | | | | |

|

| 55 |

+

| AIME25 | 46.6 | 26.7 | 33.9 | 49.5 | 24.7 | **70.3** |

|

| 56 |

+

| HMMT25 | 27.5 | 7.9 | 15.9 | 38.8 | 10.0 | **55.4** |

|

| 57 |

+

| ARC-AGI | 9.0 | 8.8 | 30.3 | 13.3 | 4.3 | **41.8** |

|

| 58 |

+

| ZebraLogic | 83.4 | 52.6 | - | 89.0 | 37.7 | **95.0** |

|

| 59 |

+

| LiveBench 20241125 | 66.9 | 63.7 | 74.6 | **76.4** | 62.5 | 75.4 |

|

| 60 |

+

| **Coding** | | | | | | |

|

| 61 |

+

| LiveCodeBench v6 (25.02-25.05) | 45.2 | 35.8 | 44.6 | 48.9 | 32.9 | **51.8** |

|

| 62 |

+

| MultiPL-E | 82.2 | 82.7 | **88.5** | 85.7 | 79.3 | 87.9 |

|

| 63 |

+

| Aider-Polyglot | 55.1 | 45.3 | **70.7** | 59.0 | 59.6 | 57.3 |

|

| 64 |

+

| **Alignment** | | | | | | |

|

| 65 |

+

| IFEval | 82.3 | 83.9 | 87.4 | **89.8** | 83.2 | 88.7 |

|

| 66 |

+

| Arena-Hard v2* | 45.6 | 61.9 | 51.5 | 66.1 | 52.0 | **79.2** |

|

| 67 |

+

| Creative Writing v3 | 81.6 | 84.9 | 83.8 | **88.1** | 80.4 | 87.5 |

|

| 68 |

+

| WritingBench | 74.5 | 75.5 | 79.2 | **86.2** | 77.0 | 85.2 |

|

| 69 |

+

| **Agent** | | | | | | |

|

| 70 |

+

| BFCL-v3 | 64.7 | 66.5 | 60.1 | 65.2 | 68.0 | **70.9** |

|

| 71 |

+

| TAU-Retail | 49.6 | 60.3# | **81.4** | 70.7 | 65.2 | 71.3 |

|

| 72 |

+

| TAU-Airline | 32.0 | 42.8# | **59.6** | 53.5 | 32.0 | 44.0 |

|

| 73 |

+

| **Multilingualism** | | | | | | |

|

| 74 |

+

| MultiIF | 66.5 | 70.4 | - | 76.2 | 70.2 | **77.5** |

|

| 75 |

+

| MMLU-ProX | 75.8 | 76.2 | - | 74.5 | 73.2 | **79.4** |

|

| 76 |

+

| INCLUDE | 80.1 | **82.1** | - | 76.9 | 75.6 | 79.5 |

|

| 77 |

+

| PolyMATH | 32.2 | 25.5 | 30.0 | 44.8 | 27.0 | **50.2** |

|

| 78 |

+

|

| 79 |

+

*: For reproducibility, we report the win rates evaluated by GPT-4.1.

|

| 80 |

+

|

| 81 |

+

\#: Results were generated using GPT-4o-20241120, as access to the native function calling API of GPT-4o-0327 was unavailable.

|

| 82 |

+

|

| 83 |

+

|

| 84 |

+

## Quickstart

|

| 85 |

+

|

| 86 |

+

The code for Qwen3-MoE is included in recent releases of Hugging Face `transformers`, and we advise you to use the latest version of `transformers`.

|

| 87 |

+

|

| 88 |

+

With `transformers<4.51.0`, you will encounter the following error:

|

| 89 |

+

```

|

| 90 |

+

KeyError: 'qwen3_moe'

|

| 91 |

+

```

|
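To fail early with a clearer message than the `KeyError` above, a version check along these lines may help. This is a minimal sketch: the helper names are hypothetical, and the parser deliberately ignores pre-release suffixes such as `.dev0`, so treat the result as a hint rather than a guarantee.

```python
import re

# Minimum release that recognises `qwen3_moe`, per the text above.
MIN_TRANSFORMERS = (4, 51, 0)


def parse_release(version: str) -> tuple:
    """Turn '4.53.3' or '4.51.0.dev0' into a comparable (major, minor, patch) tuple."""
    numbers = re.findall(r"\d+", version)[:3]
    numbers += ["0"] * (3 - len(numbers))  # pad short versions such as '4.51'
    return tuple(int(n) for n in numbers)


def supports_qwen3_moe(version: str) -> bool:
    """True if the given transformers version string is new enough."""
    return parse_release(version) >= MIN_TRANSFORMERS
```

In practice you would pass `transformers.__version__` to `supports_qwen3_moe` before calling `from_pretrained`.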

| 92 |

+

|

| 93 |

+

The following code snippet illustrates how to use the model to generate content based on given inputs.

|

| 94 |

+

```python

|

| 95 |

+

from transformers import AutoModelForCausalLM, AutoTokenizer

|

| 96 |

+

|

| 97 |

+

model_name = "Qwen/Qwen3-235B-A22B-Instruct-2507"

|

| 98 |

+

|

| 99 |

+

# load the tokenizer and the model

|

| 100 |

+

tokenizer = AutoTokenizer.from_pretrained(model_name)

|

| 101 |

+

model = AutoModelForCausalLM.from_pretrained(

|

| 102 |

+

model_name,

|

| 103 |

+

torch_dtype="auto",

|

| 104 |

+

device_map="auto"

|

| 105 |

+

)

|

| 106 |

+

|

| 107 |

+

# prepare the model input

|

| 108 |

+

prompt = "Give me a short introduction to large language model."

|

| 109 |

+

messages = [

|

| 110 |

+

{"role": "user", "content": prompt}

|

| 111 |

+

]

|

| 112 |

+

text = tokenizer.apply_chat_template(

|

| 113 |

+

messages,

|

| 114 |

+

tokenize=False,

|

| 115 |

+

add_generation_prompt=True,

|

| 116 |

+

)

|

| 117 |

+

model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

|

| 118 |

+

|

| 119 |

+

# conduct text completion

|

| 120 |

+

generated_ids = model.generate(

|

| 121 |

+

**model_inputs,

|

| 122 |

+

max_new_tokens=16384

|

| 123 |

+

)

|

| 124 |

+

output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

|

| 125 |

+

|

| 126 |

+

content = tokenizer.decode(output_ids, skip_special_tokens=True)

|

| 127 |

+

|

| 128 |

+

print("content:", content)

|

| 129 |

+

```

|

| 130 |

+

|

| 131 |

+

For deployment, you can use `sglang>=0.4.6.post1` or `vllm>=0.8.5` to create an OpenAI-compatible API endpoint:

|

| 132 |

+

- SGLang:

|

| 133 |

+

```shell

|

| 134 |

+

python -m sglang.launch_server --model-path Qwen/Qwen3-235B-A22B-Instruct-2507 --tp 8 --context-length 262144

|

| 135 |

+

```

|

| 136 |

+

- vLLM:

|

| 137 |

+

```shell

|

| 138 |

+

vllm serve Qwen/Qwen3-235B-A22B-Instruct-2507 --tensor-parallel-size 8 --max-model-len 262144

|

| 139 |

+

```

|

| 140 |

+

|

| 141 |

+

**Note: If you encounter out-of-memory (OOM) issues, consider reducing the context length to a shorter value, such as `32,768`.**

|
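Once one of the servers above is running, a client can talk to it over plain HTTP. The sketch below uses only the Python standard library and assumes the endpoint is reachable at `http://localhost:8000/v1` (adjust the host and port to match your launch command). The function names are hypothetical, and the sampling values are the ones recommended under Best Practices.

```python
import json
import urllib.request


def build_chat_request(prompt: str,
                       base_url: str = "http://localhost:8000/v1",  # assumed address
                       model: str = "Qwen/Qwen3-235B-A22B-Instruct-2507",
                       ) -> urllib.request.Request:
    """Build a POST request for the OpenAI-style /chat/completions route."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.7,
        "top_p": 0.8,
        "max_tokens": 16384,
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(prompt: str) -> str:
    """Send the request and return the assistant's reply text."""
    with urllib.request.urlopen(build_chat_request(prompt)) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `chat("Give me a short introduction to large language models.")` requires a live server; `build_chat_request` can be inspected offline.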

| 142 |

+

|

| 143 |

+

For local use, applications such as Ollama, LMStudio, MLX-LM, llama.cpp, and KTransformers also support Qwen3.

|

| 144 |

+

|

| 145 |

+

## Agentic Use

|

| 146 |

+

|

| 147 |

+

Qwen3 excels in tool calling capabilities. We recommend using [Qwen-Agent](https://github.com/QwenLM/Qwen-Agent) to make the best use of the agentic ability of Qwen3. Qwen-Agent encapsulates tool-calling templates and tool-calling parsers internally, greatly reducing coding complexity.

|

| 148 |

+

|

| 149 |

+

To define the available tools, you can use the MCP configuration file, use the tools built into Qwen-Agent, or integrate other tools yourself.

|

| 150 |

+

```python

|

| 151 |

+

from qwen_agent.agents import Assistant

|

| 152 |

+

|

| 153 |

+

# Define LLM

|

| 154 |

+

llm_cfg = {

|

| 155 |

+

'model': 'Qwen3-235B-A22B-Instruct-2507',

|

| 156 |

+

|

| 157 |

+

# Use a custom endpoint compatible with OpenAI API:

|

| 158 |

+

'model_server': 'http://localhost:8000/v1', # api_base

|

| 159 |

+

'api_key': 'EMPTY',

|

| 160 |

+

}

|

| 161 |

+

|

| 162 |

+

# Define Tools

|

| 163 |

+

tools = [

|

| 164 |

+

{'mcpServers': { # You can specify the MCP configuration file

|

| 165 |

+

'time': {

|

| 166 |

+

'command': 'uvx',

|

| 167 |

+

'args': ['mcp-server-time', '--local-timezone=Asia/Shanghai']

|

| 168 |

+

},

|

| 169 |

+

"fetch": {

|

| 170 |

+

"command": "uvx",

|

| 171 |

+

"args": ["mcp-server-fetch"]

|

| 172 |

+

}

|

| 173 |

+

}

|

| 174 |

+

},

|

| 175 |

+

'code_interpreter', # Built-in tools

|

| 176 |

+

]

|

| 177 |

+

|

| 178 |

+

# Define Agent

|

| 179 |

+

bot = Assistant(llm=llm_cfg, function_list=tools)

|

| 180 |

+

|

| 181 |

+

# Streaming generation

|

| 182 |

+

messages = [{'role': 'user', 'content': 'https://qwenlm.github.io/blog/ Introduce the latest developments of Qwen'}]

|

| 183 |

+

for responses in bot.run(messages=messages):

|

| 184 |

+

    pass

|

| 185 |

+

print(responses)

|

| 186 |

+

```

|
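If you handle tool calls yourself instead of relying on Qwen-Agent, the raw model output has to be parsed. The `chat_template.jinja` in this commit instructs the model to emit a JSON object inside `<tool_call></tool_call>` tags, so a parser could look roughly like the sketch below. It is not Qwen-Agent's actual implementation; the function name and the `get_time` tool in the usage note are hypothetical.

```python
import json
import re

# Capture the JSON object between <tool_call> and </tool_call>.
TOOL_CALL_RE = re.compile(r"<tool_call>\s*(\{.*?\})\s*</tool_call>", re.DOTALL)


def extract_tool_calls(text: str) -> list:
    """Return every well-formed tool call found in the model output."""
    calls = []
    for raw in TOOL_CALL_RE.findall(text):
        try:
            calls.append(json.loads(raw))
        except json.JSONDecodeError:
            # Skip malformed JSON rather than failing the whole turn.
            continue
    return calls
```

For example, passing `'<tool_call>\n{"name": "get_time", "arguments": {"timezone": "Asia/Shanghai"}}\n</tool_call>'` yields a single dictionary with `name` and `arguments` keys.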

| 187 |

+

|

| 188 |

+

## Best Practices

|

| 189 |

+

|

| 190 |

+

To achieve optimal performance, we recommend the following settings:

|

| 191 |

+

|

| 192 |

+

1. **Sampling Parameters**:

|

| 193 |

+

- We suggest using `Temperature=0.7`, `TopP=0.8`, `TopK=20`, and `MinP=0`.

|

| 194 |

+

- For supported frameworks, you can adjust the `presence_penalty` parameter between 0 and 2 to reduce endless repetitions. However, using a higher value may occasionally result in language mixing and a slight decrease in model performance.

|

| 195 |

+

|

| 196 |

+

2. **Adequate Output Length**: We recommend using an output length of 16,384 tokens for most queries, which is adequate for instruct models.

|

| 197 |

+

|

| 198 |

+

3. **Standardize Output Format**: We recommend using prompts to standardize model outputs when benchmarking.

|

| 199 |

+

- **Math Problems**: Include "Please reason step by step, and put your final answer within \boxed{}." in the prompt.

|

| 200 |

+

- **Multiple-Choice Questions**: Add the following JSON structure to the prompt to standardize responses: "Please show your choice in the `answer` field with only the choice letter, e.g., `"answer": "C"`."

|
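The recommendations above can be collected into a few small helpers. This is a sketch under assumptions: the dictionary key names are illustrative (frameworks spell these parameters differently), the helper names are hypothetical, and `extract_boxed` does not handle nested braces such as `\boxed{\frac{1}{2}}`.

```python
import re

# Suggested sampling values from item 1; key spellings are an assumption.
SAMPLING = {"temperature": 0.7, "top_p": 0.8, "top_k": 20, "min_p": 0.0}

MATH_SUFFIX = "Please reason step by step, and put your final answer within \\boxed{}."
MCQ_SUFFIX = ('Please show your choice in the `answer` field with only the choice letter, '
              'e.g., `"answer": "C"`.')


def with_format_hint(question: str, kind: str) -> str:
    """Append the benchmark-style format instruction for 'math' or 'mcq' prompts."""
    suffix = MATH_SUFFIX if kind == "math" else MCQ_SUFFIX
    return f"{question}\n\n{suffix}"


def extract_boxed(text: str):
    """Return the content of the last simple \\boxed{...} in the text, or None."""
    matches = re.findall(r"\\boxed\{([^{}]*)\}", text)
    return matches[-1] if matches else None


def extract_choice(text: str):
    """Return the letter from an `"answer": "C"` field, or None."""
    match = re.search(r'"answer"\s*:\s*"([A-Z])"', text)
    return match.group(1) if match else None
```

Standardizing the prompt and the extraction step together keeps benchmark scoring from depending on free-form phrasing in the response.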

| 201 |

+

|

| 202 |

+

### Citation

|

| 203 |

+

|

| 204 |

+

If you find our work helpful, feel free to cite it.

|

| 205 |

+

|

| 206 |

+

```

|

| 207 |

+

@misc{qwen3technicalreport,

|

| 208 |

+

title={Qwen3 Technical Report},

|

| 209 |

+

author={Qwen Team},

|

| 210 |

+

year={2025},

|

| 211 |

+

eprint={2505.09388},

|

| 212 |

+

archivePrefix={arXiv},

|

| 213 |

+

primaryClass={cs.CL},

|

| 214 |

+

url={https://arxiv.org/abs/2505.09388},

|

| 215 |

+

}

|

| 216 |

+

```

|

added_tokens.json

ADDED

|

@@ -0,0 +1,28 @@

|


| 1 |

+

{

|

| 2 |

+

"</think>": 151668,

|

| 3 |

+

"</tool_call>": 151658,

|

| 4 |

+

"</tool_response>": 151666,

|

| 5 |

+

"<think>": 151667,

|

| 6 |

+

"<tool_call>": 151657,

|

| 7 |

+

"<tool_response>": 151665,

|

| 8 |

+

"<|box_end|>": 151649,

|

| 9 |

+

"<|box_start|>": 151648,

|

| 10 |

+

"<|endoftext|>": 151643,

|

| 11 |

+

"<|file_sep|>": 151664,

|

| 12 |

+

"<|fim_middle|>": 151660,

|

| 13 |

+

"<|fim_pad|>": 151662,

|

| 14 |

+

"<|fim_prefix|>": 151659,

|

| 15 |

+

"<|fim_suffix|>": 151661,

|

| 16 |

+

"<|im_end|>": 151645,

|

| 17 |

+

"<|im_start|>": 151644,

|

| 18 |

+

"<|image_pad|>": 151655,

|

| 19 |

+

"<|object_ref_end|>": 151647,

|

| 20 |

+

"<|object_ref_start|>": 151646,

|

| 21 |

+

"<|quad_end|>": 151651,

|

| 22 |

+

"<|quad_start|>": 151650,

|

| 23 |

+

"<|repo_name|>": 151663,

|

| 24 |

+

"<|video_pad|>": 151656,

|

| 25 |

+

"<|vision_end|>": 151653,

|

| 26 |

+

"<|vision_pad|>": 151654,

|

| 27 |

+

"<|vision_start|>": 151652

|

| 28 |

+

}

|

chat_template.jinja

ADDED

|

@@ -0,0 +1,86 @@

|


| 1 |

+

{%- if tools %}

|

| 2 |

+

{{- '<|im_start|>system\n' }}

|

| 3 |

+

{%- if messages[0].role == 'system' %}

|

| 4 |

+

{{- messages[0].content + '\n\n' }}

|

| 5 |

+

{%- endif %}

|

| 6 |

+

{{- "# Tools\n\nYou may call one or more functions to assist with the user query.\n\nYou are provided with function signatures within <tools></tools> XML tags:\n<tools>" }}

|

| 7 |

+

{%- for tool in tools %}

|

| 8 |

+

{{- "\n" }}

|

| 9 |

+

{{- tool | tojson }}

|

| 10 |

+

{%- endfor %}

|

| 11 |

+

{{- "\n</tools>\n\nFor each function call, return a json object with function name and arguments within <tool_call></tool_call> XML tags:\n<tool_call>\n{\"name\": <function-name>, \"arguments\": <args-json-object>}\n</tool_call><|im_end|>\n" }}

|

| 12 |

+

{%- else %}

|

| 13 |

+

{%- if messages[0].role == 'system' %}

|

| 14 |

+

{{- '<|im_start|>system\n' + messages[0].content + '<|im_end|>\n' }}

|

| 15 |

+

{%- endif %}

|

| 16 |

+

{%- endif %}

|

| 17 |

+

{%- set ns = namespace(multi_step_tool=true, last_query_index=messages|length - 1) %}

|

| 18 |

+

{%- for message in messages[::-1] %}

|

| 19 |

+

{%- set index = (messages|length - 1) - loop.index0 %}

|

| 20 |

+

{%- if ns.multi_step_tool and message.role == "user" and message.content is string and not(message.content.startswith('<tool_response>') and message.content.endswith('</tool_response>')) %}

|

| 21 |

+

{%- set ns.multi_step_tool = false %}

|

| 22 |

+

{%- set ns.last_query_index = index %}

|

| 23 |

+

{%- endif %}

|

| 24 |

+

{%- endfor %}

|

| 25 |

+

{%- for message in messages %}

|

| 26 |

+

{%- if message.content is string %}

|

| 27 |

+

{%- set content = message.content %}

|

| 28 |

+

{%- else %}

|

| 29 |

+

{%- set content = '' %}

|

| 30 |

+

{%- endif %}

|

| 31 |

+

{%- if (message.role == "user") or (message.role == "system" and not loop.first) %}

|

| 32 |

+

{{- '<|im_start|>' + message.role + '\n' + content + '<|im_end|>' + '\n' }}

|

| 33 |

+

{%- elif message.role == "assistant" %}

|

| 34 |

+

{%- set reasoning_content = '' %}

|

| 35 |

+

{%- if message.reasoning_content is string %}

|

| 36 |

+

{%- set reasoning_content = message.reasoning_content %}

|

| 37 |

+

{%- else %}

|

| 38 |

+

{%- if '</think>' in content %}

|

| 39 |

+

{%- set reasoning_content = content.split('</think>')[0].rstrip('\n').split('<think>')[-1].lstrip('\n') %}

|

| 40 |

+

{%- set content = content.split('</think>')[-1].lstrip('\n') %}

|

| 41 |

+

{%- endif %}

|

| 42 |

+

{%- endif %}

|

| 43 |

+

{%- if loop.index0 > ns.last_query_index %}

|

| 44 |

+

{%- if loop.last or (not loop.last and reasoning_content) %}

|

| 45 |

+

{{- '<|im_start|>' + message.role + '\n<think>\n' + reasoning_content.strip('\n') + '\n</think>\n\n' + content.lstrip('\n') }}

|

| 46 |

+

{%- else %}

|

| 47 |

+

{{- '<|im_start|>' + message.role + '\n' + content }}

|

| 48 |

+

{%- endif %}

|

| 49 |

+

{%- else %}

|

| 50 |

+

{{- '<|im_start|>' + message.role + '\n' + content }}

|

| 51 |

+

{%- endif %}

|

| 52 |

+

{%- if message.tool_calls %}

|

| 53 |

+

{%- for tool_call in message.tool_calls %}

|

| 54 |

+

{%- if (loop.first and content) or (not loop.first) %}

|

| 55 |

+

{{- '\n' }}

|

| 56 |

+

{%- endif %}

|

| 57 |

+

{%- if tool_call.function %}

|

| 58 |

+

{%- set tool_call = tool_call.function %}

|

| 59 |

+

{%- endif %}

|

| 60 |

+

{{- '<tool_call>\n{"name": "' }}

|

| 61 |

+

{{- tool_call.name }}

|

| 62 |

+

{{- '", "arguments": ' }}

|

| 63 |

+

{%- if tool_call.arguments is string %}

|

| 64 |

+

{{- tool_call.arguments }}

|

| 65 |

+

{%- else %}

|

| 66 |

+

{{- tool_call.arguments | tojson }}

|

| 67 |

+

{%- endif %}

|

| 68 |

+

{{- '}\n</tool_call>' }}

|

| 69 |

+

{%- endfor %}

|

| 70 |

+

{%- endif %}

|

| 71 |

+

{{- '<|im_end|>\n' }}

|

| 72 |

+

{%- elif message.role == "tool" %}

|

| 73 |

+

{%- if loop.first or (messages[loop.index0 - 1].role != "tool") %}

|

| 74 |

+

{{- '<|im_start|>user' }}

|

| 75 |

+

{%- endif %}

|

| 76 |

+

{{- '\n<tool_response>\n' }}

|

| 77 |

+

{{- content }}

|

| 78 |

+

{{- '\n</tool_response>' }}

|

| 79 |

+

{%- if loop.last or (messages[loop.index0 + 1].role != "tool") %}

|

| 80 |

+

{{- '<|im_end|>\n' }}

|

| 81 |

+

{%- endif %}

|

| 82 |

+

{%- endif %}

|

| 83 |

+

{%- endfor %}

|

| 84 |

+

{%- if add_generation_prompt %}

|

| 85 |

+

{{- '<|im_start|>assistant\n' }}

|

| 86 |

+

{%- endif %}

|
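For the simplest case, the template above reduces to plain ChatML framing. The sketch below re-implements only that path in Python so the output format is easy to see. It assumes no tools, string-only message content, a conversation that ends with a user turn, and assistant turns without `<think>` blocks; the function name is hypothetical, and in real use `tokenizer.apply_chat_template` should be preferred.

```python
def render_chatml(messages: list, add_generation_prompt: bool = True) -> str:
    """Render system/user/assistant turns the way the template does without tools."""
    parts = []
    for message in messages:
        role, content = message["role"], message["content"]
        if role not in ("system", "user", "assistant"):
            # Tool turns and tool calls are outside the scope of this sketch.
            raise ValueError(f"unsupported role in this sketch: {role}")
        parts.append(f"<|im_start|>{role}\n{content}<|im_end|>\n")
    if add_generation_prompt:
        # Open the assistant turn so the model continues from here.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


print(render_chatml([
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Hi"},
]))
```

Note that the generation prompt contains no `<think>` tag, consistent with the model card's statement that this model runs in non-thinking mode only.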

config.json

ADDED

|

@@ -0,0 +1,160 @@

|


| 1 |

+

{

|

| 2 |

+

"architectures": [

|

| 3 |

+

"Qwen3MoeForCausalLM"

|

| 4 |

+

],

|

| 5 |

+

"attention_bias": false,

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 151643,

|

| 8 |

+

"decoder_sparse_step": 1,

|

| 9 |

+

"eos_token_id": 151645,

|

| 10 |

+

"head_dim": 128,

|

| 11 |

+

"hidden_act": "silu",

|

| 12 |

+

"hidden_size": 4096,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 12288,

|

| 15 |

+

"max_position_embeddings": 262144,

|

| 16 |

+

"max_window_layers": 94,

|

| 17 |

+

"mlp_only_layers": [],

|

| 18 |

+

"model_type": "qwen3_moe",

|

| 19 |

+

"moe_intermediate_size": 1536,

|

| 20 |

+

"norm_topk_prob": true,

|

| 21 |

+

"num_attention_heads": 64,

|

| 22 |

+

"num_experts": 128,

|

| 23 |

+

"num_experts_per_tok": 8,

|

| 24 |

+

"num_hidden_layers": 94,

|

| 25 |

+

"num_key_value_heads": 4,

|

| 26 |

+

"output_router_logits": false,

|

| 27 |

+

"rms_norm_eps": 1e-06,

|

| 28 |

+

"rope_scaling": null,

|

| 29 |

+

"rope_theta": 5000000,

|

| 30 |

+

"router_aux_loss_coef": 0.001,

|

| 31 |

+

"sliding_window": null,

|

| 32 |

+

"tie_word_embeddings": false,

|

| 33 |

+

"torch_dtype": "bfloat16",

|

| 34 |

+

"transformers_version": "4.53.3",

|

| 35 |

+

"use_cache": true,

|

| 36 |

+

"use_sliding_window": false,

|

| 37 |

+

"vocab_size": 151936,

|

| 38 |

+

"quantization_config": {

|

| 39 |

+

"config_groups": {

|

| 40 |

+

"group_0": {

|

| 41 |

+

"input_activations": {

|

| 42 |

+

"dynamic": false,

|

| 43 |

+

"num_bits": 4,

|

| 44 |

+

"type": "float",

|

| 45 |

+

"group_size": 16

|

| 46 |

+

},

|

| 47 |

+

"weights": {

|

| 48 |

+

"dynamic": false,

|

| 49 |

+

"num_bits": 4,

|

| 50 |

+

"type": "float",

|

| 51 |

+

"group_size": 16

|

| 52 |

+

}

|

| 53 |

+

}

|

| 54 |

+

},

|

| 55 |

+

"ignore": [

|

| 56 |

+

"model.layers.0.mlp.gate",

|

| 57 |

+

"model.layers.1.mlp.gate",

|

| 58 |

+

"model.layers.10.mlp.gate",

|

| 59 |

+

"model.layers.11.mlp.gate",

|

| 60 |

+

"model.layers.12.mlp.gate",

|

| 61 |

+

"model.layers.13.mlp.gate",

|

| 62 |

+

"model.layers.14.mlp.gate",

|

| 63 |

+

"model.layers.15.mlp.gate",

|

| 64 |

+

"model.layers.16.mlp.gate",

|

| 65 |

+

"model.layers.17.mlp.gate",

|

| 66 |

+

"model.layers.18.mlp.gate",

|

| 67 |

+

"model.layers.19.mlp.gate",

|

| 68 |

+

"model.layers.2.mlp.gate",

|

| 69 |

+

"model.layers.20.mlp.gate",

|

| 70 |

+

"model.layers.21.mlp.gate",

|

| 71 |

+

"model.layers.22.mlp.gate",

|

| 72 |

+

"model.layers.23.mlp.gate",

|

| 73 |

+

"model.layers.24.mlp.gate",

|

| 74 |

+

"model.layers.25.mlp.gate",

|

| 75 |

+

"model.layers.26.mlp.gate",

|

| 76 |

+

"model.layers.27.mlp.gate",

|

| 77 |

+

"model.layers.28.mlp.gate",

|

| 78 |

+

"model.layers.29.mlp.gate",

|

| 79 |

+

"model.layers.3.mlp.gate",

|

| 80 |

+

"model.layers.30.mlp.gate",

|

| 81 |

+

"model.layers.31.mlp.gate",

|

| 82 |

+

"model.layers.32.mlp.gate",

|

| 83 |

+

"model.layers.33.mlp.gate",

|

| 84 |

+

"model.layers.34.mlp.gate",

|

| 85 |

+

"model.layers.35.mlp.gate",

|

| 86 |

+

"model.layers.36.mlp.gate",

|

| 87 |

+

"model.layers.37.mlp.gate",

|

| 88 |

+

"model.layers.38.mlp.gate",

|

| 89 |

+

"model.layers.39.mlp.gate",

|

| 90 |

+

"model.layers.4.mlp.gate",

|

| 91 |

+

"model.layers.40.mlp.gate",

|

| 92 |

+

"model.layers.41.mlp.gate",

|

| 93 |

+

"model.layers.42.mlp.gate",

|

| 94 |

+

"model.layers.43.mlp.gate",

|

| 95 |

+

"model.layers.44.mlp.gate",

|

| 96 |

+

"model.layers.45.mlp.gate",

|

| 97 |

+

"model.layers.46.mlp.gate",

|

| 98 |

+

"model.layers.47.mlp.gate",

|

| 99 |

+

"model.layers.48.mlp.gate",

|

| 100 |

+

"model.layers.49.mlp.gate",

|

| 101 |

+

"model.layers.5.mlp.gate",

|

| 102 |

+

"model.layers.50.mlp.gate",

|

| 103 |

+

"model.layers.51.mlp.gate",

|

| 104 |

+

"model.layers.52.mlp.gate",

|

| 105 |

+

"model.layers.53.mlp.gate",

|

| 106 |

+

"model.layers.54.mlp.gate",

|

| 107 |

+

"model.layers.55.mlp.gate",

|

| 108 |

+

"model.layers.56.mlp.gate",

|

| 109 |

+

"model.layers.57.mlp.gate",

|

| 110 |

+

"model.layers.58.mlp.gate",

|

| 111 |

+

"model.layers.59.mlp.gate",

|

| 112 |

+

"model.layers.6.mlp.gate",

|

| 113 |

+

"model.layers.60.mlp.gate",

|

| 114 |

+

"model.layers.61.mlp.gate",

|

| 115 |

+

"model.layers.62.mlp.gate",

|

| 116 |

+

"model.layers.63.mlp.gate",

|

| 117 |

+

"model.layers.64.mlp.gate",

|

| 118 |

+

"model.layers.65.mlp.gate",

|

| 119 |

+

"model.layers.66.mlp.gate",

|

| 120 |

+

"model.layers.67.mlp.gate",

|

| 121 |

+

"model.layers.68.mlp.gate",

|

| 122 |

+

"model.layers.69.mlp.gate",

|

| 123 |

+

"model.layers.7.mlp.gate",

|

| 124 |

+

"model.layers.70.mlp.gate",

|

| 125 |

+

"model.layers.71.mlp.gate",

|

| 126 |

+

"model.layers.72.mlp.gate",

|

| 127 |

+

"model.layers.73.mlp.gate",

|

| 128 |

+

"model.layers.74.mlp.gate",

|

| 129 |

+

"model.layers.75.mlp.gate",

|

| 130 |

+

"model.layers.76.mlp.gate",

|

| 131 |

+

"model.layers.77.mlp.gate",

|

| 132 |

+

"model.layers.78.mlp.gate",

|

| 133 |

+

"model.layers.79.mlp.gate",

|

| 134 |

+

"model.layers.8.mlp.gate",

|

| 135 |

+

"model.layers.80.mlp.gate",

|

| 136 |

+

"model.layers.81.mlp.gate",

|

| 137 |

+

"model.layers.82.mlp.gate",

|

| 138 |

+

"model.layers.83.mlp.gate",

|

| 139 |

+

"model.layers.84.mlp.gate",

|

| 140 |

+

"model.layers.85.mlp.gate",

|

| 141 |

+

"model.layers.86.mlp.gate",

|

| 142 |

+

"model.layers.87.mlp.gate",

|

| 143 |

+

"model.layers.88.mlp.gate",

|

| 144 |

+

"model.layers.89.mlp.gate",

|

| 145 |

+

"model.layers.9.mlp.gate",

|

| 146 |

+

"model.layers.90.mlp.gate",

|

| 147 |

+

"model.layers.91.mlp.gate",

|

| 148 |

+

"model.layers.92.mlp.gate",

|

| 149 |

+

"model.layers.93.mlp.gate",

|

| 150 |

+

"lm_head"

|

| 151 |

+

],

|

| 152 |

+

"quant_algo": "NVFP4",

|

| 153 |

+

"kv_cache_scheme": "FP8",

|

| 154 |

+

"producer": {

|

| 155 |

+

"name": "modelopt",

|

| 156 |

+

"version": "0.33.0"

|

| 157 |

+

},

|

| 158 |

+

"quant_library": "modelopt"

|

| 159 |

+

}

|

| 160 |

+

}

|
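The `ignore` list in the quantization config above keeps every MoE router gate (`mlp.gate`) and `lm_head` out of NVFP4 quantization. Its odd-looking order (layer 10 right after layer 1) is consistent with a plain string sort. The sketch below reproduces the list from `num_hidden_layers` to make that pattern explicit; how modelopt actually builds the list is not shown in this commit, so the function is purely illustrative.

```python
NUM_LAYERS = 94  # "num_hidden_layers" in the config above


def expected_ignore_list(num_layers: int = NUM_LAYERS) -> list:
    """Router gates for every layer, string-sorted, followed by lm_head."""
    gates = [f"model.layers.{i}.mlp.gate" for i in range(num_layers)]
    # A plain string sort places "10" before "2", matching the order in the file.
    return sorted(gates) + ["lm_head"]


ignored = expected_ignore_list()
print(len(ignored))   # number of modules left unquantized
print(ignored[:3])    # first entries
print(ignored[-1])    # last entry
```

A list like this can be compared against the `ignore` field after loading `config.json` to confirm that no router gate was quantized by mistake.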

generation_config.json

ADDED

|

@@ -0,0 +1,13 @@

|


| 1 |

+

{

|

| 2 |

+

"bos_token_id": 151643,

|

| 3 |

+

"do_sample": true,

|

| 4 |

+

"eos_token_id": [

|

| 5 |

+

151645,

|

| 6 |

+

151643

|

| 7 |

+

],

|

| 8 |

+

"pad_token_id": 151643,

|

| 9 |

+

"temperature": 0.7,

|

| 10 |

+

"top_k": 20,

|

| 11 |

+

"top_p": 0.8,

|

| 12 |

+

"transformers_version": "4.53.3"

|

| 13 |

+

}

|

hf_quant_config.json

ADDED

|

@@ -0,0 +1,108 @@

|


| 1 |

+

{

|

| 2 |

+

"producer": {

|

| 3 |

+

"name": "modelopt",

|

| 4 |

+

"version": "0.33.0"

|

| 5 |

+

},

|

| 6 |

+

"quantization": {

|

| 7 |

+

"quant_algo": "NVFP4",

|

| 8 |

+

"kv_cache_quant_algo": "FP8",

|

| 9 |

+

"group_size": 16,

|

| 10 |

+

"exclude_modules": [

|

| 11 |

+

"model.layers.0.mlp.gate",

|

| 12 |

+

"model.layers.1.mlp.gate",

|

| 13 |

+

"model.layers.10.mlp.gate",

|

| 14 |

+

"model.layers.11.mlp.gate",

|

| 15 |

+

"model.layers.12.mlp.gate",

|

| 16 |

+

"model.layers.13.mlp.gate",

|

| 17 |

+

"model.layers.14.mlp.gate",

|

| 18 |

+

"model.layers.15.mlp.gate",

|

| 19 |

+

"model.layers.16.mlp.gate",

|

| 20 |

+

"model.layers.17.mlp.gate",

|

| 21 |

+

"model.layers.18.mlp.gate",

|

| 22 |

+

"model.layers.19.mlp.gate",

|

| 23 |

+

"model.layers.2.mlp.gate",

|

| 24 |

+

"model.layers.20.mlp.gate",

|

| 25 |

+

"model.layers.21.mlp.gate",

|

| 26 |

+

"model.layers.22.mlp.gate",

|

| 27 |

+

"model.layers.23.mlp.gate",

|

| 28 |

+

"model.layers.24.mlp.gate",

|

| 29 |

+

"model.layers.25.mlp.gate",

|

| 30 |

+

"model.layers.26.mlp.gate",

|

| 31 |

+

"model.layers.27.mlp.gate",

|

| 32 |

+

"model.layers.28.mlp.gate",

|

| 33 |

+

"model.layers.29.mlp.gate",

|

| 34 |

+

"model.layers.3.mlp.gate",

|

| 35 |

+

"model.layers.30.mlp.gate",

|

| 36 |

+

"model.layers.31.mlp.gate",

|

| 37 |

+

"model.layers.32.mlp.gate",

|

| 38 |

+

"model.layers.33.mlp.gate",

|

| 39 |

+

"model.layers.34.mlp.gate",

|

| 40 |

+

"model.layers.35.mlp.gate",

|

| 41 |

+

"model.layers.36.mlp.gate",

|

| 42 |

+

"model.layers.37.mlp.gate",

|

| 43 |

+

"model.layers.38.mlp.gate",

|

| 44 |

+

"model.layers.39.mlp.gate",

|

| 45 |

+

"model.layers.4.mlp.gate",

|

| 46 |

+

"model.layers.40.mlp.gate",

|

| 47 |

+

"model.layers.41.mlp.gate",

|

| 48 |

+

"model.layers.42.mlp.gate",

|

| 49 |

+

"model.layers.43.mlp.gate",

|

| 50 |

+

"model.layers.44.mlp.gate",

|

| 51 |

+

"model.layers.45.mlp.gate",

|

| 52 |

+

"model.layers.46.mlp.gate",

|

| 53 |

+

"model.layers.47.mlp.gate",

|

| 54 |

+

"model.layers.48.mlp.gate",

|

| 55 |

+

"model.layers.49.mlp.gate",

|

| 56 |

+

"model.layers.5.mlp.gate",

|

| 57 |

+

"model.layers.50.mlp.gate",

|

| 58 |

+

"model.layers.51.mlp.gate",

|

| 59 |

+

"model.layers.52.mlp.gate",

|

| 60 |

+

"model.layers.53.mlp.gate",

|

| 61 |

+

"model.layers.54.mlp.gate",

|

| 62 |

+

"model.layers.55.mlp.gate",

|

| 63 |

+

"model.layers.56.mlp.gate",

|

| 64 |

+

"model.layers.57.mlp.gate",

|

| 65 |

+

"model.layers.58.mlp.gate",

|

| 66 |

+

"model.layers.59.mlp.gate",

|

| 67 |

+

"model.layers.6.mlp.gate",

|

| 68 |

+

"model.layers.60.mlp.gate",

|

| 69 |

+

"model.layers.61.mlp.gate",

|

| 70 |

+

"model.layers.62.mlp.gate",

|

| 71 |

+

"model.layers.63.mlp.gate",

|

| 72 |

+

"model.layers.64.mlp.gate",

|

| 73 |

+

"model.layers.65.mlp.gate",

|

| 74 |

+

"model.layers.66.mlp.gate",

|

| 75 |

+

"model.layers.67.mlp.gate",

|

| 76 |

+

"model.layers.68.mlp.gate",

|

| 77 |

+

"model.layers.69.mlp.gate",

|

| 78 |

+

"model.layers.7.mlp.gate",

|

| 79 |

+

"model.layers.70.mlp.gate",

|

| 80 |

+

"model.layers.71.mlp.gate",

|

| 81 |

+

"model.layers.72.mlp.gate",

|

| 82 |

+

"model.layers.73.mlp.gate",

|

| 83 |

+

"model.layers.74.mlp.gate",

|

| 84 |

+

"model.layers.75.mlp.gate",

|

| 85 |

+

"model.layers.76.mlp.gate",

|

| 86 |

+

"model.layers.77.mlp.gate",

|

| 87 |

+

"model.layers.78.mlp.gate",

|

| 88 |

+

"model.layers.79.mlp.gate",

|

| 89 |

+

"model.layers.8.mlp.gate",

|

| 90 |

+

"model.layers.80.mlp.gate",

|

| 91 |

+

"model.layers.81.mlp.gate",

|

| 92 |

+

"model.layers.82.mlp.gate",

|

| 93 |

+

"model.layers.83.mlp.gate",

|

| 94 |

+

"model.layers.84.mlp.gate",

|

| 95 |

+

"model.layers.85.mlp.gate",

|

| 96 |

+

"model.layers.86.mlp.gate",

|

| 97 |

+

"model.layers.87.mlp.gate",

|

| 98 |

+

"model.layers.88.mlp.gate",

|

| 99 |

+

"model.layers.89.mlp.gate",

|

| 100 |

+

"model.layers.9.mlp.gate",

|

| 101 |

+

"model.layers.90.mlp.gate",

|

| 102 |

+

"model.layers.91.mlp.gate",

|

| 103 |

+

"model.layers.92.mlp.gate",

|

| 104 |

+

"model.layers.93.mlp.gate",

|

| 105 |

+

"lm_head"

|

| 106 |

+

]

|

| 107 |

+

}

|

| 108 |

+

}

|
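
The `exclude_modules` list above has a regular shape: one `mlp.gate` entry per layer for layers 0 through 93, listed in plain string order, followed by `lm_head`. The sketch below rebuilds that list and shows one way it might be consulted. The helper names are hypothetical, exact-name matching is an assumption (a real loader may match by prefix or pattern), and the bits-per-weight estimate assumes one 8-bit scale per group of 16 four-bit values, which this diff does not state.

```python
NUM_LAYERS = 94  # layers 0..93 appear in the exclude_modules list above
GROUP_SIZE = 16  # "group_size" from hf_quant_config.json


def build_exclude_modules(num_layers=NUM_LAYERS):
    """Rebuild the exclusion list in the same order as it appears in the diff."""
    gates = [f"model.layers.{i}.mlp.gate" for i in range(num_layers)]
    # The file uses plain string order ("1" < "10" < "2"), with lm_head last.
    return sorted(gates) + ["lm_head"]


def is_quantized(module_name, exclude_modules):
    """Hypothetical helper: a module is quantized unless it is listed by exact name."""
    return module_name not in exclude_modules


def bits_per_weight(group_size=GROUP_SIZE, value_bits=4, scale_bits=8):
    """Rough storage cost per weight, assuming one scale per group (an assumption)."""
    return value_bits + scale_bits / group_size


exclude = build_exclude_modules()
print(len(exclude))   # 95 entries: 94 router gates plus lm_head
print(exclude[:3])    # layers 0, 1, 10 in string order
print(bits_per_weight())
```

Under these assumptions the router gates and the output head stay in their original precision, while every other module is treated as quantized.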

merges.txt

ADDED

|

The diff for this file is too large to render.

|

model-00001-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:58415b79ad2d04002eb9fc01efa99f753ce9854dcaff5fd52affecd61d0e09d2

|

| 3 |

+

size 4999619880

|

model-00002-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7e489326990f87428bcc1b01b44f29affc1474fcc16533ac3ea393b4f8621f56

|

| 3 |

+

size 4999554200

|

model-00003-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2a9b29eabee616f10bba50176cf22f9a51cd4259af75e85d9c600cf351144401

|

| 3 |

+

size 5000457620

|

model-00004-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0d03825b8d2ac6fdd30eeeef3ffa443d9c5159d2af9d720dbe8dc4a0998b62a3

|

| 3 |

+

size 4999952884

|

model-00005-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4d7cc013b6293abb5df883be3ef3aa0a7eb9773e60cad8599c955603a8b33280

|

| 3 |

+

size 5000463428

|

model-00006-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4f749f2c710c926f3d1aee2fd49042e1421741cae3387487128e5bae8761f780

|

| 3 |

+

size 4999952932

|

model-00007-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a351453339ed1dd388f7e7ad209284966a90a3674332df87a69e74f101d529c2

|

| 3 |

+

size 4999559752

|

model-00008-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:a728d9c35f4a2f99134e9daa01f52c276881226485226d68772e110cda44d19f

|

| 3 |

+

size 5000463148

|

model-00009-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:d0e337960b02158d3557b3b7e3e388a1390726b24d3cbcab4d6cd20e5e35c510

|

| 3 |

+

size 4999953212

|

model-00010-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:b600cd96f5b676778640cdac142f8f86ef96c02d87232325d8104c310b840056

|

| 3 |

+

size 5000463196

|

model-00011-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5b293e9ad349474d0c06b1fb25e5ac37cbd0a9228b5c8c57618c5a8cead7d4c1

|

| 3 |

+

size 4999953172

|

model-00012-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:7fcb5fb074d230cd420dfdf6a93b34430f084a316534ac78acd9a5c88e689379

|

| 3 |

+

size 5000463412

|

model-00013-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4b278c51995da7b379ca8aa4902d1e1c6d7a158c276ce94f26fbdb2466a45aab

|

| 3 |

+

size 4999952940

|

model-00014-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:180ff0c3f490a8b06f37bf6464d68614e230d044271365248bd7ac2da7cea195

|

| 3 |

+

size 4999559760

|

model-00015-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:223b0254df13a7988e0632464f1a3c7054200239fd235ab48c8b6946c8da0218

|

| 3 |

+

size 5000463132

|

model-00016-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6203cabb08cf6a7175ee5d6ed53776ec3a54d6c43b35f0443795cd44a7f24b36

|

| 3 |

+

size 4999953212

|

model-00017-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:c78d1cd6933a9ca1fd859ab569a9de016af43da9f2b169493f65923d5964a276

|

| 3 |

+

size 5000463188

|

model-00018-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ac6bb69f2779f332b7fe73cbfee964abcff8e093a20d84c18f906430389ca07f

|

| 3 |

+

size 4999953172

|

model-00019-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:bb9bdfff69a9021370cc269930fbb82cdbac29341aa476a1867c2585e0b6b9e8

|

| 3 |

+

size 5000463412

|

model-00020-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:0611984639366e94d1abf86dac21c8799aa2373e36742d1e711c2e9ac1220ccf

|

| 3 |

+

size 4999952956

|

model-00021-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:87f29283cb37e33c5e68ee259232ecc02cb2cfea97004273b05358b0ee34ff27

|

| 3 |

+

size 4999559760

|

model-00022-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:fd7f66b8795cd10199b98fc0c8870e7c2bec9ccfb4b01bbddc1364e72f778a4c

|

| 3 |

+

size 5000463132

|

model-00023-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:ec09a87a3a2f9307a49e96df398b51baaf4faeab9a3338813bf6de48f2654211

|

| 3 |

+

size 4999953220

|

model-00024-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:491acfcde7df897c66b36b320f99ef22bc962e7ea60a0f392c34f280b02a9f88

|

| 3 |

+

size 5000463172

|

model-00025-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4005cb0c18409d1442869a025903f5b03f15ea4bc57e19ced13fe0ffcfa968b6

|

| 3 |

+

size 4999953180

|

model-00026-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:5907dc01c23fe3cfea521d7b0bb43d328a81f81b0509321abdc8b4b4b0bf9089

|

| 3 |

+

size 5000463404

|

model-00027-of-00027.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:e674a683ddc650d6d678ba3cc354dab42807e394a194ade11835698058795cbe

|

| 3 |

+

size 4116499340

|
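
Each `.safetensors` entry above is a three-line Git LFS pointer (`version`, `oid`, `size`), not the weights themselves. As a minimal sketch, the pointer can be parsed and used to check a downloaded shard. The function names are hypothetical and the local file path is whatever the caller supplies; only the pointer text is taken from the diff.

```python
import hashlib
import os


def parse_lfs_pointer(text):
    """Parse a Git LFS pointer into its version, hash algorithm, digest and size."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algorithm": algorithm,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path, info, chunk_size=1 << 20):
    """Check a local file against the pointer's size and SHA-256 digest."""
    if os.path.getsize(path) != info["size"]:
        return False
    hasher = hashlib.sha256()
    with open(path, "rb") as handle:
        # Stream in chunks so multi-gigabyte shards never sit fully in memory.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest() == info["digest"]


# Pointer for model-00027-of-00027.safetensors, copied from the diff above.
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:e674a683ddc650d6d678ba3cc354dab42807e394a194ade11835698058795cbe
size 4116499340
"""

info = parse_lfs_pointer(POINTER)
print(info["algorithm"], info["size"])  # sha256 4116499340
```

Checking the size first is a cheap way to reject a truncated download before hashing several gigabytes.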

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:8017eca6e9b67270d89d0495f9d91f4fa7d02a7ab9907cac6a3111c4f7f42d2c

|

| 3 |

+

size 13920213

|

special_tokens_map.json

ADDED

|

@@ -0,0 +1,19 @@

|

| 1 |

+

{

|

| 2 |

+

"additional_special_tokens": [

|

| 3 |

+

"<|im_start|>",

|

| 4 |

+

"<|im_end|>",

|

| 5 |

+

"<|object_ref_start|>",

|

| 6 |

+

"<|object_ref_end|>",

|

| 7 |

+

"<|box_start|>",

|

| 8 |

+

"<|box_end|>",

|

| 9 |

+

"<|quad_start|>",

|

| 10 |

+

"<|quad_end|>",

|

| 11 |

+

"<|vision_start|>",

|

| 12 |

+

"<|vision_end|>",

|

| 13 |

+

"<|vision_pad|>",

|

| 14 |

+

"<|image_pad|>",

|

| 15 |

+

"<|video_pad|>"

|

| 16 |

+

],

|

| 17 |

+

"eos_token": "<|endoftext|>",

|

| 18 |

+

"pad_token": "<|endoftext|>"

|

| 19 |

+

}

|

tokenizer.json

ADDED

|

@@ -0,0 +1,3 @@

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:aeb13307a71acd8fe81861d94ad54ab689df773318809eed3cbe794b4492dae4

|

| 3 |

+

size 11422654

|

tokenizer_config.json

ADDED

|

@@ -0,0 +1,239 @@

|
