Upload folder using huggingface_hub


- .cache/huggingface/.gitignore +1 -0

- .cache/huggingface/download/.gitattributes.lock +0 -0

- .cache/huggingface/download/.gitattributes.metadata +3 -0

- .cache/huggingface/download/NOTICE.lock +0 -0

- .cache/huggingface/download/NOTICE.metadata +3 -0

- .cache/huggingface/download/README.md.lock +0 -0

- .cache/huggingface/download/README.md.metadata +3 -0

- .cache/huggingface/download/added_tokens.json.lock +0 -0

- .cache/huggingface/download/added_tokens.json.metadata +3 -0

- .cache/huggingface/download/config.json.lock +0 -0

- .cache/huggingface/download/config.json.metadata +3 -0

- .cache/huggingface/download/configuration_aimv2.py.lock +0 -0

- .cache/huggingface/download/configuration_aimv2.py.metadata +3 -0

- .cache/huggingface/download/configuration_ovis.py.lock +0 -0

- .cache/huggingface/download/configuration_ovis.py.metadata +3 -0

- .cache/huggingface/download/generation_config.json.lock +0 -0

- .cache/huggingface/download/generation_config.json.metadata +3 -0

- .cache/huggingface/download/merges.txt.lock +0 -0

- .cache/huggingface/download/merges.txt.metadata +3 -0

- .cache/huggingface/download/model.safetensors.lock +0 -0

- .cache/huggingface/download/model.safetensors.metadata +3 -0

- .cache/huggingface/download/modeling_aimv2.py.lock +0 -0

- .cache/huggingface/download/modeling_aimv2.py.metadata +3 -0

- .cache/huggingface/download/modeling_ovis.py.lock +0 -0

- .cache/huggingface/download/modeling_ovis.py.metadata +3 -0

- .cache/huggingface/download/preprocessor_config.json.lock +0 -0

- .cache/huggingface/download/preprocessor_config.json.metadata +3 -0

- .cache/huggingface/download/special_tokens_map.json.lock +0 -0

- .cache/huggingface/download/special_tokens_map.json.metadata +3 -0

- .cache/huggingface/download/tokenizer.json.lock +0 -0

- .cache/huggingface/download/tokenizer.json.metadata +3 -0

- .cache/huggingface/download/tokenizer_config.json.lock +0 -0

- .cache/huggingface/download/tokenizer_config.json.metadata +3 -0

- .cache/huggingface/download/vocab.json.lock +0 -0

- .cache/huggingface/download/vocab.json.metadata +3 -0

- .gitattributes +1 -0

- NOTICE +14 -0

- README.md +258 -0

- added_tokens.json +32 -0

- config.json +256 -0

- configuration_aimv2.py +63 -0

- configuration_ovis.py +204 -0

- generation_config.json +15 -0

- merges.txt +0 -0

- model.safetensors +3 -0

- modeling_aimv2.py +198 -0

- modeling_ovis.py +590 -0

- preprocessor_config.json +27 -0

- special_tokens_map.json +39 -0

- tokenizer.json +3 -0
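The file list above shows that the upload also swept in the local download cache under `.cache/huggingface/`. The following is a minimal sketch, not the uploader's actual logic, of how such paths could be separated out with glob-style patterns of the kind that the `ignore_patterns` argument of `upload_folder` in `huggingface_hub` accepts. The helper `split_upload` and the pattern `.cache/*` are hypothetical, and matching is assumed to behave like Python's `fnmatch`.

```python
from fnmatch import fnmatch


def split_upload(paths, ignore_patterns):
    """Partition paths into (kept, ignored) using glob-style patterns."""
    kept, ignored = [], []
    for path in paths:
        # fnmatch's "*" also matches "/" so ".cache/*" covers nested files.
        if any(fnmatch(path, pattern) for pattern in ignore_patterns):
            ignored.append(path)
        else:
            kept.append(path)
    return kept, ignored


# A few paths taken from the changed-file list of this commit.
changed = [
    ".cache/huggingface/.gitignore",
    ".cache/huggingface/download/config.json.lock",
    ".cache/huggingface/download/config.json.metadata",
    "config.json",
    "model.safetensors",
]

kept, ignored = split_upload(changed, [".cache/*"])
print("kept:", kept)
print("ignored:", ignored)
```

With a pattern like this, only the model files themselves would remain in the upload set.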

.cache/huggingface/.gitignore

ADDED

@@ -0,0 +1 @@
+*

.cache/huggingface/download/.gitattributes.lock

ADDED

File without changes

.cache/huggingface/download/.gitattributes.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+52373fe24473b1aa44333d318f578ae6bf04b49b
+1744020437.949933

.cache/huggingface/download/NOTICE.lock

ADDED

File without changes

.cache/huggingface/download/NOTICE.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+0e3814d458c5165927f99dcd492d361b92aeaa07
+1744020438.074461

.cache/huggingface/download/README.md.lock

ADDED

File without changes

.cache/huggingface/download/README.md.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+6ca32930d9aa4f554c16ffefb5a3826d271f7bd9
+1744020438.045738

.cache/huggingface/download/added_tokens.json.lock

ADDED

File without changes

.cache/huggingface/download/added_tokens.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+482ced4679301bf287ebb310bdd1790eb4514232
+1744020438.1181376

.cache/huggingface/download/config.json.lock

ADDED

File without changes

.cache/huggingface/download/config.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+605d0602ab0ca6bf0cd8ee4ba94f18b042a5f093
+1744020437.9545557

.cache/huggingface/download/configuration_aimv2.py.lock

ADDED

File without changes

.cache/huggingface/download/configuration_aimv2.py.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+06b2c6d896fbe2be7ca5a2ff32b3057a7d2ec946
+1744020437.9542494

.cache/huggingface/download/configuration_ovis.py.lock

ADDED

File without changes

.cache/huggingface/download/configuration_ovis.py.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+b094185a4218ae2bccb58ccf481c894164d8479f
+1744020438.0111387

.cache/huggingface/download/generation_config.json.lock

ADDED

File without changes

.cache/huggingface/download/generation_config.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+62dec5ceb087a8f3702c4a301495a7215d072ce7
+1744020438.2107105

.cache/huggingface/download/merges.txt.lock

ADDED

File without changes

.cache/huggingface/download/merges.txt.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+31349551d90c7606f325fe0f11bbb8bd5fa0d7c7
+1744020439.931449

.cache/huggingface/download/model.safetensors.lock

ADDED

File without changes

.cache/huggingface/download/model.safetensors.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+8a25670ed919d7cc9fad1fa4a359b3da5a0b744eab448a498d6c23cdc8b9edc2
+1744020927.641807

.cache/huggingface/download/modeling_aimv2.py.lock

ADDED

File without changes

.cache/huggingface/download/modeling_aimv2.py.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+773b8cdad42fef5692dfcf0e837f18d150613d91
+1744020439.1234415

.cache/huggingface/download/modeling_ovis.py.lock

ADDED

File without changes

.cache/huggingface/download/modeling_ovis.py.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+8288613495aaae374749d6e387d8c1a1437997f9
+1744020439.0775173

.cache/huggingface/download/preprocessor_config.json.lock

ADDED

File without changes

.cache/huggingface/download/preprocessor_config.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+91bd2284ac30e92dc70023899547f700e542a911
+1744020439.146727

.cache/huggingface/download/special_tokens_map.json.lock

ADDED

File without changes

.cache/huggingface/download/special_tokens_map.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+ac23c0aaa2434523c494330aeb79c58395378103
+1744020439.3326318

.cache/huggingface/download/tokenizer.json.lock

ADDED

File without changes

.cache/huggingface/download/tokenizer.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+9c5ae00e602b8860cbd784ba82a8aa14e8feecec692e7076590d014d7b7fdafa
+1744020444.7309973

.cache/huggingface/download/tokenizer_config.json.lock

ADDED

File without changes

.cache/huggingface/download/tokenizer_config.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+8adf747ccaf85ff9587338cee6ed6be027b98210
+1744020439.3145587

.cache/huggingface/download/vocab.json.lock

ADDED

File without changes

.cache/huggingface/download/vocab.json.metadata

ADDED

@@ -0,0 +1,3 @@
+b5c50bc2836fd46a6cd0feb39269eeb5968fac1d
+4783fe10ac3adce15ac8f358ef5462739852c569
+1744020443.0863569

|
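Every `.metadata` file added above has the same three-line shape: a 40-character hash that is identical across all files, a per-file hash (40 or 64 hex characters), and a Unix timestamp. The sketch below parses that shape. The field names `commit_hash`, `etag`, and `timestamp` are an interpretation of the three lines, not something the diff itself states, and `parse_metadata` is a hypothetical helper rather than a `huggingface_hub` function.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class DownloadMetadata:
    commit_hash: str  # assumed meaning of line 1 (same value in every file)
    etag: str         # assumed meaning of line 2 (differs per file)
    timestamp: float  # line 3, seconds since the Unix epoch


def parse_metadata(text: str) -> DownloadMetadata:
    """Parse the three-line layout seen in the .metadata files of this diff."""
    lines = [line.strip() for line in text.strip().splitlines()]
    if len(lines) != 3:
        raise ValueError(f"expected 3 lines, got {len(lines)}")
    commit_hash, etag, timestamp = lines
    return DownloadMetadata(commit_hash, etag, float(timestamp))


# Contents of vocab.json.metadata as added in this commit.
sample = (
    "b5c50bc2836fd46a6cd0feb39269eeb5968fac1d\n"
    "4783fe10ac3adce15ac8f358ef5462739852c569\n"
    "1744020443.0863569\n"
)

meta = parse_metadata(sample)
downloaded_at = datetime.fromtimestamp(meta.timestamp, tz=timezone.utc)
print(meta.commit_hash[:7], len(meta.etag), downloaded_at.isoformat())
```

Under this reading, the timestamps place all downloads within a few seconds of each other, except `model.safetensors`, whose timestamp is several minutes later.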

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text

|
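The hunk above adds one rule so that `tokenizer.json` is stored through Git LFS. As a rough illustration of what that rule means, the sketch below checks whether a path would be matched by a `filter=lfs` line. It is a simplification: `lfs_tracked` is a hypothetical helper, patterns are matched with Python's `fnmatch` rather than Git's own pattern rules, and later lines that unset an attribute are ignored.

```python
from fnmatch import fnmatch


def lfs_tracked(path: str, gitattributes_text: str) -> bool:
    """Return True if any 'filter=lfs' rule matches path (simplified)."""
    for line in gitattributes_text.splitlines():
        parts = line.split()
        # Skip blank lines, comments, and lines without attributes.
        if len(parts) < 2 or parts[0].startswith("#"):
            continue
        pattern, attrs = parts[0], parts[1:]
        if "filter=lfs" in attrs and fnmatch(path, pattern):
            return True
    return False


# The four rules visible in the hunk (three context lines plus the addition).
rules = """\
*.zip filter=lfs diff=lfs merge=lfs -text
*.zst filter=lfs diff=lfs merge=lfs -text
*tfevents* filter=lfs diff=lfs merge=lfs -text
tokenizer.json filter=lfs diff=lfs merge=lfs -text
"""

for name in ("tokenizer.json", "config.json", "archive.zip"):
    print(name, lfs_tracked(name, rules))
```

This is consistent with the file list, where `tokenizer.json` shows only `+3 -0`: a three-line LFS pointer rather than the full tokenizer contents.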

NOTICE

ADDED

@@ -0,0 +1,14 @@
+Copyright (C) 2025 AIDC-AI
+Licensed under the Apache License, Version 2.0 (the "License");
+you may not use this file except in compliance with the License.
+You may obtain a copy of the License at
+http://www.apache.org/licenses/LICENSE-2.0
+Unless required by applicable law or agreed to in writing, software
+distributed under the License is distributed on an "AS IS" BASIS,
+WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+See the License for the specific language governing permissions and
+limitations under the License.
+
+This model was trained based on the following models:
+1. Qwen2.5 (https://huggingface.co/Qwen/Qwen2.5-0.5B-Instruct), license:(https://huggingface.co/Qwen/Qwen2.5-0.5B-Instruct/blob/main/LICENSE, SPDX-License-identifier: Apache-2.0).
+2. AimV2 (https://huggingface.co/apple/aimv2-large-patch14-448), license: Apple-Sample-Code-License (https://developer.apple.com/support/downloads/terms/apple-sample-code/Apple-Sample-Code-License.pdf)

README.md

ADDED

|

@@ -0,0 +1,258 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

datasets:

|

| 4 |

+

- AIDC-AI/Ovis-dataset

|

| 5 |

+

library_name: transformers

|

| 6 |

+

tags:

|

| 7 |

+

- MLLM

|

| 8 |

+

pipeline_tag: image-text-to-text

|

| 9 |

+

language:

|

| 10 |

+

- en

|

| 11 |

+

- zh

|

| 12 |

+

---

|

| 13 |

+

|

| 14 |

+

# Ovis2-1B

|

| 15 |

+

<div align="center">

|

| 16 |

+

<img src=https://cdn-uploads.huggingface.co/production/uploads/637aebed7ce76c3b834cea37/3IK823BZ8w-mz_QfeYkDn.png width="30%"/>

|

| 17 |

+

</div>

|

| 18 |

+

|

| 19 |

+

## Introduction

|

| 20 |

+

[GitHub](https://github.com/AIDC-AI/Ovis) | [Paper](https://arxiv.org/abs/2405.20797)

|

| 21 |

+

|

| 22 |

+

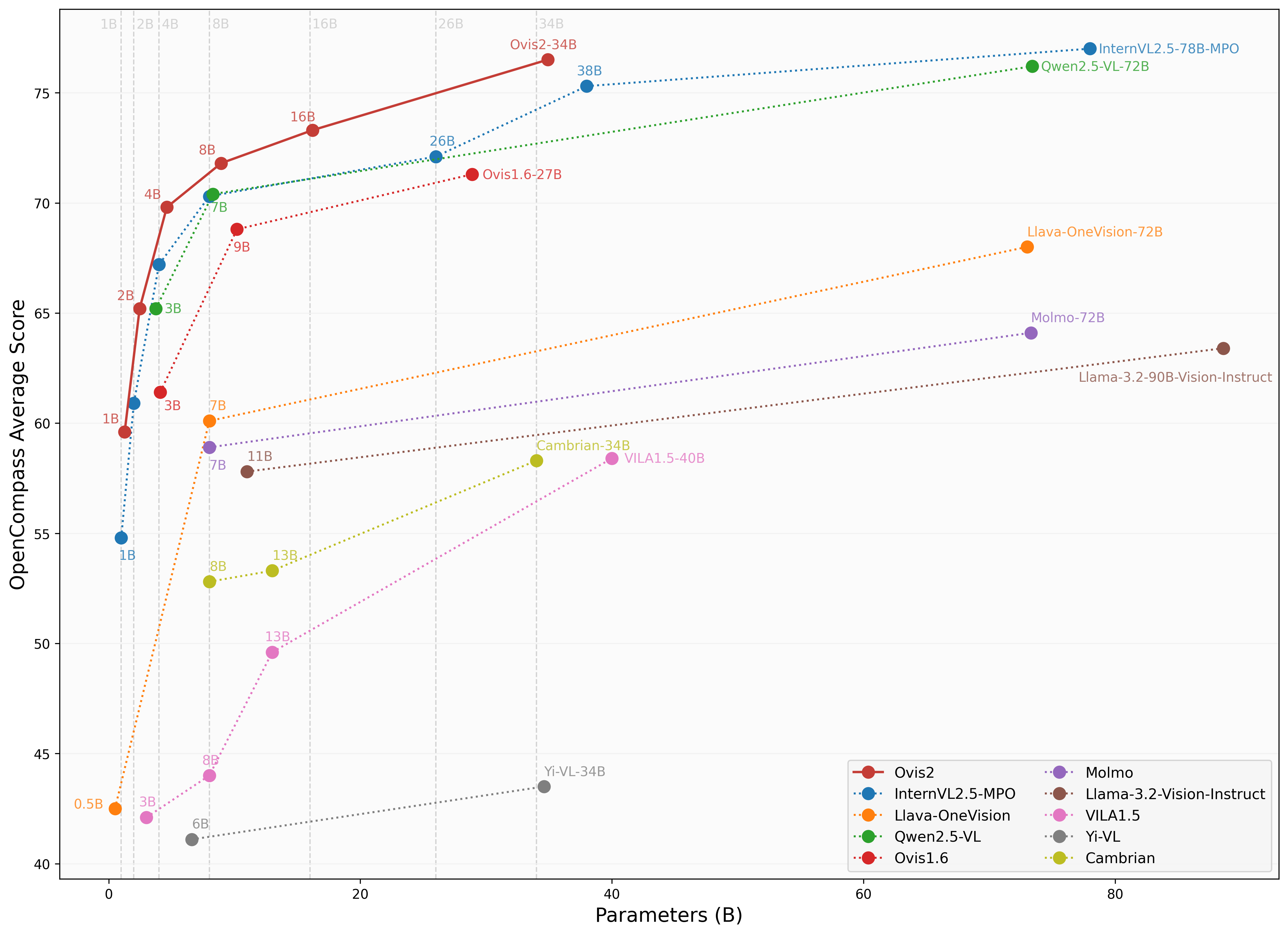

We are pleased to announce the release of **Ovis2**, our latest advancement in multi-modal large language models (MLLMs). Ovis2 inherits the innovative architectural design of the Ovis series, aimed at structurally aligning visual and textual embeddings. As the successor to Ovis1.6, Ovis2 incorporates significant improvements in both dataset curation and training methodologies.

|

| 23 |

+

|

| 24 |

+

**Key Features**:

|

| 25 |

+

|

| 26 |

+

- **Small Model Performance**: Optimized training strategies enable small-scale models to achieve higher capability density, demonstrating cross-tier leading advantages.

|

| 27 |

+

|

| 28 |

+

- **Enhanced Reasoning Capabilities**: Significantly strengthens Chain-of-Thought (CoT) reasoning abilities through the combination of instruction tuning and preference learning.

|

| 29 |

+

|

| 30 |

+

- **Video and Multi-Image Processing**: Video and multi-image data are incorporated into training to enhance the ability to handle complex visual information across frames and images.

|

| 31 |

+

|

| 32 |

+

- **Multilingual Support and OCR**: Enhances multilingual OCR beyond English and Chinese and improves structured data extraction from complex visual elements like tables and charts.

|

| 33 |

+

|

| 34 |

+

<div align="center">

|

| 35 |

+

<img src="https://cdn-uploads.huggingface.co/production/uploads/637aebed7ce76c3b834cea37/XB-vgzDL6FshrSNGyZvzc.png" width="100%" />

|

| 36 |

+

</div>

|

| 37 |

+

|

| 38 |

+

## Model Zoo

|

| 39 |

+

|

| 40 |

+

| Ovis MLLMs | ViT | LLM | Model Weights | Demo |

|

| 41 |

+

|:-----------|:-----------------------:|:---------------------:|:-------------------------------------------------------:|:--------------------------------------------------------:|

|

| 42 |

+

| Ovis2-1B | aimv2-large-patch14-448 | Qwen2.5-0.5B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-1B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-1B) |

|

| 43 |

+

| Ovis2-2B | aimv2-large-patch14-448 | Qwen2.5-1.5B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-2B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-2B) |

|

| 44 |

+

| Ovis2-4B | aimv2-huge-patch14-448 | Qwen2.5-3B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-4B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-4B) |

|

| 45 |

+

| Ovis2-8B | aimv2-huge-patch14-448 | Qwen2.5-7B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-8B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-8B) |

|

| 46 |

+

| Ovis2-16B | aimv2-huge-patch14-448 | Qwen2.5-14B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-16B) | [Space](https://huggingface.co/spaces/AIDC-AI/Ovis2-16B) |

|

| 47 |

+

| Ovis2-34B | aimv2-1B-patch14-448 | Qwen2.5-32B-Instruct | [Huggingface](https://huggingface.co/AIDC-AI/Ovis2-34B) | - |

|

| 48 |

+

|

+## Performance
+We use [VLMEvalKit](https://github.com/open-compass/VLMEvalKit), as employed in the OpenCompass [multimodal](https://rank.opencompass.org.cn/leaderboard-multimodal) and [reasoning](https://rank.opencompass.org.cn/leaderboard-multimodal-reasoning) leaderboard, to evaluate Ovis2.
+
+
+
+### Image Benchmark
+| Benchmark | Qwen2.5-VL-3B | SAIL-VL-2B | InternVL2.5-2B-MPO | Ovis1.6-3B | InternVL2.5-1B-MPO | Ovis2-1B | Ovis2-2B |
+|:-----------------------------|:---------------:|:------------:|:--------------------:|:------------:|:--------------------:|:----------:|:----------:|
+| MMBench-V1.1<sub>test</sub> | **77.1** | 73.6 | 70.7 | 74.1 | 65.8 | 68.4 | 76.9 |
+| MMStar | 56.5 | 56.5 | 54.9 | 52.0 | 49.5 | 52.1 | **56.7** |
+| MMMU<sub>val</sub> | **51.4** | 44.1 | 44.6 | 46.7 | 40.3 | 36.1 | 45.6 |
+| MathVista<sub>testmini</sub> | 60.1 | 62.8 | 53.4 | 58.9 | 47.7 | 59.4 | **64.1** |
+| HallusionBench | 48.7 | 45.9 | 40.7 | 43.8 | 34.8 | 45.2 | **50.2** |
+| AI2D | 81.4 | 77.4 | 75.1 | 77.8 | 68.5 | 76.4 | **82.7** |
+| OCRBench | 83.1 | 83.1 | 83.8 | 80.1 | 84.3 | **89.0** | 87.3 |
+| MMVet | 63.2 | 44.2 | **64.2** | 57.6 | 47.2 | 50.0 | 58.3 |
+| MMBench<sub>test</sub> | 78.6 | 77 | 72.8 | 76.6 | 67.9 | 70.2 | **78.9** |
+| MMT-Bench<sub>val</sub> | 60.8 | 57.1 | 54.4 | 59.2 | 50.8 | 55.5 | **61.7** |
+| RealWorldQA | 66.5 | 62 | 61.3 | **66.7** | 57 | 63.9 | 66.0 |
+| BLINK | **48.4** | 46.4 | 43.8 | 43.8 | 41 | 44.0 | 47.9 |
+| QBench | 74.4 | 72.8 | 69.8 | 75.8 | 63.3 | 71.3 | **76.2** |
+| ABench | 75.5 | 74.5 | 71.1 | 75.2 | 67.5 | 71.3 | **76.6** |
+| MTVQA | 24.9 | 20.2 | 22.6 | 21.1 | 21.7 | 23.7 | **25.6** |
+
+### Video Benchmark
+| Benchmark | Qwen2.5-VL-3B | InternVL2.5-2B | InternVL2.5-1B | Ovis2-1B | Ovis2-2B |
+| ------------------- |:-------------:|:--------------:|:--------------:|:---------:|:-------------:|
+| VideoMME(wo/w-subs) | **61.5/67.6** | 51.9 / 54.1 | 50.3 / 52.3 | 48.6/49.5 | 57.2/60.8 |
+| MVBench | 67.0 | **68.8** | 64.3 | 60.32 | 64.9 |
+| MLVU(M-Avg/G-Avg) | 68.2/- | 61.4/- | 57.3/- | 58.5/3.66 | **68.6**/3.86 |
+| MMBench-Video | **1.63** | 1.44 | 1.36 | 1.26 | 1.57 |
+| TempCompass | **64.4** | - | - | 51.43 | 62.64 |
+

+## Usage
+Below is a code snippet demonstrating how to run Ovis with various input types. For additional usage instructions, including inference wrapper and Gradio UI, please refer to [Ovis GitHub](https://github.com/AIDC-AI/Ovis?tab=readme-ov-file#inference).
+```bash
+pip install torch==2.4.0 transformers==4.46.2 numpy==1.25.0 pillow==10.3.0
+pip install flash-attn==2.7.0.post2 --no-build-isolation
+```
+```python
+import torch
+from PIL import Image
+from transformers import AutoModelForCausalLM
+
+# load model
+model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Ovis2-1B",
+                                             torch_dtype=torch.bfloat16,
+                                             multimodal_max_length=32768,
+                                             trust_remote_code=True).cuda()
+text_tokenizer = model.get_text_tokenizer()
+visual_tokenizer = model.get_visual_tokenizer()
+
+# single-image input
+image_path = '/data/images/example_1.jpg'
+images = [Image.open(image_path)]
+max_partition = 9
+text = 'Describe the image.'
+query = f'<image>\n{text}'
+
+## cot-style input
+# cot_suffix = "Provide a step-by-step solution to the problem, and conclude with 'the answer is' followed by the final solution."
+# image_path = '/data/images/example_1.jpg'
+# images = [Image.open(image_path)]
+# max_partition = 9
+# text = "What's the area of the shape?"
+# query = f'<image>\n{text}\n{cot_suffix}'
+
+## multiple-images input
+# image_paths = [
+#     '/data/images/example_1.jpg',
+#     '/data/images/example_2.jpg',
+#     '/data/images/example_3.jpg'
+# ]
+# images = [Image.open(image_path) for image_path in image_paths]
+# max_partition = 4
+# text = 'Describe each image.'
+# query = '\n'.join([f'Image {i+1}: <image>' for i in range(len(images))]) + '\n' + text
+
+## video input (require `pip install moviepy==1.0.3`)
+# from moviepy.editor import VideoFileClip
+# video_path = '/data/videos/example_1.mp4'
+# num_frames = 12
+# max_partition = 1
+# text = 'Describe the video.'
+# with VideoFileClip(video_path) as clip:
+#     total_frames = int(clip.fps * clip.duration)
+#     if total_frames <= num_frames:
+#         sampled_indices = range(total_frames)
+#     else:
+#         stride = total_frames / num_frames
+#         sampled_indices = [min(total_frames - 1, int((stride * i + stride * (i + 1)) / 2)) for i in range(num_frames)]
+#     frames = [clip.get_frame(index / clip.fps) for index in sampled_indices]
+#     frames = [Image.fromarray(frame, mode='RGB') for frame in frames]
+# images = frames
+# query = '\n'.join(['<image>'] * len(images)) + '\n' + text
+
+## text-only input
+# images = []
+# max_partition = None
+# text = 'Hello'
+# query = text
+
+# format conversation
+prompt, input_ids, pixel_values = model.preprocess_inputs(query, images, max_partition=max_partition)
+attention_mask = torch.ne(input_ids, text_tokenizer.pad_token_id)
+input_ids = input_ids.unsqueeze(0).to(device=model.device)
+attention_mask = attention_mask.unsqueeze(0).to(device=model.device)
+if pixel_values is not None:
+    pixel_values = pixel_values.to(dtype=visual_tokenizer.dtype, device=visual_tokenizer.device)
+pixel_values = [pixel_values]
+
+# generate output
+with torch.inference_mode():
+    gen_kwargs = dict(
+        max_new_tokens=1024,
+        do_sample=False,
+        top_p=None,
+        top_k=None,
+        temperature=None,
+        repetition_penalty=None,
+        eos_token_id=model.generation_config.eos_token_id,
+        pad_token_id=text_tokenizer.pad_token_id,
+        use_cache=True
+    )
+    output_ids = model.generate(input_ids, pixel_values=pixel_values, attention_mask=attention_mask, **gen_kwargs)[0]
+    output = text_tokenizer.decode(output_ids, skip_special_tokens=True)
+    print(f'Output:\n{output}')
+```
+

+<details>
+<summary>Batch Inference</summary>
+
+```python
+import torch
+from PIL import Image
+from transformers import AutoModelForCausalLM
+
+# load model
+model = AutoModelForCausalLM.from_pretrained("AIDC-AI/Ovis2-1B",
+                                             torch_dtype=torch.bfloat16,
+                                             multimodal_max_length=32768,
+                                             trust_remote_code=True).cuda()
+text_tokenizer = model.get_text_tokenizer()
+visual_tokenizer = model.get_visual_tokenizer()
+
+# preprocess inputs
+batch_inputs = [
+    ('/data/images/example_1.jpg', 'What colors dominate the image?'),
+    ('/data/images/example_2.jpg', 'What objects are depicted in this image?'),
+    ('/data/images/example_3.jpg', 'Is there any text in the image?')
+]
+
+batch_input_ids = []
+batch_attention_mask = []
+batch_pixel_values = []
+
+for image_path, text in batch_inputs:
+    image = Image.open(image_path)
+    query = f'<image>\n{text}'
+    prompt, input_ids, pixel_values = model.preprocess_inputs(query, [image], max_partition=9)
+    attention_mask = torch.ne(input_ids, text_tokenizer.pad_token_id)
+    batch_input_ids.append(input_ids.to(device=model.device))
+    batch_attention_mask.append(attention_mask.to(device=model.device))
+    batch_pixel_values.append(pixel_values.to(dtype=visual_tokenizer.dtype, device=visual_tokenizer.device))
+
+batch_input_ids = torch.nn.utils.rnn.pad_sequence([i.flip(dims=[0]) for i in batch_input_ids], batch_first=True,
+                                                  padding_value=0.0).flip(dims=[1])
+batch_input_ids = batch_input_ids[:, -model.config.multimodal_max_length:]
+batch_attention_mask = torch.nn.utils.rnn.pad_sequence([i.flip(dims=[0]) for i in batch_attention_mask],
+                                                       batch_first=True, padding_value=False).flip(dims=[1])
+batch_attention_mask = batch_attention_mask[:, -model.config.multimodal_max_length:]
+
+# generate outputs
+with torch.inference_mode():
+    gen_kwargs = dict(
+        max_new_tokens=1024,
+        do_sample=False,
+        top_p=None,
+        top_k=None,
+        temperature=None,
+        repetition_penalty=None,
+        eos_token_id=model.generation_config.eos_token_id,
+        pad_token_id=text_tokenizer.pad_token_id,
+        use_cache=True
+    )
+    output_ids = model.generate(batch_input_ids, pixel_values=batch_pixel_values, attention_mask=batch_attention_mask,
+                                **gen_kwargs)
+
+for i in range(len(batch_inputs)):
+    output = text_tokenizer.decode(output_ids[i], skip_special_tokens=True)
+    print(f'Output {i + 1}:\n{output}\n')
+```
+</details>
+

+## Citation
+If you find Ovis useful, please consider citing the paper
+```
+@article{lu2024ovis,
+  title={Ovis: Structural Embedding Alignment for Multimodal Large Language Model},
+  author={Shiyin Lu and Yang Li and Qing-Guo Chen and Zhao Xu and Weihua Luo and Kaifu Zhang and Han-Jia Ye},
+  year={2024},
+  journal={arXiv:2405.20797}
+}
+```
+
+## License
+This project is licensed under the [Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0.txt) (SPDX-License-Identifier: Apache-2.0).
+
+## Disclaimer
+We used compliance-checking algorithms during the training process, to ensure the compliance of the trained model to the best of our ability. Due to the complexity of the data and the diversity of language model usage scenarios, we cannot guarantee that the model is completely free of copyright issues or improper content. If you believe anything infringes on your rights or generates improper content, please contact us, and we will promptly address the matter.

|
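The commented-out video branch of the README's usage snippet picks `num_frames` frames by taking the midpoint of each of `num_frames` equal strides, then builds a query with one `<image>` placeholder per frame. The sketch below isolates just that index arithmetic and query construction so it can be run without a model or a video file. The function names `sample_frame_indices` and `build_video_query` are hypothetical wrappers around the README's expressions.

```python
def sample_frame_indices(total_frames: int, num_frames: int) -> list:
    """Midpoint-of-stride sampling, as in the README's video example."""
    if total_frames <= num_frames:
        # Short clip: use every frame.
        return list(range(total_frames))
    stride = total_frames / num_frames
    return [
        min(total_frames - 1, int((stride * i + stride * (i + 1)) / 2))
        for i in range(num_frames)
    ]


def build_video_query(num_images: int, text: str) -> str:
    """One '<image>' placeholder per frame, followed by the prompt text."""
    return '\n'.join(['<image>'] * num_images) + '\n' + text


# A 120-frame clip sampled down to 12 frames (stride of 10 frames).
indices = sample_frame_indices(120, 12)
query = build_video_query(len(indices), 'Describe the video.')
print(indices)
print(query.count('<image>'))
```

Sampling at stride midpoints rather than stride starts avoids always including frame 0 and keeps the samples centred within each segment of the clip.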

added_tokens.json

ADDED

@@ -0,0 +1,32 @@
+{
+  "</tool_call>": 151658,
+  "<tool_call>": 151657,
+  "<|box_end|>": 151649,
+  "<|box_start|>": 151648,
+  "<|endoftext|>": 151643,
+  "<|file_sep|>": 151664,
+  "<|fim_middle|>": 151660,
+  "<|fim_pad|>": 151662,
+  "<|fim_prefix|>": 151659,
+  "<|fim_suffix|>": 151661,
+  "<|im_end|>": 151645,
+  "<|im_start|>": 151644,
+  "<|image_pad|>": 151655,
+  "<|object_ref_end|>": 151647,
+  "<|object_ref_start|>": 151646,
+  "<|quad_end|>": 151651,
+  "<|quad_start|>": 151650,
+  "<|repo_name|>": 151663,
+  "<|video_pad|>": 151656,
+  "<|vision_end|>": 151653,
+  "<|vision_pad|>": 151654,
+  "<|vision_start|>": 151652,
+  "<col>": 151669,
+  "<image>": 151665,
+  "<image_atom>": 151666,
+  "<image_pad>": 151672,
+  "<img>": 151667,
+  "<pre>": 151668,
+  "<row>": 151670,
+  "</img>": 151671
+}

|
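The mapping above is sorted by token string, which hides the ID layout. The sketch below re-sorts the eight entries with IDs 151665 to 151672 (the ones without the `<|...|>` naming style, presumably the additions specific to Ovis, though the diff does not say so) and checks that their IDs form one contiguous block. The inline JSON is copied from the hunk; the variable names are illustrative only.

```python
import json

# The eight non-"<|...|>" entries from added_tokens.json, copied verbatim.
ovis_tokens_json = """
{
  "<col>": 151669,
  "<image>": 151665,
  "<image_atom>": 151666,
  "<image_pad>": 151672,
  "<img>": 151667,
  "<pre>": 151668,
  "<row>": 151670,
  "</img>": 151671
}
"""

added = json.loads(ovis_tokens_json)

# Sort by ID instead of by token string.
by_id = sorted(added.items(), key=lambda item: item[1])
ids = [token_id for _, token_id in by_id]

# Contiguous means no gaps between the smallest and largest ID.
is_contiguous = ids == list(range(ids[0], ids[-1] + 1))

for token, token_id in by_id:
    print(token_id, token)
print("contiguous:", is_contiguous)
```

Read this way, `<image>` (151665) is the first ID after `<|file_sep|>` (151664), the highest ID among the `<|...|>` entries in the file.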

config.json

ADDED

|

@@ -0,0 +1,256 @@


+{
+  "architectures": [
+    "Ovis"
+  ],
+  "auto_map": {
+    "AutoConfig": "configuration_ovis.OvisConfig",
+    "AutoModelForCausalLM": "modeling_ovis.Ovis"
+  },
+  "conversation_formatter_class": "QwenConversationFormatter",
+  "disable_tie_weight": false,
+  "hidden_size": 896,
+  "llm_attn_implementation": "flash_attention_2",
+  "llm_config": {
+    "_attn_implementation_autoset": true,
+    "_name_or_path": "Qwen/Qwen2.5-0.5B-Instruct",
+    "add_cross_attention": false,
+    "architectures": [
+      "Qwen2ForCausalLM"
+    ],
+    "attention_dropout": 0.0,
+    "bad_words_ids": null,
+    "begin_suppress_tokens": null,
+    "bos_token_id": 151643,
+    "chunk_size_feed_forward": 0,
+    "cross_attention_hidden_size": null,
+    "decoder_start_token_id": null,
+    "diversity_penalty": 0.0,
+    "do_sample": false,
+    "early_stopping": false,
+    "encoder_no_repeat_ngram_size": 0,
+    "eos_token_id": 151645,
+    "exponential_decay_length_penalty": null,
+    "finetuning_task": null,
+    "forced_bos_token_id": null,
+    "forced_eos_token_id": null,
+    "hidden_act": "silu",
+    "hidden_size": 896,
+    "id2label": {
+      "0": "LABEL_0",
+      "1": "LABEL_1"
+    },
+    "initializer_range": 0.02,
+    "intermediate_size": 4864,
+    "is_decoder": false,
+    "is_encoder_decoder": false,
+    "label2id": {
+      "LABEL_0": 0,
+      "LABEL_1": 1
+    },
+    "length_penalty": 1.0,
+    "max_length": 20,
+    "max_position_embeddings": 32768,
+    "max_window_layers": 21,
+    "min_length": 0,
+    "model_type": "qwen2",
+    "no_repeat_ngram_size": 0,
+    "num_attention_heads": 14,
+    "num_beam_groups": 1,
+    "num_beams": 1,
+    "num_hidden_layers": 24,
+    "num_key_value_heads": 2,
+    "num_return_sequences": 1,
+    "output_attentions": false,
+    "output_hidden_states": false,
+    "output_scores": false,
+    "pad_token_id": null,
+    "prefix": null,
+    "problem_type": null,
+    "pruned_heads": {},
+    "remove_invalid_values": false,
+    "repetition_penalty": 1.0,
+    "return_dict": true,
+    "return_dict_in_generate": false,
+    "rms_norm_eps": 1e-06,
+    "rope_scaling": null,
+    "rope_theta": 1000000.0,
+    "sep_token_id": null,
+    "sliding_window": null,
+    "suppress_tokens": null,
+    "task_specific_params": null,
+    "temperature": 1.0,
+    "tf_legacy_loss": false,
+    "tie_encoder_decoder": false,
+    "tie_word_embeddings": true,
+    "tokenizer_class": null,
+    "top_k": 50,
+    "top_p": 1.0,
+    "torch_dtype": "bfloat16",
+    "torchscript": false,
+    "typical_p": 1.0,
+    "use_bfloat16": false,
+    "use_cache": true,
+    "use_sliding_window": false,
+    "vocab_size": 151936
+  },
+  "model_type": "ovis",
+  "multimodal_max_length": 32768,
+  "torch_dtype": "bfloat16",
+  "transformers_version": "4.46.2",
+  "use_cache": true,
+  "visual_tokenizer_config": {
+    "_attn_implementation_autoset": true,
+    "_name_or_path": "",
+    "add_cross_attention": false,
+    "architectures": null,
+    "backbone_config": {
+      "_attn_implementation_autoset": true,
+      "_name_or_path": "apple/aimv2-large-patch14-448",
+      "add_cross_attention": false,
+      "architectures": [
+        "AIMv2Model"
+      ],
+      "attention_dropout": 0.0,
+      "auto_map": {
+        "AutoConfig": "configuration_aimv2.AIMv2Config",
+        "AutoModel": "modeling_aimv2.AIMv2Model",
+        "FlaxAutoModel": "modeling_flax_aimv2.FlaxAIMv2Model"
+      },
+      "bad_words_ids": null,
+      "begin_suppress_tokens": null,
+      "bos_token_id": null,
+      "chunk_size_feed_forward": 0,
+      "cross_attention_hidden_size": null,
+      "decoder_start_token_id": null,
+      "diversity_penalty": 0.0,
+      "do_sample": false,
+      "early_stopping": false,
+      "encoder_no_repeat_ngram_size": 0,
+      "eos_token_id": null,
+      "exponential_decay_length_penalty": null,
+      "finetuning_task": null,
+      "forced_bos_token_id": null,
+      "forced_eos_token_id": null,
+      "hidden_size": 1024,
+      "id2label": {
+        "0": "LABEL_0",
+        "1": "LABEL_1"
+      },
+      "image_size": 448,
+      "intermediate_size": 2816,
+      "is_decoder": false,
+      "is_encoder_decoder": false,
+      "label2id": {
+        "LABEL_0": 0,
+        "LABEL_1": 1
+      },
+      "length_penalty": 1.0,
+      "max_length": 20,
+      "min_length": 0,
+      "model_type": "aimv2",
+      "no_repeat_ngram_size": 0,
+      "num_attention_heads": 8,
+      "num_beam_groups": 1,
+      "num_beams": 1,
+      "num_channels": 3,
+      "num_hidden_layers": 24,
+      "num_return_sequences": 1,
+      "output_attentions": false,
+      "output_hidden_states": false,
+      "output_scores": false,
+      "pad_token_id": null,
+      "patch_size": 14,
+      "prefix": null,
+      "problem_type": null,
+      "projection_dropout": 0.0,
+      "pruned_heads": {},
+      "qkv_bias": false,
+      "remove_invalid_values": false,
+      "repetition_penalty": 1.0,
+      "return_dict": true,
+      "return_dict_in_generate": false,
+      "rms_norm_eps": 1e-05,
+      "sep_token_id": null,
+      "suppress_tokens": null,
+      "task_specific_params": null,
+      "temperature": 1.0,
+      "tf_legacy_loss": false,
+      "tie_encoder_decoder": false,
+      "tie_word_embeddings": true,
+      "tokenizer_class": null,
+      "top_k": 50,
+      "top_p": 1.0,
+      "torch_dtype": "bfloat16",
+      "torchscript": false,
+      "typical_p": 1.0,
+      "use_bfloat16": false,
+      "use_bias": false
+    },
+    "backbone_kwargs": {},
+    "bad_words_ids": null,
+    "begin_suppress_tokens": null,
+    "bos_token_id": null,
+    "chunk_size_feed_forward": 0,
+    "cross_attention_hidden_size": null,
+    "decoder_start_token_id": null,
+    "depths": null,
+    "diversity_penalty": 0.0,
+    "do_sample": false,
+    "drop_cls_token": false,
+    "early_stopping": false,
+    "encoder_no_repeat_ngram_size": 0,
+    "eos_token_id": null,
+    "exponential_decay_length_penalty": null,
+    "finetuning_task": null,
+    "forced_bos_token_id": null,
+    "forced_eos_token_id": null,
+    "hidden_stride": 2,
+    "id2label": {
+      "0": "LABEL_0",
+      "1": "LABEL_1"
+    },
+    "is_decoder": false,
+    "is_encoder_decoder": false,
+    "label2id": {
+      "LABEL_0": 0,
+      "LABEL_1": 1
+    },
+    "length_penalty": 1.0,
+    "max_length": 20,
+    "min_length": 0,
+    "model_type": "aimv2_visual_tokenizer",
+    "no_repeat_ngram_size": 0,
+    "num_beam_groups": 1,
+    "num_beams": 1,
+    "num_return_sequences": 1,
+    "output_attentions": false,
+    "output_hidden_states": false,
+    "output_scores": false,
+    "pad_token_id": null,
+    "prefix": null,
+    "problem_type": null,
+    "pruned_heads": {},
+    "remove_invalid_values": false,
+    "repetition_penalty": 1.0,
+    "return_dict": true,
+    "return_dict_in_generate": false,
+    "sep_token_id": null,
+    "suppress_tokens": null,
+    "task_specific_params": null,
+    "tau": 1.0,
+    "temperature": 1.0,
+    "tf_legacy_loss": false,
+    "tie_encoder_decoder": false,
+    "tie_word_embeddings": true,
+    "tokenize_function": "softmax",
+    "tokenizer_class": null,
+    "top_k": 50,
+    "top_p": 1.0,
+    "torch_dtype": null,
+    "torchscript": false,
+    "typical_p": 1.0,
+    "use_bfloat16": false,
+    "use_indicators": false,
+    "vocab_size": 65536
+  }
+}
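A few derived quantities follow directly from the numbers in `config.json`. The sketch below only does arithmetic on values copied from the file. The helper names are hypothetical, and it assumes the usual convention that `hidden_size` is split evenly across attention heads and that query heads are shared evenly across key/value heads.

```python
def head_dim(hidden_size: int, num_heads: int) -> int:
    """Per-head width, assuming hidden_size is split evenly across heads."""
    if hidden_size % num_heads:
        raise ValueError("hidden_size must be divisible by num_heads")
    return hidden_size // num_heads


def queries_per_kv_head(num_attention_heads: int, num_key_value_heads: int) -> int:
    """Number of query heads sharing one key/value head (grouped-query attention)."""
    if num_attention_heads % num_key_value_heads:
        raise ValueError("attention heads must divide evenly across KV heads")
    return num_attention_heads // num_key_value_heads


# Values copied from llm_config (Qwen2) and backbone_config (AIMv2) in config.json.
llm = {"hidden_size": 896, "num_attention_heads": 14, "num_key_value_heads": 2}
backbone = {"hidden_size": 1024, "num_attention_heads": 8}

llm_head_dim = head_dim(llm["hidden_size"], llm["num_attention_heads"])
llm_group_size = queries_per_kv_head(llm["num_attention_heads"], llm["num_key_value_heads"])
backbone_head_dim = head_dim(backbone["hidden_size"], backbone["num_attention_heads"])
```

Under those assumptions the language model uses 64-wide heads with seven query heads per key/value head, and the vision backbone uses 128-wide heads.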

configuration_aimv2.py

ADDED

|

@@ -0,0 +1,63 @@


+# copied from https://huggingface.co/apple/aimv2-huge-patch14-448
+from typing import Any
+
+from transformers.configuration_utils import PretrainedConfig
+
+__all__ = ["AIMv2Config"]
+
+
+class AIMv2Config(PretrainedConfig):
+    """This is the configuration class to store the configuration of an [`AIMv2Model`].
+
+    Instantiating a configuration with the defaults will yield a similar configuration
+    to that of the [apple/aimv2-large-patch14-224](https://huggingface.co/apple/aimv2-large-patch14-224).
+
+    Args:
+        hidden_size: Dimension of the hidden representations.
+        intermediate_size: Dimension of the SwiGLU representations.
+        num_hidden_layers: Number of hidden layers in the Transformer.
+        num_attention_heads: Number of attention heads for each attention layer
+            in the Transformer.
+        num_channels: Number of input channels.
+        image_size: Image size.
+        patch_size: Patch size.
+        rms_norm_eps: Epsilon value used for the RMS normalization layer.
+        attention_dropout: Dropout ratio for attention probabilities.
+        projection_dropout: Dropout ratio for the projection layer after the attention.
+        qkv_bias: Whether to add a bias to the queries, keys and values.
+        use_bias: Whether to add a bias in the feed-forward and projection layers.
+        kwargs: Keyword arguments for the [`PretrainedConfig`].
+    """
+
+    model_type: str = "aimv2"
+
+    def __init__(
+        self,
+        hidden_size: int = 1024,
+        intermediate_size: int = 2816,
+        num_hidden_layers: int = 24,
+        num_attention_heads: int = 8,
+        num_channels: int = 3,
+        image_size: int = 224,
+        patch_size: int = 14,
+        rms_norm_eps: float = 1e-5,
+        attention_dropout: float = 0.0,
+        projection_dropout: float = 0.0,
+        qkv_bias: bool = False,
+        use_bias: bool = False,
+        **kwargs: Any,
+    ):
+        super().__init__(**kwargs)
+        self.hidden_size = hidden_size
+        self.intermediate_size = intermediate_size
+        self.num_hidden_layers = num_hidden_layers
+        self.num_attention_heads = num_attention_heads
+        self.num_channels = num_channels
+        self.patch_size = patch_size
+        self.image_size = image_size
+        self.attention_dropout = attention_dropout
+        self.rms_norm_eps = rms_norm_eps
+
+        self.projection_dropout = projection_dropout
+        self.qkv_bias = qkv_bias
+        self.use_bias = use_bias
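The class defaults above use `image_size=224`, while the `backbone_config` in `config.json` sets `image_size` to 448. The sketch below shows what that override changes. It uses a hypothetical stand-in dataclass (`AIMv2ConfigSketch`) that copies the defaults, so it runs without `transformers`; it is not the real `AIMv2Config`. The patch count assumes non-overlapping square patches, which the source does not state.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class AIMv2ConfigSketch:
    """Hypothetical stand-in mirroring the AIMv2Config defaults; not the real class."""

    hidden_size: int = 1024
    intermediate_size: int = 2816
    num_hidden_layers: int = 24
    num_attention_heads: int = 8
    num_channels: int = 3
    image_size: int = 224
    patch_size: int = 14
    rms_norm_eps: float = 1e-5
    attention_dropout: float = 0.0
    projection_dropout: float = 0.0
    qkv_bias: bool = False
    use_bias: bool = False

    @property
    def num_patches(self) -> int:
        """Patch count, assuming non-overlapping square patches."""
        if self.image_size % self.patch_size:
            raise ValueError("image_size must be a multiple of patch_size")
        side = self.image_size // self.patch_size
        return side * side


default_cfg = AIMv2ConfigSketch()
# Override taken from backbone_config in config.json (image_size: 448).
backbone_cfg = replace(default_cfg, image_size=448)
```

Doubling the image side quadruples the patch count, from 256 to 1024 under the stated assumption, so the override matters for sequence length.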

configuration_ovis.py

ADDED

|

@@ -0,0 +1,204 @@
